Bias testing used to be something teams talked about “later.” After launch. After growth. After the first big customer.

That is not how it works anymore.

If you build AI that touches hiring, lending, insurance, health, education, safety, public services, or even basic customer support, people will ask the same hard question early: “How do you know this system is fair enough, safe enough, and consistent enough to trust?”

Regulators ask it because they have to. Buyers ask it because they do not want risk on their brand, their customers, or their balance sheet. And if you are a founder, you should want to ask it too, because bias issues are not only a “PR problem.” They become product problems, sales problems, and legal problems. They slow deals down. They create surprise rework. They can block partnerships. They can also kill trust, which is the one thing you cannot patch with a quick update.

This article is about the bias testing that serious buyers and regulators expect to see. Not in theory. In practice. The kind of testing that shows you did your homework, that you understand your own model limits, and that you can prove it with clear evidence.

We will keep it simple and real.

We will talk about what “bias” really means in a business setting, why different people mean different things when they say “fair,” and how that plays out when someone is deciding whether to approve your product. We will cover the tests that matter most, how to set them up even if you are a small team, and how to present results in a way that builds confidence instead of raising new questions.

We will also talk about something most teams skip: how bias testing connects to your IP. Because the way you test, measure, monitor, and reduce bias can become part of your company’s edge. It can be more than a checklist. It can be a system you own. A repeatable method. A defensible process that grows stronger as you collect more data and learn from the field.

That is where Tran.vc comes in.

Tran.vc helps technical founders turn deep work into real business strength. If you are building AI, robotics, or other hard tech, you can apply any time here: https://www.tran.vc/apply-now-form/. Tran.vc invests up to $50,000 in in-kind patent and IP services, so you can lock down what you are building while you are still early. That means you can move fast and still protect the parts that matter.

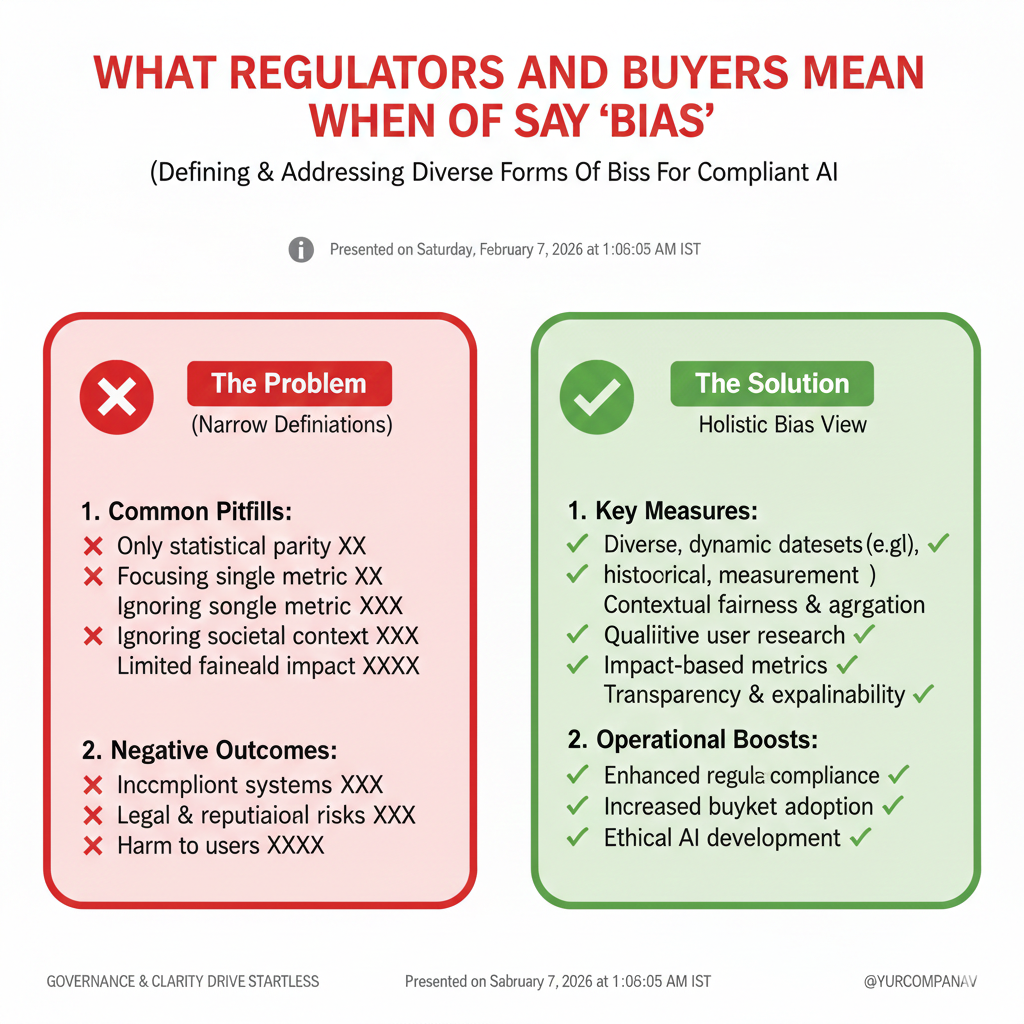

What Regulators and Buyers Mean When They Say “Bias”

Bias is not one thing

When most people hear the word “bias,” they think it means someone acted with bad intent.

In AI, bias usually means something else: the system gives different results for different groups in a way that creates harm, risk, or unfair outcomes.

That difference matters because buyers do not care if the model “meant well.”

They care about what happens when real people use it in real life, at scale, every day.

Regulators think in a similar way.

They focus on outcomes, impacts, and whether a company can show control over the system, not just good intentions.

Buyers look for risk, not perfect fairness

Most buyers are not asking you to prove your model is perfect.

They are asking you to prove you understand where it can fail, how bad the failure can be, and what you do to prevent it.

They also want to see you can explain your approach in plain words.

If your fairness story needs ten slides of math before it makes sense, your deal will slow down, even if your model is strong.

The fastest path is clarity.

Clear scope, clear tests, clear results, and clear actions when things drift.

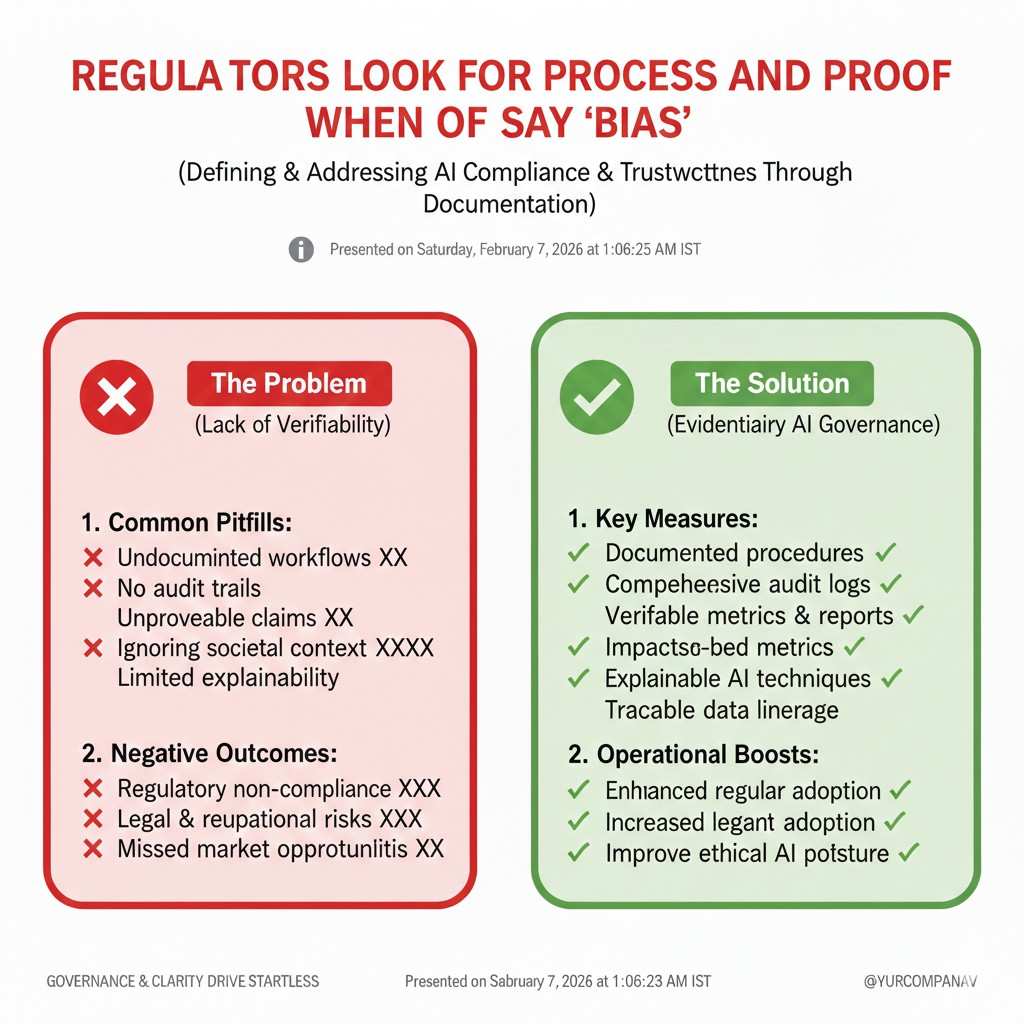

Regulators look for process and proof

Regulators often care less about any single metric and more about whether you have a repeatable system.

They want to see that you can test before launch, monitor after launch, and respond when issues show up.

That means bias testing is not just a one-time report.

It becomes part of product development, data work, model training, and customer delivery.

If you build that system early, it is easier and cheaper.

If you bolt it on later, it becomes messy, slow, and expensive.

Start With the Use Case, Not the Model

Your model is not the product

A buyer is not buying “a classifier” or “a ranker.”

They are buying a decision that affects people, money, access, safety, or time.

Bias testing must match that decision.

A resume screening tool, a loan risk model, and a medical triage model can use similar tech, but they need very different tests.

So before you pick a metric, write the decision in one simple sentence.

Then write who it affects, what the harm could be, and what “good” looks like.

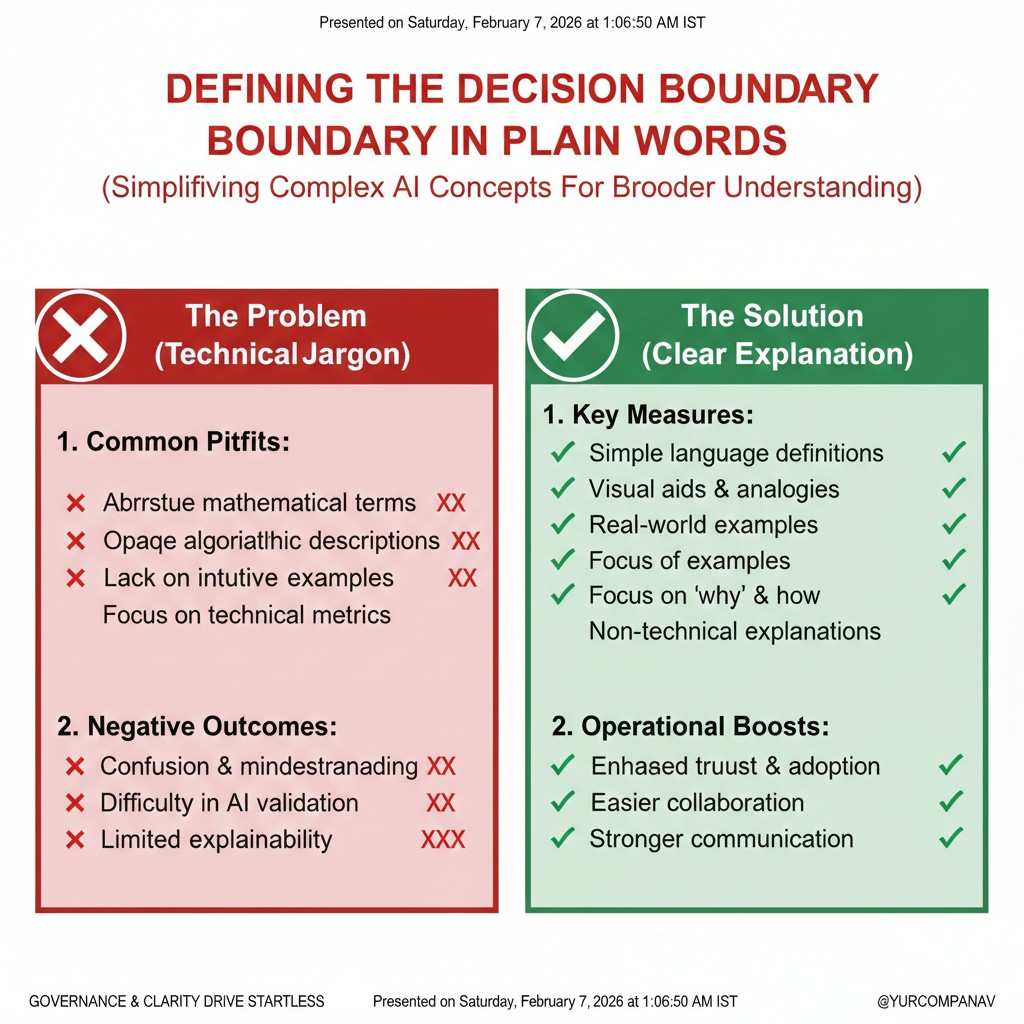

Define the decision boundary in plain words

A common mistake is testing fairness in a way that has nothing to do with how the product actually behaves.

For example, they test “model accuracy by group,” but the product is really a threshold-based approval flow.

In that case, buyers will ask the right follow-up question:

“What happens to approval rates when you set the threshold where we need it?”

Your testing should match the decision boundary your customer will actually use.

If your customer can change thresholds, your testing should show results across realistic ranges, not just one point.
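One way to show results across a realistic range is a small threshold sweep like the sketch below. The record layout, group labels, scores, and the `approval_rates_by_threshold` name are all made up for illustration, and the sketch assumes a score at or above the threshold means approval.

```python
from collections import defaultdict

def approval_rates_by_threshold(records, thresholds):
    """Return {threshold: {group: approval_rate}}, approving when score >= threshold."""
    result = {}
    for t in thresholds:
        approved = defaultdict(int)
        total = defaultdict(int)
        for group, score in records:
            total[group] += 1
            if score >= t:
                approved[group] += 1
        result[t] = {g: approved[g] / total[g] for g in total}
    return result

# Hypothetical scored applicants: (group label, model score).
records = [
    ("A", 0.91), ("A", 0.72), ("A", 0.55), ("A", 0.40),
    ("B", 0.88), ("B", 0.61), ("B", 0.48), ("B", 0.35),
]

for t, rates in approval_rates_by_threshold(records, [0.4, 0.5, 0.6, 0.7]).items():
    print(t, {g: round(r, 2) for g, r in sorted(rates.items())})
```

In this made-up data the two groups have equal approval rates at a threshold of 0.6 but not at 0.7, which is the kind of pattern a single-point test would miss.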

Map the full pipeline, not only the last step

Bias can enter before the model even sees data.

It can come from how you collect labels, how you filter records, how you define success, and how humans review edge cases.

Buyers know this now.

They may ask about data sources, labelers, review steps, and even how you handle missing values.

That is why strong bias testing includes the full pipeline.

It does not blame everything on the model, and it does not hide weak parts of the process.

If you want to build this the right way while protecting what you invent, apply to Tran.vc here: https://www.tran.vc/apply-now-form/.

Your testing method and monitoring workflow can become part of your moat, and Tran.vc helps founders capture that value as IP.

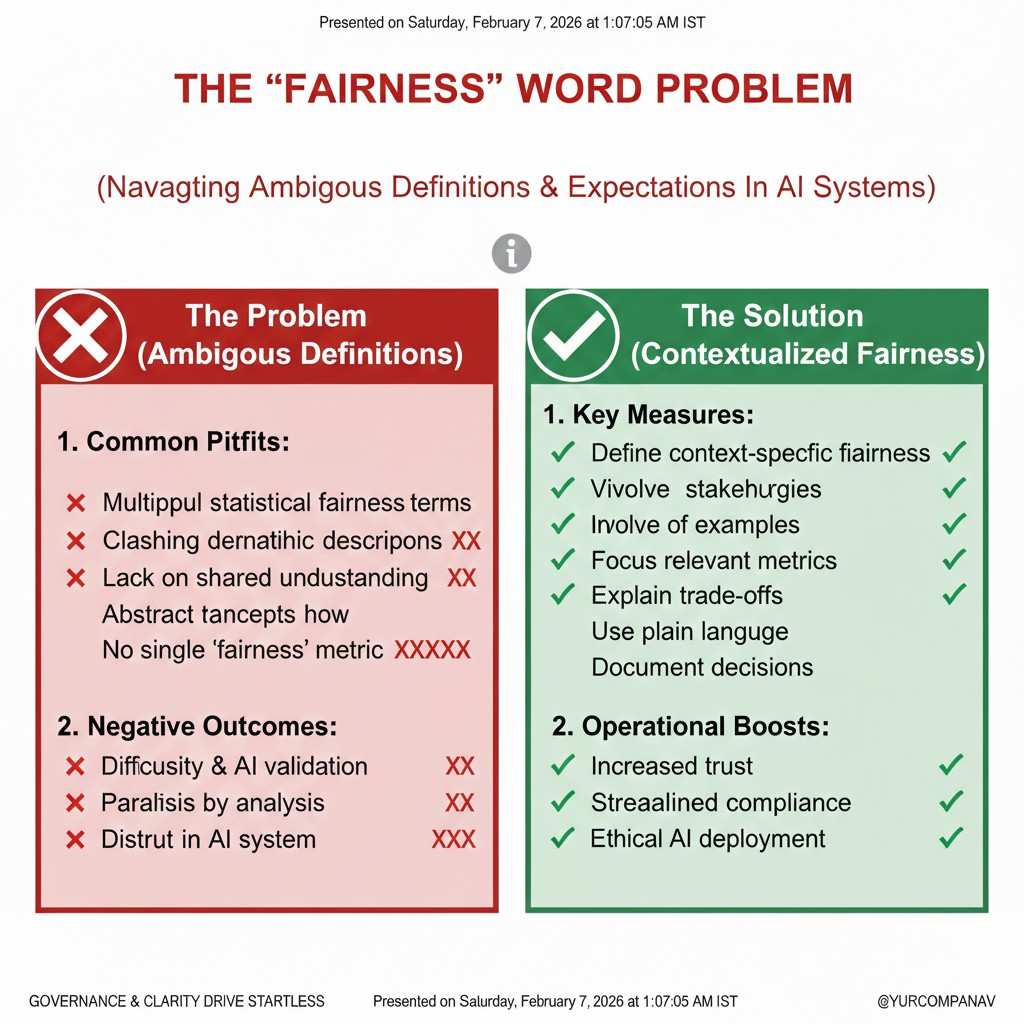

The “Fairness” Word Problem

Different people mean different things

When a buyer says “fair,” they may mean “equal outcomes.”

When your legal team says “fair,” they may mean “no illegal discrimination.”

When your ML lead says “fair,” they may mean “balanced error rates.”

All of those can be valid, and they can also conflict.

You can meet one fairness goal and still fail another.

So do not start bias work by chasing a single fairness metric.

Start by making the fairness goal a business decision tied to your use case and your risk.

The most common fairness questions buyers ask

In real sales calls, buyers often ask variations of a few questions.

They want to know if protected groups are treated worse, if mistakes cluster in certain groups, and if the product behaves differently in different settings.

They also ask whether you tested on data that looks like their data.

A model can look “fair” on a public benchmark and still behave badly in a specific region, language, or job market.

So you should prepare answers that connect directly to their world.

That means testing should include slices that match customer reality, not just academic groupings.

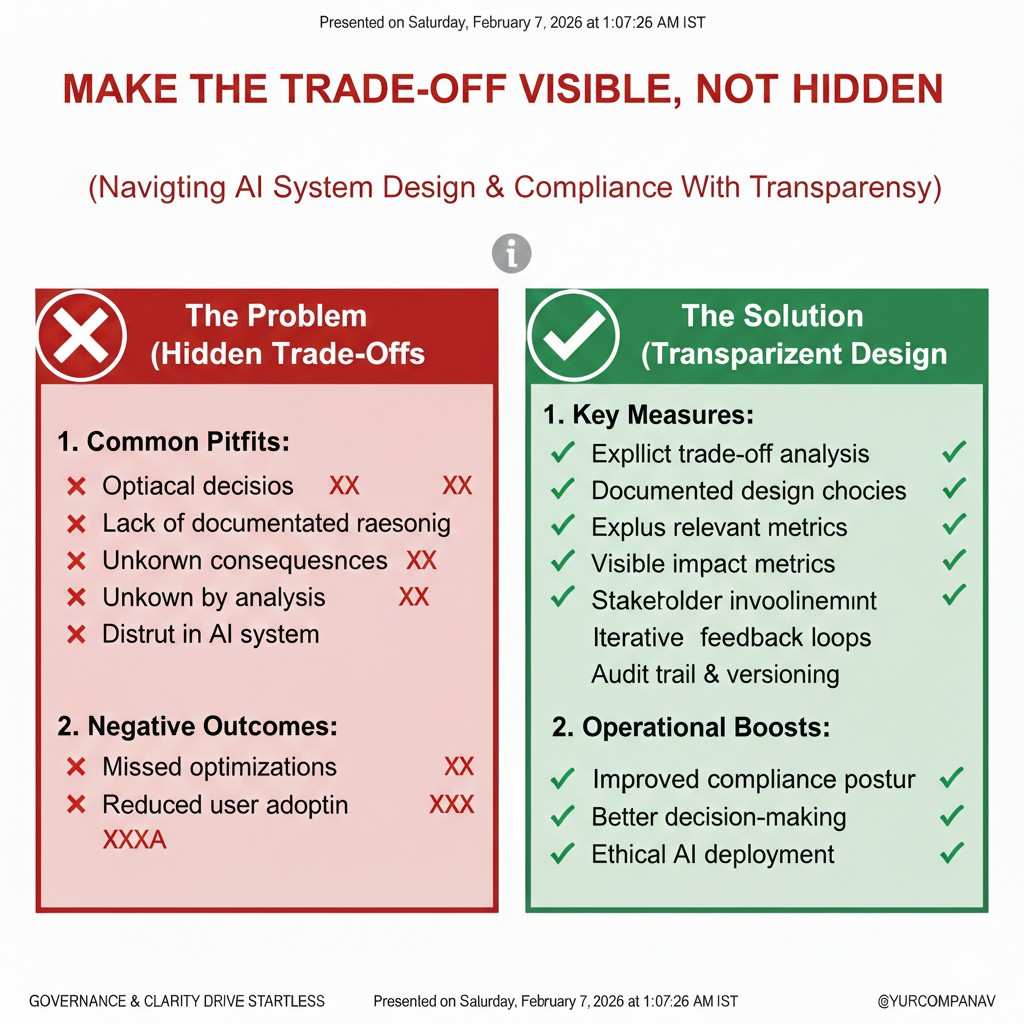

Make the trade-offs visible, not hidden

A strong bias report does not pretend there are no trade-offs.

It shows the trade-offs clearly, explains why a choice was made, and states what will be monitored over time.

That honesty builds trust.

It also prevents the worst kind of surprise: the buyer finds the trade-off later, after the system is already deployed.

If you handle trade-offs upfront, you control the story.

If you hide them, the story controls you.

The Bias Tests That Actually Show Control

Start with group coverage and data health

Before you measure fairness, you need to know if your dataset even represents the groups you care about.

If one group has very few samples, your metrics may look “stable” while being meaningless.

Buyers expect you to know your coverage.

They also expect you to know where labels are weaker, where missing data is common, and where proxies may leak sensitive traits.

This is basic, but it is often skipped.

And it is one of the easiest ways for a buyer to lose confidence fast.
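A coverage check can be very small. The sketch below counts rows per group, measures how often labels are missing, and flags groups that fall under a minimum size. The field names, the `coverage_report` helper, and the floor of 30 samples are assumptions for illustration, not a recommended standard.

```python
from collections import Counter

def coverage_report(rows, group_key="group", label_key="label", min_samples=30):
    """Count rows per group and flag small groups and missing labels."""
    counts = Counter(row[group_key] for row in rows)
    missing = Counter(row[group_key] for row in rows if row.get(label_key) is None)
    return {
        group: {
            "n": n,
            "missing_label_rate": missing[group] / n,
            "too_small": n < min_samples,  # min_samples is a hypothetical floor
        }
        for group, n in counts.items()
    }

# Hypothetical dataset: 200 rows from one region, 12 from another.
rows = (
    [{"group": "region_1", "label": 1}] * 190
    + [{"group": "region_1", "label": None}] * 10
    + [{"group": "region_2", "label": 0}] * 9
    + [{"group": "region_2", "label": None}] * 3
)

for group, stats in coverage_report(rows).items():
    print(group, stats)
```

Any fairness number reported for a group flagged as too small should carry a clear warning, or be left out until you have more data.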

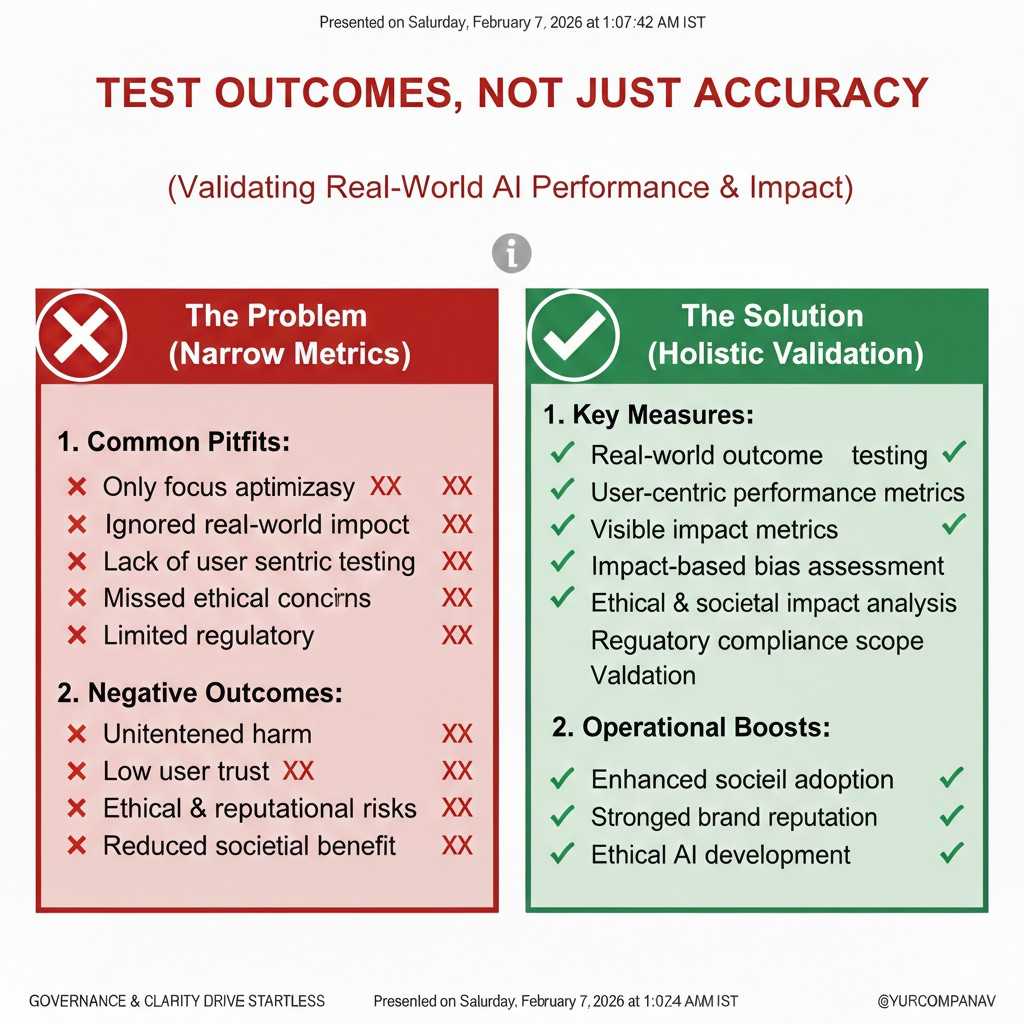

Test outcomes, not just accuracy

Many teams show “accuracy by group” and stop there.

That can be helpful, but it is rarely enough for a real buyer.

If the system makes approvals, denials, rankings, or prioritizations, then buyers want to see how those outcomes differ by group.

They want to see selection rates, denial rates, and how often people are sent to manual review.

They also want to see what happens near the border.

Borderline cases are where bias often shows up, because small shifts can flip decisions.
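A minimal sketch of an outcome view is below. It computes the share of each outcome per group and the ratio between the lowest and highest approval rates. The outcome names (`approve`, `deny`, `review`), the group labels, and both helper functions are hypothetical.

```python
from collections import Counter, defaultdict

def outcome_rates(decisions):
    """decisions: iterable of (group, outcome). Returns {group: {outcome: rate}}."""
    counts = defaultdict(Counter)
    for group, outcome in decisions:
        counts[group][outcome] += 1
    return {
        group: {outcome: n / sum(c.values()) for outcome, n in c.items()}
        for group, c in counts.items()
    }

def selection_rate_ratio(rates, selected="approve"):
    """Lowest group approval rate divided by the highest (None if nobody is approved)."""
    approve = [r.get(selected, 0.0) for r in rates.values()]
    highest = max(approve)
    return min(approve) / highest if highest else None

# Hypothetical decision log for two groups of 100 people each.
decisions = (
    [("A", "approve")] * 50 + [("A", "deny")] * 30 + [("A", "review")] * 20
    + [("B", "approve")] * 40 + [("B", "deny")] * 40 + [("B", "review")] * 20
)

rates = outcome_rates(decisions)
print(rates)
print("selection rate ratio:", selection_rate_ratio(rates))
```

What ratio counts as acceptable is a judgment for the use case and the jurisdiction. The sketch only makes the gap visible.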

Test error types, not only error size

Two models can have the same overall error rate and still behave very differently.

One may create more false positives for one group, while the other creates more false negatives.

In many products, those errors have very different harms.

A false rejection in hiring is not the same as a false acceptance in fraud detection.

That is why buyers ask, “Which group gets which kind of mistake?”

If you can answer that cleanly, you look prepared and reliable.
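To answer that question with numbers, you can split errors by type for each group. The sketch below computes the false positive rate and false negative rate per group. The data is invented so that both groups have the same overall error rate but opposite kinds of mistakes. The function name and row layout are assumptions.

```python
from collections import defaultdict

def error_rates_by_group(rows):
    """rows: iterable of (group, y_true, y_pred), where 1 is the positive class.

    Returns {group: {"fpr": ..., "fnr": ...}}; a rate is None when undefined.
    """
    cells = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in rows:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "fp" if y_pred == 1 else "tn"
        cells[group][key] += 1

    def safe_div(num, den):
        return num / den if den else None

    return {
        g: {
            "fpr": safe_div(c["fp"], c["fp"] + c["tn"]),
            "fnr": safe_div(c["fn"], c["fn"] + c["tp"]),
        }
        for g, c in cells.items()
    }

# Hypothetical labels: each group has 20 cases and 4 errors in total.
rows = (
    [("A", 1, 1)] * 9 + [("A", 1, 0)] * 1 + [("A", 0, 0)] * 7 + [("A", 0, 1)] * 3
    + [("B", 1, 1)] * 7 + [("B", 1, 0)] * 3 + [("B", 0, 0)] * 9 + [("B", 0, 1)] * 1
)

for group, stats in sorted(error_rates_by_group(rows).items()):
    print(group, stats)
```

Here group A gets more false positives and group B gets more false negatives, even though an "accuracy by group" table would show them as identical.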

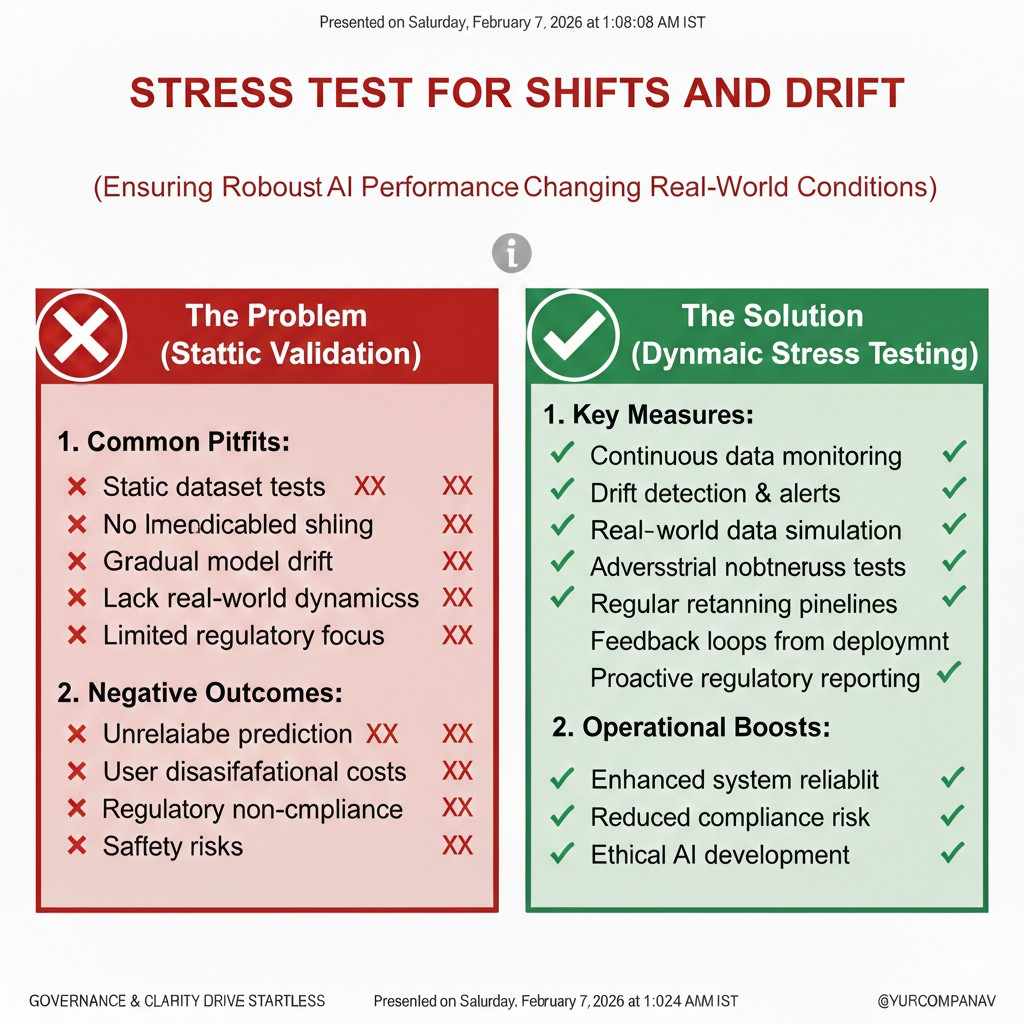

Stress test for shifts and drift

Bias can get worse when data shifts.

A model trained on one region, one season, or one customer segment can behave differently when it meets new patterns.

Regulators and buyers expect you to test for this.

Not with perfect prediction, but with reasonable stress tests that show you thought about reality.

This can be as simple as testing across time windows, geographies, device types, languages, or job families.

The goal is to show you can spot when performance changes, and you have a plan to respond.
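A simple version of this stress test is to compute the same gap for each slice and flag the slices where it exceeds a tolerance. In the sketch below the slices are time windows, but the same code works for regions or languages. The slice names, the counts, and the 0.15 tolerance are illustrative assumptions.

```python
from collections import defaultdict

def approval_gap_by_slice(rows):
    """rows: iterable of (slice_name, group, approved) with approved as 0 or 1.

    Returns {slice_name: highest minus lowest group approval rate}.
    """
    totals = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # slice -> group -> [approved, n]
    for slice_name, group, approved in rows:
        cell = totals[slice_name][group]
        cell[0] += approved
        cell[1] += 1
    gaps = {}
    for slice_name, groups in totals.items():
        rates = [a / n for a, n in groups.values()]
        gaps[slice_name] = max(rates) - min(rates)
    return gaps

def flag_slices(gaps, tolerance=0.15):
    """Names of slices whose gap is above the (hypothetical) tolerance."""
    return sorted(name for name, gap in gaps.items() if gap > tolerance)

def make_rows(slice_name, group, approved, total):
    """Build toy rows: `approved` approvals out of `total` cases."""
    return [(slice_name, group, 1)] * approved + [(slice_name, group, 0)] * (total - approved)

# Hypothetical quarterly data for two groups.
rows = (
    make_rows("2023-Q4", "A", 6, 10) + make_rows("2023-Q4", "B", 6, 10)
    + make_rows("2024-Q1", "A", 6, 10) + make_rows("2024-Q1", "B", 5, 10)
    + make_rows("2024-Q2", "A", 7, 10) + make_rows("2024-Q2", "B", 4, 10)
)

gaps = approval_gap_by_slice(rows)
print({name: round(gap, 2) for name, gap in gaps.items()})
print("needs a closer look:", flag_slices(gaps))
```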

If you want help building this into a real company asset, not just a compliance task, apply to Tran.vc: https://www.tran.vc/apply-now-form/.

Tran.vc helps early teams turn work like this into defensible IP and stronger investor stories.

How to Present Bias Testing So Buyers Say “Yes”

A buyer wants a story they can repeat

Your champion inside the company has to explain your model to people who are not ML experts.

That includes legal, security, procurement, and often the executive team.

So your bias testing output should read like a clear story.

What you tested, why you tested it, what you found, what you changed, and how you monitor it now.

If it is a pile of charts with no narrative, it will not travel inside the buyer’s org.

And if it does not travel, it does not close.

Keep the logic simple and consistent

Pick a small set of core tests that match your use case, and run them the same way each time.

Consistency signals maturity, even if you are early.

Then add deeper tests for higher-risk customers or higher-risk decisions.

This shows you can scale your rigor without changing your core logic every time.

Buyers do not want “one report per deal.”

They want a stable process that can pass audits and renewals.

Show actions, not just numbers

Numbers matter, but actions close deals.

When you show a metric, also show what you do when it moves the wrong way.

That might include threshold controls, human review rules, data refresh triggers, or alerting.

You do not need a complex system at first, but you do need a clear plan that is real.

A buyer will often trust a team with imperfect metrics and strong controls more than a team with pretty metrics and no response plan.

Control is what reduces risk.
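A response plan can start as a short table that ties each metric to a limit and an action. The sketch below is one possible shape. The metric names, limits, and actions are placeholders, not recommendations.

```python
# Hypothetical response policy: metric name -> (limit, action when the limit is exceeded).
RESPONSE_POLICY = {
    "approval_rate_gap": (0.10, "route borderline cases to human review"),
    "false_negative_rate_gap": (0.05, "open a data refresh ticket"),
}

def triggered_actions(current_metrics, policy=RESPONSE_POLICY):
    """Return (metric, value, action) for every metric above its limit."""
    actions = []
    for name, (limit, action) in policy.items():
        value = current_metrics.get(name)
        if value is not None and value > limit:
            actions.append((name, value, action))
    return actions

# Hypothetical metrics from the latest release check.
latest = {"approval_rate_gap": 0.14, "false_negative_rate_gap": 0.02}
for name, value, action in triggered_actions(latest):
    print(f"{name}={value}: {action}")
```

Keeping the policy in one place means the report and the alerting can both read from the same source, so what you tell the buyer matches what the system does.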

Build a Bias Test Plan That Holds Up in Due Diligence

Treat bias testing like a product feature

If you treat bias testing like a checkbox, it will feel like a checkbox to everyone who reviews it.

But if you treat it like a product feature, the whole tone changes.

A product feature has owners, schedules, and clear inputs and outputs.

It also has versions, improvements, and a roadmap.

That is what buyers want to see, even if you are still early.

They want proof that you can run the same tests again next month, on a new dataset, or with a new customer.

Make the plan match the buying process

Many enterprise deals follow the same path.

First comes a product demo, then a pilot, then a security review, then legal and procurement.

Bias questions can appear at any step, but they often show up late, when legal gets involved.

If you wait until then, you may be stuck trying to build evidence under time pressure.

So build a bias test plan that is ready before the pilot begins.

That way, when the buyer asks, you can respond in one day, not three weeks.

A simple plan is fine, as long as it is real.

A fancy plan that you cannot execute will hurt you more than it helps.

Keep one “core pack” and one “deal pack”

You want a set of bias tests you run for every release.

This becomes your core pack.

Then you want a second layer you can run for a specific buyer’s needs.

This becomes your deal pack.

The core pack protects you from surprises and keeps you honest over time.

The deal pack helps you speak the customer’s language and reduce their fear.

You do not need a long list of tests for the core pack.

What matters is that it matches your use case and stays consistent.

Use a few strong slices that buyers care about

A slice is a way to cut your results into groups to see where things differ.

If you pick slices that do not match how your customer uses the product, your testing will feel shallow.

Pick slices that match real operations.

Think region, language, device, job family, seniority level, channel, time period, and workflow path.

Then, where it is legally and ethically appropriate, test protected groups too.

In many settings, you may not have direct access to protected traits, so you may rely on operational slices, careful proxy checks, or analysis the customer runs under rules they control.

The key is to show you understand the context and do not hide from it.

Buyers respect teams that engage with reality in a careful, responsible way.

Handling Sensitive Attributes the Right Way

The data problem most teams do not admit

Bias testing often needs demographic or protected attribute data.

But many companies do not collect it, cannot collect it, or should not collect it.

That creates a hard truth: you can care about fairness and still lack the data you want.

Regulators know this problem exists.

Buyers know it too, especially in global settings with different laws.

So the expectation is not always “have every attribute.”

The expectation is “show a responsible plan for what you can and cannot measure.”

When you have the attributes, protect them

If you do have sensitive attributes, treat them like high-risk data.

Limit access, log use, and store them carefully.

In many companies, only a small set of people should touch that data.

And you should have a clear reason every time you use it.

Buyers will ask how you protect it, how long you retain it, and whether you use it in training or only in auditing.

If you cannot answer cleanly, they will assume the worst.

Your message should be simple: you use sensitive data only for fairness analysis, under strict controls, and you do not leak it into places it should not be.

When you do not have the attributes, avoid weak shortcuts

Some teams try to guess protected traits from names, photos, or zip codes.

That is risky and can be unethical, and it often creates new bias.

Buyers are getting sharper about this.

If they sense you are “making up demographics,” trust drops fast.

A safer approach is to use operational slices, careful proxy checks, and customer-led analysis when possible.

For some use cases, you can also use surveys, voluntary self-reporting, or third-party audits where the right controls exist.

The goal is not to pretend you know what you do not know.

The goal is to reduce harm with responsible methods, and to be clear about limits.

What a Strong Bias Report Looks Like

Start with a one-page summary that a lawyer can read

If you give a buyer a twenty-page report with no clear summary, you create friction.

Legal and compliance teams do not have time to decode your charts.

Your first page should explain the decision your system makes, what data it uses, what tests you ran, and the top results.

It should also state what changed since the last version and what you monitor in production.

This is not marketing.

It is a simple brief that helps others do their jobs.

When you write it well, your champion inside the buyer’s company can forward it without rewriting it.

That speeds deals up.

Make your metrics easy to interpret

Most buyers do not want a debate about ten fairness metrics.

They want a small set of measures tied to the risk of the use case.

For example, if your tool denies access to something important, false negatives matter a lot: people who should have been approved but were wrongly denied.

If your tool flags fraud, false positives and who gets flagged matter a lot.

You should define each metric in plain words.

Explain what a “bad” value looks like.

Then show the actual numbers and what actions you took.

When you do this, you turn metrics into decisions.

That is what buyers need.

Show confidence intervals or stability checks when possible

A single number can lie, even if you did not mean it to.

Small sample sizes can make results swing wildly.

Buyers may not ask for confidence intervals by name, but they do ask about the idea behind them:

“How stable is this result?” and “Will it hold when we roll out?”

So show some form of stability check.

This can be as simple as testing on multiple splits, time windows, or subsets and showing the range.

The goal is not to impress anyone with statistics.

The goal is to show you understand uncertainty and you do not overclaim.
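One common way to show a range is a percentile bootstrap: resample each group with replacement many times and look at how much the gap moves. The sketch below does this for an approval-rate gap between two groups. The data, the function names, and the choices of 2,000 resamples and a 95% interval are assumptions for illustration.

```python
import random

def rate(outcomes):
    """Share of 1s in a list of 0/1 outcomes."""
    return sum(outcomes) / len(outcomes)

def bootstrap_gap_interval(group_a, group_b, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for rate(group_a) - rate(group_b).

    Returns (observed_gap, lower, upper).
    """
    rng = random.Random(seed)  # fixed seed so the report is reproducible
    gaps = []
    for _ in range(n_resamples):
        a = rng.choices(group_a, k=len(group_a))  # resample with replacement
        b = rng.choices(group_b, k=len(group_b))
        gaps.append(rate(a) - rate(b))
    gaps.sort()
    lower = gaps[int((alpha / 2) * n_resamples)]
    upper = gaps[int((1 - alpha / 2) * n_resamples) - 1]
    return rate(group_a) - rate(group_b), lower, upper

# Hypothetical outcomes: 1 = approved, 0 = denied.
group_a = [1] * 60 + [0] * 40
group_b = [1] * 40 + [0] * 60

gap, lower, upper = bootstrap_gap_interval(group_a, group_b)
print(f"gap={gap:.2f}, interval=({lower:.2f}, {upper:.2f})")
```

A wide interval is a signal to collect more data for that group before making a strong claim in either direction.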

Document what you did when you saw issues

This is where many teams fail.

They show a chart with a gap, then move on like it is just a fact of life.

Buyers want to know what you did.

Did you change the data?

Did you adjust the threshold?

Did you add a human review step?

Did you change the labeling guide?

Even if the issue is not fully solved, actions show maturity.

They tell the buyer you are a team that responds, not a team that shrugs.

If you want to turn this kind of work into something investors value, apply to Tran.vc: https://www.tran.vc/apply-now-form/.

Tran.vc helps technical founders protect the systems, methods, and workflows that make their product safer and harder to copy.

Bias Testing During the Pilot

Test with the customer’s real workflow

A pilot is not just a model test.

It is a workflow test.

Bias can show up because of how people use the tool, not only because of the model.

For example, one team may trust the tool too much and stop reviewing edge cases.

Another team may override the tool only for certain groups without realizing it.

So bias testing during a pilot should look at the full loop.

Inputs, model outputs, human actions, and final outcomes.

This is also where buyers feel the most fear.

They see the product in motion and imagine headlines.

If you show that you are measuring and monitoring during the pilot, you reduce that fear.

And reducing fear is one of the fastest ways to close.
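To measure the human part of the loop, you can log the model's decision next to the final decision and compare override rates by group. The sketch below assumes a pilot log with exactly those three fields. The log format, group labels, and `override_rates` name are hypothetical.

```python
from collections import defaultdict

def override_rates(pilot_log):
    """pilot_log: iterable of (group, model_decision, final_decision).

    Returns {group: share of cases where a human changed the model's decision}.
    """
    overridden = defaultdict(int)
    total = defaultdict(int)
    for group, model_decision, final_decision in pilot_log:
        total[group] += 1
        if model_decision != final_decision:
            overridden[group] += 1
    return {g: overridden[g] / total[g] for g in total}

# Hypothetical pilot log: 20 cases per group.
pilot_log = (
    [("A", "approve", "approve")] * 18 + [("A", "approve", "deny")] * 2
    + [("B", "approve", "approve")] * 14 + [("B", "approve", "deny")] * 6
)

print(override_rates(pilot_log))
```

A gap in override rates does not prove anyone is acting unfairly, but it tells you where to look at individual cases with the customer.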

Watch for “feedback loop” bias

Some systems change the world they measure.

A fraud tool can cause more scrutiny in one area, which then creates more recorded fraud in that area, even if the real rate is unchanged.

A recommendation system can hide certain options from certain groups, which then makes those options look “less relevant,” which then hides them even more.

These feedback loops can create growing bias over time.

Regulators care about this.

Sophisticated buyers care too.

During a pilot, look for patterns where the system’s output changes what data you collect next.

Then test whether that loop is skewing outcomes across groups or slices.
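For the fraud example, one rough diagnostic is to separate how much a slice gets reviewed from how often a review finds something. If one area shows more recorded fraud per case but the same findings per review, the extra scrutiny may be driving the difference. The sketch below assumes you can count cases, reviews, and confirmed findings per slice. All names and numbers are invented.

```python
def scrutiny_report(slices):
    """slices: {name: {"cases": int, "reviewed": int, "confirmed": int}}.

    Returns per slice the review rate, confirmed findings per case,
    and confirmed findings per review (the hit rate, None if nothing was reviewed).
    """
    report = {}
    for name, s in slices.items():
        report[name] = {
            "review_rate": s["reviewed"] / s["cases"],
            "confirmed_per_case": s["confirmed"] / s["cases"],
            "hit_rate": s["confirmed"] / s["reviewed"] if s["reviewed"] else None,
        }
    return report

# Hypothetical pilot counts: area_1 is reviewed four times as often as area_2.
slices = {
    "area_1": {"cases": 1000, "reviewed": 400, "confirmed": 20},
    "area_2": {"cases": 1000, "reviewed": 100, "confirmed": 5},
}

for name, stats in scrutiny_report(slices).items():
    print(name, stats)
```

In this toy data area_1 records four times as much fraud per case, yet the hit rate per review is the same in both areas. That is only a signal, not proof of equal underlying rates, but it is the kind of pattern worth raising with the buyer during the pilot.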