Secure ML pipelines are not “nice to have” anymore. If you ship models into the real world, you are already running software that can be tricked, copied, poisoned, or quietly changed. And the scary part is this: most ML failures do not look like a hack. They look like “a strange drop in accuracy,” “a weird data shift,” or “a small config change” that no one notices until it costs you customers, cash, or trust.

This guide is about making your ML pipeline harder to break without slowing your team down. We will keep it practical. We will focus on what real attackers do, what breaks most often, and what controls give you the biggest safety gain for the least work. If you want help turning your ML work into real, protectable assets, Tran.vc can support you with up to $50,000 in in-kind patent and IP services—so your moat is not just “we have a model,” but “we own key ideas others cannot copy.” You can apply anytime here: https://www.tran.vc/apply-now-form/

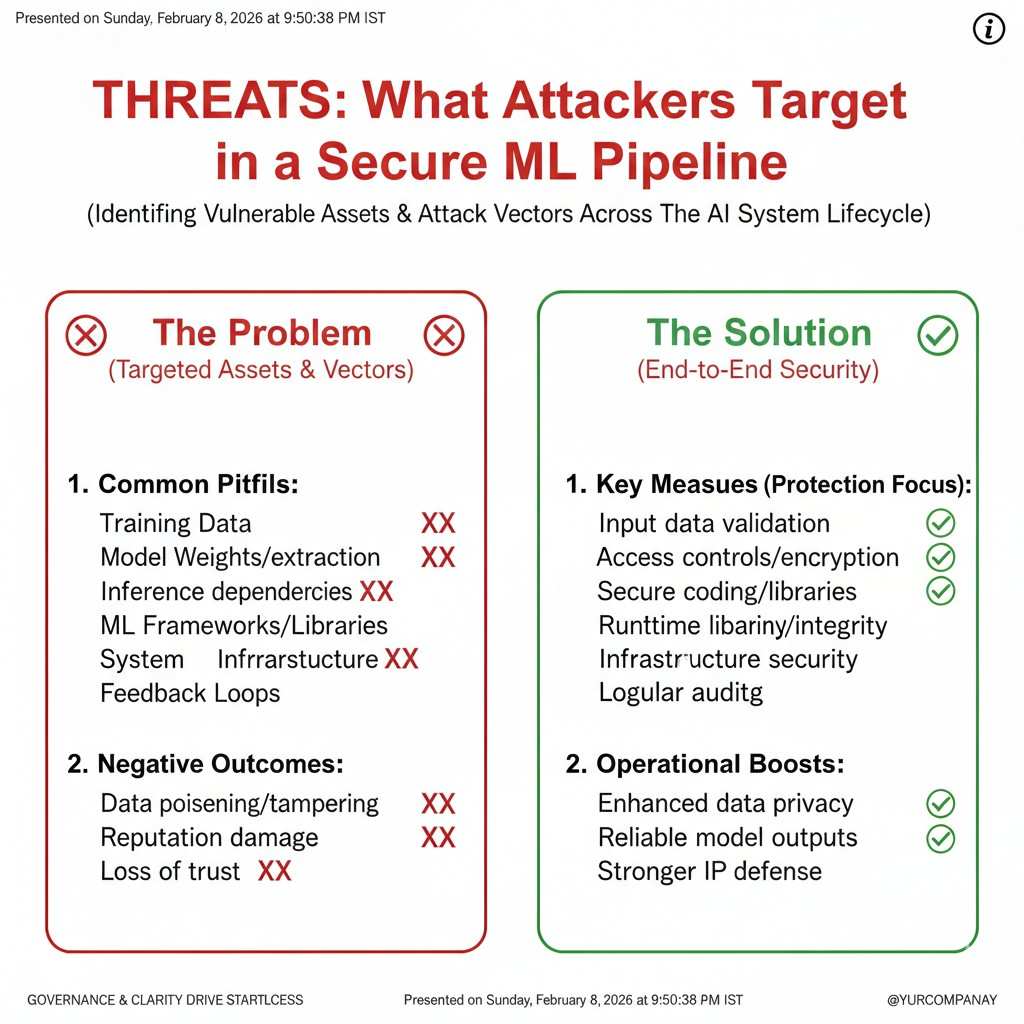

Threats: What Attackers Target in a Secure ML Pipeline

The pipeline is the product, not just the model

Most teams talk about “model security” like the model is a single file you lock in a safe. In real life, the model is only one piece. The real product is the full pipeline: data comes in, code cleans it, features get built, training runs, a model gets stored, and then it gets served to users. If any part of that chain can be changed, tricked, or copied, the end result can still fail.

Attackers know this. They do not need to “beat” your neural net in a lab. They only need one weak step where they can slip in bad data, steal a key, or swap a model artifact. That is why secure ML is mostly about boring pipeline details. Those details decide whether your work is safe, repeatable, and trustworthy.

Tran.vc works with technical founders who are building exactly these kinds of systems in AI, robotics, and deep tech. If your pipeline is the heart of your company, you should also think about how to protect the unique parts as IP, not just as code. You can apply anytime here: https://www.tran.vc/apply-now-form/

The attacker’s goal is often quiet control

Many people picture an attacker as someone who wants to crash your service. That does happen. But in ML systems, the highest-value attacks are often silent. The model keeps running, dashboards look “mostly okay,” and the system slowly starts making worse choices. Or the attacker steals your training recipe and ships a copycat product before you even notice.

Quiet control can mean many things. It can mean pushing your model to approve bad transactions while still passing most checks. It can mean changing a data label rule so your model learns the wrong thing, slowly. It can mean swapping a model file so the system behaves in a way that helps the attacker. The biggest danger is not drama. It is drift with a cause.

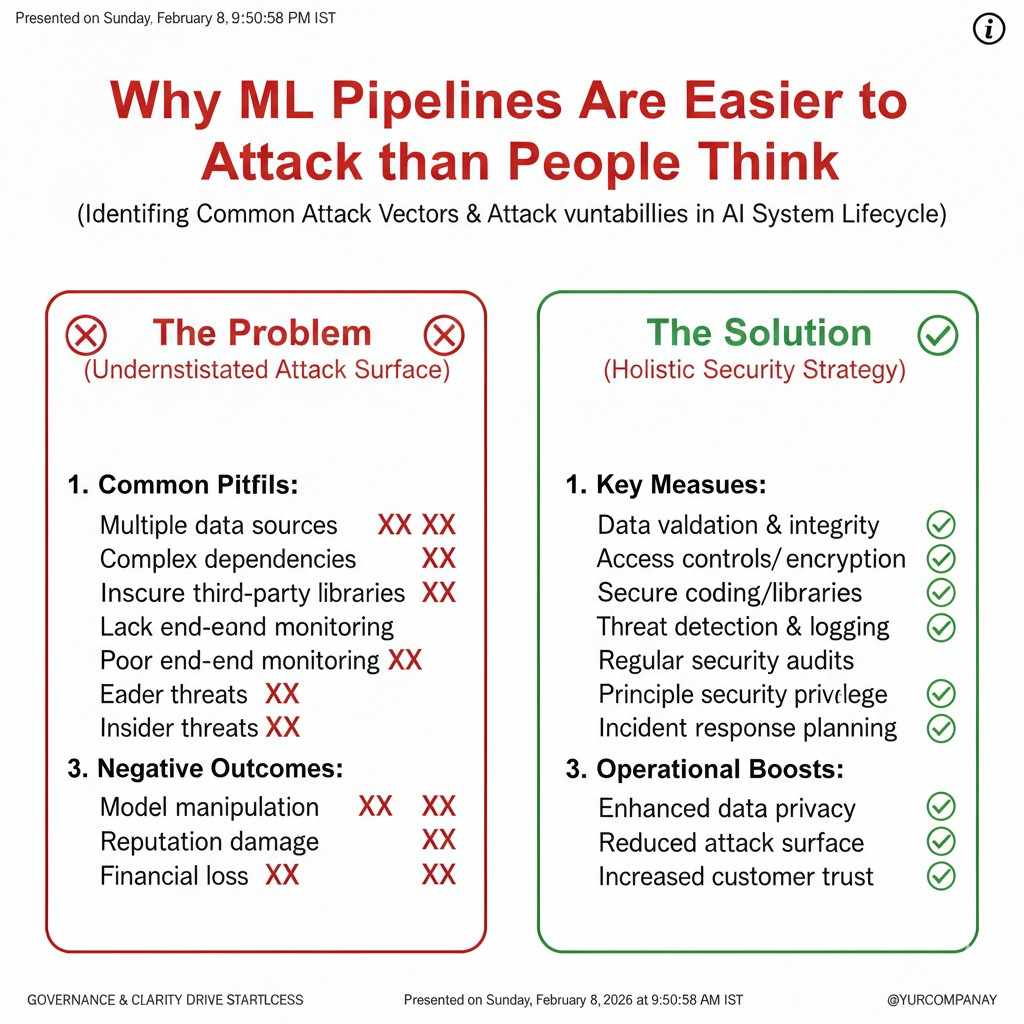

Why ML pipelines are easier to attack than people think

ML pipelines have more moving parts than a normal software service. There are more data sources. There are more storage buckets. There are more jobs running on schedules. There are more “one-time scripts” that become permanent. There are also more third-party tools, from data connectors to labeling vendors to model registries.

Each tool brings its own access keys, roles, and settings. Most teams set them up fast to ship, then never return to harden them. Over time, the pipeline becomes a maze. A maze is good for attackers because it is hard for owners to see what is normal.

If you want a simple way to think about it, think of a pipeline as a factory line. A factory can build great products, but only if raw parts are clean, machines are locked, and every handoff is tracked. If you cannot prove what went in and what happened, you cannot prove what came out.

Threats in Data: Poisoning, Leaks, and Hidden Corruption

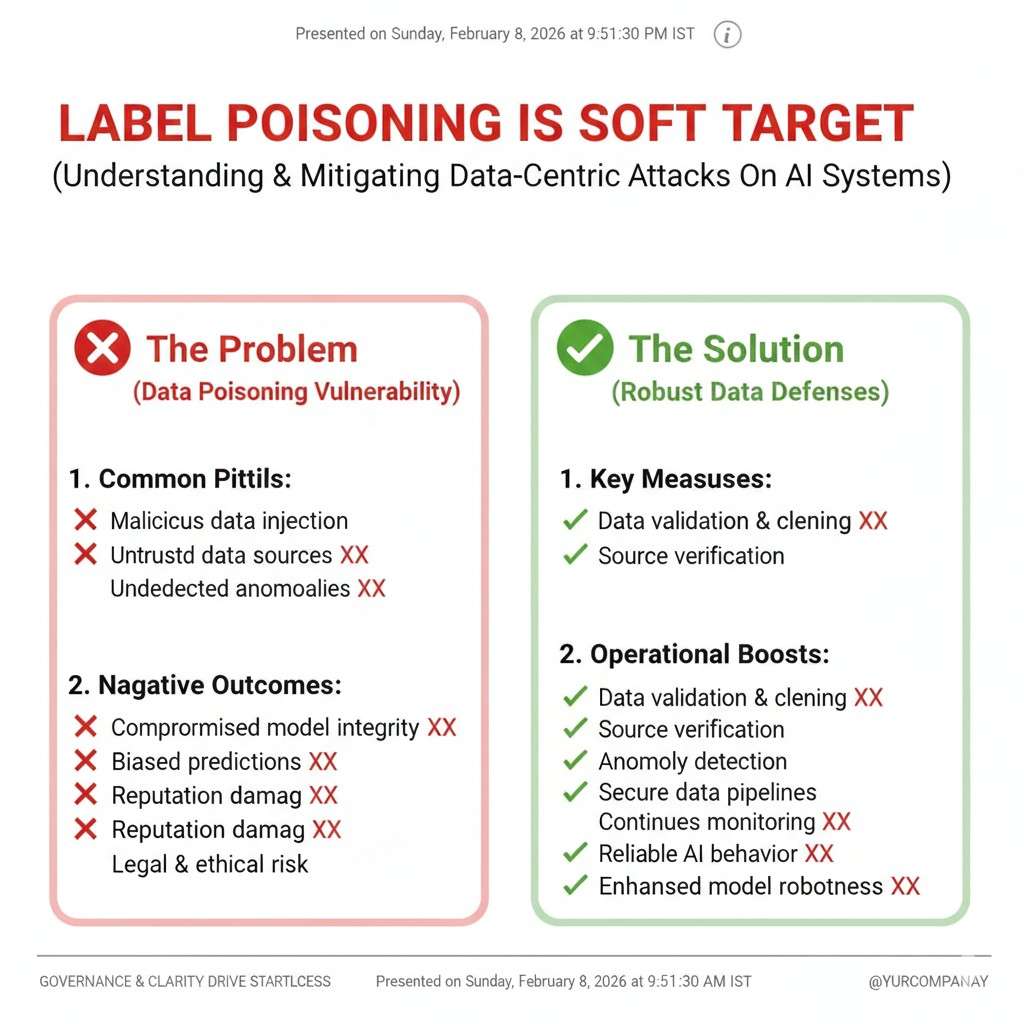

Training data poisoning is not always obvious

Data poisoning means someone gets bad examples into your training set so the model learns the wrong pattern. People often imagine extreme poison, like labels that are fully flipped. In practice, poison can be small and still matter. A tiny percentage of bad examples, placed in the right spots, can shift a decision boundary in ways that help the attacker.

The worst poison is crafted to look like normal noise. It might be “edge cases” that appear rare. It might be data that passes your schema checks. It might be content that looks real but is planted. In robotics or vision, it could be images that appear harmless but teach the model to react in unsafe ways.

Label poisoning is a soft target

Many ML teams treat labels like “ground truth,” but labels are made by people and systems under time pressure. If labels come from a vendor, or from user feedback, or from a rule engine, they can be attacked. A bad actor can create fake user reports. A competitor can flood your feedback loop. Even an internal error can act like an attacker if it silently changes label logic.

This is why label pipelines need the same care as code pipelines. If the label rule changes, you want a record. If a vendor changes staff, you want spot checks. If a user-driven label path becomes noisy, you want alarms that tell you quickly, not months later.

Data leakage can destroy trust even if the model “works”

Data leakage means private, sensitive, or restricted data ends up where it should not be. In ML pipelines, leakage can happen at many points. Raw logs may include personal details. Features may store a hidden identifier. Training sets may include data you do not have the rights to use. Exports to a partner may contain more than intended.

Even if your model is accurate, leakage can lead to legal risk, customer loss, and sudden platform bans. It can also ruin partnerships. In regulated areas, it can stop sales completely. The cost is not just fines. The cost is lost time when you should be building.

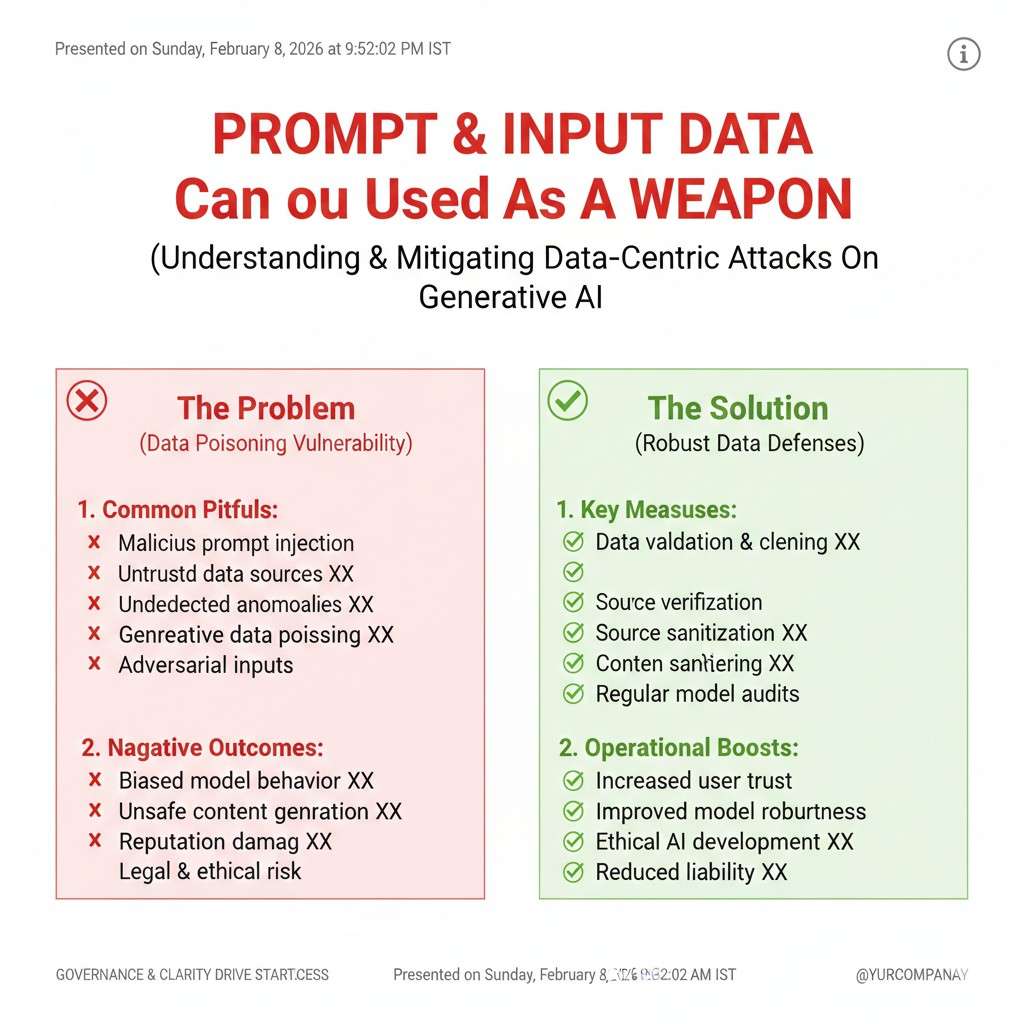

Prompt and input data can be used as a weapon

If you run an ML system that takes user input, that input can be hostile. For classic ML, an attacker might craft inputs to trigger a wrong output. For LLM systems, attackers use prompt tricks to pull private data, bypass safety rules, or get the system to do actions it should not do.

The input path needs strong boundaries. You need to assume users will try weird strings, strange formats, and massive payloads. You also need to assume that “valid input” is not the same as “safe input.” A payload can be valid and still be designed to cause harm.

Threats in Code and Dependencies: The Supply Chain Problem

One bad package can break the whole system

ML teams rely heavily on open-source libraries. That is normal and smart. But it also means your pipeline can be attacked through dependencies. A library update can include a hidden backdoor. A package name can be spoofed so your build pulls the wrong thing. A compromised maintainer account can push a harmful release.

This is not a rare risk in the broader software world, and ML makes it worse because training jobs often run with high permissions. They can read big data stores, write artifacts, and access internal networks. If a training job runs malicious code, that code can exfiltrate a lot of data very quickly.

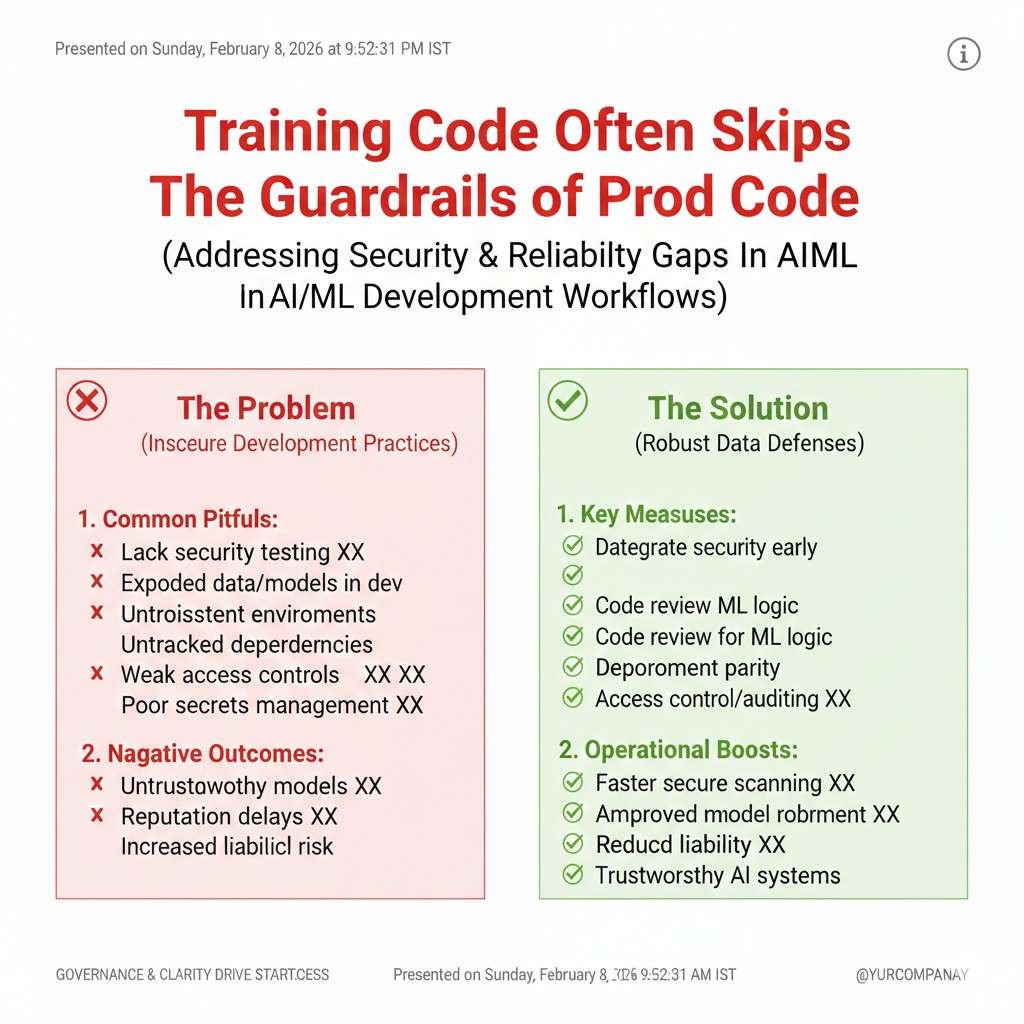

Training code often skips the guardrails of prod code

Many teams have decent checks for their main app, but training code lives in notebooks, scripts, and job runners that do not get the same review. People “just run” a script to clean data or patch a bug. Later that script becomes part of the pipeline. Then it runs every day with no tests and no review.

Attackers love this gap. So do accidents. A small change in a feature script can change the model, and if you cannot trace it, you cannot fix it fast. Secure ML is as much about making training code feel like production code as it is about firewalls.

Secrets in code are still common

API keys and tokens end up in training scripts, config files, and notebooks more often than teams admit. Sometimes it is not even a real key. It is a “temporary key” that becomes permanent. Or it is a service account that has wide access “just for now.”

Once a secret leaks, it often ends up in logs, backups, and forks. Then it is hard to clean up. Worse, secrets used in ML pipelines often unlock data buckets and model registries. That means a leaked key can become a direct path to your core assets.

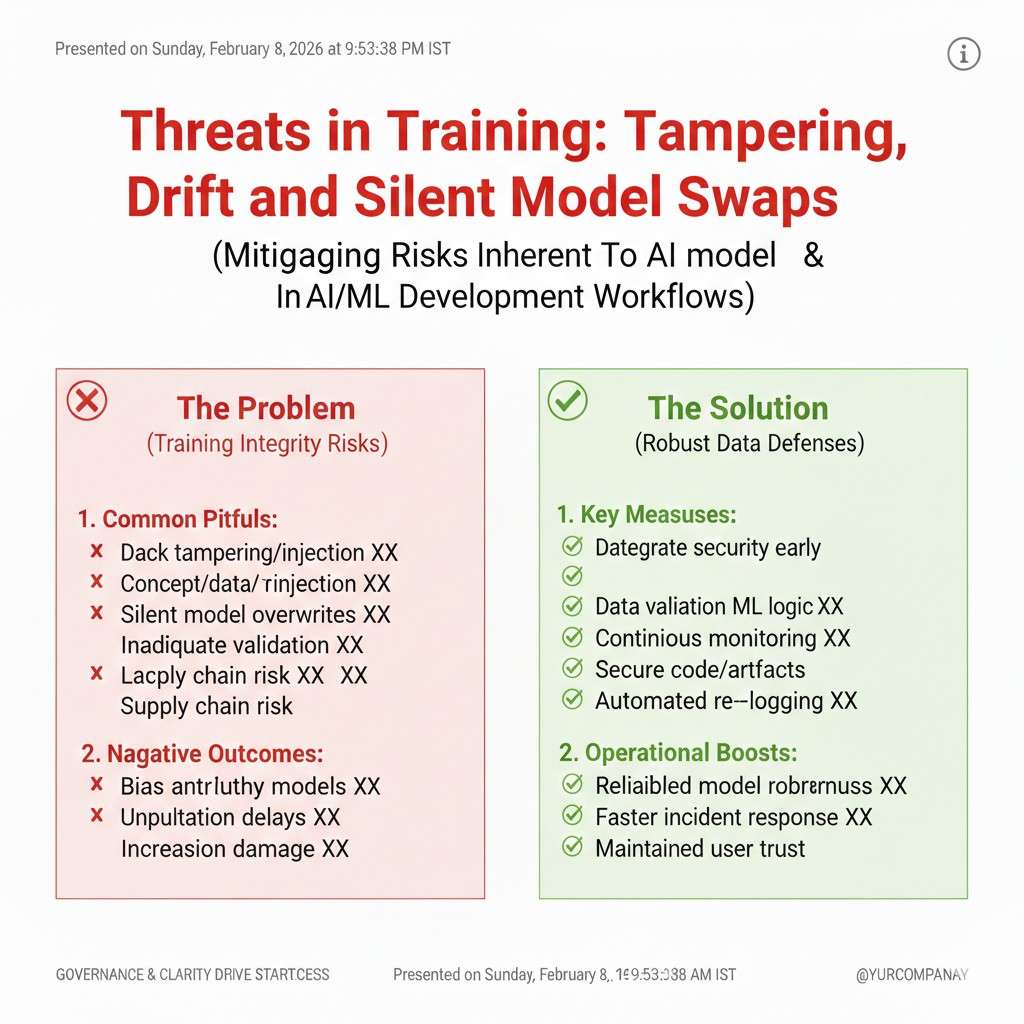

Threats in Training: Tampering, Drift, and Silent Model Swaps

Training jobs are high-value targets

A training run is a moment when your system touches a lot of sensitive things at once. It reads large data. It runs code with broad access. It writes artifacts that become production models. If an attacker can change what happens during training, they can change what your product does.

Training environments are often less locked down than serving environments. Teams treat them as “internal.” But internal systems are attacked all the time, often through stolen credentials or compromised laptops. The question is not “are we internal?” The question is “can we prove this training run is clean?”

Model tampering can happen after training too

Even if training is safe, the model file can be swapped later. If your storage bucket is not locked, a bad actor can replace the artifact. If your model registry is not protected, someone can publish a “new version” that is not real. If your deploy process trusts mutable tags (like “latest”) instead of content hashes, it can pull the wrong thing.

Model swaps can be hard to spot if you do not have checks in place. A swapped model might still respond correctly most of the time. It might only misbehave for certain inputs. That is enough to cause harm while staying under the radar.

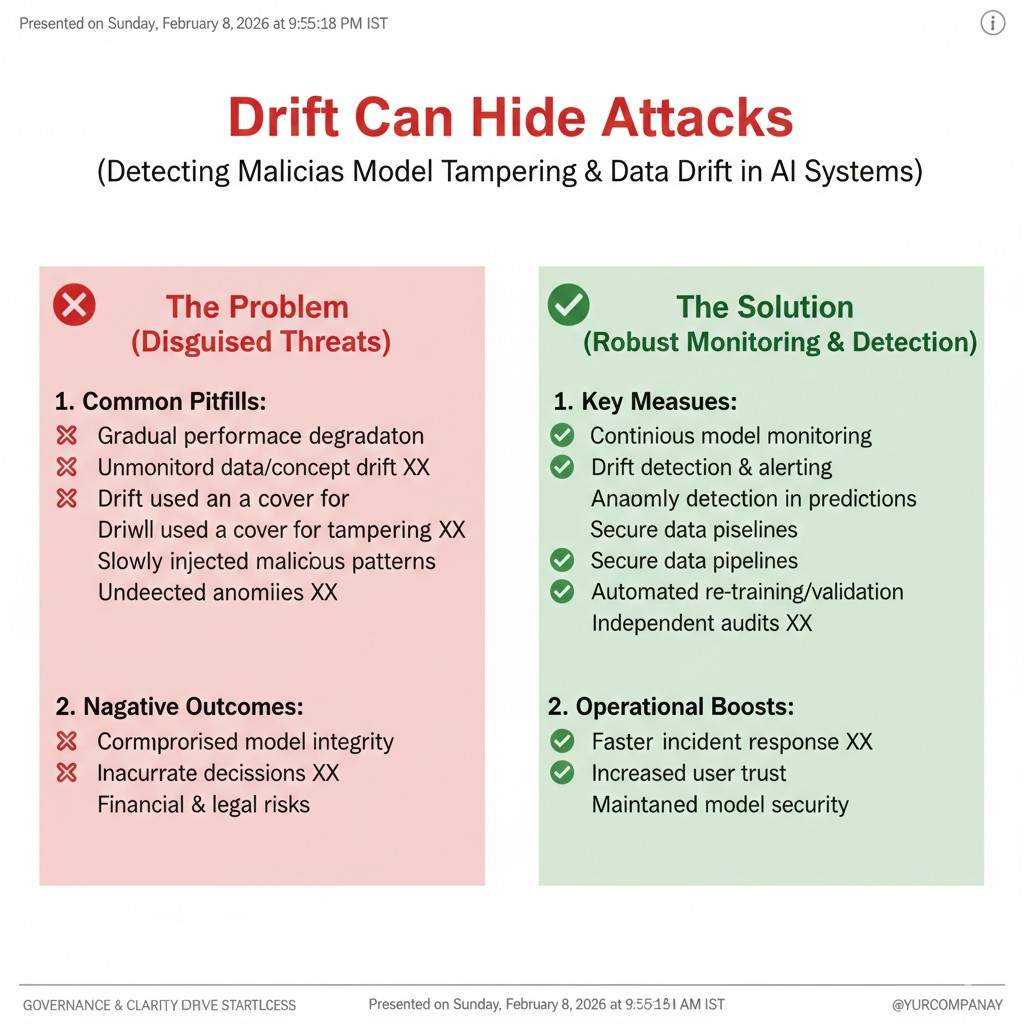

Drift can hide attacks

Data drift is normal. The world changes. User behavior changes. Sensors degrade. But drift can also cover up an attack. If your team is used to “some drift,” you may normalize a change that is not natural. A poisoning campaign can look like a market shift. A targeted attack can look like a seasonal trend.

This is why monitoring needs context. You want to know what changed and why. You want strong baselines and clear triggers. You also want the habit of asking: “Is this drift, or is this someone pushing us?”

Threats in Serving: Extraction, Abuse, and Decision Manipulation

Model extraction is a business threat, not just a technical one

If someone can query your model enough times, they may be able to create a copy that behaves similarly. They do not need your weights. They only need outputs. Over time, they can build a “shadow model” that is good enough to compete.

This is not only about lost revenue. It is about lost leverage. If your model is easy to copy, your moat is weak. This is where security and IP meet. The best place to be is: difficult to copy in practice, and protected in law where it matters. Tran.vc helps founders think through that full stack, including what to patent and how to shape claims around the key inventive parts. Apply anytime here: https://www.tran.vc/apply-now-form/

Abuse is often cheaper than a “hack”

Attackers may not break in at all. They may just use your system in a way that costs you money or harms your users. They might run heavy queries to raise your compute bill. They might trigger slow paths. They might use your model to generate spam, fraud, or harmful content, leaving your brand to take the blame.

The fix is rarely one magic filter. It is a mix of rate limits, input checks, and usage rules that are enforced in code. It also includes good logging so you can see patterns early.

Adversarial inputs can cause targeted failures

In some systems, small input changes can cause wrong outputs. That might be a slight pixel shift in an image. It might be a crafted string that breaks a parser. It might be a pattern that causes a classifier to misfire. In robotics, this can be physical: stickers on signs, lights that confuse sensors, or patterns that change camera reads.

You do not need to solve every adversarial case on day one. But you do need to know which outputs are high risk. If a failure can cause injury, loss, or legal risk, you treat it differently than a harmless mistake. Secure ML starts by mapping impact, not by chasing every paper.

Controls: Guardrails That Actually Work

Start with one rule: make changes easy to trace

A secure ML pipeline is not only about stopping attackers. It is also about stopping “mystery changes.” If your model output shifts and nobody can explain why, you are already in danger. Attackers love confusion, and accidents create the same harm.

The simplest control is traceability. You want to be able to answer basic questions fast: which data version was used, which code version ran, who approved the run, which config settings were applied, and which model artifact got deployed. When you can answer those questions in minutes, most attacks become harder to hide.

Traceability also saves you during normal chaos. When a customer reports a problem, you can reproduce the exact model and the exact inputs. That reduces panic and lets you fix the real issue instead of guessing.

Treat training like production, even if it feels “offline”

Many teams lock down their serving layer but leave training wide open. That is a mistake because training is where the model learns, and it touches the most sensitive systems. A clean production service does not help if a poisoned model is what you deployed.

A practical shift is to treat training jobs as first-class production workloads. That means controlled access, clear ownership, consistent review, and a standard path for runs. It also means fewer “run this one script from my laptop” moments.

You do not have to make training perfect on day one. You just need to stop the habit of informal changes that nobody tracks. Most secure pipelines start by removing the easiest paths for silent edits.

Controls for Data: Protect the Inputs Before You Protect the Model

Lock down data sources like they are money

In most AI startups, data is either the product or the fuel that creates the product. Yet many teams store raw data in buckets that have broad read access, wide write access, and weak monitoring. If an attacker can write to your “trusted” data bucket, they can poison you at scale.

Start by separating data zones. Raw ingestion should not be the same place as curated training sets. Curated sets should not be editable by every engineer. If you want a quick win, make “write access” rare and “read access” normal. Most pipelines only need a small number of writers.
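One way to keep the zone split honest is to write it down as reviewed data. The sketch below is a minimal Python illustration, not a real access-control system: the zone names and principals (`svc-ingest`, `svc-curate`, `svc-train`, `ml-eng`, `data-eng`) are hypothetical, and actual enforcement would live in your storage or cloud IAM policies. A file like this mainly serves as a single source of truth you can diff in review and audit for writer creep.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Zone:
    """A storage zone with an explicit, short list of principals that may write to it."""
    name: str
    writers: frozenset
    readers: frozenset = field(default_factory=frozenset)


# Hypothetical layout: raw ingestion and curated training sets are separate zones,
# and each one has exactly one automated writer.
ZONES = {
    "raw": Zone("raw",
                writers=frozenset({"svc-ingest"}),
                readers=frozenset({"svc-curate", "data-eng"})),
    "curated": Zone("curated",
                    writers=frozenset({"svc-curate"}),
                    readers=frozenset({"svc-train", "ml-eng", "data-eng"})),
}


def can_write(principal: str, zone: str) -> bool:
    return principal in ZONES[zone].writers


def can_read(principal: str, zone: str) -> bool:
    z = ZONES[zone]
    # Writers can also read what they write.
    return principal in z.readers or principal in z.writers


def audit(max_writers: int = 2) -> list:
    """Return the zones whose writer list has grown past the agreed limit."""
    return [z.name for z in ZONES.values() if len(z.writers) > max_writers]
```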

Also, treat third-party connectors as risky until proven safe. If a data feed can be changed upstream, your model can be changed downstream. Build a habit of checking who controls the source and what guarantees they provide.

Use simple data checks that catch real poison

Many teams do schema checks and stop there. Schema checks are useful, but poisoned data often passes them. The best checks are simple and behavior-based. You want to know if the data distribution changed in a way that matters.

A good starting point is to track basic stats that are hard to fake at scale: counts, ranges, missing rate, top categories, and sudden spikes. If a feature suddenly has a new top value, ask why. If a label rate jumps, ask why. If a rare class becomes common overnight, do not ignore it.

These checks do not need fancy math to be effective. They need to run every time, with alerts that reach a real person. A secure pipeline does not rely on someone “noticing a graph.”
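To make the “basic stats” idea concrete, here is a small Python sketch that profiles one column and compares it against a stored baseline. The thresholds (`max_count_change`, `max_missing_jump`, `max_share_jump`) are placeholder assumptions you would tune per column, and the function names are illustrative.

```python
from collections import Counter


def profile(values: list) -> dict:
    """Cheap per-column stats: row count, missing rate, most common value and its share."""
    n = len(values)
    present = [v for v in values if v is not None]
    top_value, top_count = Counter(present).most_common(1)[0] if present else (None, 0)
    return {
        "count": n,
        "missing_rate": (n - len(present)) / n if n else 0.0,
        "top_value": top_value,
        "top_share": top_count / len(present) if present else 0.0,
    }


def out_of_range_share(values: list, lo: float, hi: float) -> float:
    """Fraction of non-missing numeric values outside the expected [lo, hi] range."""
    nums = [v for v in values if v is not None]
    return sum(1 for v in nums if not lo <= v <= hi) / len(nums) if nums else 0.0


def compare(baseline: dict, current: dict, max_count_change: float = 0.5,
            max_missing_jump: float = 0.05, max_share_jump: float = 0.2) -> list:
    """Return human-readable alerts; an empty list means nothing crossed a threshold."""
    alerts = []
    if baseline["count"] and abs(current["count"] - baseline["count"]) / baseline["count"] > max_count_change:
        alerts.append("row count changed sharply")
    if current["missing_rate"] - baseline["missing_rate"] > max_missing_jump:
        alerts.append("missing rate jumped")
    if current["top_value"] != baseline["top_value"]:
        alerts.append(f"new top value: {current['top_value']!r}")
    if current["top_share"] - baseline["top_share"] > max_share_jump:
        alerts.append("top value share spiked")
    return alerts
```

You would run `compare` on every column each time a training snapshot is built, including the label column, where a jump in `top_share` is exactly the “label rate jumps” case, and send any non-empty alert list to a channel someone actually watches.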

Add “trust tags” to your data

One of the most helpful habits is to label data by trust level. For example, human-reviewed labels are not the same as user-reported labels. Vendor labels are not the same as internal labels. Automatically scraped data is not the same as data from signed partners.

When you tag trust level, you can control what data is allowed into high-stakes models. You can also test models trained on higher-trust slices to see if outcomes improve. Attackers often target the weakest input path. Trust tags make that path visible.

In early-stage startups, this can be as simple as a column or metadata field. The value is not the format. The value is the discipline.
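A trust tag really can be just a field. The Python sketch below assumes each row is a dict carrying a `trust` value. The five levels, and especially their ordering, are assumptions for illustration, since only you know how a vendor source compares with an internal one in your setup.

```python
from collections import Counter
from enum import IntEnum


class Trust(IntEnum):
    """Hypothetical trust levels; the ordering is an assumption to adapt to your sources."""
    SCRAPED = 0
    USER_REPORTED = 1
    VENDOR = 2
    INTERNAL = 3
    HUMAN_REVIEWED = 4


def training_rows(rows, min_trust: Trust) -> list:
    """Keep only rows whose trust tag meets the bar set for this model."""
    return [r for r in rows if r["trust"] >= min_trust]


def trust_mix(rows) -> dict:
    """Share of rows per trust level, useful for spotting a weak source that suddenly grows."""
    counts = Counter(Trust(r["trust"]).name for r in rows)
    total = sum(counts.values())
    if not total:
        return {}
    return {name: n / total for name, n in counts.items()}
```

`trust_mix` is worth recording per snapshot: if the user-reported share doubles overnight, the weakest input path is growing, whether from an attack or a bug.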

Prevent accidental leaks with “need to know” storage

A common leak pattern is “we dumped raw logs to debug” and then forgot they exist. Another pattern is “we exported a dataset for labeling” and included extra columns that were not needed. Over time, those copies multiply and become impossible to track.

A practical control is to create a standard “safe export” format. It contains only what the next step needs, nothing more. You also want exports to be time-limited when possible, with access that expires. If your team has to share data, make it a controlled act, not an informal one.
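A “safe export” can start as a column allowlist plus an expiry time attached to the export itself. This Python sketch assumes rows are dicts; `LABELING_EXPORT_COLUMNS` and the 72-hour default are hypothetical choices. Real expiry also needs the storage side to honor it (for example, short-lived signed links or a deletion job), which is outside this snippet.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical allowlist for a labeling export: only what the labelers need.
LABELING_EXPORT_COLUMNS = ("record_id", "text", "current_label")


def safe_export(rows, allowed_columns, ttl_hours: int = 72, now=None) -> dict:
    """Copy only allowlisted columns and stamp the export with an expiry time."""
    now = now or datetime.now(timezone.utc)
    clean = [{c: row[c] for c in allowed_columns if c in row} for row in rows]
    return {
        "rows": clean,
        "columns": list(allowed_columns),
        "expires_at": (now + timedelta(hours=ttl_hours)).isoformat(),
    }


def is_expired(export: dict, now=None) -> bool:
    now = now or datetime.now(timezone.utc)
    return now >= datetime.fromisoformat(export["expires_at"])
```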

Even if you are not in a regulated space, this helps you move faster in sales. Buyers ask about data handling. If you can explain it clearly, you build trust early.

Controls for Code and Dependencies: Stop Supply Chain Surprises

Pin what you run, or you will not know what changed

If your training job pulls “latest” versions of libraries, you are inviting surprises. A secure pipeline pins versions. That means your environment is repeatable, and updates are intentional. It also means that if a dependency is compromised, you can quickly see whether you were exposed.

A simple way to start is to lock dependency versions in a single place that is reviewed. For container builds, use a known base image. For Python, pin package versions. For system libraries, keep the build steps stable. Your goal is not to be perfect. Your goal is to reduce unknowns.

Repeatability is security. Attackers want unknowns because unknowns hide their work.
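A CI check can enforce pinning so it does not depend on reviewers noticing. The Python sketch below flags lines in a pip-style requirements file that are not pinned to one exact `==` version. It is a simplified parser written for illustration, not a full implementation of the requirement specifier grammar; pip's own hash-checking mode (`--require-hashes`) is a stronger option if you can adopt it.

```python
import re

# Package name, optional extras like pkg[extra], then an exact "==" version with no wildcards.
_PINNED = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s,*;]+")


def unpinned(requirements_text: str) -> list:
    """Return requirement lines that are not pinned to one exact version."""
    bad = []
    for raw in requirements_text.splitlines():
        # Drop comments and a trailing line-continuation backslash.
        line = raw.split("#", 1)[0].strip().rstrip("\\").strip()
        if not line or line.startswith("-"):  # blanks and pip options such as -r, --hash
            continue
        spec = line.split(";", 1)[0].strip()  # drop environment markers
        if not _PINNED.fullmatch(spec):
            bad.append(spec)
    return bad
```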

Use signed artifacts and checksums where it matters

When you train a model and store it, that artifact becomes a high-value object. If it can be changed silently, you do not really control your system. A strong control is to store artifacts with hashes and verify them before deploy.

This sounds heavy, but it can be lightweight. You can compute a hash for the model file and store it in the model registry metadata. Your deploy step checks the hash. If it does not match, deploy fails and someone gets alerted. This one step blocks a lot of quiet model swaps.

The same idea applies to data snapshots and feature sets. If you can hash the input bundle for a training run, you can later prove what the model learned from.
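The hash-then-verify step might look like this in Python. The function names and the `HashMismatch` exception are illustrative, and where the expected hash is stored (registry metadata, a manifest, a database) depends on your setup. `bundle_sha256` applies the same idea to a data snapshot made of several files.

```python
import hashlib
from pathlib import Path


def sha256_file(path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large model artifacts do not need to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def bundle_sha256(paths) -> str:
    """One hash for a set of files, independent of the order they are listed in.

    Assumes file names are unique within the bundle.
    """
    h = hashlib.sha256()
    for p in sorted(Path(x) for x in paths):
        h.update(p.name.encode() + b"\0" + sha256_file(p).encode() + b"\n")
    return h.hexdigest()


class HashMismatch(Exception):
    pass


def verify_before_deploy(artifact_path, expected_sha256: str) -> str:
    """Fail the deploy if the artifact is not byte-for-byte the one that was registered."""
    actual = sha256_file(artifact_path)
    if actual != expected_sha256:
        raise HashMismatch(f"{artifact_path}: expected {expected_sha256}, got {actual}")
    return actual
```

In a deploy job you would read the expected hash from the registry, call `verify_before_deploy`, and let the exception fail the job and trigger the alert. Deploying by hash rather than by a mutable tag is what makes this check meaningful.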

Reduce notebook risk without banning notebooks

Notebooks are useful. The problem is when notebooks become production without review. A simple control is to draw a clear line: notebooks can explore, but a model that ships must be trained by a reviewed script or pipeline job.

You can keep a “research” area and a “production” area. Research is flexible. Production is controlled. You do not need to slow down research to keep production safe. You just need a step where work moves from one world to the other with checks.

Teams that make this change usually see a second benefit: fewer “works on my machine” problems, and faster debugging.

Keep secrets out of training code by design

Secrets leak when they are easy to paste. If your pipeline encourages people to paste keys into config files, keys will leak. Instead, make the safe path the easy path.

Use a secrets manager, or at least environment variables that are injected at runtime. Make sure logs do not print secrets by accident. Rotate keys on a schedule. Keep service accounts narrow, so one leaked key cannot do everything.

One very practical control is to run a secret scan in CI. It catches many issues early. It also teaches the team a habit: “keys do not go in code.”
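A secret scan can start very small. The Python sketch below checks text line by line against a few regular expressions. The rule set is deliberately tiny and illustrative (the AWS rule matches the well-known `AKIA` access key ID prefix); dedicated secret scanners cover far more formats with fewer false positives, so treat this as a picture of the mechanism rather than a replacement.

```python
import re
from pathlib import Path

# Illustrative rules only; dedicated scanners ship far larger and better-tuned rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)(?:api[_-]?key|secret|token|password)\s*[:=]\s*['\"][^'\"]{8,}['\"]"),
}


def scan_text(text: str, source: str = "<string>") -> list:
    """Return (source, line number, rule name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((source, lineno, rule))
    return findings


def scan_files(paths) -> int:
    """CI entry point: print findings and return a non-zero exit code if any were found."""
    findings = []
    for p in paths:
        findings += scan_text(Path(p).read_text(errors="ignore"), source=str(p))
    for source, lineno, rule in findings:
        print(f"{source}:{lineno}: possible secret ({rule})")
    return 1 if findings else 0
```

In CI you would pass the changed files to `scan_files` and fail the build when it returns a non-zero value.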

Controls for Training: Make Runs Verifiable and Hard to Tamper With

Use a “training manifest” for every model

A training manifest is a plain record of what created the model. It includes data snapshot IDs, code commit, config values, feature version, and the identity of the job runner. It can also include the evaluation metrics and the approval step.

This is not paperwork. It is your safety net. When a model behaves oddly, you can trace the exact run. When you need to prove to a buyer that your system is controlled, you can show how models are produced. When you want to file patents, a manifest also helps you capture what is unique about your training method and data use.
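A manifest does not need special tooling. Here is a minimal Python sketch using a dataclass. The field names are assumptions based on the items listed above, and `input_fingerprint` is an illustrative helper that hashes only the inputs, so two runs built from the same data, code, features, and config share a fingerprint no matter who ran them.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class TrainingManifest:
    """Plain record of what produced a model. Field names are illustrative."""
    model_name: str
    data_snapshot_ids: list
    code_commit: str
    feature_version: str
    config: dict
    runner: str  # identity of the job runner or service account
    metrics: dict = field(default_factory=dict)
    approved_by: Optional[str] = None
    created_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def input_fingerprint(self) -> str:
        """Hash of the inputs only (snapshot order is assumed not to matter)."""
        inputs = {
            "data_snapshot_ids": sorted(self.data_snapshot_ids),
            "code_commit": self.code_commit,
            "feature_version": self.feature_version,
            "config": self.config,
        }
        return hashlib.sha256(json.dumps(inputs, sort_keys=True).encode()).hexdigest()

    def to_json(self) -> str:
        record = asdict(self)
        record["input_fingerprint"] = self.input_fingerprint()
        return json.dumps(record, sort_keys=True, indent=2)
```

Writing `to_json()` next to the model artifact, with the artifact hash stored alongside it, ties the model file back to the exact run that produced it.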

Many founders underestimate how much an IP story improves when you can explain your method clearly. Tran.vc helps teams capture that story and turn it into filings that build real leverage. You can apply anytime here: https://www.tran.vc/apply-now-form/

Separate “who can run” from “who can approve”

In secure systems, the person who can execute a process is not always the person who can push it to production. For ML, this is a powerful control. Many pipeline failures happen because someone runs an experiment and it accidentally becomes the deployed model.

A simple approach is to keep experimentation open but deployment strict. Engineers can run training jobs. Only a smaller group can mark a model as “approved for production.” This reduces risk without crushing speed.

Even in a small team, you can do this with a lightweight process. The key is to avoid a world where “any run can become prod.”
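As a lightweight illustration, the run/approve split can be a check inside your deploy tooling. This Python sketch assumes manifests are plain dicts with a `runner` field, and `APPROVERS` is a hypothetical group. The rule that a runner cannot approve their own model (`allow_self_approval=False`) is an optional, stricter assumption that a very small team may need to relax.

```python
# Hypothetical approver group; in practice this would come from your identity provider.
APPROVERS = frozenset({"alice", "bob"})


class ApprovalError(Exception):
    pass


def approve_for_production(manifest: dict, approver: str,
                           approvers: frozenset = APPROVERS,
                           allow_self_approval: bool = False) -> dict:
    """Return a copy of the manifest marked for production, or raise if the approver may not do that."""
    if approver not in approvers:
        raise ApprovalError(f"{approver!r} is not in the production approver group")
    if approver == manifest.get("runner") and not allow_self_approval:
        raise ApprovalError("the runner of a training job cannot approve its own model")
    return {**manifest, "approved_by": approver, "stage": "production"}


def is_deployable(manifest: dict, approvers: frozenset = APPROVERS) -> bool:
    """Deploy tooling calls this; any run that was not explicitly approved is rejected."""
    return manifest.get("stage") == "production" and manifest.get("approved_by") in approvers
```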

Add a “known good” baseline and compare every time

A secure pipeline uses comparisons. Every new model is judged against a baseline model that is already trusted. You do not only check absolute metrics. You check for unexpected changes.

This can be as simple as: the new model must not get worse on key slices, and it must not change key outputs in surprising ways. Slice testing is important because attacks and errors often show up in a small region of the input space, not in the overall average.

If you have a set of “golden inputs” that represent important user cases, keep them and run them every time. When results shift, you pause and investigate. This blocks a lot of slow damage.
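A baseline gate can combine slice metrics with golden inputs. In the Python sketch below, metrics are assumed to be “higher is better” per named slice, `max_drop` is a placeholder tolerance, and the predict functions stand in for your trusted and candidate models. Exact equality on golden outputs suits labels or decisions; for continuous scores you would compare within a tolerance instead.

```python
from typing import Callable, Dict, Iterable, List


def slice_regressions(baseline: Dict[str, float], candidate: Dict[str, float],
                      max_drop: float = 0.01) -> Dict[str, tuple]:
    """Slices where the candidate is worse than the baseline by more than max_drop.

    A slice missing from the candidate's results also counts as a regression.
    """
    out = {}
    for name, base in baseline.items():
        cand = candidate.get(name)
        if cand is None or base - cand > max_drop:
            out[name] = (base, cand)
    return out


def golden_changes(baseline_predict: Callable, candidate_predict: Callable,
                   golden_inputs: Iterable) -> List:
    """Golden inputs whose output changed between the trusted model and the candidate."""
    return [x for x in golden_inputs if baseline_predict(x) != candidate_predict(x)]


def gate(baseline_scores, candidate_scores, baseline_predict, candidate_predict, golden_inputs):
    """Return (passed, reasons). Any slice regression or golden-output change pauses the rollout."""
    reasons = [f"slice {n!r}: {b} -> {c}" for n, (b, c) in
               slice_regressions(baseline_scores, candidate_scores).items()]
    reasons += [f"golden input changed: {x!r}" for x in
                golden_changes(baseline_predict, candidate_predict, golden_inputs)]
    return not reasons, reasons
```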

Controls for Serving: Protect the Model in the Wild

Rate limits and quotas are security controls, not just billing tools

Rate limits stop extraction and abuse. They also stop accidental runaway usage. If a user can query your model endlessly, they can learn it and copy it. If a user can send huge payloads, they can raise your costs or crash your service.

Set limits that match your product. For B2B tools, you can usually be strict. Most legitimate customers will accept limits if you explain them well. If you need higher limits, make them part of a paid plan or an approved contract. Security often becomes easier when usage is intentional.
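A per-client token bucket is one common way to implement such limits. The Python sketch below is in-process only and assumes requests are already tied to an authenticated `client_id`; the plan names and numbers are hypothetical. A service running on several machines would keep the bucket state in a shared store instead.

```python
import time
from typing import Callable, Dict, Tuple


class TokenBucket:
    """Allows short bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill based on elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class RateLimiter:
    """One bucket per client; limits come from the client's plan (hypothetical plan names)."""

    def __init__(self, plans: Dict[str, Tuple[float, float]], clock: Callable[[], float] = time.monotonic):
        self.plans = plans  # plan name -> (rate per second, burst capacity)
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, client_id: str, plan: str, cost: float = 1.0) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            rate, capacity = self.plans[plan]
            bucket = self.buckets[client_id] = TokenBucket(rate, capacity, self.clock)
        return bucket.allow(cost)
```

The `cost` argument lets you charge large payloads or expensive endpoints more than one token, which covers the cost-inflation case as well as raw request volume.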

Add input validation that matches model risk

If your model controls something risky, you need stronger input checks. If it is a low-stakes recommendation system, you can be lighter. The mistake is using the same input policy for every model.

For LLM or agent systems, the safest pattern is to treat user text as untrusted and keep tool actions behind a strong policy. If the model can call tools, those tools should have their own allow rules. Do not rely on the model to “choose well.” The model is not a security boundary.
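Here is a minimal Python sketch of “tools have their own allow rules.” The model only proposes a call; plain code decides. The tool names, argument sets, and refund cap are hypothetical, and `user_context` is assumed to come from your authentication layer, never from model output.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class ToolRule:
    allowed_args: frozenset
    check: Callable[[dict, dict], bool]  # (arguments, user context) -> allowed?


# Hypothetical tools and rules. They are enforced in code, outside the model.
TOOL_RULES: Dict[str, ToolRule] = {
    "get_order_status": ToolRule(
        frozenset({"order_id"}),
        lambda args, ctx: args["order_id"] in ctx.get("own_order_ids", ())),
    "issue_refund": ToolRule(
        frozenset({"order_id", "amount"}),
        lambda args, ctx: ctx.get("role") == "support_agent"
        and isinstance(args["amount"], (int, float)) and 0 < args["amount"] <= 100),
}


def authorize_tool_call(name: str, args: dict, user_context: dict) -> Tuple[bool, str]:
    """Decide on a tool call the model proposed, using only the rules and the caller's identity."""
    rule = TOOL_RULES.get(name)
    if rule is None:
        return False, f"tool {name!r} is not on the allowlist"
    if set(args) != rule.allowed_args:
        return False, "unexpected or missing arguments"
    if not rule.check(args, user_context):
        return False, "policy check failed"
    return True, "ok"
```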

For classic ML, you still want checks for format, length, and known weird cases. Many attacks start by breaking parsers, not by beating the classifier.
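For a classic tabular endpoint, validation can reject bad requests before parsing surprises reach the model. The Python sketch below assumes a JSON body with two numeric features; `MAX_BODY_BYTES` and `FEATURE_RANGES` are hypothetical values you would derive from your own schema and training data.

```python
import json

MAX_BODY_BYTES = 64_000  # hypothetical cap; size it to your largest legitimate request
# Hypothetical feature schema: name -> (min, max) accepted values.
FEATURE_RANGES = {"amount": (0.0, 1_000_000.0), "account_age_days": (0.0, 36_500.0)}


def validate_request(body: bytes) -> dict:
    """Reject oversized, malformed, or out-of-range requests before they reach the model."""
    if len(body) > MAX_BODY_BYTES:
        raise ValueError("payload too large")
    try:
        payload = json.loads(body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        raise ValueError("body is not valid JSON") from None
    if not isinstance(payload, dict) or set(payload) != set(FEATURE_RANGES):
        raise ValueError("unexpected or missing fields")
    clean = {}
    for name, (lo, hi) in FEATURE_RANGES.items():
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise ValueError(f"{name} must be a number")
        # NaN fails every comparison and +/-inf falls outside the range, so both are rejected here.
        if not lo <= value <= hi:
            raise ValueError(f"{name} is out of range")
        clean[name] = float(value)
    return clean
```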

Log what matters, but do not log secrets

Serving logs help you detect abuse, drift, and targeted attacks. But logs can also become a leak if they store personal data or prompts. You want logs that capture the shape of requests, the identity of clients, and key outcomes, without storing sensitive content when it is not needed.

This is a place where many teams take an extreme approach and regret it. Logging nothing makes you blind. Logging everything creates risk. The best path is selective logging with clear retention rules.
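Selective logging might look like the Python sketch below. It records which fields arrived, how big the request was, who sent it, and what happened, but not the content itself. The field names are illustrative. The keyed fingerprint (built with the standard library's `hmac` module) is an assumption about what is useful: it lets you see the same input being sent thousands of times, which can be a sign of probing or extraction, without storing the input. Retention is enforced by your log store, not by this function.

```python
import hashlib
import hmac
import json
import logging
import time

logger = logging.getLogger("serving")


def log_request(client_id: str, payload: dict, outcome: str, latency_ms: float, fp_key: bytes) -> dict:
    """Log the shape of a request and its outcome, never its raw content."""
    canonical = json.dumps(payload, sort_keys=True).encode()
    record = {
        "ts": round(time.time(), 3),
        "client": client_id,
        "fields": sorted(payload),  # which fields were sent, not their values
        "size_bytes": len(canonical),
        "outcome": outcome,
        "latency_ms": round(latency_ms, 1),
        # Keyed hash: groups identical inputs without storing them. Keep fp_key out of the logs.
        "input_fp": hmac.new(fp_key, canonical, hashlib.sha256).hexdigest()[:16],
    }
    logger.info(json.dumps(record))
    return record
```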