Invented by Noritsugu Kanazawa and Neal Wadhwa

Welcome! Today we’re breaking down a patent application that rethinks how machines can edit and analyze images, especially when devices don’t have much computing power. If you’ve ever wondered how your phone erases unwanted people from your photos, or how apps enhance blurry pictures in an instant, this is the kind of technology behind it. Let’s explore how it works, why the market needs it, the science behind it, and what makes this invention stand out in the world of image processing.

Background and Market Context

Images are everywhere today, from social media posts to professional photography. But as cameras get better, the images they capture get bigger. Higher resolution means sharper, clearer photos, but it also means bigger files. Processing these files requires more computing power, and not all devices have that luxury.

Think about your smartphone. It fits in your pocket, but the pictures it takes are huge. You want to edit those pictures, maybe to remove a stranger from the background or sharpen a blurry face. But running powerful editing tools on a phone can be slow or might even crash the app, because editing big images takes a lot of memory and processing power. Laptops and desktops handle this better, but more and more people want to edit, filter, and share photos straight from their phones or tablets.

People expect quick, high-quality edits. No one wants to wait for a photo to process, and no one wants to deal with a device that gets hot or runs out of battery because an app is working too hard. At the same time, everyone wants features like object removal, face beautification, sharpening, and more. What’s the solution?

The answer lies in using smart computer programs—called machine learning models—designed to edit images. These models can do everything from color correction to removing unwanted objects. But here’s the challenge: the better these models get, the more resources they use. High-end models might work well on a server or a desktop, but they struggle on phones or other small devices.

The market is huge. Billions of devices—phones, tablets, even cameras—could benefit from smarter, faster image editing. App developers want to offer advanced features without draining your battery or making you wait. Camera makers want their products to stand out with instant, on-device editing. Even businesses want to process images quickly for things like security cameras or smart doorbells.

But so far, the trade-off has been clear: edit at high quality, but slowly and with lots of battery use, or edit quickly but with lower quality. This patent application addresses this exact problem. It offers a way to edit images with high quality and speed, even on devices that have limited power or memory.

In short, the market needs a smarter way to process images. It needs a method that is fast, doesn’t use too much memory, and still delivers great results. That’s where this invention comes in.

Scientific Rationale and Prior Art

Let’s talk about the science behind editing images with computers. Computers see images as a set of numbers—pixels. To change an image, you have to change those numbers. Simple edits like brightness or cropping are easy, but advanced edits, like removing an object or sharpening a blurry area, are much harder. They often require computers to “guess” what should be there instead.

Machine learning models, especially neural networks, have become the go-to tools for these tasks. They learn from lots of examples—thousands or even millions of images—so they can make smart guesses when editing new images. Tasks like inpainting (filling in missing parts), deblurring, recoloring, and smoothing all use these models.

But there’s a problem: neural networks need a lot of memory and computing power, especially for big, high-resolution images. The more detail in the image, the harder the model has to work. On a phone or other small device, this can slow things down or even make editing impossible.
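To get a feel for the scale, here is a rough back-of-the-envelope calculation. The numbers are illustrative assumptions rather than figures from the patent application: a 12-megapixel photo (4000 x 3000), a single intermediate layer with 64 channels, and 4 bytes per value.

```python
def tensor_megabytes(height, width, channels, bytes_per_value=4):
    """Size in MB (10^6 bytes) of one dense tensor of the given shape."""
    return height * width * channels * bytes_per_value / 1e6

# Assumed example: one 64-channel feature map for a 4000 x 3000 photo.
full = tensor_megabytes(3000, 4000, 64)

# The same layer after shrinking the photo 8x along each side.
small = tensor_megabytes(375, 500, 64)

print(f"full resolution: {full:.0f} MB")      # full resolution: 3072 MB
print(f"downscaled:      {small:.0f} MB")     # downscaled:      48 MB
print(f"ratio:           {full / small:.0f}x")  # ratio:           64x
```

A real network holds many such tensors at once, so the exact totals will differ, but the scaling is the point: shrinking each side by 8 cuts the memory for every layer by a factor of 64.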

People have tried a few workarounds. One is to shrink the image and run the model on this smaller version. This saves memory and works faster, but it also loses detail. The edits might look blurry or not match the rest of the image well. Another solution is to edit only a small part of the image at high quality. This works if you only care about a tiny area, but often the context of the whole image is important. For example, when removing a person from a photo, the model needs to know what the background should look like—something you can’t see in a small crop.

Some methods run a model on the whole image at low quality, then use another model to clean up just the modified parts, but this can get complicated. Training these models to work together is also a challenge. Plus, passing information between models without slowing things down is tricky.

Existing solutions often force a trade-off between speed and quality. They might run fast, but the result looks fake or mismatched. Or they deliver great edits, but only on powerful computers, not on your phone. Some methods use clever tricks to save memory, like processing the image in sections, but these can leave visible lines or mismatched colors at the edges.

The scientific goal is to get the best of both worlds: smart edits that use the context of the whole image, but without needing lots of power. That means finding a way for models to work on both low-resolution and high-resolution versions of the image, combining their strengths. The trick is to have one model look at the whole picture in a simple way, then have another model focus just on the tricky parts in detail.

This approach isn’t completely new—researchers have tried cascaded or multi-stage models before. But past methods often fall short when it comes to balancing speed, quality, and memory use, especially on small devices. This patent application brings a new structure to this idea, making it practical for real-world devices like smartphones.

So the science is clear: if you want fast, high-quality edits on any device, you need a new system. It must use models that see the big picture, yet focus on the details, all while saving memory and battery life. This is the gap that the invention fills.

Invention Description and Key Innovations

Now let’s break down what this invention does and why it’s so clever.

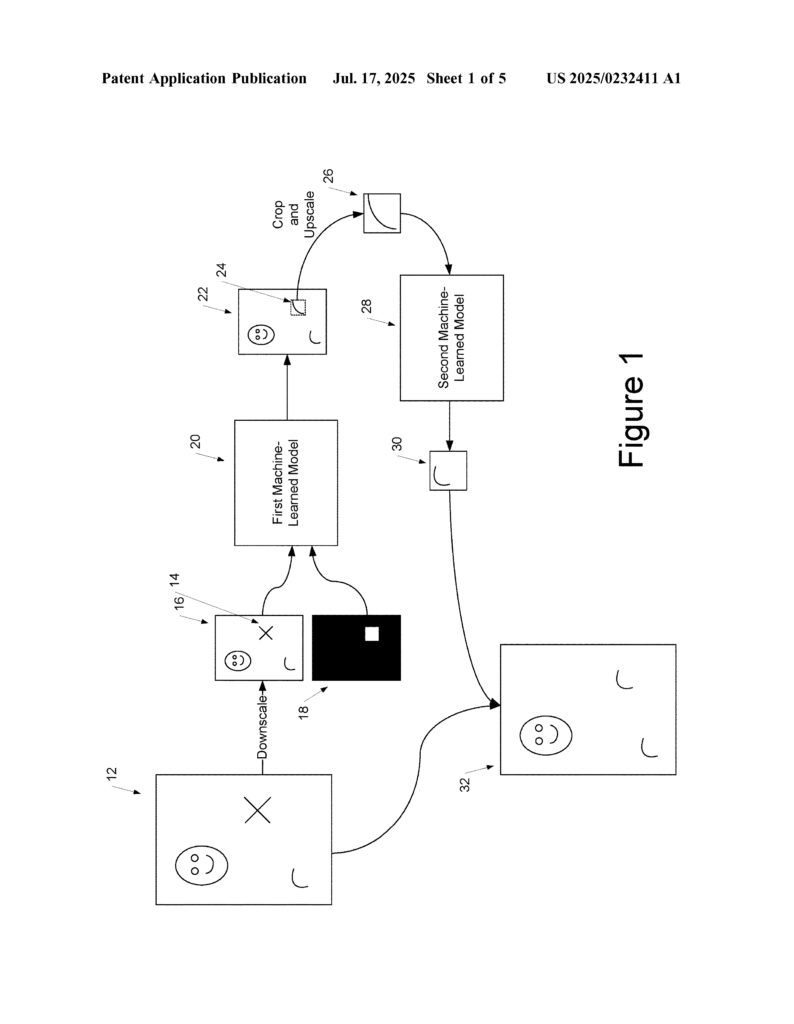

The system is built around two main machine learning models. The first model works on a low-resolution copy of your image. It edits or analyzes the entire picture, but because the image is small, it works quickly and uses little memory. This model can do tasks like inpainting (removing or filling in objects), deblurring, recoloring, and more. It can also handle analysis tasks such as edge detection, face recognition, or locating key points on a human body.

Here’s where it gets interesting. The first model’s output is not the final edit. Instead, the system takes the specific parts that were changed (the areas where edits happened) and cuts them out. These are called “crops” or “extracted portions.” The crops are then upscaled to match the resolution of the original, high-resolution image. Upscaling alone enlarges a crop without restoring fine detail, which is why a second stage is needed.

Now the second model comes in. It’s designed to work on these high-resolution crops, but only on the small parts that need detailed attention. This model refines the edits, making sure they match the rest of the image perfectly. Because it’s only working on small areas, even though the detail is high, it doesn’t use too much memory or slow down the device.

Finally, the refined, high-quality crop is placed back into the original, full-sized image, replacing the part that was edited. The result is an image that looks great, with edits that blend in and match the overall context, but the process was fast and didn’t use much battery or memory.
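The four steps above (edit at low resolution, crop, upscale and refine, paste back) can be sketched in a few lines of Python with NumPy. This is only an illustration of the data flow, not the patent’s implementation: `coarse_model` and `refine_model` are hypothetical stand-ins for the two trained networks, the function names and the integer `factor` are assumptions, and passing the original high-resolution crop to the second stage is assumed here for the sake of the example.

```python
import numpy as np

def downscale(img, factor):
    """Average-pool by an integer factor (height and width must be divisible by it)."""
    h, w, c = img.shape
    return img.reshape(h // factor, factor, w // factor, factor, c).mean(axis=(1, 3))

def upscale(img, factor):
    """Nearest-neighbour upsampling by an integer factor."""
    return img.repeat(factor, axis=0).repeat(factor, axis=1)

def coarse_model(low_res, mask_low):
    """Hypothetical stand-in for the first model: fill masked pixels
    with the mean colour of the unmasked pixels."""
    out = low_res.copy()
    out[mask_low] = low_res[~mask_low].mean(axis=0)
    return out

def refine_model(crop_up, crop_orig, mask_crop):
    """Hypothetical stand-in for the second model: keep original pixels
    outside the mask and the upscaled coarse edit inside it."""
    return np.where(mask_crop[..., None], crop_up, crop_orig)

def edit(image, mask, box, factor=4):
    """box = (top, left, bottom, right) in full-resolution pixels, aligned to `factor`."""
    t, l, b, r = box
    # 1. Run the first model on a small copy of the whole image.
    low = downscale(image, factor)
    mask_low = downscale(mask[..., None].astype(float), factor)[..., 0] > 0
    coarse = coarse_model(low, mask_low)
    # 2. Cut out the edited region and bring it back to full resolution.
    crop_up = upscale(coarse[t // factor:b // factor, l // factor:r // factor], factor)
    # 3. Refine only that crop at full resolution.
    refined = refine_model(crop_up, image[t:b, l:r], mask[t:b, l:r])
    # 4. Paste the refined crop back into the original image.
    out = image.copy()
    out[t:b, l:r] = refined
    return out

# Toy example: a grey 16 x 16 image with a white 4 x 4 "object" to remove.
image = np.full((16, 16, 3), 0.5)
image[4:8, 4:8] = 1.0
mask = np.zeros((16, 16), dtype=bool)
mask[4:8, 4:8] = True
result = edit(image, mask, box=(0, 0, 8, 8))
```

In this toy run the white square is replaced by the surrounding grey, and every pixel outside the box is left untouched. The expensive full-resolution step only ever sees the 8 x 8 crop, never the whole image.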

This system can work in a few ways. Sometimes, the user picks what to edit (like marking an object to remove). Other times, the computer can decide on its own, using classification methods to find objects, faces, or other elements to modify.
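Either way, the selection usually ends up as a mask: a grid of true/false values marking the pixels to change. A minimal sketch of turning that mask into a crop box might look like this. The `mask_to_box` helper, the padding, and the alignment to a multiple of 4 are all assumptions for illustration; the patent application does not prescribe them.

```python
import numpy as np

def mask_to_box(mask, pad=8, align=4):
    """Return (top, left, bottom, right) enclosing all True pixels,
    padded by `pad` and snapped outward to multiples of `align`.
    The box is clamped to the image, so the bottom/right edges stay
    aligned only if the image size is itself a multiple of `align`."""
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    if rows.size == 0:
        return None  # nothing was selected
    h, w = mask.shape
    top = max(rows[0] - pad, 0) // align * align
    left = max(cols[0] - pad, 0) // align * align
    # -(-x // align) is ceiling division, used to round up.
    bottom = min(-(-(rows[-1] + 1 + pad) // align) * align, h)
    right = min(-(-(cols[-1] + 1 + pad) // align) * align, w)
    return int(top), int(left), int(bottom), int(right)

# A 10 x 10 selection inside a 64 x 64 image.
mask = np.zeros((64, 64), dtype=bool)
mask[20:30, 40:50] = True
print(mask_to_box(mask))  # (12, 32, 40, 60)
```

The padding gives the second model some untouched pixels around the edit, which helps the refined crop blend with its surroundings.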

The training approach is worth a look too. The system can be taught to edit images by comparing its results to a “ground truth” or perfect version. It measures how close its edits are to the right answer, then adjusts itself to do better next time. The two models can be trained together, making sure they work as a team.
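One simple way to picture joint training is a single score that adds up the errors of both stages, so that improving either model lowers it. The sketch below assumes a mean absolute error (L1) and a hypothetical `coarse_weight`; the source only says the outputs are compared to a ground truth and that the models can be trained together, so the specific loss is an assumption.

```python
import numpy as np

def l1(a, b):
    """Mean absolute difference between two arrays of the same shape."""
    return float(np.mean(np.abs(a - b)))

def joint_loss(coarse_out, gt_low, refined_crop, gt_crop, coarse_weight=0.5):
    """Weighted sum of the low-resolution error and the refined-crop error.
    The L1 metric and the weighting are illustrative assumptions."""
    return coarse_weight * l1(coarse_out, gt_low) + l1(refined_crop, gt_crop)

# Toy values: the coarse output is off by 1.0 everywhere, the refined crop by 0.25.
gt_low = np.ones((4, 4, 3))
gt_crop = np.ones((8, 8, 3))
loss = joint_loss(np.zeros((4, 4, 3)), gt_low, gt_crop - 0.25, gt_crop)
print(loss)  # 0.75
```

In a real training loop, an automatic-differentiation framework would push this number down by adjusting the weights of both models at once.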

There are extra features too. The models can pass information back and forth, like sharing feature vectors (which are like summaries of what the model knows about an image). They can even add extra information, like a depth channel, which shows how far away things are in the picture.
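Adding a depth channel is mechanically simple: the depth map becomes a fourth layer next to red, green, and blue. A minimal sketch, assuming the depth map has the same height and width as the image (the `add_depth_channel` name is hypothetical):

```python
import numpy as np

def add_depth_channel(rgb, depth):
    """Stack an (H, W) depth map onto an (H, W, 3) image to form an (H, W, 4) input."""
    if rgb.shape[:2] != depth.shape:
        raise ValueError("depth map must match the image height and width")
    return np.concatenate([rgb, depth[..., None]], axis=-1)

rgb = np.zeros((6, 8, 3))
depth = np.ones((6, 8))
rgbd = add_depth_channel(rgb, depth)
print(rgbd.shape)  # (6, 8, 4)
```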

The invention isn’t just for one type of edit. It can do inpainting, edge detection, object recognition, face recognition, and more. It’s flexible and works for all sorts of image analysis, not just modifying pictures but also understanding them.

This idea can run on all kinds of devices. It can be built into camera apps, editing apps, or even run on servers for more power. The models can live on your device or be downloaded from the cloud. They can work together in parallel for even faster results.

What makes this invention special is its balance. It delivers high-quality edits, uses the full context of the image, but only spends lots of computing power where it really matters. It’s like using a wide brush for the background and a fine brush for the details—all in one system.

For users, this means faster edits, longer battery life, and better-looking photos. For developers and device makers, it means they can offer advanced features without needing expensive hardware. It opens the door for smarter, more powerful image processing on any device, anywhere.

Conclusion

The patent application we explored today takes a practical approach to image editing and analysis. By using a two-model structure, one that sees the big picture at low resolution and one that perfects the details at high resolution, it tackles a central challenge of image processing on resource-limited devices. The invention is flexible, efficient, and delivers high-quality results without demanding lots of memory or power.

In a world where everyone wants to do more with their pictures—faster and with better results—this technology is set to be a game changer. Whether you’re a developer, a device maker, or just someone who loves taking photos, this breakthrough makes smarter, more powerful image editing possible for everyone.

To read the full application, go to https://ppubs.uspto.gov/pubwebapp/ and search for publication number 20250232411.