If your product uses AI, you already know the truth: the hardest part is not shipping the model.

The hard part is shipping the model safely, on purpose, and in a way you can defend when a customer, an auditor, or a regulator asks, “How do you know this is okay?”

That is exactly where ISO/IEC 42001 comes in.

ISO/IEC 42001 is the first global standard for an AI Management System (AIMS). In plain words, it is a practical way to run AI work inside a company so you can manage risk, make better choices, and keep improving—without turning your team into a paperwork machine. (ISO)

Many product teams hear “ISO” and think: slow, boring, and only for big companies. But 42001 is different in one key way: it is built for a world where AI systems can change over time, can fail in odd ways, and can harm users even when the code “works.” It pushes you to treat AI like a living product, not a one-time feature you ship and forget. (ISO)

Here is the simple promise of ISO/IEC 42001:

It helps you set up a repeatable system to build, use, and manage AI responsibly—so you can move fast and keep control. (ISO)

And yes, it applies whether you build AI, sell AI, or use AI inside your product or operations. (ISO)

Why should product teams care?

Because product teams are the place where “AI risk” becomes real. Not in a policy doc. Not in a legal deck. In the product backlog. In the data choices. In the launch plan. In the support queue. In the metrics you pick. In the pressure to ship.

ISO/IEC 42001 gives you a shared map for questions product teams struggle with every week, like:

- “Are we allowed to use this data?”

- “What do we tell users this model can and can’t do?”

- “How do we test for bias when the edge cases keep changing?”

- “What do we log, and what do we not log?”

- “Who signs off before we push this to production?”

- “What do we do when the model drifts and quietly gets worse?”

This standard does not exist to make you sound fancy. It exists to make your AI product predictable, traceable, and trustworthy—which is what enterprise buyers, partners, and future investors want to see.

And if you are an early-stage startup, this matters even more. A mature AI management system can become a real advantage in sales and due diligence. It can also reduce messy rework later, when a big customer asks for proof that you run AI in a controlled way.

At Tran.vc, we see one pattern again and again: teams that treat AI governance early do not move slower. They move cleaner. They waste less time. They avoid costly dead ends. And they build stronger foundations that are harder to copy—especially when paired with strong patent and IP strategy.

If you’re building in robotics, AI, or deep tech and you want to build an IP-backed moat while you build your product, you can apply anytime here: https://www.tran.vc/apply-now-form/

What ISO/IEC 42001 really is for product teams

Think of it as “how we run AI here”

ISO/IEC 42001 is not a checklist you complete once. It is a management system, which means it is a way of running your AI work on purpose, the same way strong teams run security, quality, or privacy.

For product teams, this matters because AI work touches many moving parts. Data changes. User behavior changes. Models drift. A “small tweak” to a prompt can change outcomes in ways you did not plan.

This standard asks you to build habits that keep you in control even when the system changes. The goal is not perfection. The goal is repeatable good decisions.

It covers the whole AI lifecycle, not just the model

Most teams focus on training or model choice. ISO/IEC 42001 pushes you to manage the full product story: why you use AI, what data feeds it, how you test it, how you deploy it, how you watch it, and how you fix it when it breaks.

That full view is what enterprise buyers want, even if they do not use ISO language. They want to know you can explain what your AI does and how you keep it safe.

If you want to build a defensible foundation early, this is the mindset that helps you scale without chaos.

Why founders should care even before big customers ask

Early teams often say, “We are too small for standards.” In practice, small teams often benefit the most from clear rules, because each mistake eats a larger share of their limited time.

When you build a light version of ISO/IEC 42001 early, you reduce rework. You also build trust faster with pilots, partners, and seed investors.

This is also a strong place to pair governance with IP strategy. If you build unique control methods, testing approaches, or safety workflows, you may be building patentable assets, not just process.

The core idea: an AI Management System (AIMS)

AIMS is a “system,” not a document

AIMS means you have clear roles, clear decisions, and clear proof. Not because someone loves paperwork, but because AI systems can create harm even when nobody meant to.

A system gives you a way to show that you thought through risks before launch, and that you watch what happens after launch. It also helps you learn from incidents and improve.

If you ever sell to a regulated industry, this kind of system quickly becomes the difference between “nice demo” and “approved vendor.”

What changes for product teams

If you already run agile, you already have a system. You have planning, build, test, release, and learn loops.

ISO/IEC 42001 does not ask you to throw that away. It asks you to add a few control points so the AI parts do not slip through the cracks.

In practice, product teams start treating AI decisions like product decisions that must be explained. Why this data, why this model, why this output format, why this guardrail, why this fallback.

What “good” looks like in real life

Good does not mean long policy docs. Good means your team can answer key questions quickly, using simple proof.

You can point to who approved a risky feature. You can show what tests you ran. You can show what you monitor in production. You can explain what you do when the model fails.

This makes your team faster because you stop arguing the same issues every sprint.

The big subjects inside ISO/IEC 42001

It is mainly about control, clarity, and proof

The standard focuses on things that reduce surprise. It pushes you to define what you are building, what could go wrong, and how you will handle it.

It also pushes you to keep records that are useful. Not records for their own sake, but records that help you learn, defend decisions, and improve the product.

This is especially important for AI because failures are often not obvious until users hit weird edge cases.

It connects people, process, and product

Many teams try to “solve AI safety” with one person or one tool. ISO/IEC 42001 pushes you to connect the whole organization.

Product, engineering, data, legal, support, and leadership each hold part of the risk. If any one part is missing, the product may ship, but the company cannot defend it.

This is why the standard is useful even if you never pursue formal certification. The structure itself makes teams stronger.

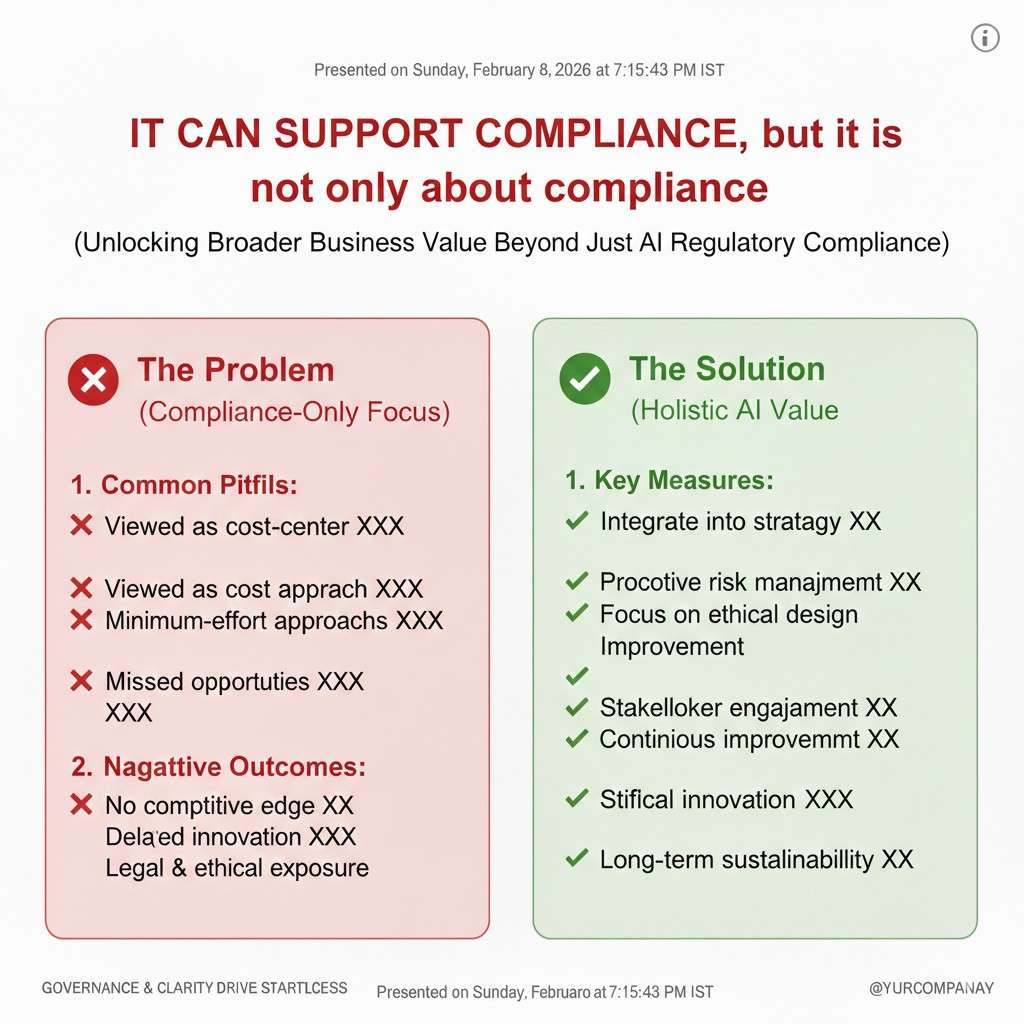

It can support compliance, but it is not only about compliance

Yes, standards can help with audits. But the bigger win is operational: fewer surprises, cleaner launches, faster incident response, and clearer customer answers.

In sales, that shows up as trust. In engineering, it shows up as fewer midnight emergencies. In leadership, it shows up as fewer unknown risks.

The first step: define the “AI scope” of your product

Scope is where most teams get stuck

When teams hear “AI management system,” they often assume it must cover every line of code. That is not realistic.

Scope means you clearly state which parts of the business and product fall inside your AI management system. You define the AI features, the data flows, and the teams involved.

A clear scope stops endless debate and prevents hidden AI usage from slipping into production without review.

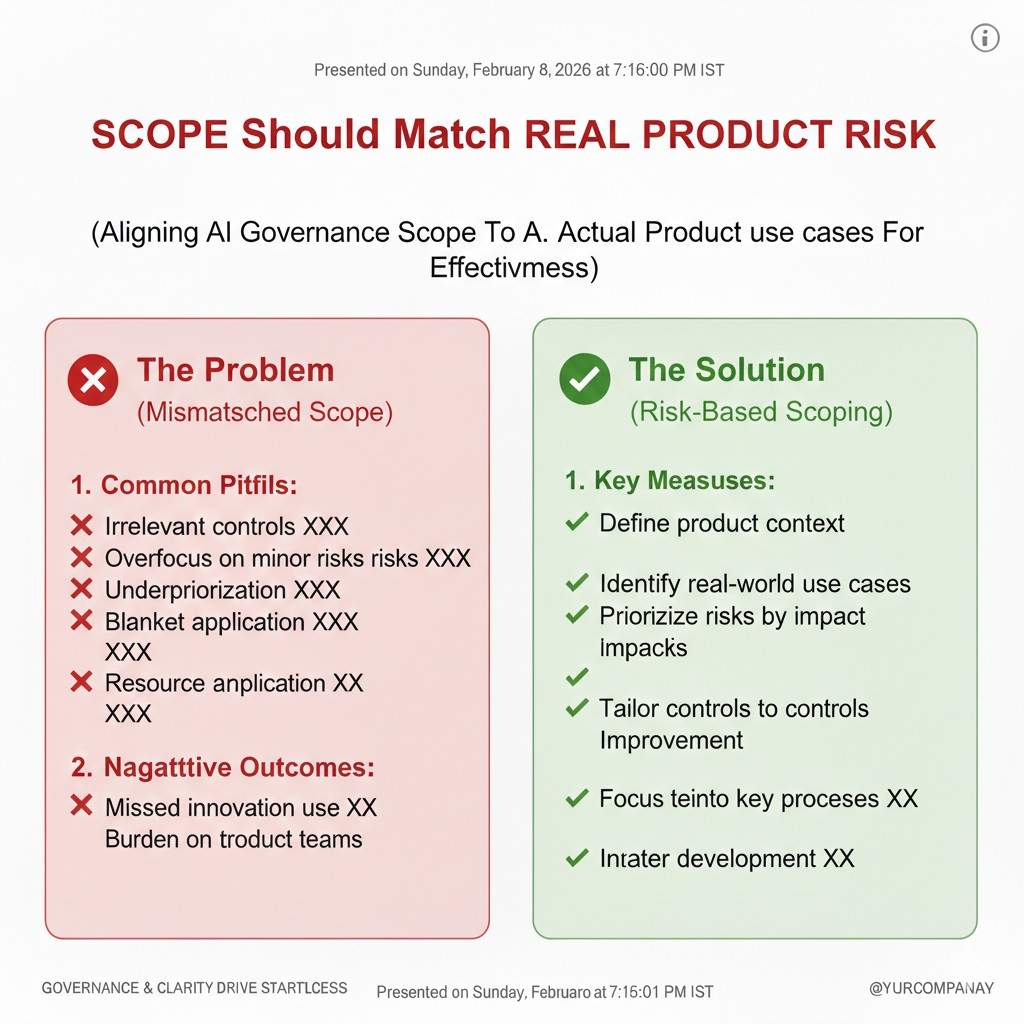

Scope should match real product risk

A small internal AI tool carries a different level of risk than a customer-facing medical triage feature.

ISO/IEC 42001 expects you to be honest about that difference. Your controls should match the level of impact.

For product leaders, this is a key move: you can keep speed for low-risk work while adding stronger steps where the risk is real.

Scope also helps with outsourcing and vendors

If you use third-party models, hosted APIs, data labeling vendors, or external evaluators, that must be part of your scope thinking.

Even if you do not build the model, you still ship the outcome. Customers will hold you responsible.

So a strong scope statement includes vendor dependencies and clear ownership for monitoring and change management.
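
One way to keep a scope statement honest is to write it down as data and check it for gaps. The sketch below is only an illustration: the `ScopeEntry` fields, the feature names, and the `scope_gaps` helper are all assumptions made for this example, not something ISO/IEC 42001 prescribes.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ScopeEntry:
    """One AI feature inside the declared scope (all fields are illustrative)."""
    feature: str
    data_flows: list[str]
    teams: list[str]
    vendors: list[str] = field(default_factory=list)
    monitoring_owner: str | None = None  # who watches it in production
    change_owner: str | None = None      # who reviews model or vendor changes


def scope_gaps(entries: list[ScopeEntry]) -> dict[str, list[str]]:
    """Return, per feature, the scope fields that are still missing."""
    gaps: dict[str, list[str]] = {}
    for entry in entries:
        missing = []
        if not entry.data_flows:
            missing.append("data_flows")
        if not entry.teams:
            missing.append("teams")
        if entry.monitoring_owner is None:
            missing.append("monitoring_owner")
        # A change owner is only required when a vendor is involved.
        if entry.vendors and entry.change_owner is None:
            missing.append("change_owner")
        if missing:
            gaps[entry.feature] = missing
    return gaps


# Hypothetical scope for a small product team.
scope = [
    ScopeEntry(
        feature="support-reply-drafts",
        data_flows=["ticket text -> hosted LLM API -> draft reply"],
        teams=["product", "support", "platform"],
        vendors=["hosted LLM API"],
        monitoring_owner="platform",
    ),
    ScopeEntry(
        feature="internal-search-ranking",
        data_flows=["query logs -> ranking model"],
        teams=["data"],
        monitoring_owner="data",
    ),
]

print(scope_gaps(scope))  # {'support-reply-drafts': ['change_owner']}
```

Here the vendor-backed feature is flagged because nobody owns change management for it yet, which is exactly the kind of gap a scope review is meant to surface.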

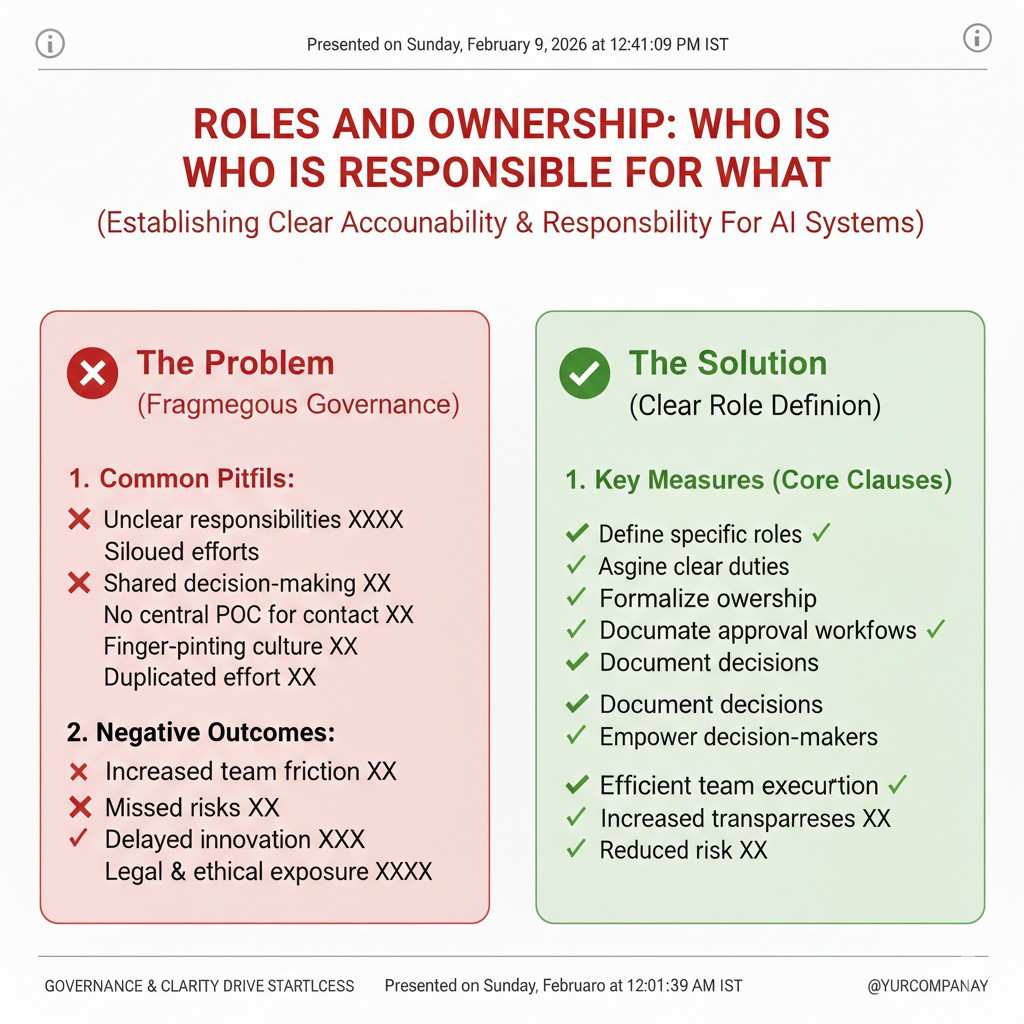

Roles and ownership: who is responsible for what

AI products fail when ownership is unclear

Many AI incidents are not “model problems.” They are “team problems.”

No one owns the label definitions. No one owns the feedback loop. No one owns the decision to launch. No one owns the rollback plan.

ISO/IEC 42001 pushes you to fix that by assigning responsibilities so the work does not fall between teams.
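
A very small sketch of that idea: list the responsibilities named above and check that each one has a named owner. The owner titles and the `unowned` helper are hypothetical, chosen only to make the example concrete.

```python
from __future__ import annotations

# Responsibilities taken from the text above; extend this list for your own product.
REQUIRED_RESPONSIBILITIES = (
    "label definitions",
    "feedback loop",
    "launch decision",
    "rollback plan",
)


def unowned(owners: dict[str, str],
            required: tuple[str, ...] = REQUIRED_RESPONSIBILITIES) -> list[str]:
    """Return responsibilities with no owner, or with a blank owner."""
    return [item for item in required if not owners.get(item, "").strip()]


# Hypothetical ownership map for one AI feature.
owners = {
    "label definitions": "data lead",
    "feedback loop": "",            # listed, but nobody assigned yet
    "launch decision": "product lead",
}

print(unowned(owners))  # ['feedback loop', 'rollback plan']
```

A check like this could run in a launch review so that an empty owner field blocks the discussion early rather than after an incident.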

Product is not the only owner, but product is the connector

Product teams do not need to become policy experts. But product must connect choices across teams.

Product defines user intent, expected behavior, and acceptable failure. Engineering defines technical controls. Data defines input quality and lineage. Support defines what users report. Leadership defines risk appetite.

When product connects these, the AI system becomes manageable instead of chaotic.

Simple ownership makes day-to-day work easier

When ownership is clear, decisions move faster. People know who to ask. Reviews become shorter and less emotional.

This is also where early startups can win. Small teams can set ownership quickly and build habits that scale.

Risk thinking: the heart of ISO/IEC 42001 for product teams

Risk is not only “harm,” it is also “surprise”

Some risks are obvious, like unsafe advice or biased outcomes.

Other risks are quieter, like model drift, data contamination, poor user understanding, or a prompt that leaks private data.

ISO/IEC 42001 pushes you to treat risk as anything that can break trust or cause damage, even if the model still returns “an answer.”

Risk should be tied to product impact

A wrong recommendation in a music app is not the same as a wrong recommendation in a hiring tool.

So you do not measure risk only by model accuracy. You measure it by what happens to the user, the customer, and the business.

This is one of the biggest mindset shifts. Product teams learn to write down harms in plain language and connect them to real flows.

A practical way to start without heavy process

Start with one AI feature. Write the top ways it could fail, using examples.

Then map where you can reduce that risk: better data checks, better UI warnings, better guardrails, stronger monitoring, and a fallback when the model is unsure.

This is not about fear. It is about being prepared.
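
The exercise above can be captured in a tiny risk register. This is a minimal sketch under stated assumptions: the 1 to 5 scales and the severity times likelihood score are a common risk-matrix convention, not a formula taken from ISO/IEC 42001, and the example failures are invented.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Risk:
    failure: str            # plain-language failure, ideally with an example
    severity: int           # 1 (minor) to 5 (serious harm); scale is an assumption
    likelihood: int         # 1 (rare) to 5 (frequent); scale is an assumption
    mitigations: list[str]  # data checks, UI warnings, guardrails, monitoring, fallbacks

    @property
    def score(self) -> int:
        return self.severity * self.likelihood


def review_order(risks: list[Risk]) -> list[Risk]:
    """Highest score first, so the review starts with the worst case."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def unmitigated(risks: list[Risk]) -> list[str]:
    """Failures that have no mitigation written down yet."""
    return [r.failure for r in risks if not r.mitigations]


# Hypothetical register for one AI feature that drafts support replies.
register = [
    Risk("Draft quotes another customer's order details", 5, 2,
         ["redact personal data before the prompt", "fall back to a human-written reply"]),
    Risk("Draft is confidently wrong about the refund policy", 3, 4,
         ["UI label: 'Draft, review before sending'"]),
    Risk("Model returns empty output on very long tickets", 2, 3, []),
]

for risk in review_order(register):
    print(risk.score, risk.failure)
print("No mitigation yet:", unmitigated(register))
```

The scores matter less than the conversation they force: each failure is written in plain language and tied to a concrete way of reducing it.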

Controls: how you keep AI work from going off the rails

Controls are your “quality gates” for AI

In normal software, you have code review, tests, and staged release.

AI needs those too, but with extra checks. You need to validate data, evaluate outputs, and test behavior across edge cases.

ISO/IEC 42001 supports the idea that controls should exist before launch and after launch, because the system can change over time.

Controls should be built into the product workflow

The worst approach is to add a big review at the very end.

The best approach is to add small checks at the moments that matter, like data selection, feature design, training or prompt changes, and release decisions.

When controls are part of how teams already work, they feel light, not heavy.

Controls should be matched to the feature’s risk

You do not need the same depth of review for every AI feature.

High-risk features may need stronger approval, stronger testing, and more monitoring. Low-risk features can move faster.

This is how you keep momentum while still being responsible.
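
Risk-matched controls can be expressed as a simple lookup from risk tier to required gates. The tier names and gate names below are assumptions for illustration; a real team would define its own.

```python
from __future__ import annotations

# Hypothetical gates per risk tier; higher tiers add to the lower ones.
REQUIRED_GATES: dict[str, set[str]] = {
    "low": {"offline_eval"},
    "medium": {"offline_eval", "edge_case_tests", "monitoring_dashboard"},
    "high": {
        "offline_eval",
        "edge_case_tests",
        "monitoring_dashboard",
        "named_approver_signoff",
        "rollback_drill",
    },
}


def missing_gates(tier: str, completed: set[str]) -> set[str]:
    """Return the gates still required before a feature in this tier can ship."""
    if tier not in REQUIRED_GATES:
        raise ValueError(f"unknown risk tier: {tier!r}")
    return REQUIRED_GATES[tier] - completed


def can_release(tier: str, completed: set[str]) -> bool:
    return not missing_gates(tier, completed)


print(can_release("low", {"offline_eval"}))  # True
print(sorted(missing_gates("high", {"offline_eval", "edge_case_tests"})))
# ['monitoring_dashboard', 'named_approver_signoff', 'rollback_drill']
```

Keeping the table small is a deliberate choice in this sketch: low-risk work passes with one check, while high-risk work cannot ship without a named approver and a tested rollback.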

Documentation: what you really need, and what you do not

Documentation is your “memory” and your “proof”

People often hate documentation because it goes stale and stops being useful.

ISO/IEC 42001 expects documentation to serve real needs: to explain the system, to support decisions, and to help you improve.

For product teams, the best documentation is short, clear, and tied to the feature. It should help the next person understand why choices were made.

Focus on decisions, not on long descriptions

The most valuable records are decision records.

Why you chose this dataset. Why you chose this evaluation method. Why you chose this threshold. Why you allowed a feature to launch.

This kind of writing helps you defend the product later and speeds up future work because you do not repeat old debates.
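
A decision record can be as small as a handful of fields. The sketch below shows one possible shape; the field names, the example decision, and the date are hypothetical and only meant to show how short such a record can be.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionRecord:
    title: str
    decided_on: date
    decided_by: str
    choice: str
    reason: str
    alternatives: list[str] = field(default_factory=list)
    revisit_when: str = ""

    def render(self) -> str:
        """Render the record as short plain text that anyone can read."""
        lines = [
            f"Decision: {self.title}",
            f"Date: {self.decided_on.isoformat()}",
            f"Decided by: {self.decided_by}",
            f"We chose: {self.choice}",
            f"Because: {self.reason}",
        ]
        if self.alternatives:
            lines.append("Considered instead: " + "; ".join(self.alternatives))
        if self.revisit_when:
            lines.append(f"Revisit when: {self.revisit_when}")
        return "\n".join(lines)


# Hypothetical record for a launch threshold.
record = DecisionRecord(
    title="Confidence threshold for auto-suggested replies",
    decided_on=date(2024, 1, 15),
    decided_by="product lead",
    choice="Only show suggestions with confidence of 0.8 or higher",
    reason="Below 0.8, reviewers rejected most suggestions in the pilot",
    alternatives=["0.6 threshold with a warning label"],
    revisit_when="the model or the vendor changes",
)

print(record.render())
```

Storing records like this next to the feature, for example in the same repository, keeps them close to the people who will need them later.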

Keep language simple so the whole company can use it

If only one person understands the docs, the docs are not a system.

Write like you are speaking to a smart teammate who just joined. That is the best test.

It also helps in sales, because many customer questions can be answered by the same clear records.

Metrics and monitoring: what you watch after launch

AI needs a “watch loop,” not just a “test loop”

A model can be great in the lab and still fail in the real world.

Real user input is messy. Real data shifts. New edge cases appear. Bad actors try to break your system.

ISO/IEC 42001 supports strong monitoring so you can detect problems early and fix them before they become public incidents.

Monitoring should include product signals, not just model signals

Accuracy is not enough.

Product teams should watch user complaints, drop-offs, confusion, time-to-complete, override rates, and other behavior signals that show the AI is not helping.

These signals often catch issues before your model metrics do.
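
To make this concrete, here is a minimal sketch that turns two of those product signals into rates and compares them with alert thresholds. The threshold values, the field names, and the weekly numbers are assumptions for illustration; real limits should come from your own baseline.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class WindowStats:
    """Counts for one monitoring window, such as one week (illustrative fields)."""
    suggestions_shown: int
    suggestions_overridden: int
    sessions: int
    complaints: int


# Hypothetical alert thresholds.
THRESHOLDS = {"override_rate": 0.30, "complaint_rate": 0.02}


def signal_rates(w: WindowStats) -> dict[str, float]:
    """Convert raw counts into rates, guarding against empty windows."""
    return {
        "override_rate": (w.suggestions_overridden / w.suggestions_shown
                          if w.suggestions_shown else 0.0),
        "complaint_rate": w.complaints / w.sessions if w.sessions else 0.0,
    }


def breaches(w: WindowStats, thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """Return the names of signals that exceed their threshold."""
    rates = signal_rates(w)
    return sorted(name for name, limit in thresholds.items() if rates[name] > limit)


this_week = WindowStats(suggestions_shown=400, suggestions_overridden=150,
                        sessions=1000, complaints=12)

print(signal_rates(this_week))  # {'override_rate': 0.375, 'complaint_rate': 0.012}
print(breaches(this_week))      # ['override_rate']
```

In this example users are overriding more than a third of suggestions, which is a sign the AI is not helping even if offline accuracy still looks fine.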

You need a plan for what happens when monitoring shows trouble

It is not enough to detect problems.

You need a clear response plan: who gets alerted, how you pause or roll back, what you tell customers, and how you prevent the same issue next time.

This is where trust is built. Customers forgive mistakes more easily when you respond fast and clearly.

Supplier and vendor management: when your AI is partly outside your company

Using third-party AI does not remove responsibility

If you ship a feature based on an external model, your customer still sees it as your product.

If something goes wrong, you cannot say, “That was the vendor.” You still own the impact.

ISO/IEC 42001 pushes you to manage vendor risk with clear selection rules, clear monitoring, and clear change handling.

Changes in vendor systems can change your product

A vendor may update their model, change behavior, or change pricing.

Those shifts can break your UX, your safety controls, or your expected output format.

So product teams need a way to detect vendor changes and test them before they hit users.
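
One lightweight way to do this is a fixed set of "golden" prompts that you re-run whenever the vendor changes something, checking both the output format and the answers you recorded earlier. The sketch below assumes a vendor call that returns a JSON string with `label` and `confidence` keys; that format, the prompts, and `fake_vendor_v2` are all invented for this example and stand in for a real API client.

```python
from __future__ import annotations

import json
from typing import Callable

# Hypothetical golden prompts and the labels recorded from the previous vendor version.
BASELINE = {
    "Classify: 'refund not received'": "complaint",
    "Classify: 'love the new update'": "praise",
}
REQUIRED_KEYS = {"label", "confidence"}


def format_problems(raw: str) -> list[str]:
    """Check that a response still matches the output format we depend on."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    return [f"missing key: {key}" for key in sorted(REQUIRED_KEYS - set(data))]


def check_vendor(call_model: Callable[[str], str],
                 baseline: dict[str, str] = BASELINE) -> dict[str, list[str]]:
    """Run every golden prompt and report format breaks or changed labels."""
    report: dict[str, list[str]] = {}
    for prompt, expected in baseline.items():
        raw = call_model(prompt)
        problems = format_problems(raw)
        if not problems:
            label = json.loads(raw)["label"]
            if label != expected:
                problems.append(f"label changed: {expected!r} -> {label!r}")
        if problems:
            report[prompt] = problems
    return report


def fake_vendor_v2(prompt: str) -> str:
    """Stand-in for an updated vendor model; replace with a real client call."""
    if "refund" in prompt:
        return '{"label": "billing", "confidence": 0.91}'
    return "positive"  # the format silently changed to plain text


for prompt, problems in check_vendor(fake_vendor_v2).items():
    print(prompt, "->", problems)
```

An empty report means the golden set still behaves as recorded; anything else is a reason to hold the vendor update back from users until someone has reviewed it.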

Vendor thinking can also create product advantage

If you handle vendors well, you become a safer partner.

That matters in enterprise deals. Many buyers now ask how you manage third-party AI dependencies.

This is also a place where strong IP strategy can help, because your wrapper, your safety layer, and your monitoring approach can become part of your defensible moat.

Where product teams start: a simple adoption path

Start with one feature, not the whole company

Many teams stall because they try to cover everything at once.

Pick the AI feature that matters most to customers or carries the highest risk. Build your AIMS habits there first.

When it works, you expand the approach to other features.

Build a “thin” version that still works

You do not need perfect templates.

You need a clear scope, clear ownership, a simple risk review, basic controls, and basic monitoring.

Even this thin version puts you ahead of many startups, and it sets you up to mature without painful rewrites.

Keep it tied to product outcomes

If the process does not help with shipping and trust, teams will ignore it.

So always connect it to real outcomes: fewer incidents, smoother launches, faster customer answers, and stronger enterprise readiness.

That is how you get buy-in without forcing it.