Invented by Alexander Freytag and Christian Kungel, Carl Zeiss AG

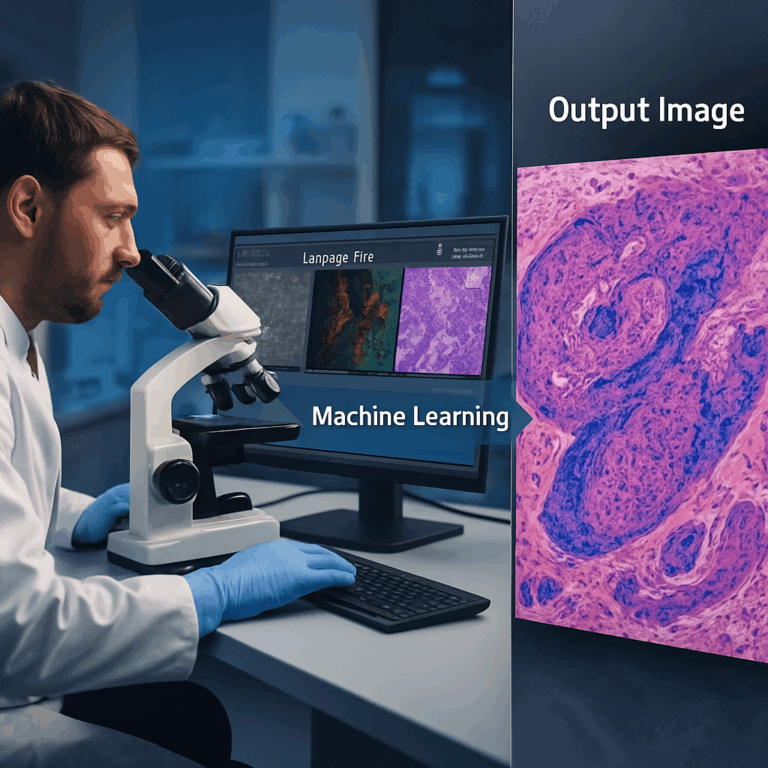

Virtual staining is changing how we see and understand tissue samples. The patent at hand uses machine learning to create digital versions of chemical stains. In this article, we will explore the background and market context, look at the science and prior art, and then examine the new invention and its key innovations. Let’s unpack how this technology can make tissue analysis faster, cheaper, and more flexible for labs, researchers, and clinicians.

Background and Market Context

Pathology is the heart of disease diagnosis. When someone is sick, a doctor might take a tissue sample from their body. This piece of tissue is then sent to a laboratory where it gets processed so that doctors and scientists can look at it under a microscope. But just looking at the tissue isn’t always enough. Most of the time, the tissue is almost see-through, and important details are hard to spot.

To make things clearer, lab workers use special chemicals called stains. These stains color parts of the tissue in ways that help doctors see the shapes, sizes, and types of cells. The most widely used stain is H&E, or hematoxylin and eosin. Hematoxylin turns cell nuclei blue-purple, and eosin turns the cytoplasm and surrounding tissue pink. Other stains can show specific features, like proteins that might mean cancer or infection is present. Examining stained tissue under a microscope in this way is called histopathology, and it is key for finding out what’s wrong and how to treat it.

But staining tissue the old way is slow and can be expensive. Each stain needs its own careful steps. If a doctor wants to see several stains on the same tissue, they usually need to cut many slices from the original block—one for each stain. This is wasteful and takes a lot of time. Also, each stain can only show so much. Sometimes, the tissue gets damaged or lost during the process.

With more people needing fast answers and with the rise of personalized medicine, labs are under heavy pressure to work faster and save money. Digital technology, like slide scanners and digital microscopes, is already changing the field. Now, computers and artificial intelligence (AI) are being used to help spot patterns in tissue images, making diagnosis smarter and faster. One of the new frontiers is virtual staining. Imagine if you could get the benefits of chemical stains without using any chemicals or damaging the tissue. That’s what this new patent aims to do.

Virtual staining uses digital images and AI to create pictures that look just like stained tissue, but the stains are made by a computer. This is a big deal for researchers, hospitals, and even drug companies. It means less waiting, less waste, and new ways to understand diseases. As digital pathology grows, the market is hungry for better, faster, and more flexible tools. Virtual staining is a step in that direction.

Scientific Rationale and Prior Art

The science behind tissue staining is simple: different stains stick to different parts of a cell or tissue. Some stains show up only under certain types of light, like ultraviolet. Others bind to proteins, DNA, or even sugars. This lets doctors and scientists pick the right stain for the right job.

Traditionally, a tissue sample is cut into thin slices, placed on slides, stained, and then looked at under a microscope. Over time, new stains and techniques have been invented, like immunohistochemistry, which uses antibodies to target specific cell parts. Each advance lets doctors see more details and make better decisions. But each also adds time, cost, and complexity.

Digital scanning has started to replace old microscopes in many labs. These scanners turn tissue slides into high-quality digital images. Once the tissue is digital, AI can help analyze it. One of the first uses of AI in pathology was to spot cancer cells. Then, researchers started thinking: if a computer can spot cancer, why can’t it create stains too?

A big step in this direction was the idea of virtual staining. Instead of using chemicals, you take a digital image of unstained tissue and use machine learning to predict what it would look like if stained. Earlier patent publications, such as WO 2019/154987 A1, described using AI to make images that closely resemble traditional stains. This saves time and money, and a virtual stain can sometimes bring out features that are hard to spot with real stains.

These first-generation virtual staining systems often worked with just one kind of image input and produced a single type of virtual stain. The AI models used were sometimes limited in how much detail they could use, and they often worked only with certain types of microscope images. The results were good, but not perfect. There were also challenges in handling more complex tissue types, or in creating multiple stains from a single sample.

Another challenge was that a tissue slice can usually be chemically stained only once. If you want more than one stain, you need to cut and stain more slices. This limits how much information you can get from a single sample. It also means you can’t always compare stains perfectly, because each stain sits on a slightly different slice of the tissue.

The new patent addresses these gaps. It builds on the idea of using more than one type of image (like combining regular light, fluorescence, or even special spectroscopy methods) and fusing this data in a smarter way. The result is a system that can create one or more virtual stains from many kinds of input images, making it much more flexible and powerful than older methods.

This step forward relies on deep learning, especially neural networks with encoder and decoder branches. These networks “learn” from many examples of matching unstained and chemically stained images, building a model that can turn new, unstained images into one or more virtual stains. The patent also covers how to train these networks, how to handle different imaging methods, and how to make the whole process work in real labs.

Invention Description and Key Innovations

Now, let’s look at what this new patent really does, and why it matters.

At its core, the invention is a method and device for virtual staining of tissue samples using machine learning. The process starts by collecting several sets of images from a tissue sample. These can be from different imaging technologies—such as regular light microscopes, fluorescence, hyperspectral imaging, or even Raman spectroscopy. Each type of imaging highlights different features in the tissue.

The next step is where the magic happens. The images from all these sources are fed into a machine learning system. This system is built around neural networks—special types of AI that can find patterns in complex data. The network has an “encoder” part that pulls out important details from each image, and a “decoder” part that uses those details to create new images. The new images are the virtual stains.

One of the big innovations here is how the different images are combined. The patent describes several ways to “fuse” the data. Sometimes, all the images are combined right at the start. Other times, each image goes through its own encoder, and the results are joined later in the process. This fusion can happen at different points in the network, like at the first layer, inside a hidden layer, or at a special point called the bottleneck. This flexibility means the system can handle many kinds of input and can be tuned for best results.
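The difference between fusing at the start and fusing at the bottleneck can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the patent’s implementation: the names `make_encoder`, `early_fusion`, and `late_fusion` are hypothetical, each “image” is reduced to a short list of numbers, and each encoder is a single hand-weighted linear layer with a ReLU.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Encoder = Callable[[Sequence[float]], Vector]


def make_encoder(weights: Sequence[Sequence[float]]) -> Encoder:
    """Build a toy encoder: one linear layer followed by ReLU (hypothetical helper)."""
    def encode(x: Sequence[float]) -> Vector:
        if any(len(row) != len(x) for row in weights):
            raise ValueError("weight rows must match the input length")
        return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]
    return encode


def early_fusion(inputs: Sequence[Sequence[float]], encoder: Encoder) -> Vector:
    """Concatenate all imaging modalities first, then run one shared encoder."""
    fused_input = [value for modality in inputs for value in modality]
    return encoder(fused_input)


def late_fusion(inputs: Sequence[Sequence[float]], encoders: Sequence[Encoder]) -> Vector:
    """Run one encoder per modality, then concatenate the codes at the bottleneck."""
    if len(inputs) != len(encoders):
        raise ValueError("need exactly one encoder per modality")
    codes = [encode(x) for encode, x in zip(encoders, inputs)]
    return [value for code in codes for value in code]


# Made-up feature values standing in for two imaging modalities.
brightfield = [0.5, 0.25]
fluorescence = [1.0]

shared_encoder = make_encoder([[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]])
early_code = early_fusion([brightfield, fluorescence], shared_encoder)

per_modality = [make_encoder([[1.0, 1.0]]), make_encoder([[2.0]])]
late_code = late_fusion([brightfield, fluorescence], per_modality)
```

In the early variant the encoder sees every modality at once, so it can mix them from the first layer. In the late variant each modality keeps its own encoder, which makes it easier to add or swap an imaging method without touching the others.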

Another key feature is the use of multiple “decoder branches.” What does this mean? It means the system can create several different virtual stains at once, all from the same input images. For example, it could make a virtual H&E stain and a virtual antibody stain at the same time. This is a big step up from older methods that could only do one stain at a time.
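A minimal sketch of the multi-branch idea, assuming the encoder has already produced one shared code: every decoder branch reads the same code and emits its own output. The names `make_decoder` and `decode_all`, the branch labels, and the weights are all hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]
Decoder = Callable[[Sequence[float]], Vector]


def make_decoder(weights: Sequence[Sequence[float]]) -> Decoder:
    """Build a toy decoder branch: one linear layer (hypothetical helper)."""
    def decode(code: Sequence[float]) -> Vector:
        return [sum(w * c for w, c in zip(row, code)) for row in weights]
    return decode


def decode_all(code: Sequence[float], branches: Dict[str, Decoder]) -> Dict[str, Vector]:
    """Feed the same shared code to every decoder branch, one output per stain."""
    return {name: branch(code) for name, branch in branches.items()}


# Shared code, as if produced by the encoder from the input images.
shared_code = [1.0, 2.0]

branches = {
    "virtual_he": make_decoder([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]),
    "virtual_antibody": make_decoder([[0.5, 0.5]]),
}
stains = decode_all(shared_code, branches)
```

Because the encoder runs once and only the branches differ, adding another stain in this sketch means adding one more entry to `branches`.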

Training the AI is also covered in detail. The system learns by comparing its virtual stains to real stained images. The difference between the two is measured as an error, and the network’s parameters are adjusted step by step to make that error smaller. Over many examples, the virtual stains get closer to the real ones. The patent describes ways to handle training when you don’t have real stained images for every type of input, such as using “registration” to line up images from different samples, or freezing parts of the network while others are updated. This makes the training more efficient and flexible.
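Training with a frozen part can be sketched with a two-parameter toy model. This is an illustration under stated assumptions rather than the patent’s procedure: the “network” is just `decoder * (encoder * x)`, the error is a squared difference, the update rule is plain gradient descent, and the names `predict`, `mean_squared_error`, and `train` are hypothetical.

```python
from typing import Dict, Sequence, Set, Tuple

Sample = Tuple[float, float]  # (input value, value from the real stained image)


def predict(params: Dict[str, float], x: float) -> float:
    """Toy network: a one-weight encoder followed by a one-weight decoder."""
    return params["decoder"] * (params["encoder"] * x)


def mean_squared_error(params: Dict[str, float], samples: Sequence[Sample]) -> float:
    """Average squared difference between virtual and real values."""
    return sum((predict(params, x) - t) ** 2 for x, t in samples) / len(samples)


def train(params: Dict[str, float], samples: Sequence[Sample], frozen: Set[str],
          learning_rate: float = 0.1, epochs: int = 200) -> Dict[str, float]:
    """Gradient descent that skips every parameter listed in `frozen`."""
    params = dict(params)  # work on a copy so the caller's values stay intact
    for _ in range(epochs):
        for x, target in samples:
            code = params["encoder"] * x
            error = params["decoder"] * code - target
            grads = {
                "encoder": 2.0 * error * params["decoder"] * x,
                "decoder": 2.0 * error * code,
            }
            for name, grad in grads.items():
                if name not in frozen:
                    params[name] -= learning_rate * grad
    return params


# Made-up training pairs where the real value is three times the input.
samples = [(0.5, 1.5), (1.0, 3.0)]
initial = {"encoder": 1.0, "decoder": 0.0}
trained = train(initial, samples, frozen={"encoder"})
```

Here the encoder weight stays exactly where it started while the decoder weight moves toward the value that reproduces the targets, which mirrors the idea of updating one branch without disturbing the rest.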

The patent also covers how this system can be built into a device. This device can be a computer with special hardware for AI, like GPUs or TPUs, or even a cloud service. The device can take in images, run the AI, and output virtual stains ready for analysis or display.

So, what are the real-world benefits? First, labs can get digital stains in minutes instead of hours—without using chemicals or risking tissue damage. Second, by using more than one imaging method, the system can see more details and create stains that are hard or even impossible to do with chemicals. Third, because the process is digital, the same piece of tissue can be “stained” in many ways, opening up new research and diagnostic possibilities.

For pathologists and researchers, this means more information from every sample, faster turnaround, and lower costs. For patients, it means faster and possibly more accurate diagnoses. The flexibility to use different types of input images and create multiple stains from one sample is a big innovation that could change the workflow in labs everywhere.

From an IP (intellectual property) standpoint, the filing covers not just the idea of virtual staining, but the specific methods for fusing image data, the structure of the neural networks, the training process, and the device itself. The number cited at the end of this article is a published application, so the final scope depends on what is granted. If granted as described, it would give broad protection to the applicant and set a clear pathway for commercial products.

How Does It Work In Practice?

Let’s say a lab wants to check a tissue sample for cancer. They scan the sample with several imaging techniques, such as brightfield microscopy and fluorescence. The images are sent to the AI system. The system processes the images, fusing the data and applying its trained neural network. In just a short time, the lab gets back several virtual stained images—one showing cell structures, another highlighting proteins linked to cancer, and maybe a third showing features only visible with special stains. The pathologist can review all these images right away, without waiting for chemical stains or risking tissue loss.

If needed, the AI can be updated or trained with new types of stains as science advances. The system is also flexible enough to work with different kinds of tissues (human, animal, or plant), and with samples prepared in different ways.

Another strong point is the use of skip connections in the neural network. These connections help the system keep important details from the input images, making the virtual stains sharper and more accurate. This is especially helpful when trying to spot tiny features or when working with very complex tissues.
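Why skip connections help can be shown with a toy one-dimensional “image”. This sketch assumes a fixed encoder that halves the resolution, a fixed decoder that doubles it again, and a hand-picked 50/50 mix for the skip path. A real network would learn these weights, and the function names here are hypothetical.

```python
from typing import List, Sequence


def downsample(signal: Sequence[float]) -> List[float]:
    """Toy encoder step: average neighbouring pairs, halving the resolution."""
    if len(signal) % 2:
        raise ValueError("signal length must be even")
    return [(signal[i] + signal[i + 1]) / 2.0 for i in range(0, len(signal), 2)]


def upsample(signal: Sequence[float]) -> List[float]:
    """Toy decoder step: repeat each value twice to restore the resolution."""
    return [value for value in signal for _ in range(2)]


def reconstruct(signal: Sequence[float], use_skip: bool) -> List[float]:
    """Encode then decode, optionally mixing the input back in via a skip path."""
    decoded = upsample(downsample(signal))
    if not use_skip:
        return decoded
    # Skip connection: full-resolution input features reach the decoder directly.
    return [0.5 * d + 0.5 * s for d, s in zip(decoded, signal)]


# A fine alternating pattern, standing in for tiny tissue features.
fine_detail = [0.0, 1.0, 0.0, 1.0]
without_skip = reconstruct(fine_detail, use_skip=False)
with_skip = reconstruct(fine_detail, use_skip=True)
```

Without the skip path the alternating pattern is averaged away and the output is flat. With it, the pattern survives at reduced contrast, which is the effect the paragraph above describes.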

The patent also describes ways to handle situations where not all types of stains or images are available for every sample. By using smart training routines and registration techniques, the system can still learn effectively, making it practical for real-world use where perfect data is rare.
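As a rough picture of what registration does, the sketch below lines up two one-dimensional intensity profiles by trying every integer shift and keeping the one with the smallest mean squared difference. This brute-force search is a stand-in chosen for clarity; the source does not say which registration method the patent uses, and `best_shift` is a hypothetical name.

```python
from typing import Sequence


def best_shift(reference: Sequence[float], moving: Sequence[float], max_shift: int) -> int:
    """Return the integer shift s that best matches reference[i] to moving[i + s]."""
    best, best_cost = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        # Compare only the positions where both profiles overlap.
        pairs = [
            (reference[i], moving[i + shift])
            for i in range(len(reference))
            if 0 <= i + shift < len(moving)
        ]
        if not pairs:
            continue
        cost = sum((a - b) ** 2 for a, b in pairs) / len(pairs)
        if cost < best_cost:
            best, best_cost = shift, cost
    return best


# Made-up profiles: the second is the first moved two positions to the right.
unstained_profile = [0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0]
stained_profile = [0.0, 0.0, 0.0, 0.0, 1.0, 2.0, 1.0, 0.0]
shift = best_shift(unstained_profile, stained_profile, max_shift=3)
```

Once the shift is known, each position in one profile can be paired with the matching position in the other, which is what makes images from neighbouring slices usable as training pairs.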

Takeaways and Action Steps

For labs, hospitals, and tech companies interested in digital pathology, this patent sets out a clear and flexible framework for virtual staining. Actionable steps include:

– Assess your current imaging equipment: Can it produce the types of images required (brightfield, fluorescence, etc.)?

– Consider how virtual staining could speed up your workflow and save costs on chemicals and labor.

– Look for AI solutions that offer multi-input and multi-output capabilities, as described in the patent.

– Explore partnerships or licensing if you want to use or build on this technology.

– Keep an eye on regulatory guidelines as digital pathology tools become more common in clinical settings.

By adopting virtual staining, you can increase throughput, reduce costs, and open up new avenues for research and diagnosis. The key is to understand the technology and its requirements, so you can make smart investments and stay ahead in a fast-changing field.

Conclusion

Virtual staining is no longer just an idea. It is becoming a practical tool that can transform tissue analysis. This new patent brings together multiple imaging types, smart data fusion, flexible AI models, and a robust training process, with the aim of delivering virtual stains that are fast, accurate, and cost-effective. By allowing labs to create multiple stains from a single tissue sample, it opens up new possibilities for research, diagnosis, and patient care.

As digital pathology continues to grow, innovations like this will be at the center of more efficient, scalable, and insightful lab work. Whether you are a scientist, a pathologist, or a business leader, understanding and embracing virtual staining will be key to staying at the forefront of medical diagnostics and research.

To read the full publication, go to https://ppubs.uspto.gov/pubwebapp/ and search for 20250232443.