AI is moving into hospitals fast. It reads scans, flags risks, guides doctors, and watches patients from afar. But in the U.S., you cannot ship AI for health care just because it “works.” If your software helps diagnose, treat, or prevent disease, or even guides a clinical decision, the Food and Drug Administration (FDA) may treat it as a medical device. And once you are in “device” land, the rules change: you need clear proof, tight controls, and a plan for how your AI behaves over time.

This article is a practical guide to how the FDA thinks about AI in Software as a Medical Device (SaMD). We will talk about how the FDA decides if your AI is regulated, what class your product might fall into, what evidence you must collect, how to handle training data and updates, and how to avoid the common traps that slow teams down. We will keep it simple, direct, and focused on actions you can take.

Also, if you are building AI, robotics, or deep tech in health and you want to protect what you are creating while you build, Tran.vc can help you turn your core tech into real IP assets early. You can apply anytime here: https://www.tran.vc/apply-now-form/

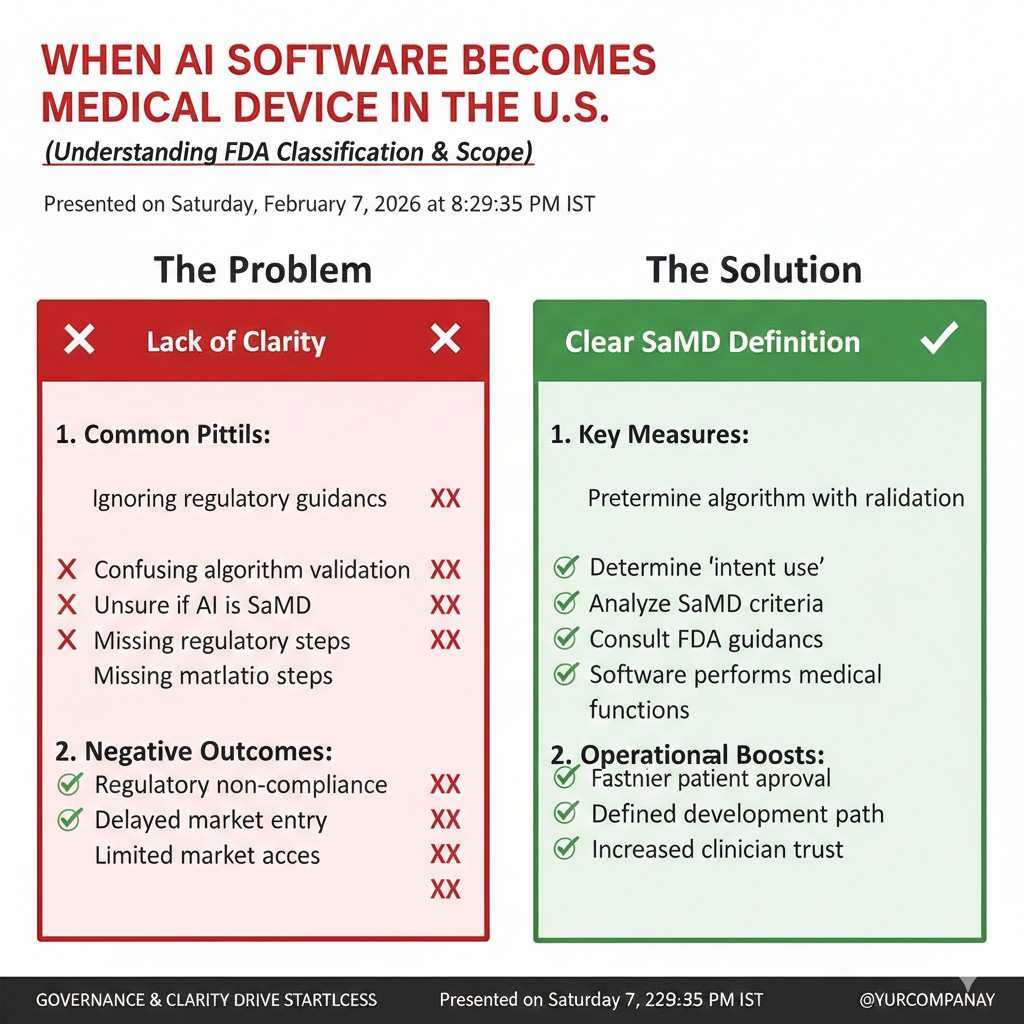

When AI Software Becomes a Medical Device in the U.S.

The FDA does not start with your code

The FDA starts with what your software is meant to do. If your AI helps diagnose a disease, predicts patient risk, picks a treatment, or drives a clinical action, it can fall under FDA device rules. It does not matter if the product looks like “just software.” If it affects patient care in a meaningful way, the FDA may treat it like a device.

“Intended use” is the center of everything

Intended use is the “job” you claim your software does. It comes from your marketing, website, pitch deck, demo scripts, and even what your sales team says. If you imply your AI detects cancer, the FDA can treat it as a diagnostic tool. Many teams get pulled into regulation by claims they did not realize were strong medical promises.

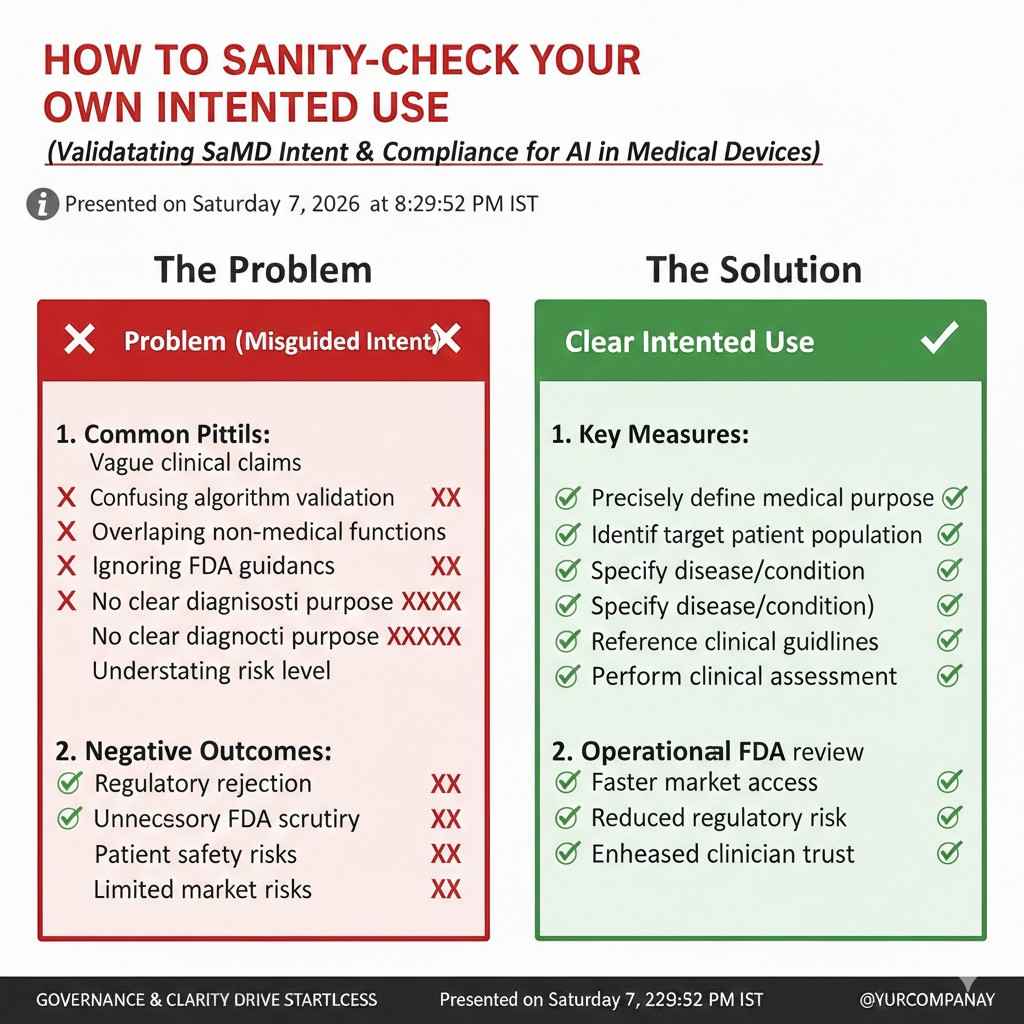

How to sanity-check your own intended use

A simple test is to ask: “If a clinician follows my software output, could a patient be helped or harmed?” If the answer is yes, you are likely in device territory. Another test is: “Would a reasonable person call this a medical purpose?” If you are screening, diagnosing, measuring, treating, or preventing, assume the FDA will care.

The words that quietly trigger regulation

Some words sound harmless but can trigger medical meaning. “Detect,” “diagnose,” “triage,” “treat,” “predict deterioration,” “reduce mortality,” “prevent stroke,” and “clinical-grade” can all raise the stakes. Even “early warning” can imply you are guiding care. If you must use these words, you should be ready to support them with strong evidence.
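One low-effort way to act on this is to scan your own website and deck copy before it goes out. The sketch below is only an illustration: the term list is copied from the paragraph above, while the function name `find_trigger_terms` and the sample sentence are hypothetical. It uses simple word-prefix matching, so it will miss variants like “diagnosis,” and it is a review aid, not a regulatory determination.

```python
import re

# Phrases copied from the paragraph above. Extend this list for your own product.
TRIGGER_TERMS = [
    "detect", "diagnose", "triage", "treat", "predict deterioration",
    "reduce mortality", "prevent stroke", "clinical-grade", "early warning",
]


def find_trigger_terms(copy: str) -> list[tuple[str, str]]:
    """Return (term, matched text) pairs in order of appearance in the copy."""
    hits = []
    for term in TRIGGER_TERMS:
        # \b anchors the start of a word; \w* also catches simple endings
        # such as "detects" or "treatment". Matching is case-insensitive.
        pattern = r"\b" + re.escape(term) + r"\w*"
        for match in re.finditer(pattern, copy, flags=re.IGNORECASE):
            hits.append((match.start(), term, match.group(0)))
    hits.sort()
    return [(term, text) for _, term, text in hits]


if __name__ == "__main__":
    # Hypothetical marketing sentence, used only to show the output shape.
    sample = "Our clinical-grade AI detects sepsis early and helps reduce mortality."
    for term, text in find_trigger_terms(sample):
        print(f"{term!r} matched {text!r}")
```

Every hit is a prompt to ask whether you have the evidence to back the claim yet, or whether softer wording fits what the product really does today.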

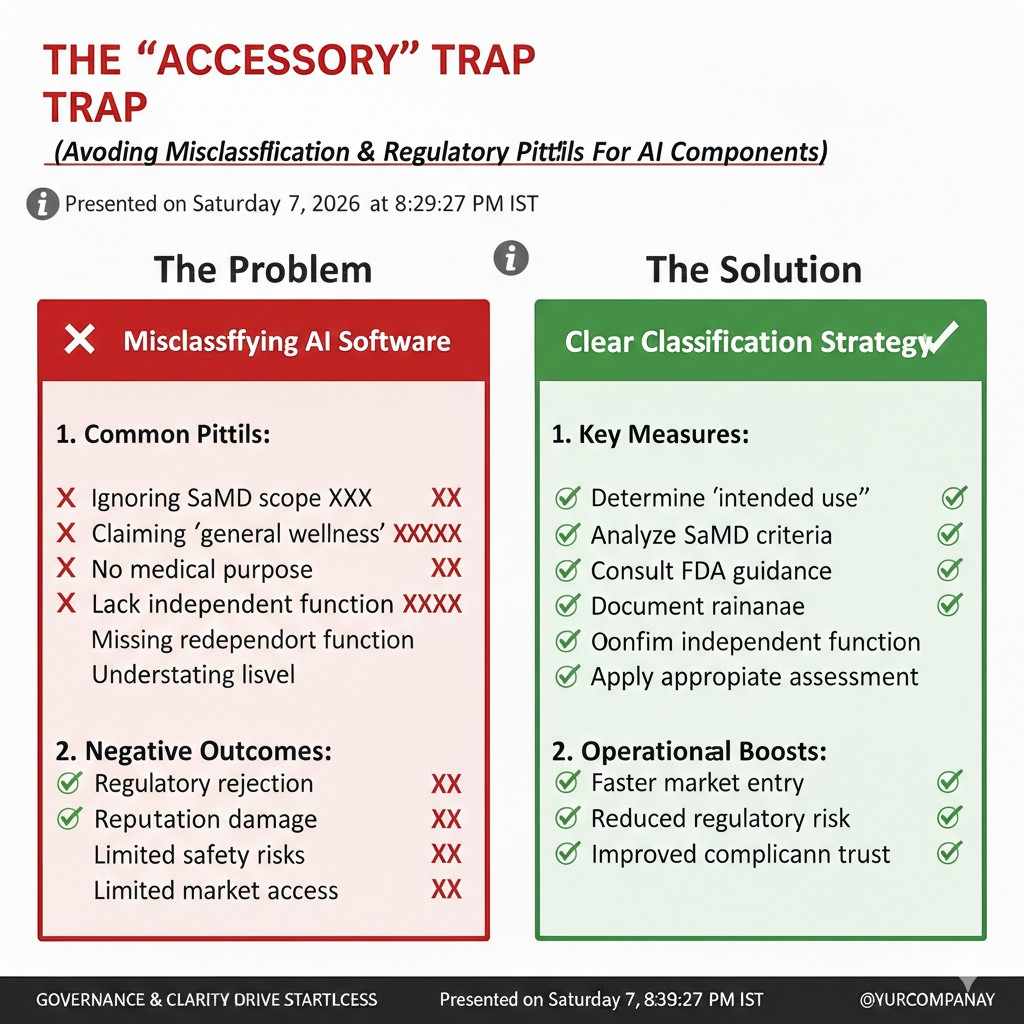

The “accessory” trap

Sometimes your app supports a regulated device, like a monitor or imaging system. If your AI is needed for that device to work as intended, your AI may be treated as an accessory. Accessories can be regulated even if the app itself does not touch the patient. This is common when AI analyzes data coming from a device already on the market.

What Usually Stays Outside FDA Device Rules

General wellness is a narrow lane

Some software is aimed at general wellness, like basic fitness coaching or stress tracking, without making medical claims. If the product does not claim to diagnose or treat a condition, it may avoid FDA device rules. But this lane is narrower than many founders expect, especially in medical settings where users assume clinical meaning.

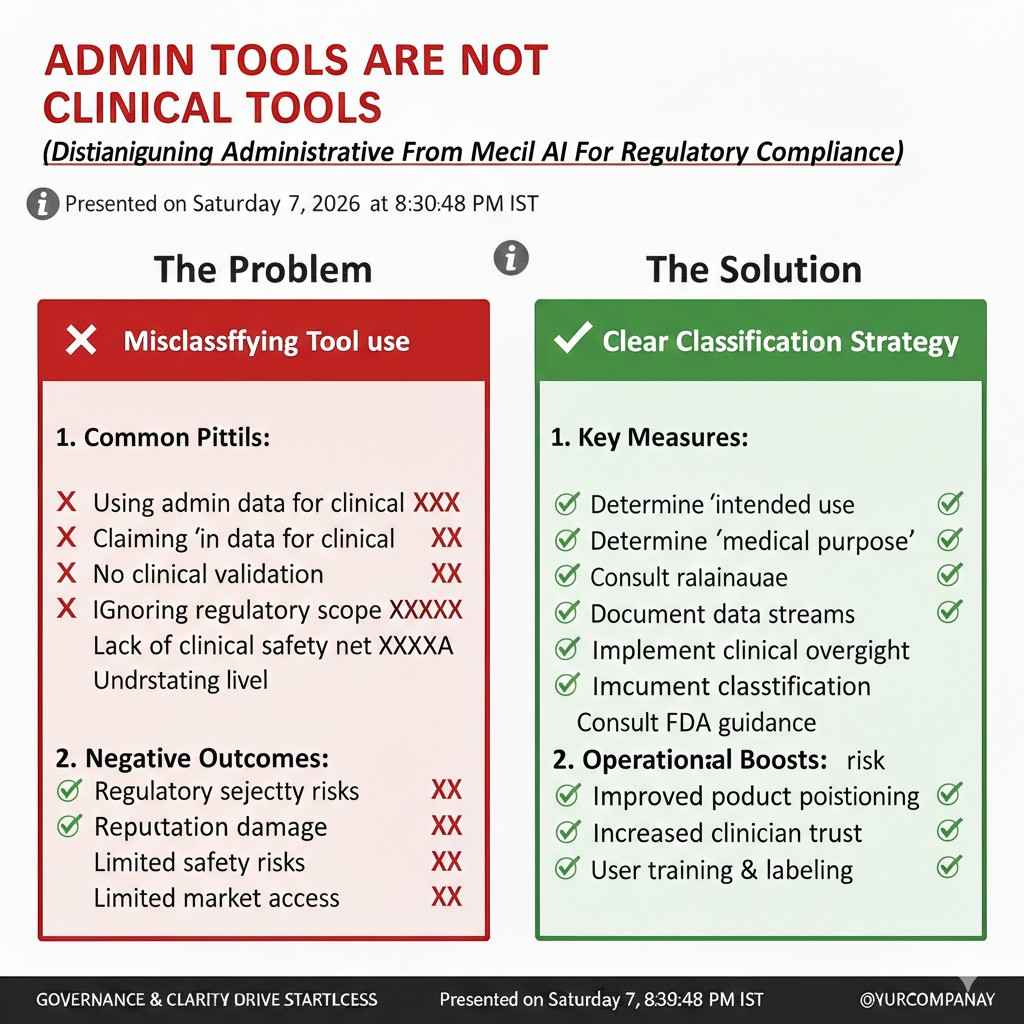

Admin tools are not clinical tools

Scheduling, billing, note formatting, and basic workflow tools usually are not medical devices. The moment your tool starts giving patient-specific medical guidance, you move closer to device status. Many products begin as admin software and later add “smart” features. That is where teams accidentally cross the line.

Research-only does not mean “free pass”

“Research use only” language can help, but it is not magic. If you sell or deploy in real care settings and people use outputs to guide care, the FDA can still view it as a device. If you are truly research-only, keep it in controlled studies, avoid clinical claims, and document how outputs are not used in treatment decisions.

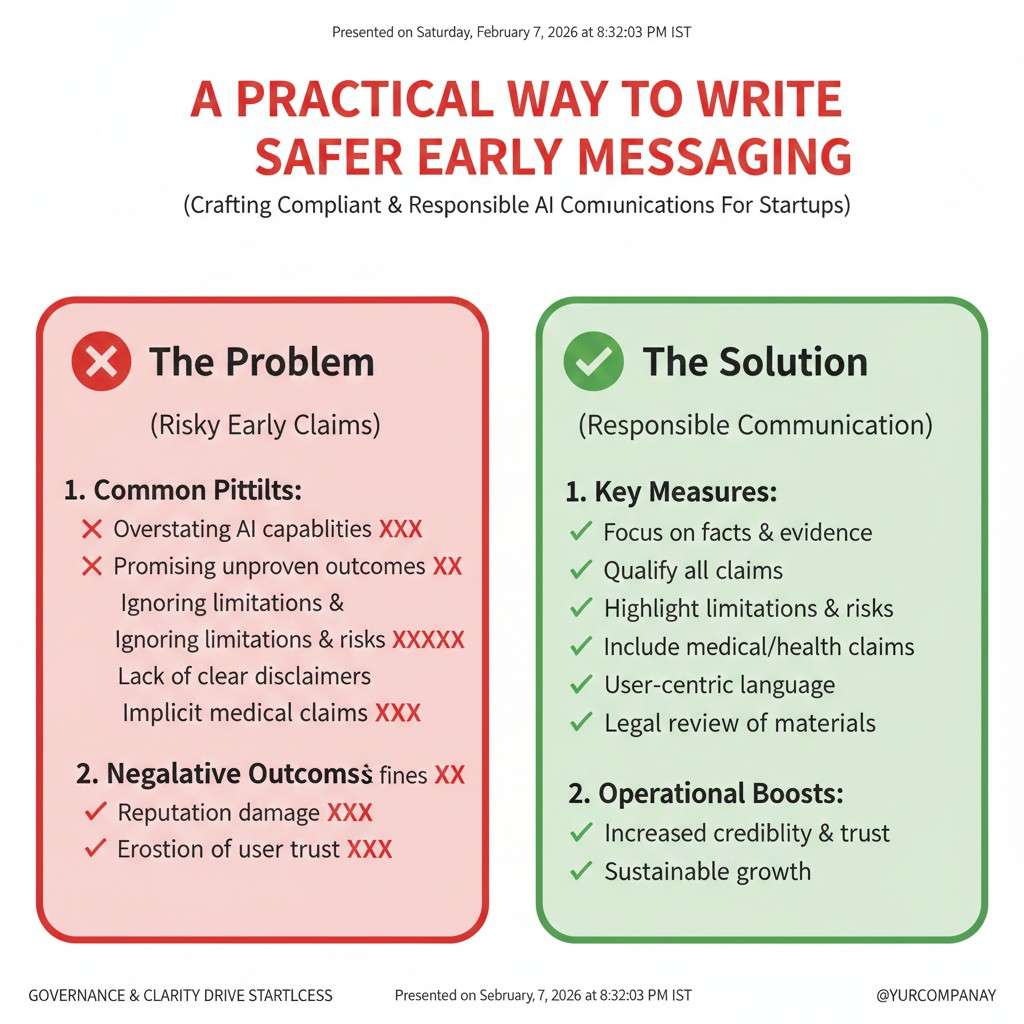

A practical way to write safer early messaging

If you are early, it helps to describe your product as “supporting review” rather than “making the call.” For example, “helps organize information for clinician review” is less risky than “flags disease.” This does not remove FDA interest if the function is truly diagnostic, but it can keep your messaging aligned while you plan the right pathway.

Claims, Labeling, and Marketing: The Fastest Way to Create Risk

The FDA reads your website like a regulator

Founders often think regulation starts when you file. In practice, your public claims can shape how your product is classified before you ever talk to the FDA. A single case study that says “we reduced sepsis deaths” can create a high bar for evidence. If you cannot back it up yet, it can slow everything down later.

Your “use case” wording is not just copywriting

A use case is often treated like a clinical claim. “Helps radiologists find nodules faster” is different from “detects lung cancer.” The first suggests workflow support; the second suggests diagnosis. When you write your site, you are also writing your future regulatory file. Tight language now reduces rework later.

The output format can change the risk

A risk score, a binary decision, and a highlighted region on an image do not get treated the same way. A hard “yes/no” feels like the software is deciding. A ranked list with explanations can feel more like assistance. The more your UI looks like a final clinical answer, the more you should assume you need a full FDA plan.

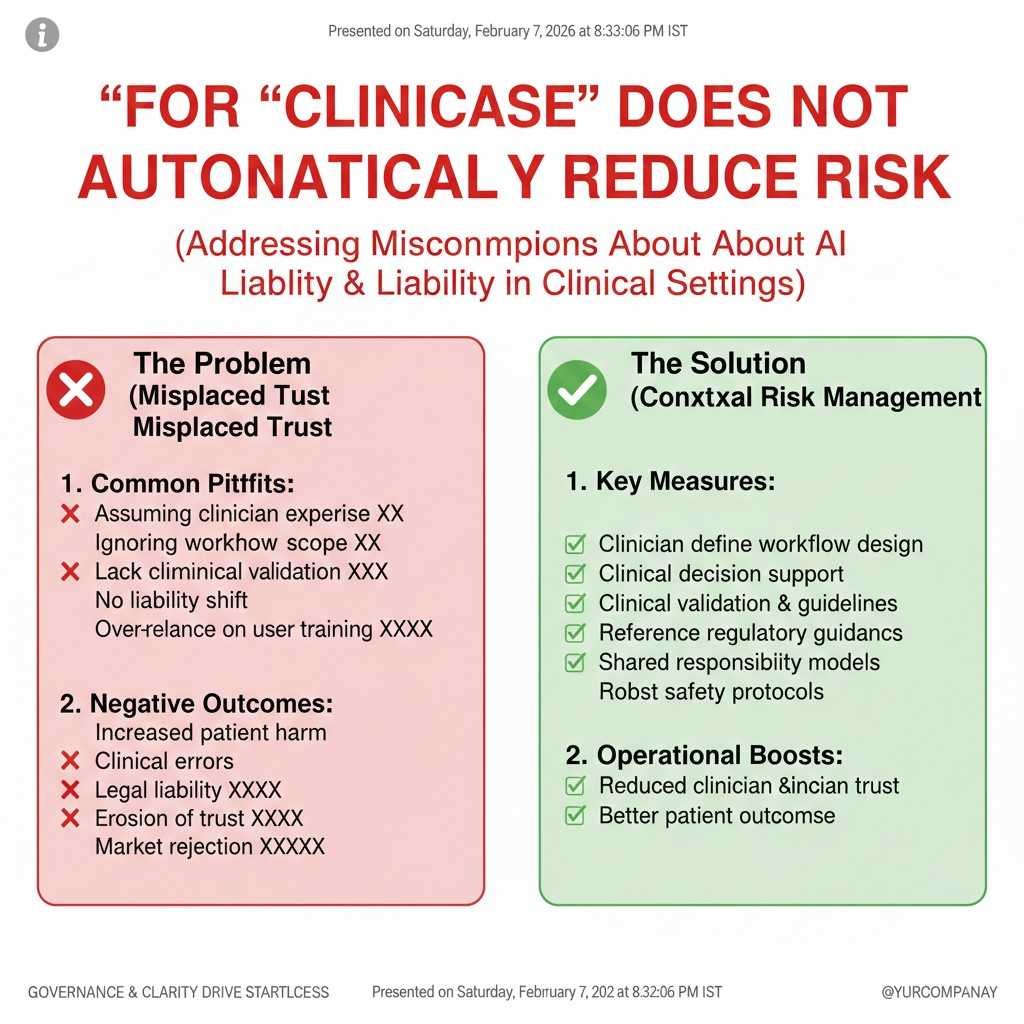

“For clinician use” does not automatically reduce risk

Some teams add “for clinician use only” and assume it solves things. It does not. The FDA cares about what the software does, not only who uses it. Clinician-facing tools can still be high risk, especially if they influence diagnosis or therapy.

Clinical Decision Support and AI: Where Many Teams Get Confused

Why “clinical decision support” is a special area

There is a category often discussed as clinical decision support (CDS). Some CDS functions may be outside active FDA oversight when they are transparent and a clinician can independently review the basis for the recommendation. But many AI systems are not easily explainable in a way that lets a user truly check the reasoning.

The “can the clinician independently review?” test

A key idea is whether the user can understand and verify the reasoning behind the output. If your software points to published guidelines and shows the patient facts it used, that can support independent review. If it is a black box model that outputs a risk score without a clear, checkable basis, it may not qualify for lighter treatment.

Imaging AI often does not fit the light-touch path

In imaging, AI may mark lesions or detect patterns humans cannot easily quantify. Even if a radiologist reviews the image, the model’s “reason” may not be fully reviewable. That often pushes imaging AI into regulated device pathways. If you are building imaging AI, plan early for device-level evidence and controls.

What to do if you are not sure where you fall

When the line is blurry, act like a regulated team until proven otherwise. Write down your intended use in one paragraph. Write down what a user does after seeing the output. Then ask: “Could a wrong output lead to harm?” If yes, build your quality and evidence plan as if FDA will regulate you, even if you later find a lighter path.

Risk, Device Classification, and Why It Matters

The FDA uses risk to set the rules

Once your AI is treated as a device, the next question is risk level. In simple terms, higher risk means more evidence and more controls. Risk is not about how advanced your AI is. It is about what happens if it is wrong and how the output drives care.

The three main classes in plain words

Class I is usually low risk and often has lighter requirements. Class II is moderate risk and is common for many SaMD products, including many AI tools. Class III is high risk, often tied to life-supporting, implanted, or very high-stakes uses, and it usually needs the most rigorous review.

Why your classification drives your timeline and cost

Your classification influences which submission route you may need and how much clinical evidence is expected. It also shapes your quality system needs and how strict your change control must be. If you guess wrong, you can waste months building the wrong package. A careful classification view early can save real time.

A founder-friendly way to think about risk

Focus on two things: the clinical role and the clinical setting. If your AI is used to make a diagnosis, that is usually higher risk than a tool that helps sort cases for review. If it is used in emergency care, ICU, or oncology, the stakes rise. If it supports a non-urgent decision with easy human checks, risk can be lower.

Why IP strategy matters even at this stage

Regulatory work forces you to document your method, your data approach, and your system design. That same clarity is often what makes strong patents possible. If you are doing the hard work anyway, you can capture the defensible parts early. Tran.vc helps technical teams turn these “must-do” steps into IP assets that investors respect.

The Main FDA Pathways for AI SaMD

Why the pathway choice is a business decision too

Most founders treat the FDA route as a paperwork choice. In reality, it shapes your product design, your budget, your hiring plan, and your launch timing. If you pick the right route early, you build the right evidence in the normal flow of product work. If you pick the wrong route, you end up rebuilding your study plan and rewriting claims after you already shipped a pilot.

The three routes you will hear about most

For AI SaMD, you will most often hear about 510(k), De Novo, and PMA. They are not just “forms.” Each one is a different level of proof, and a different kind of conversation with the FDA. Your goal is to find the lowest-burden route that still fits your risk level and clinical promise.

A simple way to avoid guessing

Before you choose any route, write down your intended use in one tight paragraph. Then write down your key output, who acts on it, and what action they take. After that, look for a “similar enough” device that is already legally on the market, typically one cleared through 510(k) or granted through De Novo. If one exists and your device is close in purpose and risk, you may have a clearer path. If none exists, your path usually changes.

The 510(k) Route

What 510(k) means in normal words

A 510(k) is built around the idea of “substantial equivalence.” You are telling the FDA: “A device like mine is already legally on the market, and mine is as safe and effective for the same basic job.” The focus is not on proving your product is the first of its kind. The focus is on showing you are not introducing new safety or performance problems compared to a known device.

When 510(k) is a good fit for AI SaMD

If your AI is doing a task that other cleared products already do, 510(k) may be possible. This happens in areas like image triage, certain types of detection aids, measurement tools, or clinical scoring support where similar products exist. Even then, “similar” must be real, not hopeful. The more your intended use or tech approach differs, the harder the 510(k) argument becomes.

The predicate device is the center of the story

The predicate is the earlier device you compare against. If you choose a weak predicate, the FDA can push back and ask for more work. A strong predicate matches on intended use, user type, setting, patient population, and key outputs. Founders sometimes chase the easiest predicate they can find, but then spend months defending why it is comparable. It is often better to pick the best match even if it looks like more work upfront.

What “different technology” can do to your plan

AI models can be treated as different technology compared to rule-based software, even if they solve the same problem. That does not automatically block 510(k), but it can raise questions. The FDA may ask for more performance testing, more robustness checks, and clearer controls to make sure your AI behaves safely across real-world variation.
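A robustness check of this kind often starts with something simple: break performance down by site, scanner, or patient group and see where it drops. The sketch below is a minimal illustration, not an FDA-defined method. The function name `sensitivity_by_group`, the record layout, and the 0.6 threshold are all assumptions made for the example, and the data is made up.

```python
from collections import defaultdict


def sensitivity_by_group(records, min_sensitivity):
    """Compute sensitivity per group and flag groups below a chosen floor.

    records: iterable of (group, truth, prediction), where truth and
    prediction are booleans. Groups with no positive cases are skipped,
    because sensitivity is undefined for them.
    Returns {group: (sensitivity, passes)}.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, truth, prediction in records:
        if truth and prediction:
            true_pos[group] += 1
        elif truth:
            false_neg[group] += 1

    results = {}
    for group in sorted(set(true_pos) | set(false_neg)):
        positives = true_pos[group] + false_neg[group]
        sensitivity = true_pos[group] / positives
        results[group] = (sensitivity, sensitivity >= min_sensitivity)
    return results


if __name__ == "__main__":
    # Made-up records: (site, ground truth, model output).
    demo = [
        ("site_a", True, True), ("site_a", True, True),
        ("site_a", True, False), ("site_a", False, False),
        ("site_b", True, True), ("site_b", True, False),
        ("site_b", False, True),
    ]
    for site, (sens, ok) in sensitivity_by_group(demo, 0.6).items():
        print(site, round(sens, 3), "ok" if ok else "below floor")
```

A gap between groups does not decide your pathway by itself, but it is the kind of finding you want to discover before a reviewer does.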

The De Novo Route

What De Novo is, without the legal jargon

De Novo is used when your device is new enough that no good predicate exists, but the risk level is still not the highest tier. You are asking the FDA to create a new device type with special controls. Think of it as: “This is new, but it can be managed with clear guardrails and evidence.”

Why many AI-first products land here

AI can create truly new functions, like predicting a complication earlier than standard practice, or spotting patterns not formally used in routine care. If you cannot find a legally marketed predicate with the same intended use, De Novo becomes more likely. This route can take more work than a clean 510(k), but it can also set you up to become the predicate others must follow later.

The hidden advantage of being first

If the FDA grants your De Novo request, your device type can become the foundation for future 510(k) submissions by others. That sounds like you are helping competitors, but there is a strategic twist. If you pair your marketing authorization with strong IP, you can still hold the high ground. Many teams forget this. Regulation defines the category; patents protect your unique method inside the category. Tran.vc is built for this moment, helping you lock in defensible IP while you build the evidence.

What “special controls” really means for you

Special controls are the extra requirements the FDA sets for that new category. For AI SaMD, this often touches data quality, performance testing, labeling, and post-market monitoring. If you design your development process to meet these controls from day one, you avoid painful retrofits later.

The PMA Route

What PMA means in plain terms

PMA stands for premarket approval. This is the most demanding path and is used for the highest-risk devices. Here, the FDA expects deep proof of safety and effectiveness, often including strong clinical studies. If your AI is making decisions where mistakes can cause serious harm, PMA may be on the table.

Not every “important” product is PMA

Founders sometimes hear “PMA” and panic. Many valuable AI tools still land in Class II routes. PMA is more likely when the device is critical to patient survival, replaces a key physician judgment without a strong check, or has a very high-stakes intended use. The best way to reduce the chance of PMA is not to weaken your product, but to frame the intended use honestly and build strong clinician-in-the-loop safeguards where appropriate.

If PMA is likely, plan for it early

If you suspect PMA, do not wait until late development to act. Your clinical evidence plan must be shaped early, because you may need prospective data, multi-site studies, and strict controls on how the software is used. Teams that treat PMA like a final hurdle often run out of runway. Teams that plan early can align product, trials, and partnerships from the start.

Pre-Sub: The Meeting Many Teams Skip and Later Regret

The FDA is not a black box if you talk early

A pre-submission meeting, often called a “pre-sub,” lets you ask the FDA questions before you file. For AI SaMD, this is one of the best ways to reduce risk. You can present your intended use, your planned studies, and your performance metrics, and ask if your plan is likely to meet expectations.

What you should bring to a strong pre-sub

Bring a clear description of the software, the clinical workflow, and the exact output. Bring your data plan, including the types of sites and patients represented. Bring your proposed metrics and acceptance targets. The FDA does not want poetry. They want to see that you understand the problem, the risks, and how you will prove performance in the real world.
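To make “metrics and acceptance targets” concrete, it helps to state each target as a number and check it against a confidence interval, not just a point estimate. The sketch below uses the Wilson score interval for a proportion such as sensitivity. Requiring the lower bound to clear the target is a common study-design convention and an assumption here, not a rule taken from this article or from the FDA. The function names and the counts are hypothetical.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half_width, center + half_width


def meets_target(successes: int, total: int, target: float) -> bool:
    """True when the lower confidence bound is at or above the target."""
    lower, _ = wilson_interval(successes, total)
    return lower >= target


if __name__ == "__main__":
    # Hypothetical study: 90 of 100 true cases were flagged by the software.
    lower, upper = wilson_interval(90, 100)
    print(f"sensitivity 0.90, interval {lower:.3f} to {upper:.3f}")
    print("meets 0.80 target:", meets_target(90, 100, 0.80))
    print("meets 0.85 target:", meets_target(90, 100, 0.85))
```

The point of the exercise is that a 90% point estimate on 100 cases does not support a claim of “at least 85%.” Knowing that before the meeting tells you whether you need a larger dataset or a more modest target.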

The most useful questions to ask

The best questions are specific and tied to decisions you must make. For example, you can ask whether your proposed reference standard is acceptable, whether your clinical dataset is representative, or whether your change plan for model updates is reasonable. Avoid vague questions like “Is this good?” Instead, ask “If we do X and Y, does that support the claim Z?”

Pre-sub also helps your investor story

A good pre-sub outcome reduces uncertainty. It shows you are not hand-waving the FDA. It also shows you can run a regulated build like an adult company, not a hackathon. If you are raising, being able to say “We aligned our evidence plan with the FDA” can change the tone of diligence conversations.

How to Choose the Right Pathway Without Losing Months

Start from the clinical claim, not from the technology

Founders love to describe the model. The FDA cares about the medical promise. If you start from the claim, you can map risk, classification, and pathway more cleanly. If you start from the model type, you often drift into details that do not decide the pathway.

Look for the “closest clinical twin”

Instead of asking “Is there any AI like mine?” ask “Is there any device that does the same clinical job?” Sometimes the closest predicate is not AI at all. It might be a software tool that supports the same decision. If the intended use matches tightly, that can still support 510(k) thinking, even if you must do extra work to address technology differences.
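The pathway logic described in this article can be condensed into a rough first-pass triage. The sketch below is a simplification for internal planning only: the two yes/no inputs, the `Pathway` enum, and `suggest_pathway` are hypothetical names, and real decisions depend on details that no two-flag function can capture. Treat the output as a starting point for a pre-sub conversation, not as an answer.

```python
from enum import Enum


class Pathway(Enum):
    K510 = "510(k)"
    DE_NOVO = "De Novo"
    PMA = "PMA"


def suggest_pathway(highest_risk: bool, has_matching_predicate: bool) -> Pathway:
    """First-pass guess at a route, following the article's plain-words rules.

    highest_risk: the intended use sits in the highest risk tier.
    has_matching_predicate: a legally marketed device does the same clinical
    job (matching intended use, users, setting, population, key outputs).
    """
    if highest_risk:
        # Highest-risk devices go through the most demanding route.
        return Pathway.PMA
    if has_matching_predicate:
        # A real "clinical twin" supports a substantial equivalence argument.
        return Pathway.K510
    # New device type, but risk can be managed with special controls.
    return Pathway.DE_NOVO


if __name__ == "__main__":
    print(suggest_pathway(highest_risk=False, has_matching_predicate=True).value)
    print(suggest_pathway(highest_risk=False, has_matching_predicate=False).value)
    print(suggest_pathway(highest_risk=True, has_matching_predicate=False).value)
```

Notice that the inputs are about the clinical job and its risk. Nothing in the function asks what kind of model you trained.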

Decide what you can prove this year

You may want your AI to do five things. But if you can only prove two things well right now, build the regulated claim around the two. You can expand later. Many teams fail because they over-claim early and then cannot support it. The FDA route is often smoother when you take a narrow first claim and earn the right to grow.

Pair regulatory work with IP on purpose

Every time you define your method, your data pipeline, your training approach, your drift monitoring, or your safety logic, you are creating invention points. These can become patents that protect your moat even as the category grows. Tran.vc specializes in helping founders capture those invention points early, before copycats show up. If you are building in AI, robotics, or deep tech for health, you can apply here: https://www.tran.vc/apply-now-form/