If you build AI, you build with data. And the truth is simple: the quality and safety of your model can never be better than the data you feed it. Data governance is just the set of rules you follow so your training data stays clean, fair, legal, and useful. It is not paperwork. It is how you avoid painful surprises right when you are about to ship, raise, or sign a big customer.

In this guide, we will keep it plain and practical. You will learn what rules matter most for training data, what to write down, what to check, and what to fix before a problem becomes expensive.

If you are building AI in robotics, software, or any deep tech area and you want to protect what you are creating, you can apply to Tran.vc anytime: https://www.tran.vc/apply-now-form/

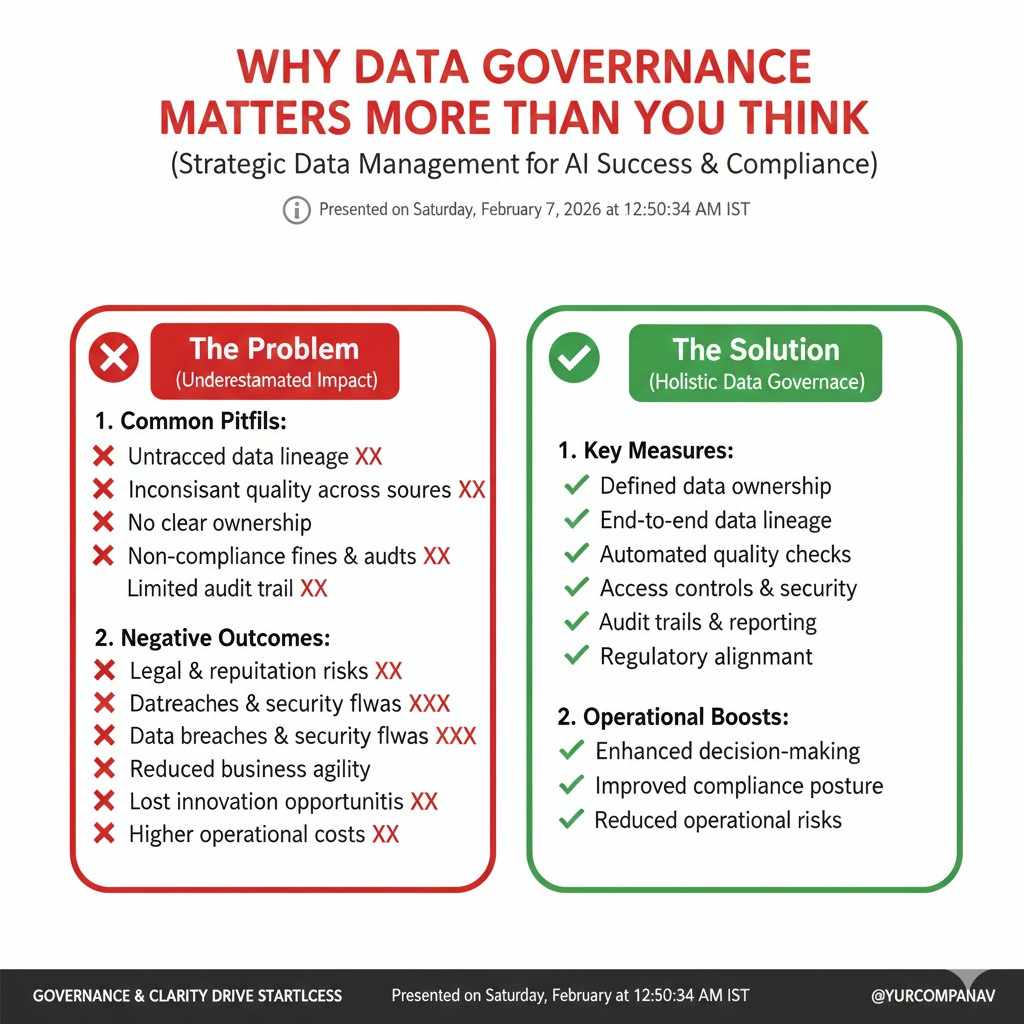

Why data governance matters more than you think

Most teams treat data like fuel. They want more of it, fast. They scrape, buy, label, merge, and train. The model improves. Demos look good. Then something breaks.

A customer asks, “Where did this data come from?” A partner asks, “Do you have rights to use it?” A security lead asks, “Does this dataset have personal data?” A lawyer asks, “Can you prove consent?” A regulator asks, “Can you explain how you trained this model?” A competitor asks none of these things. They just file a complaint after your product starts to grow.

This is the moment many founders wish they had set rules earlier. Not because they love rules, but because they love speed. Data governance is what lets you move fast without stepping on a landmine.

Good governance gives you three big wins.

First, it reduces training risk. You do not want your model to learn the wrong patterns, repeat private details, or fail in edge cases because your data was messy.

Second, it reduces business risk. When you sell B2B, buyers will test your maturity. They may not call it “data governance,” but they will ask for proof that your data pipeline is safe and legal. If you cannot answer, you lose trust.

Third, it builds a moat. When your data is collected the right way, labeled the right way, and tracked the right way, it becomes harder to copy. It becomes an asset. It can support patents, trade secrets, and strong product claims.

This is where Tran.vc can help. Tran.vc supports technical founders with up to $50,000 of in-kind patent and IP services so you can turn your data work and model work into real defensible assets early. Apply anytime: https://www.tran.vc/apply-now-form/

The core idea: training data needs “rules of use” and “rules of truth”

When people say “data governance,” it can sound big and vague. Let us make it simple. Training data needs two kinds of rules.

Rules of use answer: Are we allowed to use this data, in this way, for this purpose?

Rules of truth answer: Is this data accurate, stable, and fit for what we want the model to learn?

Most teams focus on truth and forget use. Or they focus on use and forget truth. Strong teams do both.

Think about a robotics startup training a vision model for warehouse picking. You might have videos from a pilot customer. You might have images captured from cameras on the robot. You might have open datasets. You might also have vendor data.

Rules of use will cover things like: the customer contract, whether workers were notified, whether the footage includes faces, whether you can train a general model or only a customer-specific one, and whether the data can be reused later.

Rules of truth will cover things like: lighting changes, camera angles, label accuracy, missing cases (like reflective packaging), drift over time, and whether the dataset matches real working conditions.

If you only manage truth, you can still get blocked by legal issues. If you only manage use, you can still ship a model that fails in the real world.

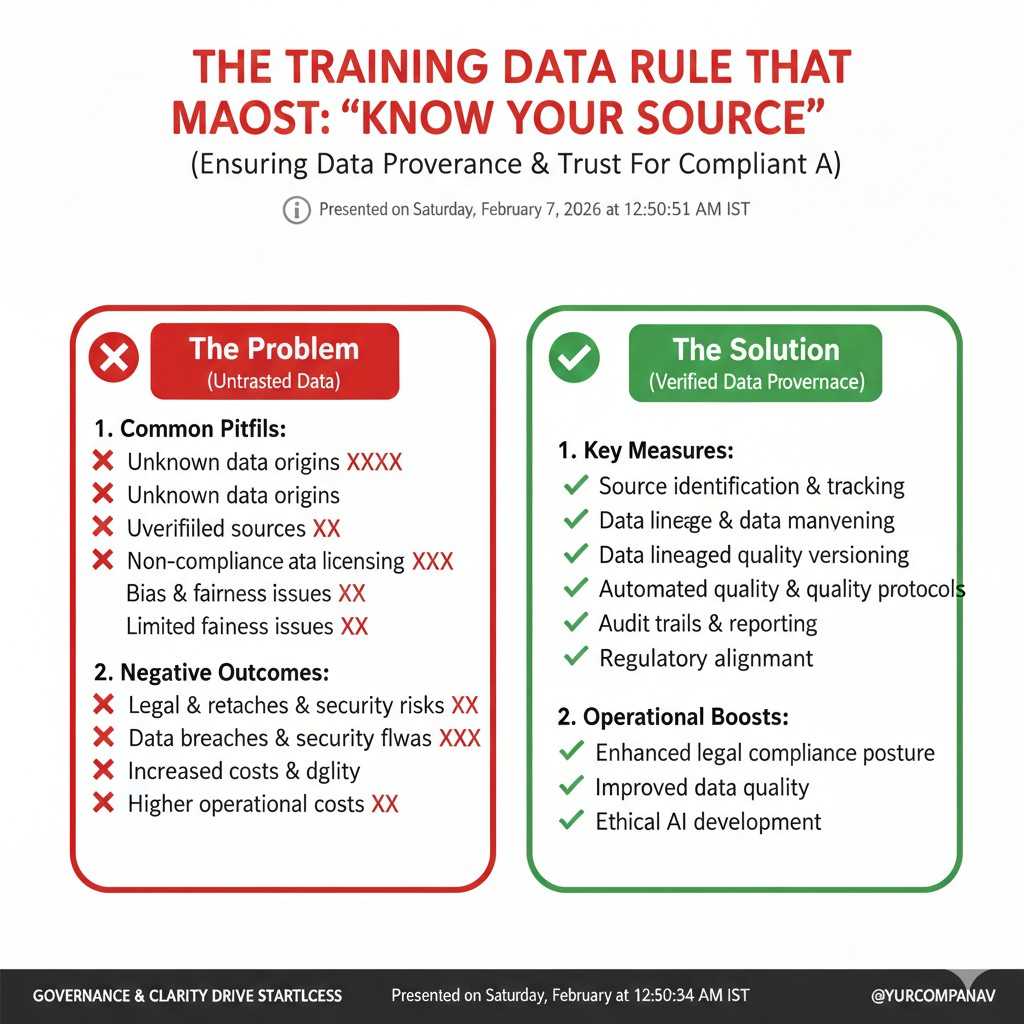

The training data rule that matters most: “Know your source”

Every dataset has a story. If you do not know the story, you do not own the risk.

So your first rule is simple: for every dataset you train on, you should be able to answer, in plain words:

Where did we get it?

Who created it?

What did we pay or promise for it?

What limits come with it?

What does it contain that could harm someone if mishandled?

This is not a long process. It can be a short page per dataset. But it must exist.

When founders skip this step, the same pattern shows up later. Data is stored in a bucket with a name like “final_v3.” No one remembers what “v3” means. Someone new joins and mixes it into the main set. A year later, you cannot prove rights. You cannot prove consent. You cannot even prove what you trained on.

If you want one tactical move today, do this: open a doc and write a “dataset passport” for your top three training datasets. Keep it short. But be clear. If you can do it for three, you can do it for thirty later.

A good dataset passport includes what you would tell a customer if they asked. Not what you would tell yourself. If it feels hard to say out loud, that is a signal you need a fix.

And if you are not sure how to structure this so it also supports your IP plan, Tran.vc can guide you. Apply anytime: https://www.tran.vc/apply-now-form/

Rule two: “Purpose limits are real”

A common mistake is to assume that once you have data, you can use it for anything. That is often not true.

You might have permission to use data to provide a service, but not to train a general model. You might have permission to train a model for one customer, but not to reuse learnings for others. You might have permission to store data for 30 days, but not forever. You might have permission to use anonymized outputs, but not raw inputs.

Even when the law allows something, contracts may not. And in B2B, contracts matter more day to day than abstract legal theory. Your best customer can also be your biggest blocker if your data use terms are unclear.

So your second rule is: every dataset must have a clear purpose label.

That purpose label answers:

What are we allowed to do with it?

What are we not allowed to do with it?

How long can we keep it?

Can we use it to train foundation models, fine-tunes, or only evaluation sets?

Can we share it with vendors?

This may feel like overhead. But it protects your roadmap. It also protects your valuation. Buyers and investors hate unknowns. If your training data story is fuzzy, they assume the worst.

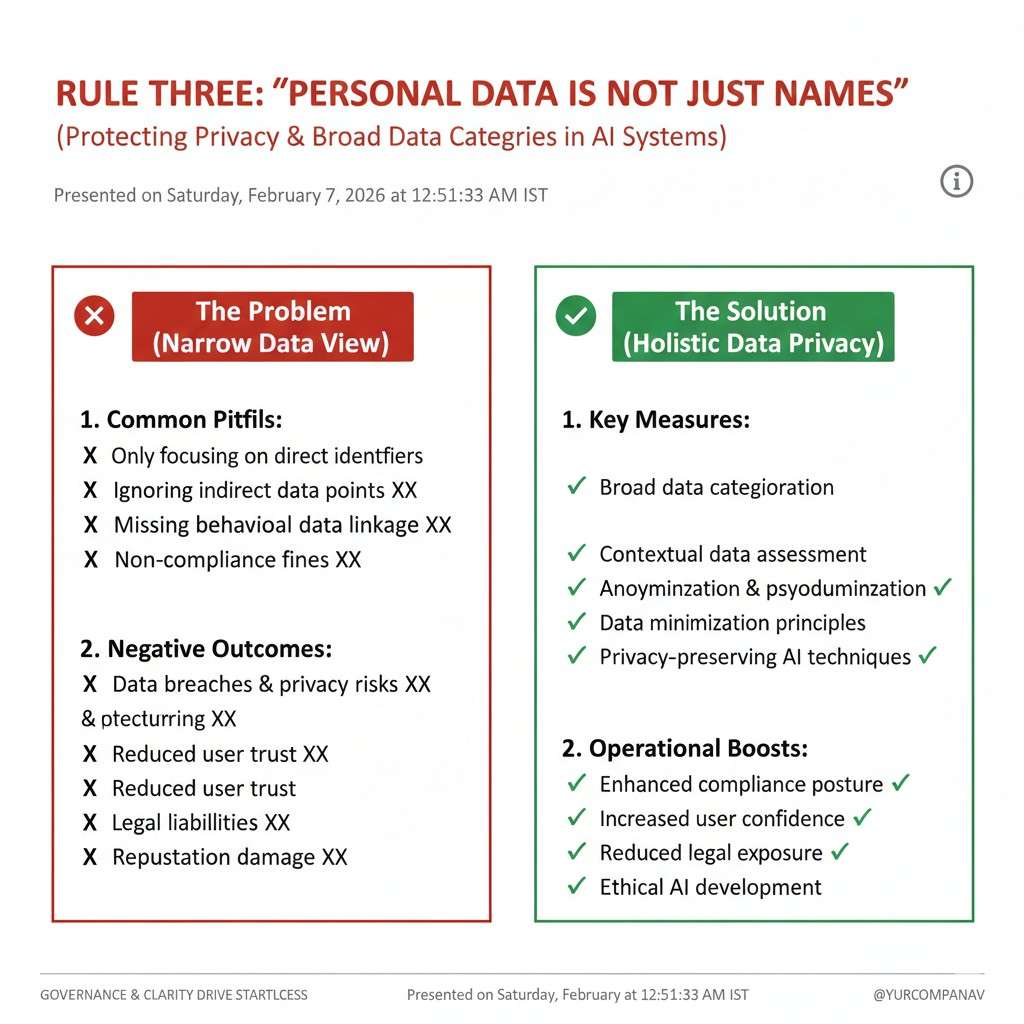

Rule three: “Personal data is not just names”

When people hear “personal data,” they think of names, emails, phone numbers. But training data risk goes far beyond that.

A face in a video can be personal data. A voice clip can be personal data. A license plate can be personal data. A unique device ID can be personal data. A chat log can contain health details or private beliefs. A GPS trace can show home and work patterns.

If you train on data like this without care, you can create models that leak. Even if you do not intend it. Models can memorize. They can repeat details. They can reveal more than you expect when prompted the right way. This is why many enterprise buyers now ask pointed questions about what kinds of data you train on.

So your rule here is: treat personal data like a live wire. Do not touch it without insulation.

In practice, this means you should know, for each dataset: does it contain personal data? If yes, do we truly need it? Can we reduce it? Can we mask it? Can we remove it?

Many teams keep personal data because it is convenient, not because it is needed. Convenience is not a business reason. If you can train without it, do it. If you need it, define strict controls.

This is also where a smart IP plan helps. If your model performance depends on a special kind of data collection process, that process itself can be protected. If you build a unique way to capture, clean, and label data for robotics or industrial AI, that can be a moat. Tran.vc specializes in helping founders turn these practical technical choices into defensible IP. Apply anytime: https://www.tran.vc/apply-now-form/

Rule four: “Consent is not a vibe, it is a record”

Some founders rely on a general feeling that consent is “probably fine.” That is dangerous.

Consent is not just someone saying yes once. Consent depends on what they understood, what they were told, and what you do later. It also depends on local law and contract language.

But you do not need to become a lawyer to be safer than most startups. You need a basic record.

If you collect data from users, you should know what you showed them and what they agreed to. If you collect data from customers, you should know what the contract says. If you collect data from a third party, you should know what the license allows.

The key is not perfection. The key is traceability. When you can trace your data back to a clear permission path, you can answer questions fast. That speed becomes a competitive advantage when deals move quickly.

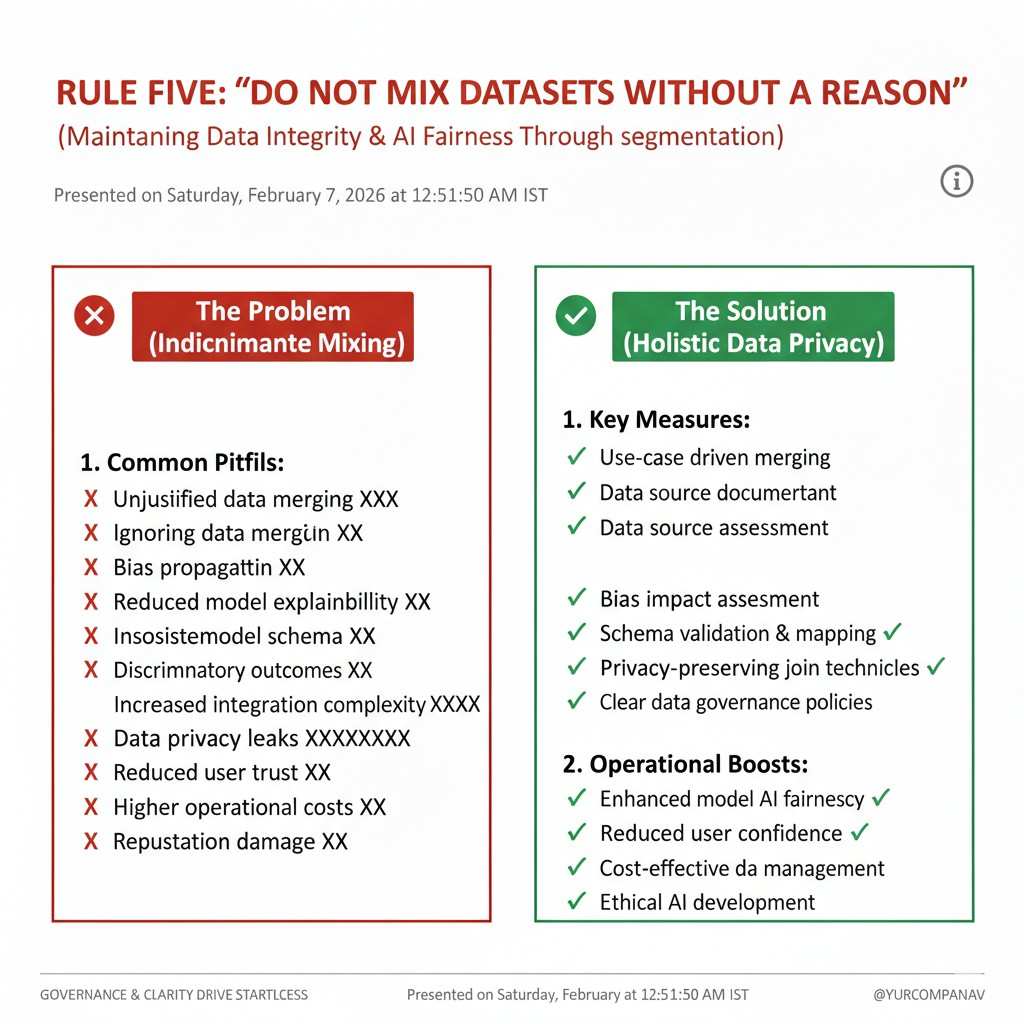

Rule five: “Do not mix datasets without a reason”

Here is a quiet killer: mixing datasets that should never meet.

Maybe you have medical text from one source and general chat data from another. Maybe you have customer data and public web data. Maybe you have European user logs and U.S. user logs. Maybe you have employee test data sitting near production logs. Someone merges them to “get more training signal.” The model improves slightly. The risk jumps a lot.

Mixing creates hidden problems. It can break the rules of use. It can create privacy issues. It can make it impossible to delete data later. It can also create bias or leakage. For example, if one dataset contains labels that reveal answers, your evaluation can become fake. You think you improved accuracy. You just trained on the test.

So the rule is: every merge needs a written reason and a check.

A simple check is: do the purpose limits match? Do the privacy rules match? Does the retention rule match? If not, do not merge.

If you must merge, you can keep separation through tagging and access control, or by training in stages where sensitive data never joins the general pool.

Rule six: “Labeling is part of governance”

Founders often treat labeling as “just ops.” In truth, labeling is policy.

Labels define reality for your model. If labels are inconsistent, your model learns confusion. If labels reflect a biased view, your model learns that bias. If labels are rushed, your model learns noise.

So governance must cover label rules. This includes who can label, how they are trained, what the label guide says, how disagreements are handled, and how quality is checked.

A practical approach is to treat your label guide like a product spec. Keep it living. Update it when you learn. And track changes.

This matters a lot in robotics and industrial AI, where edge cases are everywhere. One person calls a “partial obstruction” a failure. Another calls it a success. The model learns a mushy middle and you get weird field behavior.

You do not need complex systems to start. But you do need a shared definition of what “correct” means.

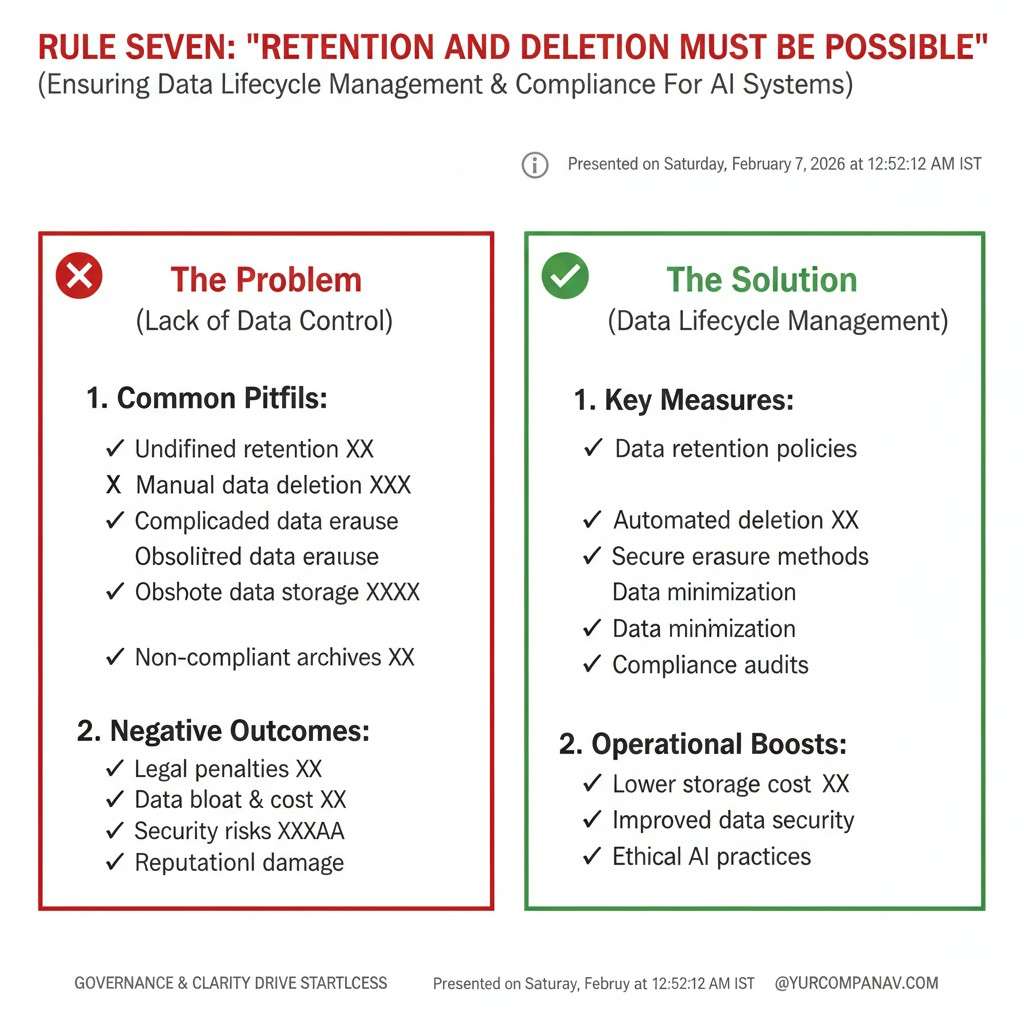

Rule seven: “Retention and deletion must be possible”

Many teams store everything forever because storage is cheap. The hidden cost is not storage. The hidden cost is that you cannot cleanly delete later.

If a customer ends a contract and asks you to delete their data, can you? If a user requests deletion, can you remove their data from training sets? If a dataset license expires, can you stop using it and prove you stopped?

These are not theoretical questions anymore. They come up in real deals.

So governance means designing for deletion. At minimum, this means you do not lose track of where data is used. You know which training runs used it. You know which derived sets include it. You know where it lives.

The teams that win enterprise deals have a calm, clear answer here. They do not say, “We think we can delete it.” They say, “Yes, and here is how.”

Rule eight: “Train-test leakage is a governance issue, not just an ML bug”

If your evaluation set is contaminated, you will make bad product decisions. Your model will look better than it is. You will ship, and reality will punish you.

Leakage can happen in many ways. Duplicate records across train and test. Similar samples that are basically the same. Time leakage where you train on future data. Label leakage where the input includes the answer. It is common in logs and time-series data, and it is common in text.

So governance should include a basic discipline: evaluation data is protected. Access is controlled. It is versioned. It is not used for training. People cannot casually “borrow” it for a quick boost.

This feels strict. It is worth it. A trustworthy metric is one of your strongest internal tools.

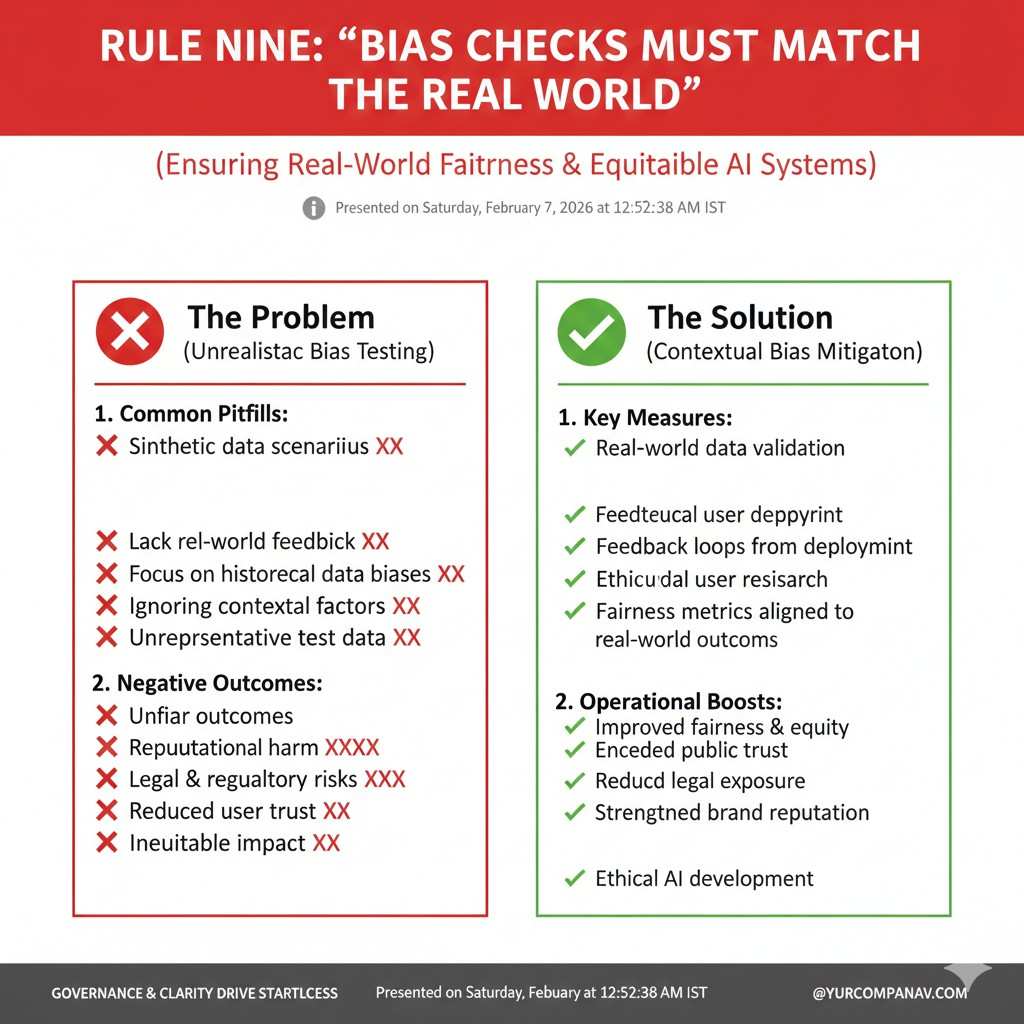

Rule nine: “Bias checks must match the real world”

Bias is often discussed in abstract moral terms. But in B2B AI, bias is also a performance problem and a customer trust problem.

If you train a model for hiring, lending, health, security, or education, bias is a direct risk. But even in robotics, bias can show up as uneven performance. The model works great in one warehouse but fails in another because the data over-represents a single environment.

So your governance should include a basic check: do we have coverage across the environments we claim to support?

This is not about doing a huge academic study. It is about sanity. If you sell to many sites, do you train on many sites? If you sell to many camera types, do you train on many camera types? If you sell to many accents, do you train on many accents?

A simple habit helps: whenever you add a new customer environment, ask, “Is this environment represented in training?” If not, decide whether you need to expand data collection before you promise performance.

Rule ten: “Security is part of data governance”

Training data is valuable. It can include private customer logs, internal process details, and unique signals. If it leaks, you lose trust and may lose deals.

So governance includes basic security controls: who can access raw data, who can export it, where it is stored, how it is encrypted, and what gets logged.

Early-stage teams often skip this because they are small. But small teams are often more exposed. One compromised laptop. One shared folder. One careless vendor. It happens.

You do not need a huge security program to be safer than average. Start with the basics. Limit access. Use separate environments. Track who touched what. Remove access when people leave. Avoid copying data into personal devices.

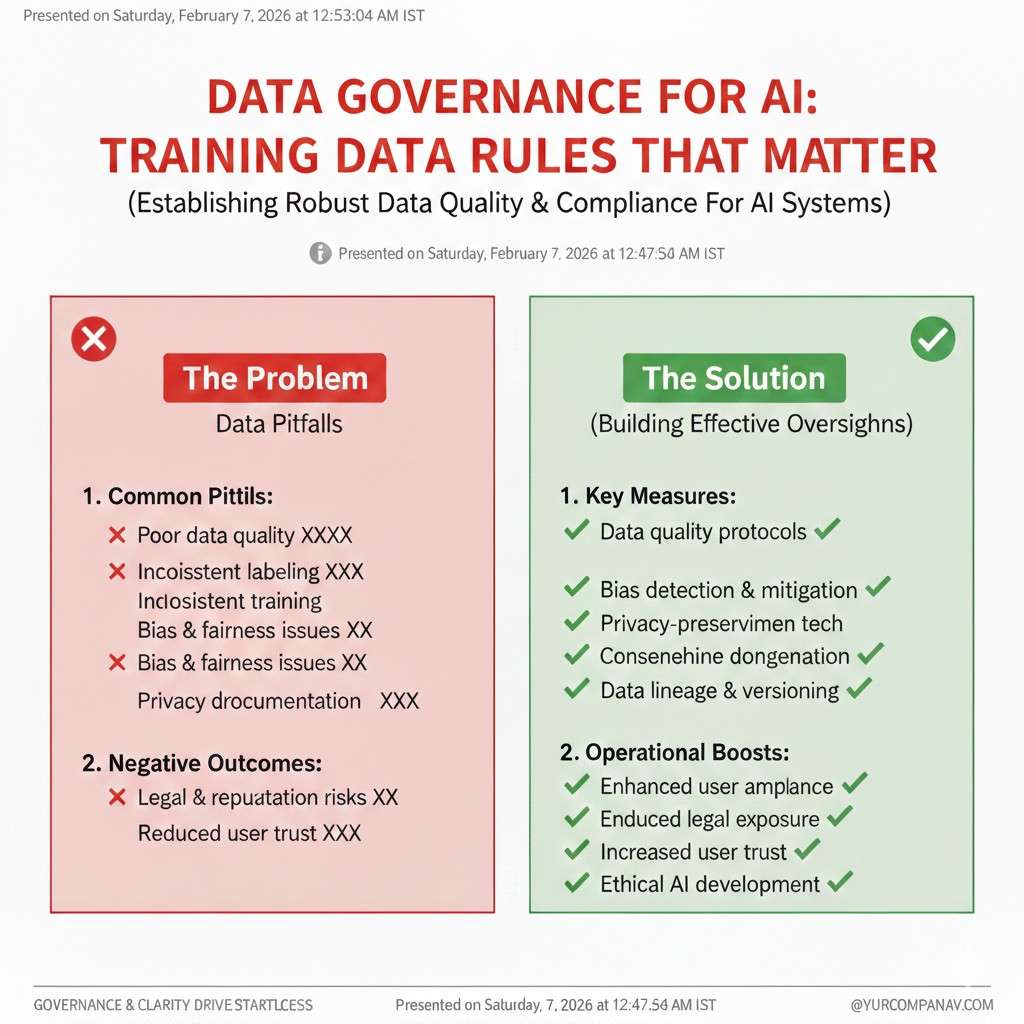

Data Governance for AI: Training Data Rules That Matter

Why data governance matters more than you think

When you build AI, you are building a system that learns from examples. Those examples decide what your model will do in the real world. If your data is messy, the model becomes messy. If your data is risky, the model becomes risky. That is why data governance is not a “big company thing.” It is a startup survival skill.

Most founders only feel this pain when they are close to shipping or selling. A buyer asks where your data came from. An investor asks if you can defend your dataset. A customer asks if you trained on their data. If you cannot answer fast and clearly, trust drops right away.

Good governance also makes you faster. When your team knows what data is allowed, what is clean, and what is approved, you stop wasting time. You avoid training runs that should never happen. You reduce rework, and you keep your momentum.

If you want help turning your data work into defensible IP, you can apply to Tran.vc anytime: https://www.tran.vc/apply-now-form/

The core idea: training data needs “rules of use” and “rules of truth”

Data governance sounds broad, but it is easier when you split it into two parts. First is “rules of use.” This is about permission. Are you allowed to use this data, in this way, for this purpose, for this long? If the answer is unclear, your business is exposed.

Second is “rules of truth.” This is about quality. Does the data match reality? Does it include the hard cases you will face in production? Are labels consistent? Are there hidden gaps that will cause failure when a real customer uses the product?

Teams often focus on only one side. They may have clean data that they do not have rights to use. Or they may have legally safe data that is too weak to train a reliable model. Strong teams hold both sides at the same time.

The training data rules that matter in practice

Data rules are not meant to slow you down. They are meant to stop preventable damage. The best rules are plain, easy to follow, and easy to explain to a buyer. If the rule cannot be explained in simple words, people will ignore it.

A useful rule has a clear owner, a clear check, and a clear outcome. The owner makes sure the rule is followed. The check proves the rule is followed. The outcome says what happens if the rule fails, so the team does not guess under pressure.

Rule 1: Know your source

What “knowing your source” really means

Every dataset has a history. If you cannot explain that history, you cannot control the risk tied to it. “Knowing your source” means you can tell the story of the data from the first moment it was created to the moment it enters training.

This matters because source problems do not show up in a model chart. They show up in contracts, audits, security reviews, and legal disputes. A model can look strong and still be unsafe to ship if the data behind it has a weak origin.

The dataset passport you should write today

A dataset passport is a short record that keeps your team honest. It does not need to be long. It needs to be clear. It should tell you where the data came from, who owns it, what rights you have, and what limits apply.

It should also describe what is inside the data at a high level. For example, does it contain faces, voices, names, customer IDs, location traces, or private documents? If you cannot answer this, you cannot manage privacy, security, or deletion later.

How source clarity becomes a moat

When your data source is clean and well tracked, your dataset becomes an asset. It becomes harder for others to copy because they cannot recreate the same inputs the same way. This is even stronger in robotics and industrial AI, where collecting real-world data is hard and expensive.

This is also where IP strategy matters. The way you collect, clean, and label data can be protectable. Tran.vc helps founders turn these real technical systems into IP-backed advantages. Apply anytime: https://www.tran.vc/apply-now-form/

Rule 2: Purpose limits are real

Why “we have the data” is not enough

Many teams assume that if they can access the data, they can train on it. That is not always true. Permission is often tied to a specific purpose, like providing a service to one customer or improving only that customer’s system.

Purpose limits show up in contracts, licenses, and user terms. They also show up in the expectations of your customers. If your buyer thinks you trained on their data to help other customers, the relationship can break fast.

How to assign a purpose label to every dataset

A purpose label is a simple tag that states what the dataset is allowed to be used for. It answers what training is allowed, what is not allowed, and whether the data can be reused across customers. It also answers how long the data can be stored and who can access it.

This label should be visible where the data lives, not buried in email threads. If the label is not visible, someone will use the data in the wrong way by accident. Most data misuse is not malicious. It is careless, rushed, and avoidable.

How purpose rules protect your roadmap

When your purpose limits are clear, product decisions become easier. You do not plan features based on data you cannot legally use. You do not promise performance improvements that depend on forbidden training sets. You also avoid last-minute rewrites of customer agreements right before a big launch.

Rule 3: Personal data is not just names

What counts as personal data in training

Personal data is anything that can identify a person, directly or indirectly. In AI training, this can include faces in videos, voices in audio, license plates, device IDs, precise location trails, and even detailed logs that show behavior patterns.

Text datasets are especially risky because personal details can hide inside normal sentences. Support tickets, chat logs, and call transcripts often include private data without anyone meaning to include it.

The real training risk: models can memorize

Even if you do not want your model to repeat private details, it can happen. Some models can memorize rare strings or unique records, then reveal them when prompted in a clever way. This is one reason buyers ask about what you trained on and how you prevent leakage.

The risk is not only legal. It is also reputational. A single screenshot showing your model repeating a private detail can damage trust far beyond the people involved.

How to reduce exposure without killing progress

Start by asking one hard question: do we truly need personal data for this model to work? If the answer is no, remove it. If the answer is yes, reduce it. Mask it, blur it, redact it, or transform it so the model learns patterns without learning identities.

If you are building robotics systems, you can often train on task-relevant data that removes faces and personal markers. The goal is not to collect less data. The goal is to collect the right data, in a safer form.