SOC 2 is not a badge. It is a promise.

It tells a buyer, a partner, or an investor: “We handle your data with care. We do what we say we do. We can prove it.”

If you are building an AI startup, you will feel SOC 2 pressure early. A mid-market customer asks for it in a security review. An enterprise deal stalls without it. A bank, hospital, or insurance buyer sends a long form and SOC 2 sits right at the top. Even some seed investors quietly want to know you can pass a real audit later.

But here is the trap: many AI teams “solve” SOC 2 by overbuilding. They add tools they do not need. They write long policies nobody reads. They create process theater that slows shipping. Then they burn weeks, miss product deadlines, and still fail the audit because the basics were not real.

This series will help you get SOC 2 ready without turning your startup into a slow-moving company.

And while we are on the topic of building real moats early: Tran.vc helps AI, robotics, and deep tech founders protect what matters from day one by investing up to $50,000 in in-kind patent and IP services, not just advice. If you want to build leverage before you raise, you can apply anytime here: https://www.tran.vc/apply-now-form/

What “SOC 2 readiness” actually means (in plain words)

SOC 2 is an audit. An independent CPA firm checks whether your controls match what you claim you do. “Controls” are simply the ways you reduce risk: who can access production, how you handle incidents, how you track changes, how you back up data, how you onboard and offboard staff, and how you vet vendors.

SOC 2 is built around the “Trust Services Criteria.” Security is required in every SOC 2 report, so every startup begins there. Many also add Availability. Some add Confidentiality. Fewer add Processing Integrity or Privacy unless the business requires them.

Readiness is not the audit. Readiness is being able to pass when you choose to start.

For an AI startup, readiness means five things are true:

You can clearly explain your system boundaries. (What is in scope, what is out.)

You can show that only the right people can touch customer data.

You can show you are watching your systems and you react to alerts.

You can show you manage change in a controlled way.

You can show you treat vendors like real risk, not an afterthought.

You do not need a giant compliance program. You need a small set of real habits, written down, and followed.

The mindset that keeps you from overbuilding

SOC 2 can feel like a big “project.” If you treat it that way, it becomes a monster. You assign it to one person. They go off and write docs. Then a month later everyone scrambles to “implement” the docs. That is where overbuilding starts.

A better approach: treat SOC 2 as product work.

Not “compliance work.” Product work.

Because the outcome you want is not a report. The outcome you want is faster trust. Shorter security reviews. Less fear in the room when a big customer asks hard questions. And fewer nights awake worrying about a breach.

In product terms, your goal is:

Minimum controls that reduce real risk,

implemented using tools you already have,

that do not slow engineering down,

and that create proof you can show.

Proof matters. In SOC 2, “If it is not written down, it did not happen.” That line scares founders. But it is also freeing. It means you do not need perfection. You need evidence.

Evidence is usually simple. A screenshot. A ticket. A log. A pull request. A configuration export. A Slack incident channel. A vendor contract. A list of users. A record of an access review.

You win by being boring and consistent.

When should an AI startup start SOC 2 work?

Most AI startups start thinking about SOC 2 too late. The deal appears, the buyer says “SOC 2 required,” and suddenly you are compressing months into weeks.

At the same time, some startups start too early. They do SOC 2 before they even know who their customer is. That effort can be wasted.

A practical rule:

Start readiness the moment you can name the type of customer that will care.

If you sell to large enterprises, or to teams that touch regulated data, health data, or payment data, you should start early. If your ideal customer profile (ICP) is small startups buying with a credit card, you can wait longer.

But even if you wait on the audit, the readiness habits are worth starting now because they also reduce risk.

There is one more AI-specific point: your data story will be questioned sooner than you think.

Buyers will ask:

Do you train on our data?

Do you store prompts?

Where do embeddings live?

Who can access production logs?

How do you prevent one customer’s data from leaking into another’s outputs?

How do you handle model provider risk?

SOC 2 does not answer every AI question, but it sets a baseline: it shows that you are not careless with data.

Scope first, tools later

Overbuilding often comes from buying tools before deciding scope.

Scope is the line that says: “This is what we are promising to secure.”

For SOC 2, you define the “system” you are auditing. For an AI startup, that system might include your production app, your API, your core cloud account, your CI/CD pipeline, and the services you use to run the product.

It might also include your model stack, depending on how you do it.

If you are using a third-party model API, your “system” includes how you send data to that provider and what you store with it. The provider itself is usually treated as a “vendor” rather than part of your system, but your connection to it is in scope.

If you run your own models, your model hosting environment is in scope. The data used for fine-tuning can be in scope depending on where it lives and how it is handled.

Here is the key: keep scope as small as you can while still covering the real product buyers will care about.

If you have multiple cloud accounts, limit the audit scope to one for now if possible.

If you have older prototypes, keep them out of production and out of scope.

If you have internal tooling, separate it from the production system.

Scope creep is the silent killer of SOC 2 timelines.

A simple mental test: if a buyer asked you today, “Is this part of the product I am trusting?”, would you say yes? If not, keep it out.

The “thin control layer” approach

To avoid overbuilding, you want a thin control layer. That means you set a small number of rules that apply everywhere, and you make them easy to follow.

Think of it like guardrails, not gates.

Here are the guardrails that matter most for AI startups.

1) Identity is the front door

Many SOC 2 audit findings start with access issues.

So your first big job is identity.

Your goal: every person has one work identity, and all access is tied to it. When they leave, you can shut it off fast.

If you are early stage, you can do this without heavy tools:

Use Google Workspace or Microsoft 365 as your core identity provider.

Turn on multi-factor authentication for all users.

Require strong passwords.

Stop sharing accounts.

Remove personal emails from business systems.

Then connect key systems to SSO where you can. You do not need SSO for every tool. Start with the tools that matter: cloud provider, code repo, customer data stores, observability, ticketing, and your main model provider console.

SOC 2 auditors will ask: “How do you know only the right people can access production?” You want to answer in one breath.

“We use a central identity provider with MFA. Access is granted by role, approved in writing, and removed during offboarding. Production access is limited and logged.”

That sentence becomes real only if you set it up now.
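One way to keep that sentence honest is a small script you run against a user export. The sketch below is only an illustration: the `Account` fields, the `example.com` domain, and the sample users are all assumptions, and a real export from your identity provider will look different.

```python
from dataclasses import dataclass

# Hypothetical company domain; replace with your own.
WORK_DOMAIN = "example.com"


@dataclass(frozen=True)
class Account:
    email: str
    mfa_enabled: bool
    shared: bool = False  # True for a login used by more than one person


def identity_findings(accounts: list[Account]) -> list[str]:
    """Return one plain-text finding per broken identity rule."""
    findings = []
    for acct in accounts:
        if not acct.email.lower().endswith("@" + WORK_DOMAIN):
            findings.append(f"{acct.email}: not a work identity")
        if not acct.mfa_enabled:
            findings.append(f"{acct.email}: MFA is off")
        if acct.shared:
            findings.append(f"{acct.email}: shared account")
    return findings


# Made-up sample data standing in for a real user export.
accounts = [
    Account("ana@example.com", mfa_enabled=True),
    Account("ben@gmail.com", mfa_enabled=True),
    Account("ops@example.com", mfa_enabled=False, shared=True),
]

for finding in identity_findings(accounts):
    print(finding)
```

An empty list is the result you want. Saving the output with a date on it also gives you a simple piece of evidence.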

2) Production access must be rare, intentional, and logged

Many AI teams have a culture of “everyone can jump into prod.” That is normal early. It is also risky.

SOC 2 does not require that only one person has access. It requires control.

A simple approach:

Define what “production” is.

Make a small group that can access it.

Use role-based access in your cloud provider.

Log access by default.

Block direct database changes unless there is a documented emergency.

You can still move fast. You just make access explicit.

For AI startups, production access also includes:

Access to prompt logs.

Access to feature stores.

Access to embedding databases.

Access to model weights.

Access to fine-tuning datasets.

If you do not separate these now, you will later be forced to do it under pressure. Doing it calmly now is cheaper.
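To make the idea concrete, here is a minimal sketch of role-based access to the AI-specific resources listed above, with every decision logged. The role names, resource names, and in-memory log are assumptions for illustration. In practice your cloud provider's access roles and audit logs do this work.

```python
from datetime import datetime, timezone

# Hypothetical roles and the sensitive resources each one may touch.
ROLE_GRANTS = {
    "oncall-engineer": {"prompt_logs", "embedding_db"},
    "ml-engineer": {"model_weights", "fine_tuning_datasets", "feature_store"},
    "support": set(),
}

# In-memory stand-in for a real audit log.
access_log: list[dict] = []


def check_access(user: str, role: str, resource: str) -> bool:
    """Allow access only if the role grants it, and log every decision."""
    allowed = resource in ROLE_GRANTS.get(role, set())
    access_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "resource": resource,
        "allowed": allowed,
    })
    return allowed


check_access("ana", "oncall-engineer", "prompt_logs")  # allowed
check_access("ben", "support", "model_weights")        # denied, still logged
```

The point of the design is that denials are logged too. A denied attempt is often the more interesting record.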

3) Change management that does not slow shipping

This is where teams overbuild. They think “change management” means meetings and approvals and forms.

In modern startups, change management can be your existing engineering workflow.

If you use GitHub, GitLab, or Bitbucket, you already have most of what you need.

Your “control” can be:

All production changes happen through pull requests.

At least one reviewer approves before merge.

CI checks run before merge.

Deploys are automated from the main branch.

Hotfixes are allowed but must be documented afterward.

That is it.

The evidence is the PR history, the CI logs, and the deployment logs.

Auditors like this because it is clear and objective.
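If you want to check your own history before an auditor does, a short script can flag the exceptions. This is a sketch under assumptions: the `PullRequest` fields are hypothetical and would be filled from your code host's export, and it assumes hotfixes may skip review and CI as long as a note is written afterward.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    number: int
    approvals: int
    ci_passed: bool
    is_hotfix: bool = False
    hotfix_note: str = ""  # written after the fact for hotfixes


def change_exceptions(merged: list[PullRequest]) -> list[tuple[int, str]]:
    """Return (PR number, reason) for each merged PR that broke a rule."""
    exceptions = []
    for pr in merged:
        if pr.is_hotfix:
            # Assumption: hotfixes bypass review and CI but need a write-up.
            if not pr.hotfix_note.strip():
                exceptions.append((pr.number, "hotfix not documented"))
            continue
        if pr.approvals < 1:
            exceptions.append((pr.number, "merged without review"))
        if not pr.ci_passed:
            exceptions.append((pr.number, "merged with failing CI"))
    return exceptions


# Made-up sample history.
merged = [
    PullRequest(1, approvals=1, ci_passed=True),
    PullRequest(2, approvals=0, ci_passed=True),
    PullRequest(3, approvals=0, ci_passed=False, is_hotfix=True),
]
print(change_exceptions(merged))
```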

For AI features, you should treat prompt templates and model configuration like code. Put them in version control. Use PRs. Review changes. This is a common gap: teams change prompts in a dashboard with no record. Then a customer sees different output and you cannot explain why.

If your product uses a prompt management tool, make sure it can export history or has audit logs. If it cannot, you may need a simple internal process: “All prompt changes are made in repo, not in the UI.”

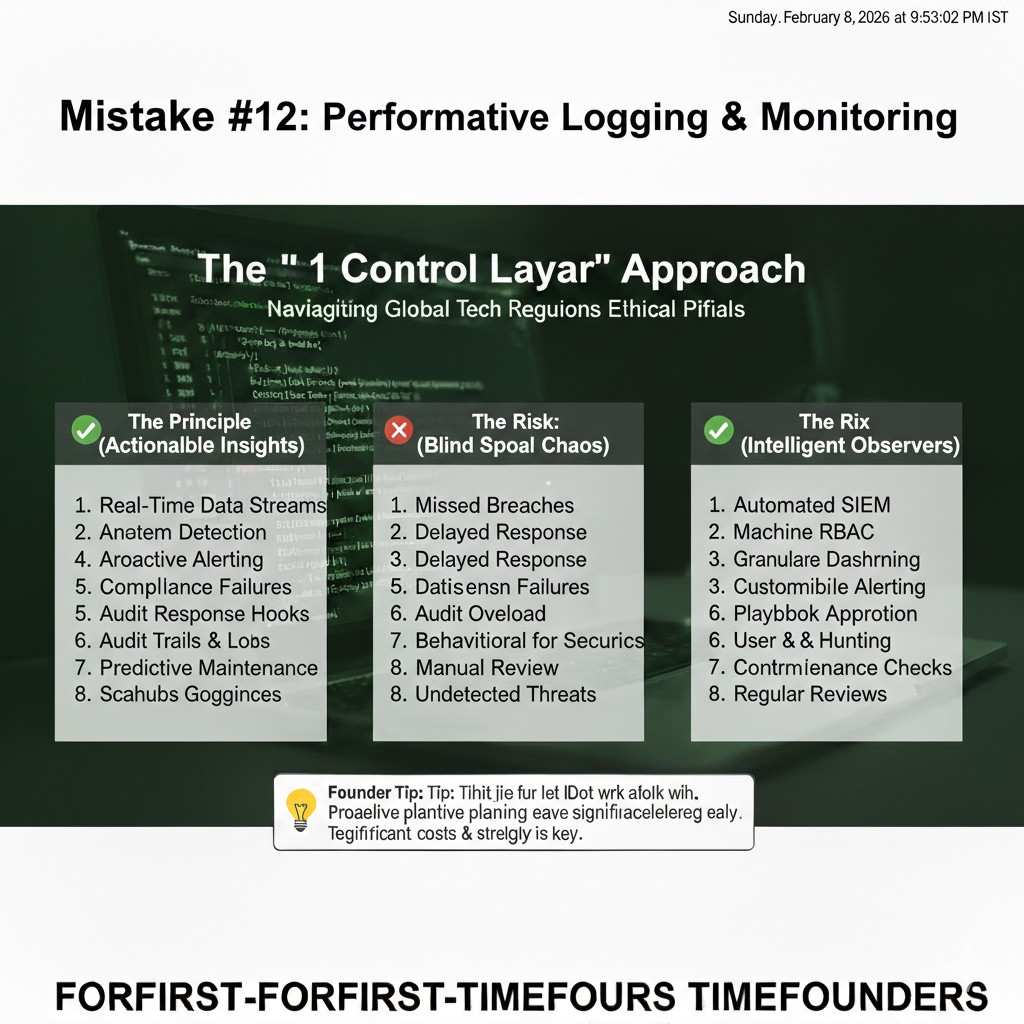

4) Logging and monitoring that is real, not performative

SOC 2 does not require fancy observability. It requires that you can detect and respond.

At minimum you want:

Centralized logs for your application and infrastructure.

Alerts for downtime and major errors.

Alerts for suspicious login events in key systems.

A clear way to open an incident and track it.

Early stage teams often use Slack and a basic monitoring tool. That is fine.

What matters is that you can show:

What you monitor,

what triggers an alert,

and what you do when it fires.

For AI startups, also monitor:

Sudden spikes in token usage (can signal abuse).

Unusual prompt patterns (could signal prompt injection attempts).

Large data exports.

Model provider outages or error rates.
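The token usage check is the easiest of these to start with. Below is a rough sketch that flags any hour whose usage is far above the recent average. The 24-hour window and the 3x factor are arbitrary assumptions, not recommended values, and the usage numbers are made up.

```python
from statistics import mean


def token_spikes(hourly_tokens: list[int], window: int = 24,
                 factor: float = 3.0) -> list[int]:
    """Return indexes of hours whose usage exceeds factor x the trailing mean."""
    spikes = []
    for i in range(window, len(hourly_tokens)):
        baseline = mean(hourly_tokens[i - window:i])
        if baseline > 0 and hourly_tokens[i] > factor * baseline:
            spikes.append(i)
    return spikes


# 24 quiet hours, then one normal hour, one spike, one normal hour.
usage = [1000] * 24 + [1100, 5000, 900]
print(token_spikes(usage))  # [25]
```

A real setup would send the flagged hours to your alerting channel instead of printing them.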

You do not need a huge security operations program. But you need a habit: when something breaks, you record it.

A simple incident record can be a ticket or a doc with:

What happened,

when you found out,

who responded,

what you did,

and what you changed to prevent a repeat.

That single habit makes auditors relax.
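If your incident records live in a tool with an API or export, the five fields above can be modeled directly. The sketch below is one possible shape, with hypothetical field names, plus a helper that tells you which parts of a record are still empty.

```python
from dataclasses import dataclass, fields
from datetime import datetime, timezone


@dataclass
class IncidentRecord:
    what_happened: str
    detected_at: datetime
    responders: list[str]
    actions_taken: str
    prevention_change: str


def missing_fields(record: IncidentRecord) -> list[str]:
    """Names of fields left empty, so a record is not closed half-written."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Made-up example: the fix is done but the follow-up is not written yet.
record = IncidentRecord(
    what_happened="Model provider returned errors for 20 minutes",
    detected_at=datetime(2025, 3, 4, 14, 5, tzinfo=timezone.utc),
    responders=["ana"],
    actions_taken="Failed over to the backup provider",
    prevention_change="",
)
print(missing_fields(record))  # ['prevention_change']
```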

5) Vendor risk: the hidden SOC 2 iceberg for AI

AI startups lean on vendors more than most companies. Model APIs. Vector databases. Data labeling. Cloud GPUs. Analytics. Customer support tools. Email tools. Billing. Every one of these touches some data.

SOC 2 will force you to face this.

The simplest workable approach:

Make a short list of vendors that touch customer data or are critical to uptime.

For each vendor, store:

what data they get,

why you use them,

their security posture (often their own SOC 2 report),

and your contract terms.

You do not need to assess every tool. Start with the ones that matter.

If a vendor has a SOC 2 report, keep it. If they do not, have a simple plan: limit what data they get, or replace them when needed.
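A spreadsheet is enough for this list, but the logic is simple enough to sketch in code. Everything here is illustrative: the vendor names are invented, and the field names are assumptions that mirror the items above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    data_received: str
    purpose: str
    has_soc2_report: bool
    contract_ref: str
    touches_customer_data: bool
    critical_to_uptime: bool


def in_scope(vendor: Vendor) -> bool:
    """A vendor matters if it sees customer data or can take you down."""
    return vendor.touches_customer_data or vendor.critical_to_uptime


def needs_plan(vendors: list[Vendor]) -> list[str]:
    """In-scope vendors without a SOC 2 report: limit their data or replace."""
    return [v.name for v in vendors if in_scope(v) and not v.has_soc2_report]


# Invented vendors for illustration only.
vendors = [
    Vendor("ExampleModelAPI", "prompts and outputs", "inference",
           True, "MSA-001", True, True),
    Vendor("ExampleLabeling", "sampled user inputs", "data labeling",
           False, "MSA-002", True, False),
    Vendor("ExampleDesignTool", "none", "marketing assets",
           False, "click-through terms", False, False),
]
print(needs_plan(vendors))  # ['ExampleLabeling']
```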

For model providers, buyers will ask detailed questions. SOC 2 will not fully cover “model behavior,” but it helps you show that you manage vendor risk in a structured way.

6) Data handling: answer the training question clearly

This is the most emotional area for buyers. They worry their data will become someone else’s advantage.

So you must define your data stance in simple words, and follow it.

Decide:

Do you store prompts and outputs? For how long?

Do you log user inputs by default?

Do you let customers opt out?

Do you train on customer data?

Do you fine-tune on customer data?

Do you use human review on customer data?

Then write it down on your security page and in your contracts.

SOC 2 does not force a specific stance. It forces consistency.

If you tell customers “we do not train on your data,” you must ensure your systems and vendors align with that. If you use a model API, you must know what settings and contract terms apply.

This is where many AI startups trip: marketing says one thing, engineering does another, and the vendor settings are unclear.

A readiness step that pays off fast is to map data flows:

Where does user input enter?

Where is it stored?

Where is it sent?

Where is it logged?

Who can see it?

How is it deleted?

You do not need a fancy diagram tool. A simple doc is enough.

This data map becomes the backbone of your security answers and your audit.
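If you prefer to keep the map next to your code, it can be a small data structure. The sketch below assumes a hypothetical `DataStop` record for each place data lands and flags the two questions teams most often leave blank: who can see it, and how it is deleted. The stop names are invented.

```python
from dataclasses import dataclass, field


@dataclass
class DataStop:
    name: str   # where the data is at this step
    kind: str   # "entry", "storage", "vendor", or "log"
    readers: list[str] = field(default_factory=list)  # roles who can see it
    deletion: str = ""                                # how data is deleted here


def gaps(flow: list[DataStop]) -> list[str]:
    """List the unanswered questions for each stop in the data flow."""
    open_questions = []
    for stop in flow:
        if not stop.readers:
            open_questions.append(f"{stop.name}: who can see it?")
        if not stop.deletion:
            open_questions.append(f"{stop.name}: how is it deleted?")
    return open_questions


# Invented flow for illustration.
flow = [
    DataStop("API gateway", "entry", ["oncall-engineer"], "not stored"),
    DataStop("Prompt log", "log", ["oncall-engineer"]),
    DataStop("Model provider", "vendor"),
]
for question in gaps(flow):
    print(question)
```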

And it also becomes part of your moat story. If you build clean data boundaries early, you can scale trust faster.

That is the same principle Tran.vc focuses on with IP: build the asset early, when it is easiest. If you want help making your tech defensible through patents and strong IP strategy while you build, apply anytime: https://www.tran.vc/apply-now-form/

What to do in the next 14 days (without turning it into a “compliance project”)

Instead of a long checklist, focus on a two-week sprint that creates momentum.

Week one is identity and access.

Week two is evidence and documentation.

In week one, your goal is to reduce “unknown access.”

You ensure MFA is on.

You ensure every tool uses work email.

You remove shared accounts.

You tighten production access.

You confirm you can offboard someone in under one hour.

In week two, your goal is to create proof.

You write down your system scope in one page.

You write down your access rules in one page.

You write down your change workflow in one page.

You write down your incident process in one page.

You create a vendor list that covers the critical few.

Each page should be short. If it takes more than a page, you are likely overbuilding.

The main point is that you are creating a simple story:

“This is our system. These are the risks. These are the controls. Here is the proof we follow them.”

That is readiness.

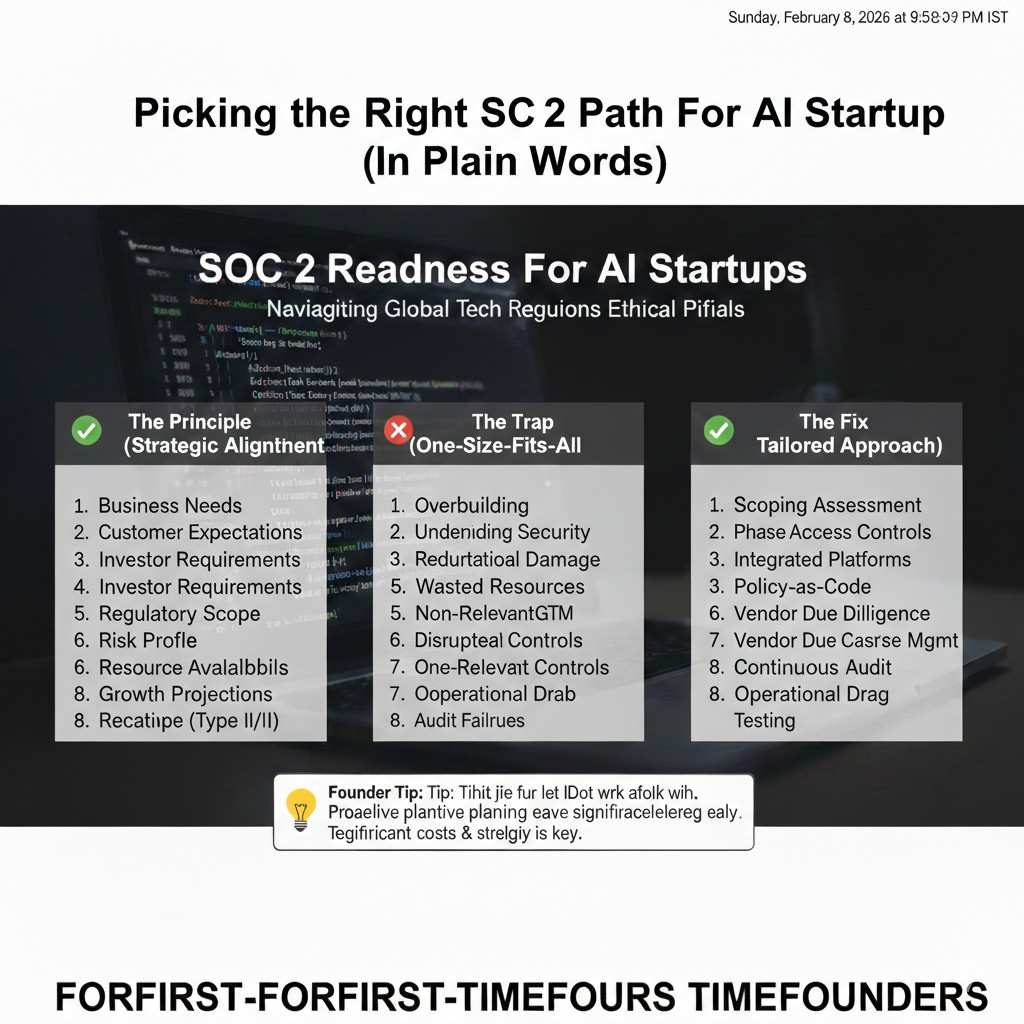

Picking the right SOC 2 path for an AI startup

You do not need to “do SOC 2” in the biggest way possible. You need the version that matches where you are today, and what buyers will ask for in the next six to twelve months.

Most AI startups get pushed into SOC 2 because one serious customer wants proof you are safe. That customer usually does not care if you are perfect. They care that you are consistent, and that an outside party can confirm it.

If you pick the wrong path, you end up building controls for a company you are not yet. That is the fastest way to slow down product and lose deals anyway.

SOC 2 Type I vs Type II in simple terms

Type I is a snapshot. It says your controls are designed well on a specific date. It is proof you set things up.

Type II is a movie. It says your controls worked over a period of time, often three to twelve months. It is proof you did what you said you would do, again and again.

If a buyer says “we need SOC 2,” they often mean Type II. But some will accept Type I if you are early and moving fast. The trick is to ask, early in the sales cycle, what they truly require.

A practical approach is to become Type I ready first, then move into Type II when revenue and deal size justify it. That way you build the base once, and you do not scramble later.

Which Trust Services Criteria you should choose first

Every SOC 2 report includes Security, so that is where AI startups start. Security covers access controls, monitoring, incident response, and basic risk management.

Availability is common if customers rely on your service to run their business, or if downtime would create real harm. If your AI is in a workflow that cannot pause, buyers will ask about uptime and recovery.

Confidentiality often matters when customers treat the data as sensitive and contractually protected. Many enterprise buyers care about this because it maps to how they already think about data.

Privacy is usually the wrong early choice unless your product is clearly tied to personal data and privacy rules. It adds complexity and forces more legal alignment.

The safest early package for most AI SaaS teams is Security, and sometimes Availability. You can add more later when it is driven by customer needs, not fear.

The timeline that keeps you honest and keeps you fast

SOC 2 timelines do not fail because audits are hard. They fail because teams start without stable habits.

If your engineering team is changing everything daily, and you cannot describe your system boundary clearly, the audit will feel painful. Not because you are unsafe, but because you cannot prove what is true.

A better timeline is to spend a short period building readiness habits, then start the audit window. When you do that, the audit becomes a natural record of how you already operate.

For many AI startups, a sensible rhythm is to spend four to eight weeks on readiness work, then begin a Type II period. That window can be three or six months, depending on what buyers want and what your auditor supports.
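The date math is worth doing before you promise a buyer anything. Here is a small sketch that turns a start date, a readiness period, and a window length into the dates your Type II period would cover. The helper names and the sample start date are assumptions. The report itself arrives some time after the window closes, and that gap depends on your auditor.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def observation_window(start: date, readiness_weeks: int,
                       window_months: int) -> tuple[date, date]:
    """Return (window start, window end) for a Type II period."""
    window_start = start + timedelta(weeks=readiness_weeks)
    return window_start, add_months(window_start, window_months)


# Example: start on 6 January 2025, six weeks of readiness, 3-month window.
begin, end = observation_window(date(2025, 1, 6), 6, 3)
print(begin, end)  # 2025-02-17 2025-05-17
```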