AI products do not fail only because the model is “bad.” They fail because the model you picked, the vendor you depend on, or the chain of tools behind the scenes shifts under your feet. A pricing change, a policy update, a model swap, a quiet data leak, a surprise outage, or a vendor getting acquired can turn a working product into a mess overnight. This article is about that exact risk: the vendor and model supply chain risk that comes with shipping AI.

If you are building in AI, robotics, or deep tech, this matters even more. Your product is not just a demo. It needs to work in the real world, with real customers, real contracts, and real consequences. If your AI stack is fragile, the business is fragile.

One more thing before we get into it: if you are building something real and you want to protect it early, Tran.vc can help you turn your work into strong IP. They invest up to $50,000 in in-kind patent and IP services so you can build a moat before you raise a big round. You can apply any time here: https://www.tran.vc/apply-now-form/

Now let’s talk about supply chain risk, in plain words.

When people say “supply chain,” they think of factories and trucks. But AI has a supply chain too. It is just invisible. Your AI product depends on a chain of things you do not control.

It might look like this:

You send customer data to an API. That API calls a model. That model runs on a cloud. That cloud depends on chips. The API vendor, in turn, depends on other vendors for safety filters, logging, billing, and monitoring. And your product depends on all of it staying stable.

Most teams do not map this chain. They only notice it when something breaks.

The first big mistake is thinking the model is the product. The model is a part. Often, it is not even the most important part. The product is the workflow you built around it, the trust you earned, the rules you set, and the way you handle failure.

So when we talk about “vendor and model supply chain risk,” we are talking about the ways your AI product can break because of outside changes. These changes can be slow and quiet, or fast and loud. Both are dangerous.

Let’s make it real. Here are the main ways this risk shows up, and how to deal with each one.

Pricing risk is the first punch people feel.

A vendor can change price. A vendor can change how they count tokens. A vendor can change how they charge for tool calls, images, embeddings, storage, fine-tuning, or logging. Even if the “per token” price stays the same, the true cost can still rise because a new model needs longer prompts, or produces longer outputs, or needs more retries to be safe.

If your product margin is thin, one pricing change can wipe it out.

The fix is not “negotiate harder.” Early-stage companies do not have leverage. The fix is to design so your unit cost can move without killing you.

That starts with knowing your unit economics at the feature level, not at the company level. You should know, in simple terms, what one customer action costs you. For example, “one support ticket reply costs $0.07.” Or “one document summary costs $0.03.” Or “one robot inspection report costs $0.22.” If you cannot say it, you cannot control it.
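As a rough sketch of what "cost per action" can mean in code, the snippet below multiplies token counts by per-token prices and an average retry factor. The `ModelPrice` class, the prices, and the token counts are all made-up assumptions for illustration, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass
class ModelPrice:
    """Hypothetical per-million-token prices in USD; replace with your vendor's rates."""
    input_per_million: float
    output_per_million: float


def cost_per_action(price: ModelPrice, input_tokens: int, output_tokens: int,
                    calls_per_action: float = 1.0) -> float:
    """Return the USD cost of one user action, including average retries."""
    one_call = (input_tokens * price.input_per_million
                + output_tokens * price.output_per_million) / 1_000_000
    return one_call * calls_per_action


# Illustrative numbers only, not real pricing.
price = ModelPrice(input_per_million=3.00, output_per_million=15.00)
ticket_reply = cost_per_action(price, input_tokens=2_000, output_tokens=400,
                               calls_per_action=1.2)
print(f"one support ticket reply costs ${ticket_reply:.4f}")
```

The `calls_per_action` factor matters because retries and multi-step chains are where the real cost tends to hide.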

Once you know the cost per action, you can build guardrails. Put limits in the product. Not in a harsh way. In a sensible way. Maybe long documents get summarized in parts. Maybe power users get a higher plan. Maybe some features run on cheaper models by default, and only use the best model when needed.

Also, do not let one model do everything. That is how teams get trapped. Use a small, cheap model for simple work. Use the big model for the hard work. This is not only about cost. It also reduces risk. If the big model price jumps, your whole product does not collapse.
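One possible shape for this routing is a small rule function that picks a model tier per request. The model names, task labels, and the 4,000-character threshold below are placeholders chosen for the example, not recommendations.

```python
# Hypothetical model names and thresholds, for illustration only.
CHEAP_MODEL = "small-model"
STRONG_MODEL = "large-model"
SIMPLE_TASKS = {"classify", "extract", "short_answer"}


def choose_model(task: str, input_chars: int, user_requested_deep: bool = False) -> str:
    """Pick a model tier from simple, inspectable rules."""
    if user_requested_deep:
        return STRONG_MODEL
    if task in SIMPLE_TASKS and input_chars < 4_000:
        return CHEAP_MODEL
    # Unknown or long tasks default to the stronger model.
    return STRONG_MODEL


print(choose_model("classify", 500))
print(choose_model("analysis", 500))
```

Keeping the rules in plain code, rather than asking a model to choose, means the routing itself does not drift when a vendor changes something.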

Availability risk is the next punch.

Models go down. APIs get slow. Regions fail. Rate limits hit. This is normal. It will happen again.

The problem is not that outages happen. The problem is when your product acts like outages never happen.

If the model is down and the user sees a blank screen, you lose trust. If the model is down and your system crashes or retries forever, you lose money. If the model is down and you silently return wrong answers, you lose customers.

A strong AI product has a “degraded mode.” That means when the best path fails, the product still works, just with fewer features or lower quality. The user still gets progress.

For a B2B AI product, degraded mode can look like: “We could not run the deep analysis right now. Here is a fast summary and we will email the full report when ready.” Or: “We are switching to a backup model for the next few minutes.” Or: “This request is queued.”

But the key is honesty and control. You do not want to hide it. You want to explain it in a calm tone and keep the workflow moving.

To do this, you need timeouts, circuit breakers, and a queue. You also need to store the inputs so you can replay the task later. This is basic reliability work. Many AI teams skip it because they think it is “just a model call.” It is not.
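A minimal sketch of a fallback path with a replay queue might look like the following. It assumes your primary model client accepts a `timeout` argument and raises `TimeoutError` or `ConnectionError` on failure; the function names and the in-memory queue are illustrative stand-ins for whatever client and durable queue you actually use.

```python
import queue

# In-memory stand-in for a durable queue of inputs to replay later.
replay_queue = queue.Queue()


def run_with_fallback(primary, fallback, payload, timeout_s=10.0):
    """Try the primary path; on timeout or connection failure, queue the input and degrade."""
    try:
        return {"mode": "full", "result": primary(payload, timeout=timeout_s)}
    except (TimeoutError, ConnectionError):
        replay_queue.put(payload)  # keep the input so the full task can run later
        return {"mode": "degraded", "result": fallback(payload)}


def flaky_deep_analysis(payload, timeout):
    """Stub that simulates a vendor timeout."""
    raise TimeoutError("simulated vendor timeout")


def fast_summary(payload):
    """Stub for a cheap local fallback."""
    return payload["text"][:20]


outcome = run_with_fallback(flaky_deep_analysis, fast_summary,
                            {"text": "Quarterly inspection report for line 4"})
print(outcome["mode"], replay_queue.qsize())
```

Returning the `mode` alongside the result lets the UI tell the user honestly which path ran.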

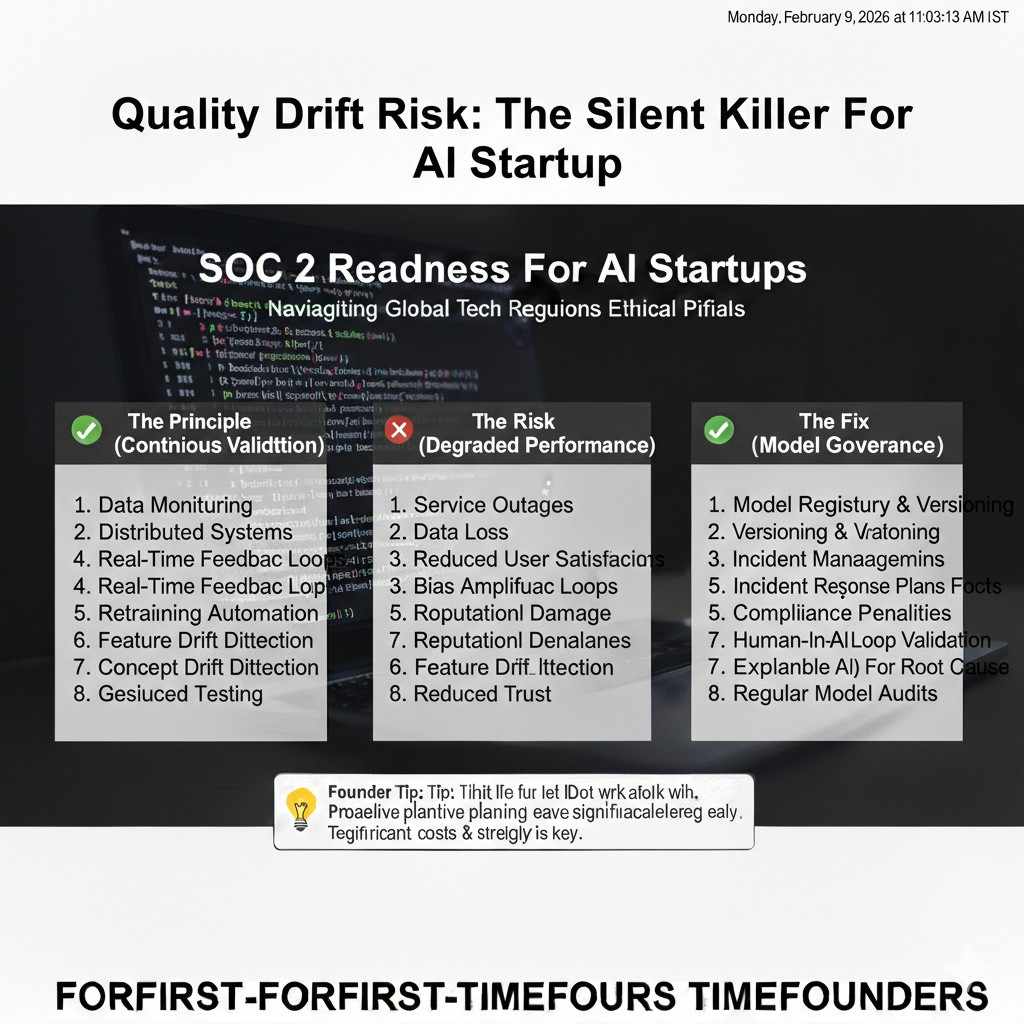

Quality drift risk is the quiet killer.

Sometimes nothing “breaks.” The API stays up. The price stays stable. But output quality shifts. A vendor updates a model. The same prompt that worked last month now gives a different style, or misses details, or follows rules less reliably. You might not notice right away. Your users will.

This is the hardest risk because it is slow. It feels like your product is “getting worse” and you do not know why.

The fix is to treat prompts, model versions, and output checks like real production code. You need tests. You need a set of saved “golden” examples that represent your core use cases. Every time you change a prompt or a model, you run the set and compare results.
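A golden-set check can start very small. The sketch below compares new outputs against saved ones using plain string similarity from the standard library; that metric, the `0.6` threshold, and the case format are assumptions for illustration, and a real suite would likely use task-specific checks instead.

```python
import difflib


def similarity(a: str, b: str) -> float:
    """Crude text similarity between 0.0 and 1.0."""
    return difflib.SequenceMatcher(None, a, b).ratio()


def run_golden_set(generate, golden_cases, min_similarity=0.6):
    """Return the cases whose new output drifted too far from the saved output."""
    drifted = []
    for case in golden_cases:
        new_output = generate(case["input"])
        score = similarity(case["expected"], new_output)
        if score < min_similarity:
            drifted.append({"id": case["id"], "score": round(score, 2)})
    return drifted


# Hypothetical saved examples and a stub standing in for the model call.
golden = [
    {"id": "refund", "input": "refund request", "expected": "Refund approved within 5 days."},
    {"id": "invoice", "input": "invoice question", "expected": "Invoice total is 120 USD."},
]
stub_outputs = {"refund request": "Refund approved within 5 days.", "invoice question": "zzzz"}
drifted = run_golden_set(lambda text: stub_outputs[text], golden)
print(drifted)
```

The point is the habit of running the same inputs on every prompt or model change, not the particular score.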

This is not about perfect scores. It is about catching big shifts early.

You also want to log model inputs and outputs in a safe way. Not raw customer data if you can avoid it, but enough to debug. For example, you can store hashed IDs, redacted text, and key metrics like token counts, refusal rate, latency, and whether the output passed your checks.
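Here is one hedged sketch of such a log record. The field names, the salt handling, and the email-only redaction are simplifying assumptions; real redaction needs to cover far more than email addresses, and a salted hash is pseudonymous rather than anonymous.

```python
import hashlib
import re

# Deliberately simple pattern; it only illustrates the idea of redaction.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def hashed_id(raw_id: str, salt: str) -> str:
    """Return a short salted hash so logs can be joined without storing the raw ID."""
    return hashlib.sha256((salt + raw_id).encode("utf-8")).hexdigest()[:16]


def redact(text: str) -> str:
    """Replace email addresses with a placeholder."""
    return EMAIL_RE.sub("[EMAIL]", text)


def build_log_record(customer_id, prompt, output, latency_ms,
                     input_tokens, output_tokens, passed_checks, salt):
    """Assemble a debuggable record without raw identifiers."""
    return {
        "customer": hashed_id(customer_id, salt),
        "prompt_redacted": redact(prompt),
        "output_redacted": redact(output),
        "latency_ms": latency_ms,
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
        "passed_checks": passed_checks,
    }


record = build_log_record("cust-42", "Email jane@example.com about the refund",
                          "Drafted a reply.", 840, 120, 35, True, salt="demo-salt")
print(record["prompt_redacted"])
```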

If you do not measure quality, you will learn about quality drift from angry emails.

Policy risk is another silent one.

Vendors have usage rules. These rules change. Safety filters change. What was allowed last quarter might get blocked this quarter, even if your intent is fine.

For example, a healthcare product might get blocked because it looks like medical advice. A finance tool might get blocked because it looks like trading advice. A cyber tool might get blocked because it looks like hacking. Even if you are building a safe, legal product, the vendor might choose to be extra cautious.

When this happens, your product might start refusing requests that used to work.

The fix is to design your workflows so the model is not the only gate. You should have your own policy layer. That means you classify requests, detect risky inputs, and route them. Sometimes you can reframe the task into a safer form. Sometimes you need a human step. Sometimes you need to restrict the feature.
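A first version of your own policy layer could be a simple router in front of the model. The keyword lists and route names below are hypothetical; a production classifier would probably be a trained model or a richer rule set, but the routing shape is the same.

```python
from enum import Enum


class Route(Enum):
    MODEL = "model"
    HUMAN_REVIEW = "human_review"
    RESTRICTED = "restricted"


# Hypothetical keyword rules for illustration only.
RESTRICTED_TERMS = ("exploit code",)
HUMAN_REVIEW_TERMS = ("dosage", "diagnosis")


def route_request(text: str) -> Route:
    """Decide where a request goes before any vendor model sees it."""
    lowered = text.lower()
    if any(term in lowered for term in RESTRICTED_TERMS):
        return Route.RESTRICTED
    if any(term in lowered for term in HUMAN_REVIEW_TERMS):
        return Route.HUMAN_REVIEW
    return Route.MODEL


print(route_request("What dosage is typical for this drug?"))
```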

This is not about gaming rules. It is about building a product that can keep serving customers even as vendor rules change.

Data risk is the one that gets founders in trouble.

Every AI vendor has some policy about how they handle your data. Some keep it for a period. Some use it for training unless you opt out. Some allow you to control retention. Some do not. Some have different rules for enterprise plans.

If you do not understand this, you can make promises to customers you cannot keep.

Also, the risk is not only the vendor. It is your own team. If you log everything in plain text, if you send secrets in prompts, if you store raw outputs without care, you are building a leak path.

The fix starts with a simple rule: do not send what you do not need.

If the model does not need a full customer record, do not send it. If it only needs a few fields, send only those fields. If it needs a document, consider chunking it and removing names. If it needs context, use summaries instead of raw text when possible.
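In code, "send only those fields" can be as simple as an allowlist applied before the prompt is built. The field names here are invented for the example.

```python
# Hypothetical allowlist: the only fields this feature needs.
ALLOWED_FIELDS = frozenset({"plan", "open_ticket_subject", "last_login_days_ago"})


def minimal_context(record: dict, allowed=ALLOWED_FIELDS) -> dict:
    """Keep only allowlisted fields; everything else never leaves your system."""
    return {key: value for key, value in record.items() if key in allowed}


customer = {
    "name": "Jane Doe",
    "email": "jane@example.com",
    "plan": "pro",
    "open_ticket_subject": "Export fails on large files",
    "last_login_days_ago": 2,
}
print(minimal_context(customer))
```

An allowlist fails safe: a new sensitive field added to the record later is excluded by default.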

You can also use “data boundaries.” That means some tasks run in a “clean room” where you do not log raw text. Or you use customer-managed keys. Or you run sensitive steps on a model you host yourself.

Not every startup can self-host. That is fine. But every startup can reduce the data they expose.

Contract risk matters once you sell to serious buyers.

A big customer will ask: Where does the data go? Who touches it? How long is it stored? What happens if the vendor has a breach? Can you support audits? Can you sign a DPA? What about SOC 2, HIPAA, and GDPR?

If your AI stack is a mystery, you will struggle.

The fix is to write down your AI supply chain early. Not a 50-page doc. A simple map. What vendors you use, what data flows to each, what the purpose is, what retention is, what your controls are.
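The map can live in a spreadsheet, but keeping it as structured data makes it easy to query. The sketch below is one assumed layout; the vendor names, fields, and the follow-up rule are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class VendorEntry:
    vendor: str
    purpose: str
    data_sent: Tuple[str, ...]
    retention_days: Optional[int]  # None means "not yet confirmed"
    controls: Tuple[str, ...]


# Hypothetical entries; replace with your real vendors and terms.
SUPPLY_CHAIN = [
    VendorEntry("llm-api-vendor", "draft support replies",
                ("ticket text (redacted)",), 30, ("training opt-out", "DPA signed")),
    VendorEntry("vector-db-vendor", "document search",
                ("document embeddings",), None, ()),
]


def entries_needing_follow_up(entries):
    """List vendors with unknown retention or no documented controls."""
    return [e.vendor for e in entries if e.retention_days is None or not e.controls]


print(entries_needing_follow_up(SUPPLY_CHAIN))
```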

When a customer asks, you do not want to scramble. You want to answer clearly.

This is also where IP matters. If your value is only “we call a model,” customers can swap you out. But if your value includes your own logic, workflows, data rights, and defensible inventions, your position is stronger.

Tran.vc exists for this exact moment. They help founders turn technical work into real IP assets early, while you are still building. If you want to explore that, apply here: https://www.tran.vc/apply-now-form/

Now, there is a deeper risk that people miss: vendor lock-in.

Lock-in is not only about the API. It is about the shape of your whole system.

If your prompts are built around one vendor’s quirks, you are locked in. If your evals are tied to one model’s style, you are locked in. If your output format is hard-coded to one vendor’s tool calling, you are locked in. If your product depends on one vendor’s embeddings, you are locked in. If your team does not know how to swap models, you are locked in.

Lock-in becomes a risk when you need to switch fast. Maybe for price. Maybe for performance. Maybe because a vendor blocks your use case. Maybe because a customer demands on-prem. Maybe because a competitor gets a better deal and undercuts you.

So how do you reduce lock-in without slowing down?

You do not need to build a giant abstraction layer on day one. But you do need clean seams. You need to keep the model behind an interface you control. You need to store prompts and system rules in a place you can update without redeploying the whole app. You need to version prompts. You need to store model choice as config, not code.
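A clean seam can be quite small. In this sketch, model choice and prompt version live in config, prompts are stored by version, and each vendor sits behind the same function signature. The provider names, config layout, and stub adapters are assumptions for illustration; real adapters would call the vendors' SDKs.

```python
import json

# Hypothetical config; in practice this might live in a file or config service.
CONFIG_JSON = """
{
  "summarize": {"provider": "vendor_a", "model": "model-small", "prompt_version": "v3"},
  "analyze": {"provider": "vendor_b", "model": "model-large", "prompt_version": "v1"}
}
"""

# Versioned prompts, stored as data rather than scattered through the code.
PROMPTS = {
    ("summarize", "v3"): "Summarize in three sentences:\n{text}",
    ("analyze", "v1"): "List the key risks in this text:\n{text}",
}


def vendor_a_complete(model: str, prompt: str) -> str:
    """Stub adapter standing in for one vendor's client."""
    return f"[vendor_a/{model}] {len(prompt)} chars received"


def vendor_b_complete(model: str, prompt: str) -> str:
    """Stub adapter standing in for another vendor's client."""
    return f"[vendor_b/{model}] {len(prompt)} chars received"


PROVIDERS = {"vendor_a": vendor_a_complete, "vendor_b": vendor_b_complete}


def complete(feature: str, text: str, config_json: str = CONFIG_JSON) -> str:
    """The single interface the rest of the app calls."""
    settings = json.loads(config_json)[feature]
    prompt = PROMPTS[(feature, settings["prompt_version"])].format(text=text)
    return PROVIDERS[settings["provider"]](settings["model"], prompt)


print(complete("summarize", "hello"))
```

Swapping the vendor for a feature then becomes a config edit plus a run of your test set, not a code change.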

You also need to keep your “business logic” outside the model. The model can help, but it should not be the only source of truth.

For example, if you are building an AI tool for legal teams, the model can draft text, but your own rules should enforce what clauses must be present. If you are building a robotics stack, the model can interpret sensor notes, but your own code should enforce safety bounds. If you are building a finance tool, the model can explain, but your own code should calculate.

The more you move truth into your own system, the less you depend on the vendor staying the same.

A practical way to do this is to split tasks into steps. One step is “extract facts.” Another step is “apply rules.” Another step is “write output.” The model might help with the first and third steps. Your code owns the second.
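The three steps can be sketched as follows, with the model-backed steps passed in as functions and the rules step written as ordinary code. The invoice example, the approval threshold, and the stub functions are hypothetical.

```python
def apply_rules(facts: dict) -> dict:
    """Deterministic step owned by your code: the model never does the math."""
    subtotal = sum(item["qty"] * item["unit_price"] for item in facts["items"])
    return {"subtotal": subtotal, "needs_approval": subtotal > 1_000}


def run_pipeline(extract, write, document: str):
    """Step 1 (model): extract facts. Step 2 (code): apply rules. Step 3 (model): write."""
    facts = extract(document)
    decision = apply_rules(facts)
    return write(facts, decision), decision


def stub_extract(document: str) -> dict:
    """Stand-in for a model call that returns structured facts."""
    return {"items": [{"qty": 2, "unit_price": 600}]}


def stub_write(facts: dict, decision: dict) -> str:
    """Stand-in for a model call that drafts the user-facing text."""
    return f"Subtotal is {decision['subtotal']}."


text, decision = run_pipeline(stub_extract, stub_write, "2 units at 600 each")
print(text, decision)
```

Because `apply_rules` is plain code, it can be unit-tested without any model in the loop.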

This also makes testing easier.

At this point, you might be thinking: “This sounds like a lot. We are just trying to ship.”

Yes. But this is what separates a demo from a company.

You do not need to do everything at once. You can start with a few simple habits that reduce risk right away.

You can start by writing down your top three vendor risks: price, outages, and policy changes. Then add one control for each.

For price, track cost per action and set limits.

For outages, build a fallback path and a queue.

For policy changes, add a request classifier and a safe mode.

These three changes already put you ahead of most teams.

Also, every time you add a new vendor, treat it like adding a dependency in your business, not just your code. Ask: what happens if they change terms? What happens if they get acquired? What happens if they remove a feature? What happens if they block us?

This is supply chain thinking.

And when you invent new ways to manage these risks, you might be inventing real IP. The way you route tasks, compress context, verify outputs, or safely run AI in robotics workflows can be patentable. This is another reason Tran.vc focuses on IP services, not only cash. If you build a strong moat early, you have more control later. Apply here when you are ready: https://www.tran.vc/apply-now-form/

Pricing risk

Why pricing changes hit harder than you think

AI costs feel simple at first because the bill looks like one number. But in real products, cost is tied to many tiny choices. A vendor can change token rates, but they can also change what counts as “billable,” how tool calls are priced, and how long outputs tend to be. Even if the sticker price stays the same, your real cost per user action can rise.

Pricing risk also shows up when your prompts get longer over time. As you add safeguards, examples, and rules, prompts grow. The model may also produce longer replies if you do not control format. That extra length becomes silent spend that grows with usage, which can surprise you in a month that should have been profitable.

The simplest way to stay in control of unit cost

You need to measure cost at the feature level, not at the company level. In plain words, you should know what one action costs, like one summary, one chat reply, one report, or one alert. This makes pricing changes visible fast, because you can see which feature is getting expensive and why.

Once you can see unit cost, you can shape it. You can limit extreme inputs, compress context, reduce retries, and use smaller models for simple tasks. The goal is not to be cheap. The goal is to avoid being surprised, and to make sure your product can survive a vendor change without panic.

Smart model routing that does not feel “cheap” to users

Most AI products do not need the biggest model for every step. Users care about outcomes, not model names. If you route simple work to a smaller model and reserve the best model for hard work, users still get strong results and you lower cost risk at the same time.

This works best when your product is clear about what it is doing. Not with a long explanation, but with calm messaging when needed. If a user asks for a deep analysis, your system can run the deeper path. If they ask a basic question, it can choose the faster, cheaper path without making the user feel like they are getting a “worse plan.”

Contract and billing details that founders often miss

Early-stage teams often ignore billing terms until the first big customer asks hard questions. Some customers will want cost predictability, and they will ask for usage caps, alerts, or fixed budgets. If your vendor can change price quickly, you can get stuck between the vendor bill and the customer contract.

To avoid this, build internal cost alarms early and keep a buffer in your pricing. Also, treat model costs like a variable input, not a fixed cost. When you price your product, assume costs can move, and design plans that can absorb change without forcing you to raise prices every quarter.
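An internal cost alarm does not need to be elaborate. The sketch below projects month-end spend linearly from spend so far and compares it to a budget; the linear projection, the 80% warning ratio, and the 30-day month are simplifying assumptions.

```python
def check_cost_alarm(spend_so_far: float, monthly_budget: float, day_of_month: int,
                     days_in_month: int = 30, warn_ratio: float = 0.8) -> str:
    """Project month-end spend linearly and compare it to the budget."""
    projected = spend_so_far / day_of_month * days_in_month
    if projected >= monthly_budget:
        return "over_budget"
    if projected >= warn_ratio * monthly_budget:
        return "warning"
    return "ok"


# Illustrative numbers: $150 spent by day 10 against a $500 monthly budget.
print(check_cost_alarm(150.0, 500.0, day_of_month=10))
```

Running a check like this per feature, not just per account, shows which feature is driving the change.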

Availability risk

Outages are normal, but broken workflows are not

Every model API will have slow periods and outages. The risk is not that it happens. The risk is that your product collapses when it happens. Many AI apps are built like a single thin bridge: one request goes out, one response comes back, and if it fails, the user gets nothing.

In B2B, “nothing” is not acceptable. People are using your product inside their workday. If they cannot move forward, they will lose trust fast. The fix is to design the workflow so it can still deliver progress even when the model is slow or down.

What “degraded mode” looks like in a real product

Degraded mode means your product still works, but with reduced quality, speed, or depth. A report might be shorter. A summary might be less detailed. A complex step might be queued for later. The point is to keep the user moving and to keep your system stable.

Degraded mode should be designed, not improvised. You decide in advance what the fallback is for each key feature. This removes guesswork during an incident and keeps your team from making risky changes under pressure.

The three controls that prevent chaos during failures

First, use strict timeouts so requests do not hang forever. Second, use circuit breakers so repeated failures do not flood your system and multiply costs. Third, use a queue so work can continue later without the user redoing everything.
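To make the second control concrete, here is a minimal circuit breaker sketch. The class name, thresholds, and injectable `clock` are choices made for this example; established libraries offer more complete versions of the same pattern.

```python
import time


class CircuitBreaker:
    """Open after `max_failures` consecutive failures; allow requests again after `reset_after_s`."""

    def __init__(self, max_failures=3, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock  # injectable so tests do not need to sleep
        self.failures = 0
        self.opened_at = None

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cool-down, requests are allowed again; another failure re-opens the breaker.
        return self.clock() - self.opened_at >= self.reset_after_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


breaker = CircuitBreaker(max_failures=2, reset_after_s=10.0)
breaker.record_failure()
breaker.record_failure()
print(breaker.allow_request())  # the breaker is open, so callers should use the fallback
```

Callers check `allow_request()` before each model call and go straight to the fallback or queue when it returns `False`, which is what stops retries from multiplying cost during an outage.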

These are not “enterprise-only” ideas. Even small startups can build them. The payoff is huge because it turns outages from a public disaster into a short inconvenience that users can understand and tolerate.

How to communicate issues without losing trust

When something fails, the worst thing you can do is pretend nothing happened. Users can tell. A calm message builds trust when it is honest and gives a path forward. You do not need dramatic language. You need simple words and a clear next step, like “We are running this in the background and will notify you when ready.”

If you sell to teams, you also need an internal status view for your own support and ops. If support cannot see what happened, they cannot help. That leads to slow tickets and frustrated customers, which makes even small outages feel bigger than they are.

Quality drift risk

Why outputs change even when you change nothing

Models are updated. Safety layers are tuned. Inference settings shift. Vendors may change routing behind the scenes. All of this can alter the way outputs look and behave, even if your prompts and code stay the same. Many teams notice only after users complain that the product “doesn’t feel as good” anymore.

Quality drift is dangerous because it can be slow and uneven. Some users may see it first, depending on their use case. That makes it harder to detect if you are not watching quality in a structured way.

The habit that catches drift before customers do

You need a set of saved examples that represent your most important tasks. These examples should reflect real customer inputs, cleaned of sensitive data. When you update a prompt or model, you run the same examples and compare results. This is the simplest form of quality testing, and it gives you early warning.

Do not aim for perfection. Aim for stability. If results change a lot, you want to know right away so you can decide whether it is an improvement, a regression, or a new risk you must handle.

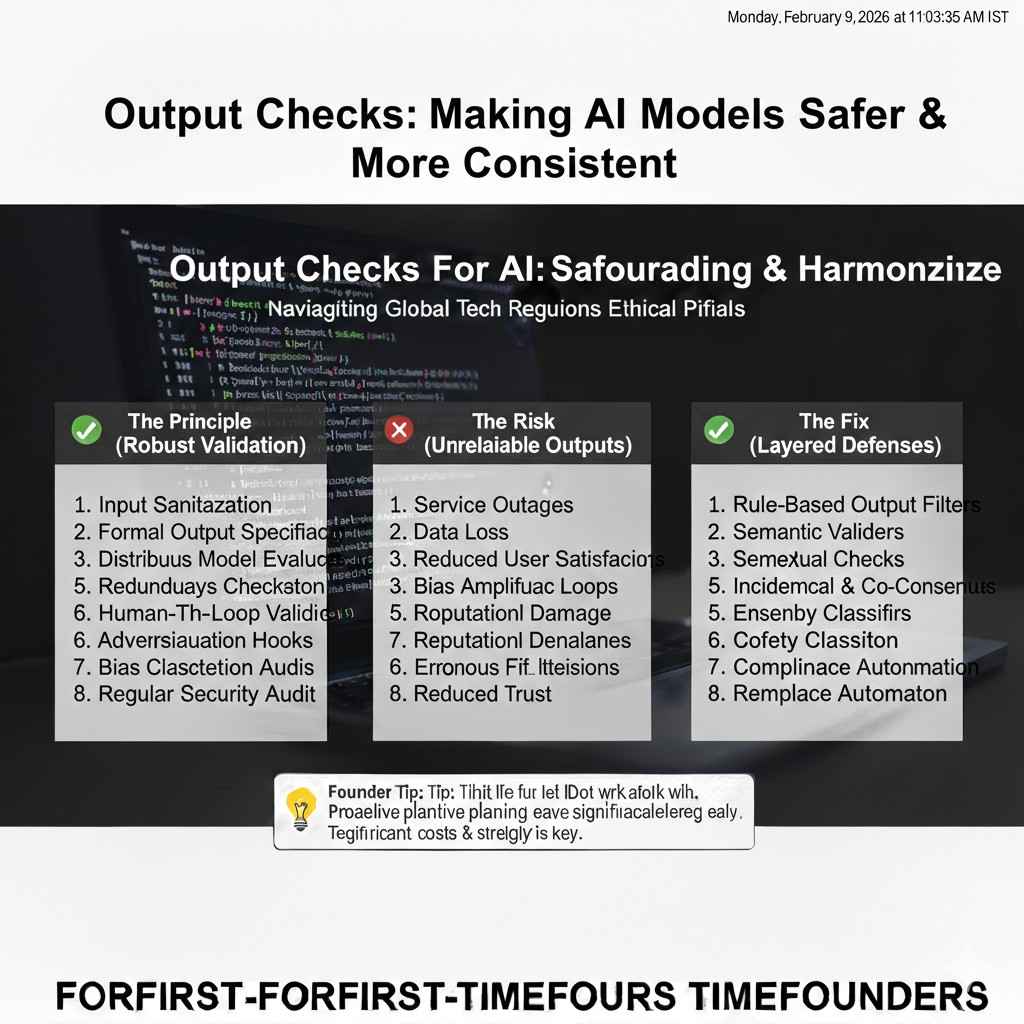

Output checks that make the model safer and more consistent

Many AI teams rely on the model to follow rules, but models are not consistent enough to be your only guard. You should check outputs in your own system. This can be as simple as verifying that required sections exist, that the format is correct, and that the answer contains a source reference when needed.
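A basic output check along these lines might look like the sketch below. The required section names and the `[source: ...]` citation format are assumptions made up for the example; use whatever structure your product actually promises.

```python
import re

# Hypothetical output contract for a report feature.
REQUIRED_SECTIONS = ("Summary", "Findings", "Next steps")
SOURCE_RE = re.compile(r"\[source:\s*[^\]]+\]")


def check_output(text: str, require_source: bool = True) -> list:
    """Return a list of problems; an empty list means the output passed."""
    problems = []
    for section in REQUIRED_SECTIONS:
        # Each section must start a line, followed by a colon.
        if not re.search(rf"^{re.escape(section)}:", text, flags=re.MULTILINE):
            problems.append(f"missing section: {section}")
    if require_source and not SOURCE_RE.search(text):
        problems.append("missing source reference")
    return problems


good = "Summary: pump is worn\nFindings: vibration up [source: log-17]\nNext steps: replace seal"
print(check_output(good))
print(check_output("Summary: pump is worn"))
```

When a check fails, the product can retry, fall back, or flag the result for review instead of showing it to the user.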

These checks reduce drift risk because they keep the product behavior stable even when the model shifts. If the model changes style, your checks can still enforce the shape of the output and catch missing pieces before the user sees them.