If you build deep learning systems, you are building a factory made of code, data, and time.

The model file you ship is only the tip of the iceberg. The real value often sits under the water: your training data, your labels, your feature pipeline, your prompts, your fine-tuning steps, your eval tests, and the small choices your team made over weeks or months. Those choices are hard to copy if they stay private. But once they leak—or once a competitor can copy the results without doing the work—you lose speed, pricing power, and trust.

This is why “protecting the model” is not just a legal task. It is a product task. It is an engineering task. It is a business task.

In this guide, we will use plain words and promise one thing: you will leave with steps you can use right now. Not theory. Not buzzwords. Real things you can do this week to lower theft risk, keep your data safe, and build a stronger moat.

Tran.vc helps technical founders do this early, before a seed round. We invest up to $50,000 in in-kind patent and IP services so your work becomes an asset, not a leak. If you want help shaping a clear IP plan for your model, your data, and your training method, you can apply anytime here: https://www.tran.vc/apply-now-form/

Now let’s start where most teams get it wrong: they focus on “the model” and forget the full system.

What you are really trying to protect

A deep learning product is not one thing. It is a chain. If one link is easy to copy, the whole chain becomes easy to copy.

Think of your system as four layers:

1) The training data and labels.

This can be raw sensor data, user events, images, speech, text, logs, medical signals, factory video, robot runs, or anything else. The labels may be human-made, model-made, or rule-made. Often, the labels and the label rules are more valuable than the raw data.

2) The training recipe.

This includes what you clean, what you remove, how you slice data, which losses you use, which augmentations you apply, how you balance classes, and how you pick checkpoints. Two teams can use the same base model and still get very different results because the recipe is different.

3) The model weights and the serving stack.

Weights matter. But the serving stack matters too: pre-processing, post-processing, routing, caching, safety filters, and fallback logic. Many “model copies” fail because they expect weights alone to do the full job.

4) The feedback loop.

The best teams build a loop that keeps making the model better: monitoring, drift checks, human review, and retraining. If a competitor copies your first release but cannot copy your loop, you still win.

When founders ask, “How do I protect my model?” the better question is: Which part creates unfair advantage, and how do we lock it down without slowing shipping?

That is the balance. Protection must not kill speed. It must support speed.

If you want Tran.vc to help you map this in a clean, founder-friendly plan, you can apply here: https://www.tran.vc/apply-now-form/

Training Data Protection

Put the “gold” data in one controlled home

If your best dataset lives in ten places, you do not have a dataset. You have ten chances to lose it. The simplest fix is to pick one home for the “gold” data and treat everything else as a copy that can be replaced. This home should have clear access rules and strong logs, so you can see who touched what and when.

A practical way to do this is to store raw data, cleaned data, and labeled data in separate areas. That separation is not cosmetic. It lets you keep the most sensitive part locked while still letting the team move fast on less sensitive parts.

Stop full downloads without slowing work

Most leaks happen because someone downloads “just in case.” It feels faster in the moment, but it creates permanent risk. A safer pattern is to keep the data inside your environment and let people run jobs where the data lives. They pull only the slices they need, and only for the time they need them.

If your team truly needs local work, use small sampled sets that are safe to share. Keep them clearly marked as “dev-only,” and rotate them often. Your real dataset should not be the thing sitting on a laptop during a coffee shop meeting.
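One way to make "small, marked, rotated" samples routine is to select them deterministically from record IDs. The sketch below is a minimal illustration under stated assumptions: `build_dev_sample`, the 1% default fraction, and the monthly rotation key are hypothetical choices, not a standard. Hashing each ID with a rotation key means everyone gets the same sample for a given month, and the sample changes when the key changes.

```python
import hashlib
from datetime import date


def dev_sample(record_ids, fraction, rotation_key):
    """Deterministically pick roughly `fraction` of record IDs for a given key."""
    selected = []
    for rid in record_ids:
        digest = hashlib.sha256(f"{rotation_key}:{rid}".encode()).digest()
        # Map the first 8 bytes of the hash to a number in [0, 1).
        score = int.from_bytes(digest[:8], "big") / 2**64
        if score < fraction:
            selected.append(rid)
    return selected


def monthly_rotation_key(today: date) -> str:
    # Hypothetical convention: rotate the dev sample once per calendar month.
    return f"dev-only-{today.year}-{today.month:02d}"


def build_dev_sample(record_ids, fraction=0.01, today=None):
    """Return a clearly labeled dev-only subset plus the key that produced it."""
    today = today or date.today()
    key = monthly_rotation_key(today)
    return {"label": "dev-only", "rotation_key": key,
            "ids": dev_sample(record_ids, fraction, key)}
```

Because the sample is defined by IDs and a key, you can share the recipe instead of the data, and regenerate or retire a sample without tracking down old copies.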

Control access by role, not by request

Early teams often give broad access because it avoids delays. But broad access is also broad exposure. A better way is role-based access. People get the least access needed to do their work, and then access expands only when there is a real reason.

This is not about distrust. It is about being able to say, with a straight face, that you did the basics. If you ever need to explain a leak to a customer, an investor, or a regulator, “everyone had access” is the worst sentence you can say.
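Role-based, least-privilege access can be expressed as a small mapping from roles to permissions, with every check recorded. This is a sketch only: the role names, permission strings, and in-memory audit list are hypothetical stand-ins for whatever your identity provider and storage system actually offer.

```python
from datetime import datetime, timezone

# Hypothetical roles and permission names; map these to your own storage areas.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read:dev_sample", "read:cleaned"},
    "labeler": {"read:label_queue", "write:labels"},
    "data_lead": {"read:dev_sample", "read:cleaned", "read:raw", "read:labeled_gold"},
}


def is_allowed(user_roles, permission):
    """Least privilege: grant only if one of the user's roles lists the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)


def check_access(user, user_roles, permission, audit_log):
    """Decide and record every check, so you can later show who touched what and when."""
    granted = is_allowed(user_roles, permission)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "permission": permission,
        "granted": granted,
    })
    return granted
```

Unknown roles get an empty permission set, so the default is "no access" rather than "everything."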

Make labels and label rules first-class assets

Founders tend to talk about “the data” as one big blob. But labels are often the true value. The label guide, the edge-case rules, the reviewer notes, and the final decisions are the learning your company paid for. If a competitor gets that, they can build quickly even with less raw data.

Treat label guides like product specs. Keep version history. Track who changed what. Tie label decisions to examples so you can defend why a sample was tagged one way and not another. This protects you in theft cases, and it also improves training quality.
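A label guide with version history can be as simple as an append-only list of rule changes, each carrying an author, a timestamp, and the example IDs behind it. The `LabelGuide` class below is a hypothetical minimal sketch, not a specific labeling tool's data model. It lets you answer "what did this rule say when this sample was labeled?"

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LabelRuleChange:
    version: int
    rule_id: str
    text: str
    author: str
    example_ids: list  # samples that motivated or illustrate the rule
    timestamp: str


@dataclass
class LabelGuide:
    changes: list = field(default_factory=list)

    def update(self, rule_id, text, author, example_ids):
        change = LabelRuleChange(
            version=len(self.changes) + 1,
            rule_id=rule_id,
            text=text,
            author=author,
            example_ids=list(example_ids),
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.changes.append(change)  # append-only: old versions are never edited
        return change.version

    def rule_at(self, rule_id, version):
        """Return the text of rule_id as it stood at a given guide version."""
        text = None
        for c in self.changes:
            if c.version > version:
                break
            if c.rule_id == rule_id:
                text = c.text
        return text
```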

Prove ownership and provenance from day one

You cannot protect what you cannot prove is yours. Make a habit of recording where data came from, what rights you have, and what limits exist. This matters even more if you collect data through partners, customers, or public sources with special rules.

When you can show clean provenance, you gain leverage. You reduce legal risk. You also become easier to fund, because investors know the core asset is not a ticking time bomb.
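Recording provenance does not need a special system on day one. A small structured record per dataset, stored next to the data, already captures source, rights, and limits. The field names and the example values in the comments are hypothetical; the point is that "can we use this for X?" becomes a lookup instead of a memory test.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    dataset_id: str
    source: str            # hypothetical example: "customer:acme" or "in-house robot runs"
    collected_on: str      # ISO date
    rights_basis: str      # the contract, license, or consent that grants rights
    allowed_uses: tuple    # e.g. ("train", "eval")
    restrictions: tuple = ()


def can_use(record, purpose):
    """Fail closed: a purpose is allowed only if it is explicitly listed."""
    return purpose in record.allowed_uses


def to_json(record):
    # Stable key order makes records easy to diff and review over time.
    return json.dumps(asdict(record), sort_keys=True)
```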

If you want help building a clean data ownership story and turning it into an IP plan, Tran.vc does this with founders before a seed round. You can apply anytime here: https://www.tran.vc/apply-now-form/

Training Pipelines and Recipes

Protect the pipeline, not just the files

Many teams think protection equals locking a folder. But the pipeline is the real prize. Your cleaning steps, feature building, filtering logic, and sampling choices often matter more than the raw data. A competitor can get similar raw data, but copying your pipeline can save them months.

To protect the pipeline, treat it like a core product. Keep it private. Keep it documented. Keep it versioned. Avoid spreading critical steps across personal notebooks that no one can trace or control.

Use strong change tracking for training configs

In deep learning, small config changes can create big performance shifts. If you cannot track those changes, you cannot defend your advantage. You also cannot prove what was unique if there is a dispute later.

A simple habit helps here: tie every trained model to a specific data snapshot and a specific config snapshot. When you can point to “this exact bundle created this result,” you gain both operational clarity and legal strength.
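Here is a minimal sketch of the "exact bundle" idea, assuming your data snapshot is a directory of files and your config is a JSON-serializable dict. It fingerprints both and derives a bundle ID to store alongside the trained model. The function names and the 16-character bundle ID are illustrative choices, not a standard format.

```python
import hashlib
import json
from pathlib import Path


def hash_file(path, chunk_size=1 << 20):
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def data_snapshot_hash(data_dir):
    """Hash every file (sorted by relative path) into one snapshot fingerprint."""
    root = Path(data_dir)
    h = hashlib.sha256()
    for p in sorted(root.rglob("*")):
        if p.is_file():
            h.update(p.relative_to(root).as_posix().encode())
            h.update(hash_file(p).encode())
    return h.hexdigest()


def config_hash(config):
    # Canonical JSON so that key order does not change the hash.
    return hashlib.sha256(json.dumps(config, sort_keys=True).encode()).hexdigest()


def training_manifest(data_dir, config, model_name):
    data_h = data_snapshot_hash(data_dir)
    cfg_h = config_hash(config)
    bundle_id = hashlib.sha256(f"{data_h}:{cfg_h}".encode()).hexdigest()[:16]
    return {"model": model_name, "data_snapshot": data_h,
            "config_snapshot": cfg_h, "bundle_id": bundle_id}
```

If the data or the config changes, the bundle ID changes, so "which run produced this model?" has one answer.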

Limit who can run “full training”

Not everyone needs the power to run full training on the full dataset. Full training access is a high-risk privilege. One mistake can leak weights, data, or both. A practical approach is to let most team members run experiments on smaller subsets, while only a small group can run full pipelines.

This still supports speed because most iteration happens on smaller loops anyway. Full training becomes a controlled event, not a casual button click.
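A pre-launch check in your training entry point is one way to make full training a controlled event. In this sketch, the group membership, the 5% subset cap, and the "second approver" rule are all assumptions added for illustration. In practice, group membership would come from your identity provider, not a hard-coded set.

```python
# Hypothetical values; load these from your identity provider and policy config.
FULL_TRAINING_GROUP = {"alice", "bob"}
MAX_SUBSET_FRACTION = 0.05  # assumed cap for ordinary experiment runs


class TrainingNotAllowed(Exception):
    pass


def authorize_run(user, dataset_fraction, approvals=()):
    """Small-subset experiments are open; full runs need membership plus a second approver."""
    if dataset_fraction <= MAX_SUBSET_FRACTION:
        return "experiment"
    if user not in FULL_TRAINING_GROUP:
        raise TrainingNotAllowed(
            f"{user} may not train on {dataset_fraction:.0%} of the data")
    if not any(a != user and a in FULL_TRAINING_GROUP for a in approvals):
        raise TrainingNotAllowed(
            "full training needs a second approver from the full-training group")
    return "full"
```

Most iteration still passes the first check instantly, so the gate only adds friction where the risk is highest.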

Build a “secret sauce” layer that is hard to copy

If a competitor can rebuild your model from a paper description, you do not have much protection. But you can create a layer of advantage that is hard to infer. This could be a custom evaluation suite, a domain-specific augmentation method, or a pipeline step that only makes sense with your data.

The goal is not to hide everything. The goal is to ensure that copying outputs does not easily reveal inputs. When your improvements come from many small choices tied to your environment, imitation becomes expensive.

Tran.vc often helps founders identify what parts of the training recipe are patentable and what parts are best kept as trade secrets. That mix is usually what creates real durability. Apply here if you want that map: https://www.tran.vc/apply-now-form/

Model Weights and Checkpoints

Decide what you ship and what you never ship

A clear rule helps: ship only what you must. If your business can work through an API, you reduce the chance that weights get copied. If your business requires edge deployment, then weight protection becomes even more important, because the weights must leave your servers.

This is a product decision as much as a security decision. Your go-to-market choice changes your risk profile right away.

Reduce value of stolen weights with gated features

Even if someone steals weights, you can lower the value of that theft. One way is to keep key behaviors on the server side. For example, you might keep special post-processing steps, safety logic, routing rules, or domain adapters private.

When a stolen model behaves worse without the “rest of the system,” the thief does not get a ready-to-sell product. They get a partial piece that still needs work.

Watch for leakage through model outputs

Some models can reveal training data through outputs, especially when trained on repeated, sensitive, or small datasets. The risk can show up as memorized text, repeated images, or surprising exact matches.

You can reduce this by cleaning data more carefully, removing duplicates, using privacy-aware training choices (such as differentially private training) where needed, and testing for memorization as part of evaluation. This is one of those areas where a simple test suite can save you from a public incident.
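Two of these steps are easy to sketch for text data: exact-duplicate removal and a memorization check that flags outputs sharing a long word sequence with the training set. This is a simplified illustration under stated assumptions: whitespace tokenization, exact n-gram matching, and `n=8` as the threshold. Real suites often add near-duplicate detection and larger-scale indexing.

```python
import hashlib


def dedupe(texts):
    """Drop exact duplicates (after whitespace/case normalization), keeping the first."""
    seen, unique = set(), []
    for t in texts:
        key = hashlib.sha256(" ".join(t.lower().split()).encode()).hexdigest()
        if key not in seen:
            seen.add(key)
            unique.append(t)
    return unique


def ngrams(tokens, n):
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def memorization_hits(outputs, training_texts, n=8):
    """Return model outputs that share at least one n-token sequence with training data."""
    train_grams = set()
    for t in training_texts:
        train_grams |= ngrams(t.split(), n)
    return [o for o in outputs if ngrams(o.split(), n) & train_grams]
```

Running `memorization_hits` over a fixed set of probe prompts at every evaluation turns "did it memorize?" into a number you can track from release to release.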

Treat model releases like financial releases

A model release is not “upload a file and celebrate.” It should feel more like shipping a payment system update. You want a short checklist that includes access review, artifact location review, dependency review, and a quick scan of what is being exposed.

This does not need to be slow. It needs to be consistent. Consistency is what prevents “one bad day” from becoming a company-ending mistake.
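A release checklist stays consistent when it is enforced by the tool that ships the model, not by memory. The sketch below uses the four checks named above as required sign-offs; the item names and the `Release` class are hypothetical, and a real version would hook into your CI or deployment system.

```python
from dataclasses import dataclass, field

# Items mirror the checklist above; the identifiers are illustrative.
CHECKLIST = ("access_review", "artifact_location_review",
             "dependency_review", "exposure_scan")


@dataclass
class Release:
    model_id: str
    completed: dict = field(default_factory=dict)  # item -> reviewer name

    def sign_off(self, item, reviewer):
        if item not in CHECKLIST:
            raise ValueError(f"unknown checklist item: {item}")
        self.completed[item] = reviewer

    def missing(self):
        return [item for item in CHECKLIST if item not in self.completed]

    def can_ship(self):
        # Shipping is blocked until every item has a named reviewer.
        return not self.missing()
```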

Defending Against API Copying

Understand how model copying happens in practice

A competitor does not need your weights to copy your value. They can send many inputs to your API and collect outputs. Over time, they build a dataset that reflects your behavior. Then they train a smaller model that matches your responses well enough to sell.

This can happen quietly because the traffic looks like normal usage unless you know what to look for. The copying is not a single attack. It is slow harvesting.

Add friction where it counts

The goal is not to block real users. The goal is to block harvesting. Rate limits, abuse detection, and usage policies are basic tools here, but they only work when enforced with care.

A strong tactic is to identify patterns that real users do not create, such as extremely broad sampling across the input space or high-volume requests with odd timing. When you detect those patterns, you can slow responses, require stronger keys, or move suspicious users to stricter tiers.
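Here is a minimal sketch of the two signals described above, per API key: request volume inside a sliding window, and how broadly a key sweeps the input space. Everything here is an assumption for illustration. The thresholds are placeholders, and `bucket_fn` is a hypothetical function you would supply to map an input to a coarse region (for example, a topic or cluster ID). The quality of that mapping determines how useful the coverage signal is.

```python
import time
from collections import defaultdict, deque


class HarvestMonitor:
    """Flags API keys whose traffic looks like bulk harvesting rather than normal use."""

    def __init__(self, bucket_fn, num_buckets, window_s=3600.0, max_requests=1000,
                 max_bucket_share=0.5, min_requests=100):
        self.bucket_fn = bucket_fn          # hypothetical: input -> coarse region id
        self.num_buckets = num_buckets
        self.window_s = window_s
        self.max_requests = max_requests
        self.max_bucket_share = max_bucket_share
        self.min_requests = min_requests    # avoid judging coverage on tiny samples
        self.events = defaultdict(deque)    # api_key -> deque of (timestamp, bucket)

    def record(self, api_key, request_input, now=None):
        now = time.time() if now is None else now
        q = self.events[api_key]
        q.append((now, self.bucket_fn(request_input)))
        # Drop events that have fallen out of the sliding window.
        while q and q[0][0] < now - self.window_s:
            q.popleft()
        return self.verdict(api_key)

    def verdict(self, api_key):
        q = self.events[api_key]
        if len(q) > self.max_requests:
            return "throttle"   # e.g. slow responses for this key
        coverage = len({bucket for _, bucket in q}) / self.num_buckets
        if len(q) >= self.min_requests and coverage > self.max_bucket_share:
            return "review"     # e.g. require stronger keys or a stricter tier
        return "ok"
```

Real users tend to stay in a few regions of the input space, so a key that touches most buckets at high volume is worth a closer look even when it stays under the plain rate limit.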

Watermarking and response shaping

Some teams use subtle response shaping so that large-scale harvesting becomes less useful. This is not about harming user experience. It is about making “perfect imitation” harder. In some cases, you can embed small statistical signals that help you later prove copying, especially when the competitor’s model starts behaving “too similar.”

This area needs careful design, because you never want to reduce accuracy for customers. But when done well, it adds a quiet layer of defense.

Contract terms that support technical defenses

Strong terms of service will not stop a thief on their own, but they give you leverage. If you detect harvesting, clear terms let you cut access, demand deletion, and act faster. The best contracts match your technical controls, so enforcement is not just a threat. It is a real process.

If you want to align technical defenses with patents and contracts so your protection works as one system, Tran.vc can help. Apply anytime here: https://www.tran.vc/apply-now-form/

Trade Secrets and Patents

Know what “trade secret” really means

A trade secret is not a magic label you put on a folder. It is a promise you keep through behavior. If you treat something as a secret in daily work, the law is more likely to treat it as a secret too. If you leave it open, share it widely, or mix it into public code, it becomes hard to claim it was ever protected.

For deep learning teams, trade secrets often include the training recipe, data cleaning rules, labeling rules, and the full evaluation suite. These are the kinds of things that are hard for outsiders to recreate if they do not see your internal process. But they only stay protected if access is limited and well managed.

The practical takeaway is simple. If you want a thing to be a trade secret, decide that early, keep it in a controlled place, and make sure only the people who truly need it can reach it. That is not paranoia. That is good housekeeping.

When patents can protect more than you think

Many founders think patents are only for hardware. That is not true. In many cases, patents can cover methods, systems, and workflows that include software and machine learning, especially when the invention is tied to a real technical improvement and not just a vague “use AI” idea.

For deep learning, patentable value often shows up in places teams forget to look. It can be in how you prepare data, how you reduce compute cost, how you improve accuracy under real constraints, how you handle edge cases, how you fuse sensors in robotics, how you adapt models on-device, or how you measure and control risk. The key is that the invention must be described clearly, with steps and structure that show it is more than a wish.

Patents can help in two ways at once. They can stop direct copying, and they can increase your leverage when raising money. Investors like clear proof that your team is building a moat that does not depend on hype.

A clean way to choose: patent, secret, or both

Many strong companies use both. They patent what can be observed from the outside and keep private what cannot. This is a strong mix because it covers two kinds of threats. If someone can reverse engineer behavior, the patent gives you leverage. If someone cannot easily see the inner recipe, secrecy keeps your advantage hidden and hard to mimic.

A simple mental model helps. If a competitor can learn it by using your product, think about patenting it. If they cannot learn it without seeing inside your walls, think about keeping it as a trade secret. When you combine the two, you create a situation where even a well-funded competitor must spend real time and money to catch up.

Tran.vc is built for this exact decision. We invest up to $50,000 in in-kind patent and IP services so you can file the right things early, while keeping the right things private. If you want help choosing what to patent and what to hold close, apply here: https://www.tran.vc/apply-now-form/

Documenting Your ML Inventions

Write it like you are teaching a new teammate

Founders often worry they are “not ready” to document inventions. But you do not need perfect writing. You need clear writing. A simple way is to write your invention like you are teaching it to a strong engineer who just joined your team.

Start with the problem in plain language. Then explain what was wrong with the old way. Then explain your new way step by step. After that, explain why it works better, and what changes when inputs change. The goal is not to impress. The goal is to make the invention real on paper.

This kind of document is useful even if you never file a patent. It helps you onboard, it helps you stay consistent, and it helps you avoid losing knowledge when someone leaves.

Capture the “why,” not just the “what”

Code shows what you did. It does not always show why you did it. And the “why” is often the moat. For example, a line in the pipeline might exist because it blocks a rare failure mode. Or a loss term might exist because it stabilizes training on noisy labels. Those choices are hard-earned.

When you document, write the reason behind key choices. Note what you tried that failed. Note what improved results and by how much. Note what metrics changed. These details become powerful later, because they show the invention is not random tinkering. It is an engineered solution.

Keep a short invention log that becomes a legal asset

Instead of long reports, keep a steady log. Each week, capture what improved, what changed, and what new method emerged. Add dates, names, and links to the relevant experiments. This creates a timeline. Timelines matter for IP work and for investor trust.

The key is to make it easy enough that it actually happens. If the process feels heavy, people will skip it. If it feels like a normal part of building, it will stay alive.
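One low-effort format is an append-only JSON Lines file, where each entry records a date, names, what changed, the measured effect, and links to experiments. The file layout and field names below are a hypothetical sketch, not a required legal format. The `timeline` helper rebuilds the dated history when you need it.

```python
import json
from datetime import date


def log_entry(path, when: date, authors, summary, change, metric_delta, experiment_links):
    """Append one dated invention-log entry as a JSON line."""
    entry = {
        "date": when.isoformat(),
        "authors": sorted(authors),
        "summary": summary,
        "what_changed": change,
        "metric_delta": metric_delta,     # e.g. {"metric_name": "+/- change"}
        "experiments": list(experiment_links),
    }
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def timeline(path):
    """Read all entries back, ordered by date."""
    with open(path, encoding="utf-8") as f:
        entries = [json.loads(line) for line in f if line.strip()]
    return sorted(entries, key=lambda e: e["date"])
```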

Tie inventions to real product impact

A patent or an IP story is stronger when it links to real constraints. For example, “we improved accuracy” is vague. But “we improved accuracy under limited compute on edge devices” is stronger. “We reduced inference time while keeping safety checks” is stronger. “We made robotics perception stable under low light” is stronger.

When you frame inventions around constraints, you show that the work is not academic. It is commercial. That is the kind of story investors and customers understand.

If you want Tran.vc to help turn your invention notes into a patent plan that fits your product roadmap, apply here: https://www.tran.vc/apply-now-form/

Contracts That Actually Protect ML Assets

Assign ownership clearly with every contributor

One of the fastest ways to lose IP is unclear ownership. If a contractor writes part of the data pipeline or helps label data, and there is no strong agreement assigning rights to the company, you can end up with a mess later. That mess can block funding, partnerships, or acquisition.

This is not about being harsh. It is about being clear. Every contributor should sign an agreement that says the work product belongs to the company, and that confidential information stays confidential. If you wait until later, people disappear, emails get lost, and your leverage drops.

Define “confidential” in a way that matches ML reality

Generic contracts often fail because they do not match how ML teams work. You want your definition of confidential information to clearly include training data, labels, evaluation sets, prompts, fine-tuning data, weights, and any internal methods used to train and tune.

Clarity matters because if something ever goes wrong, you do not want to debate whether the label guide “counts.” You want the paper to match the real assets that create value.

Add practical rules about data movement

Many contracts say, “Keep it confidential,” but they do not say what that means day to day. A better approach is to include practical rules. For example, no copying datasets to personal drives, no uploading to unapproved tools, and no sharing model weights outside approved channels.

These rules are not there to punish. They are there to prevent accidental leaks. Most issues are not evil. They are sloppy. Clear rules reduce sloppy behavior.

Customer and partner data terms can make or break you

If you train on customer data, you must be precise about what rights you have. Some customers will allow training only for that customer. Some will allow aggregated training. Some will not allow training at all. If you ignore this, you can end up forced to delete the very data that made your model good.

A strong contract aligns expectations upfront. It also gives you room to build a product that improves over time without stepping into a legal trap.
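The three cases above (no training, customer-only training, aggregated training) map cleanly onto a filter that runs before any training job. This sketch assumes each record carries a `customer` field and that contract scopes live in a simple lookup table. Both are hypothetical simplifications. Customers with no recorded scope are excluded by default.

```python
from enum import Enum


class TrainingScope(Enum):
    NONE = "none"                    # data may not be used for training
    CUSTOMER_ONLY = "customer_only"  # only for models serving that customer
    AGGREGATED = "aggregated"        # may be pooled into the shared model


def eligible_records(records, scopes, target_customer=None):
    """Keep only records whose contract allows this training run.

    target_customer=None means the shared, cross-customer model is being trained.
    Customers with no recorded scope are treated as NONE (fail closed).
    """
    out = []
    for rec in records:
        scope = scopes.get(rec["customer"], TrainingScope.NONE)
        if scope is TrainingScope.AGGREGATED:
            out.append(rec)
        elif scope is TrainingScope.CUSTOMER_ONLY and rec["customer"] == target_customer:
            out.append(rec)
    return out
```

Failing closed matters here: if a contract is missing or unclear, the data stays out of training until someone confirms the rights.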

Tran.vc helps founders get these terms right early, because clean IP and clean data rights are part of being fundable. If you want help setting up the basics, apply here: https://www.tran.vc/apply-now-form/

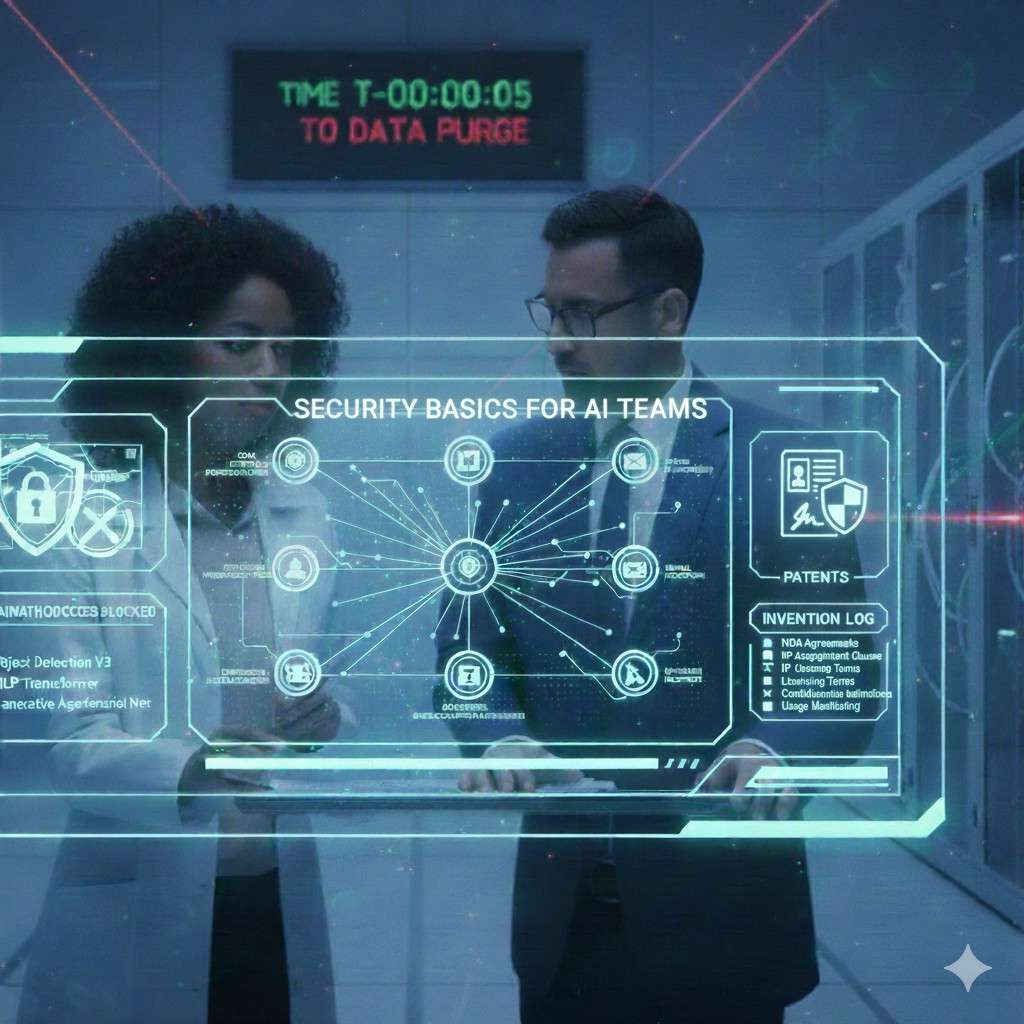

Security Basics for AI Teams

Protect access like you protect money

It is easy to treat access as a small detail. But access is the front door to your model and data. Use strong identity controls, require multi-factor authentication, and remove access quickly when someone leaves. These are not “enterprise” habits. They are survival habits.

The goal is not to build a fortress. The goal is to stop easy mistakes. Most attackers do not break in through a wall. They walk in through an open door.
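"Remove access quickly when someone leaves" is easier to enforce with a regular automated comparison between access grants and your team roster. This is a hypothetical sketch: the grant and roster shapes are assumptions, and real data would come from your cloud IAM and HR system exports. Unknown users are treated as already gone, and expired grants are flagged too.

```python
from datetime import date


def stale_grants(grants, roster, today, grace_days=0):
    """Return grants held by people who have left, or grants that have expired.

    grants: list of dicts with 'user', 'resource', and optional 'expires' (date).
    roster: dict of user -> end date (None while the person is still on the team).
    """
    stale = []
    for g in grants:
        end = roster.get(g["user"], date.min)  # unknown users count as long gone
        left = end is not None and (today - end).days > grace_days
        expired = g.get("expires") is not None and g["expires"] < today
        if left or expired:
            stale.append(g)
    return stale
```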

Separate environments to reduce blast radius

If dev, test, and production all share the same data and keys, one mistake can spread everywhere. A simple separation reduces harm. Development can use safe samples. Testing can use limited data. Production can use real data with tighter controls.

This keeps the team fast while keeping the crown jewels less exposed. When something goes wrong, you limit how far it can go.
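The separation can be checked mechanically before any job starts. In this sketch, each environment may read only up to its own data tier and may use only its own secrets. The environment names, tier names, and ranking are hypothetical and would mirror however you actually split storage and keys.

```python
# Hypothetical map of which data tier and secret scope each environment may use.
ENVIRONMENTS = {
    "dev": {"data_tier": "dev_sample", "secrets": "dev"},
    "test": {"data_tier": "limited", "secrets": "test"},
    "prod": {"data_tier": "gold", "secrets": "prod"},
}
TIER_RANK = {"dev_sample": 0, "limited": 1, "gold": 2}


def validate_job(env, requested_tier, secret_scope):
    """Return a list of problems; an empty list means the job stays in its lane."""
    cfg = ENVIRONMENTS[env]
    problems = []
    if TIER_RANK[requested_tier] > TIER_RANK[cfg["data_tier"]]:
        problems.append(f"{env} may not read {requested_tier} data")
    if secret_scope != cfg["secrets"]:
        problems.append(f"{env} job is using {secret_scope} secrets")
    return problems
```

A job that fails this check never gets the chance to copy gold data into a dev bucket, which is exactly the blast radius you want to limit.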

Treat third-party tools as potential leak paths

AI teams love tools. Label tools, logging tools, notebook tools, evaluation tools, collaboration tools. Each tool is also a place data can land. Before you upload sensitive data into any tool, make sure you know who can access it, where it is stored, and what the vendor can do with it.

This is not about avoiding tools. It is about being intentional. The easiest leaks happen when someone connects a new tool in ten minutes and forgets it exists.