Regulated AI is not like regular software.

When your AI touches money, health, hiring, safety, or people’s rights, the rules change. People will ask hard questions. Auditors will ask for proof. Customers will want to know what you did, when you did it, and why you did it. If you cannot show it, they will treat it like it never happened.

That is why documentation is not “extra work” in regulated AI. It is part of the product.

Here is the good news. You do not need to write a giant novel. You just need to keep the right things, in the right way, so you can answer four simple questions at any time:

What does the system do?

What data did it learn from and use?

How do you know it is safe and fair enough?

Who approved what, and when?

If you can answer those four questions quickly, you look like a serious team. You move faster in sales. You reduce legal risk. You avoid weeks of back-and-forth with enterprise buyers. And if you plan to patent key parts of your AI, strong records also help you prove what you built and when you built it.

Tran.vc works with technical teams who want to build real moats early, not just demos. If your AI has a novel method, a novel pipeline, or a novel way to reduce risk, it may be protectable. You can apply anytime here: https://www.tran.vc/apply-now-form/

In this guide, we will keep it simple. You will learn what to keep, what “good enough” looks like, and how to set up documentation that does not slow you down.

Before we go deeper, one mindset shift: documentation is not a pile of files. It is a story your product can defend. A clear story that starts at the problem, shows the choices you made, and ends at the proof that your system behaves the way you claim.


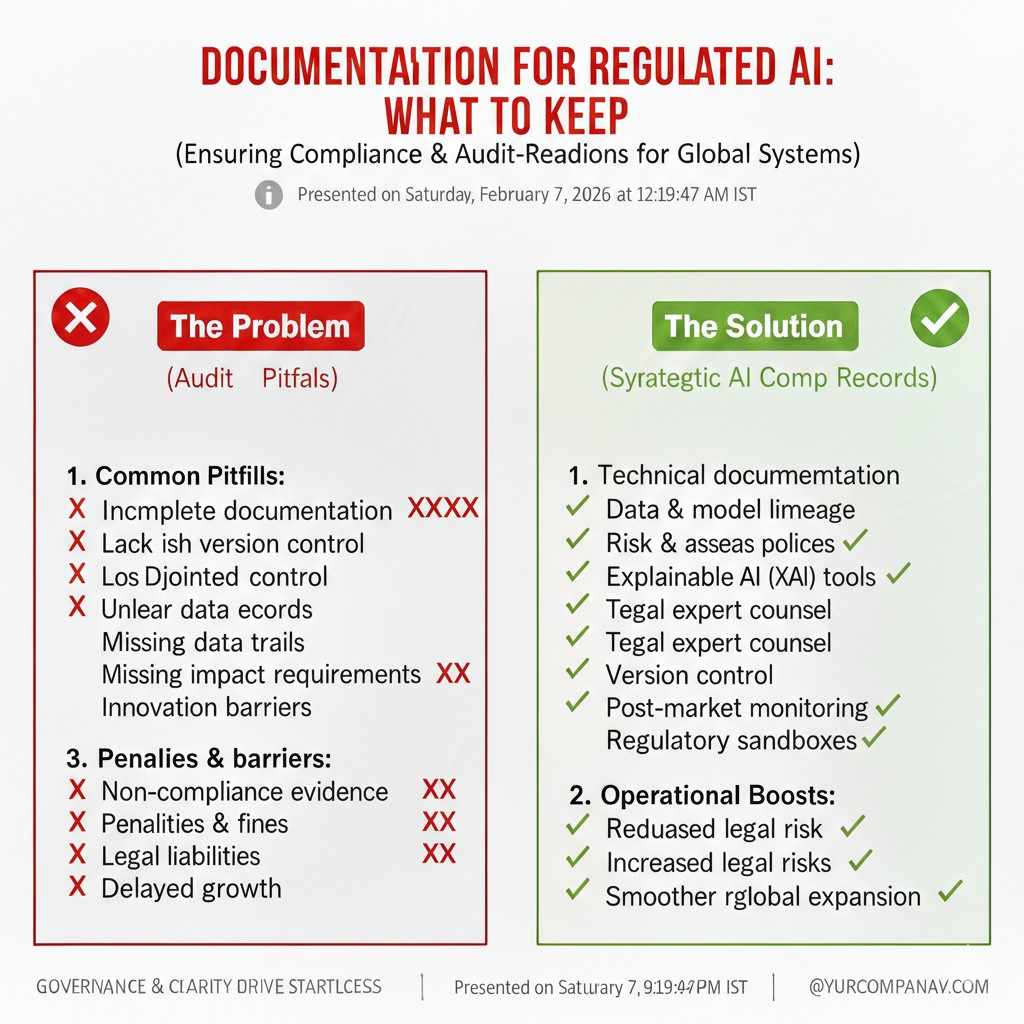

Documentation for Regulated AI: What to Keep

Why this matters in real life

Regulated AI is not judged by how smart it seems in a demo. It is judged by what you can prove when someone asks. A buyer, a regulator, or an internal risk team will not accept “trust us” as an answer. They want to see your records, your tests, your choices, and who signed off.

If you cannot show these things, the deal often slows down or dies. Even if your model is strong, weak documentation makes you look risky. Good documentation does the opposite. It makes you look careful, ready, and worth betting on.

This is also how small teams beat bigger teams. When you can answer questions fast, you close faster. You also avoid painful rework when rules tighten or a customer changes what they need.

What “documentation” really means

Documentation is not just a set of notes in a folder. It is a set of facts that lets another person follow your work. They should be able to see what you built, what data you used, what you tested, and what you decided.

Think of it like a clean trail in the snow. When someone walks behind you, they should see where you went. They should also see where you chose not to go, and why.

That trail becomes your protection. It protects your team when things go wrong. It protects your company when someone questions your results. It protects your reputation when you scale.

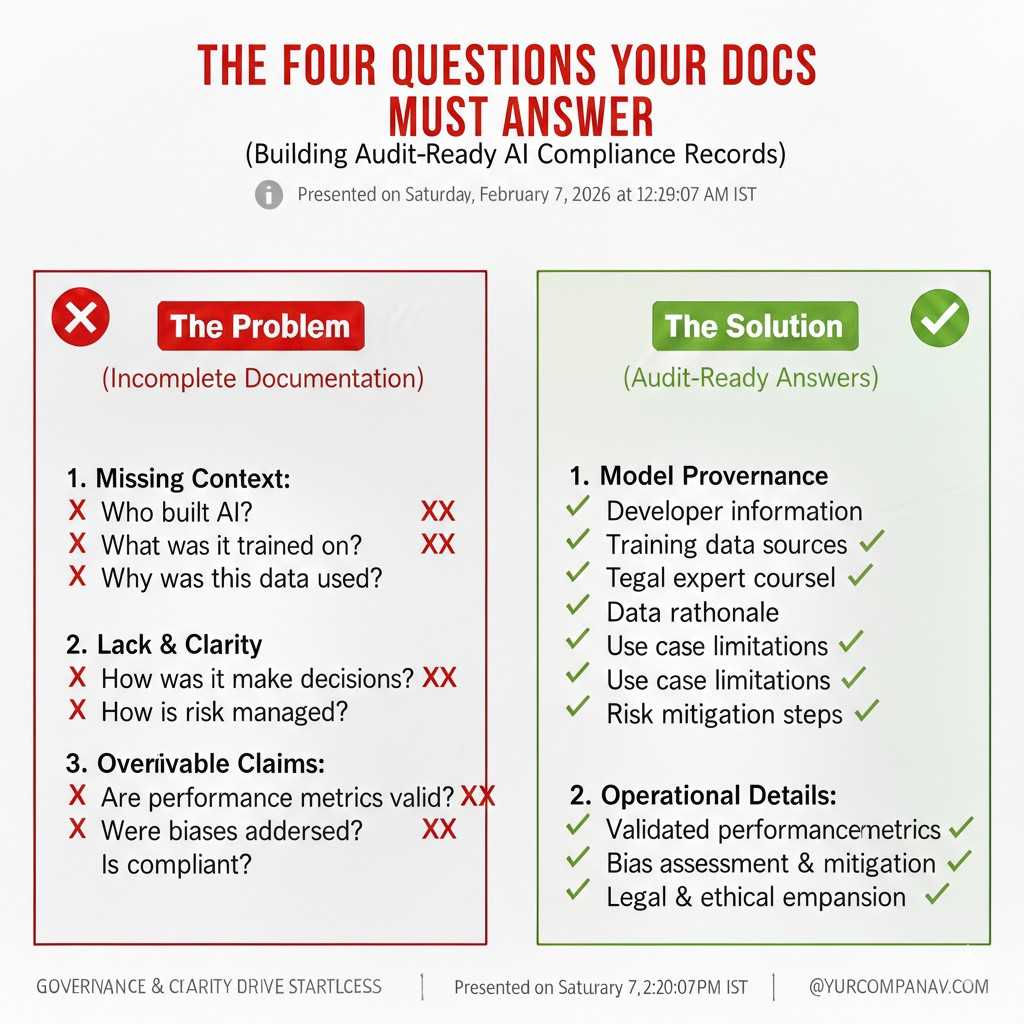

The four questions your docs must answer

Almost every review of regulated AI comes back to four questions. What does the system do, and where is it used? What data trained it, and what data does it touch in production? How do you know it behaves well enough, even on bad days? And who approved decisions along the way?

If your documentation can answer those clearly, you will feel the difference right away. Sales calls become simpler. Security reviews move faster. Legal teams relax. You stop digging through old chat threads looking for what happened.

This guide is built around those questions. You will keep fewer things than you think, but you will keep them in a way that is hard to argue with.

A quick note on IP and patents

Many teams do not realize how closely documentation and IP connect. If you have a new method, a new training approach, a new safety layer, or a new system design, you may want to protect it. Clear records help you explain what is unique and when it was created.

Tran.vc helps deep tech and AI founders build that moat early through in-kind patent and IP services, up to $50,000 worth. If you are building regulated AI, you are often building valuable know-how. You can apply anytime here: https://www.tran.vc/apply-now-form/

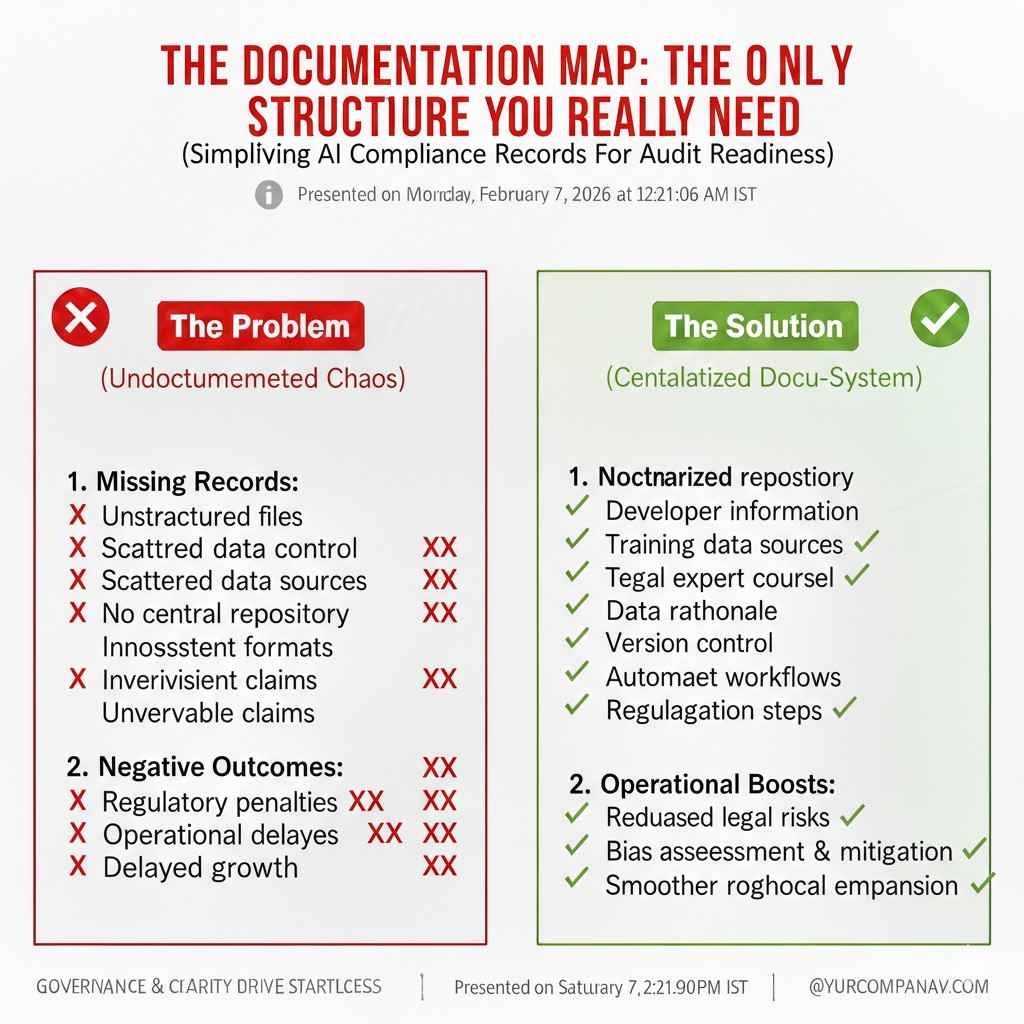

The documentation map: the only structure you really need

The goal of the map

Most teams fail at documentation because they try to document everything. That is not realistic. The better approach is to create a small map of “must-keep” records. Then you make it easy to add to that map as you build.

The map should fit your product and your risk level. A medical model needs more proof than a marketing model. A model used for hiring needs stronger fairness records than a model used for internal search.

But the shape stays the same across most regulated AI systems. You keep records about the system, the data, the model, the tests, and the decisions people made.

The six buckets to organize everything

A simple way to keep order is to use six buckets. Product and use case records. Data records. Model records. Evaluation and monitoring records. Security and privacy records. Governance records, meaning who approved what.

You do not need fancy tools to start. A clean repo, a shared drive with clear naming, and a habit of writing things down as you go will get you far. The key is that your team can find things in minutes, not days.

When you set this up early, you stop losing knowledge. You also stop relying on one person’s memory. That matters a lot when you hire, when you hand off work, or when someone leaves.

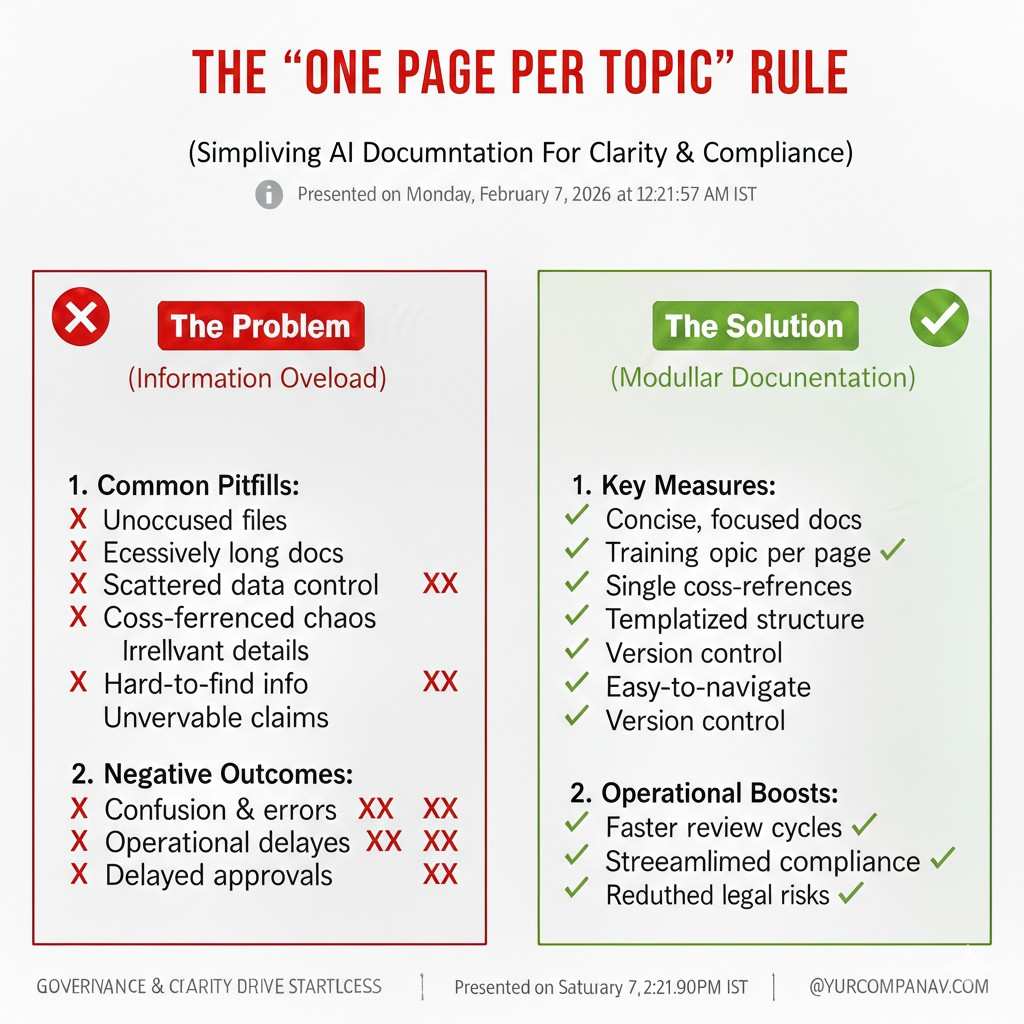

The “one page per topic” rule

A practical rule that keeps teams sane is this: for each topic, have one living page that points to the deeper records. That page is not a wall of text. It is a clear summary with links to tests, datasets, and decisions.

This makes audits easier because the reviewer sees the story first. Then they can drill down. It also helps your own team because you stop writing the same explanation in ten places.

It is the same idea as good code. You keep it readable. You keep it tidy. You make it easy to trace.

System documentation: what the AI is and what it is not

The system’s purpose and boundaries

Start with a clear description of what your system does. Write it in plain words. Say who uses it, what it helps them decide, and what the output means. If it makes a score, explain the score. If it makes a recommendation, explain how it should be used.

Then write what it does not do. This is not about being negative. This is about protecting users and protecting your company. Many failures happen because people use AI outside its safe zone.

This section should also include the “impact” of the system. Does it affect health, money, jobs, safety, or rights? If yes, say so clearly. This is often how risk teams decide the level of review.

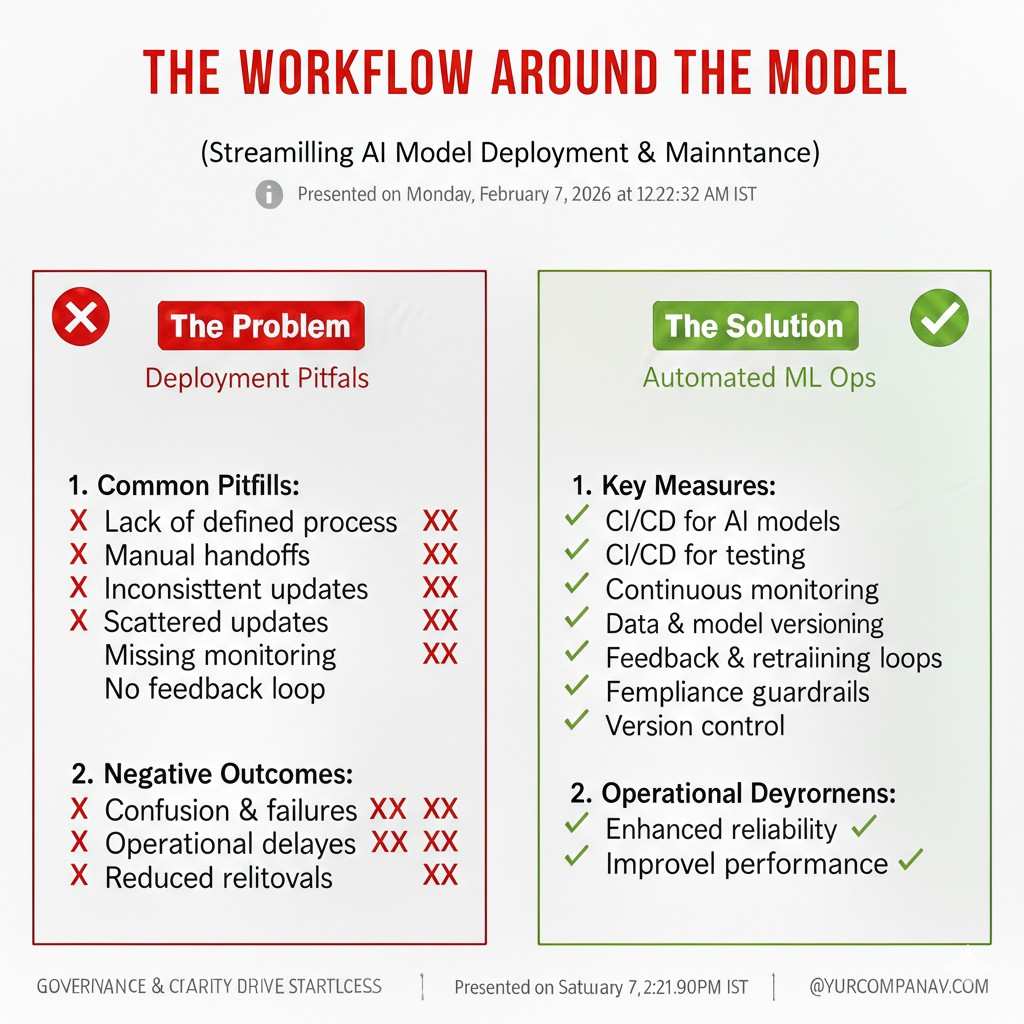

The workflow around the model

Regulated AI is almost never “just a model.” There is data coming in, data being cleaned, features being made, prompts being built, thresholds being applied, and humans sometimes approving outputs. Document the full workflow.

A simple diagram helps, but a clear written flow is fine too. The important part is that someone can see where the model sits and what controls exist around it. Many rules care about the full system, not only the model.

Also document what happens when the model is unsure. Does it ask for human review? Does it refuse to answer? Does it give a warning? These details matter because this is where risk is reduced.
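One way to make the "unsure" behavior easy to document is to keep it in a single small routing function. The sketch below is illustrative only: the `Action` names and both threshold values are assumptions, and it presumes your model exposes a confidence score between 0 and 1.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    WARN = "answer_with_warning"
    HUMAN_REVIEW = "human_review"


# Hypothetical thresholds; real values should come from your own evaluation.
REVIEW_BELOW = 0.60
WARN_BELOW = 0.85


def route(confidence: float) -> Action:
    """Decide what happens to an output given the model's confidence score."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < REVIEW_BELOW:
        return Action.HUMAN_REVIEW
    if confidence < WARN_BELOW:
        return Action.WARN
    return Action.ANSWER
```

Keeping the thresholds as named constants means a reviewer can see the rule in one place, and any change to them shows up in version control.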

Intended users and training needs

Write down who should use the system and what training they need. If a nurse will use it, the interface and training will differ from what a data scientist needs. If a call center agent will use it, you may need guardrails and scripts.

This is also where you explain any warnings shown in the product. If the tool is not meant to be used as a final decision maker, say that. If it must be paired with a human check, say that.

Many regulated settings require that users understand the limits of the tool. If you never document the limits, it is hard to defend later.

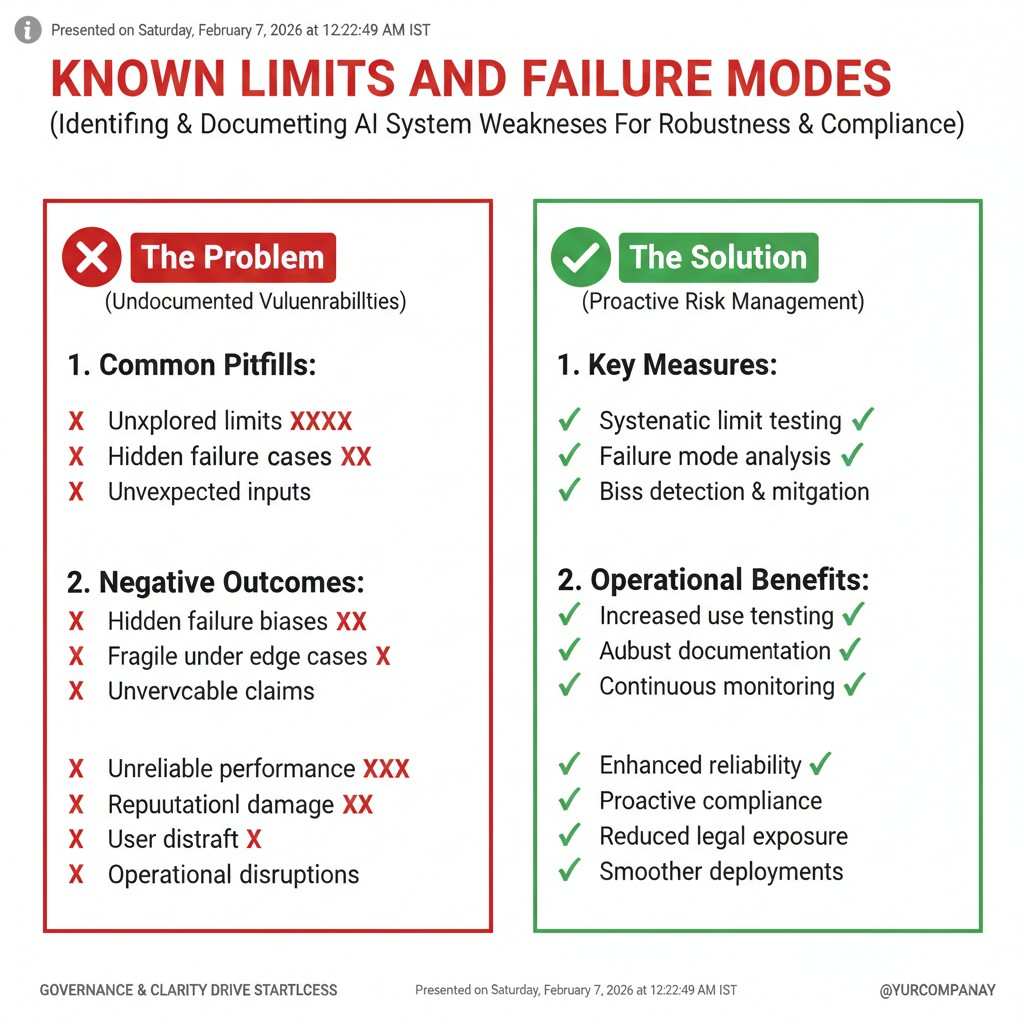

Known limits and failure modes

Every model has places where it performs worse. Document these places early and update them often. It could be certain groups, certain languages, certain sensors, certain edge cases, or certain rare events.

Do not hide this. Clear limits build trust. Buyers do not expect perfection. They expect honesty and control.

Also keep a record of “known bad outputs.” If your system has a risk of hallucinating facts, record that and record how you reduce it. If it can amplify bias, record that and show the mitigation steps.

Data documentation: the heart of regulated AI

Data sources and rights to use them

Write down where each dataset came from. Include links, owners, and the terms under which you are allowed to use it. If it is scraped, be careful and document the legal basis. If it is licensed, keep the license. If it is customer data, keep the contract terms.

Regulated AI reviews often start here. If you cannot show rights to use data, everything else becomes harder. It is also a common place where deals break.

Also record whether data includes personal data. If it does, document how you store it, how you protect it, and how long you keep it.

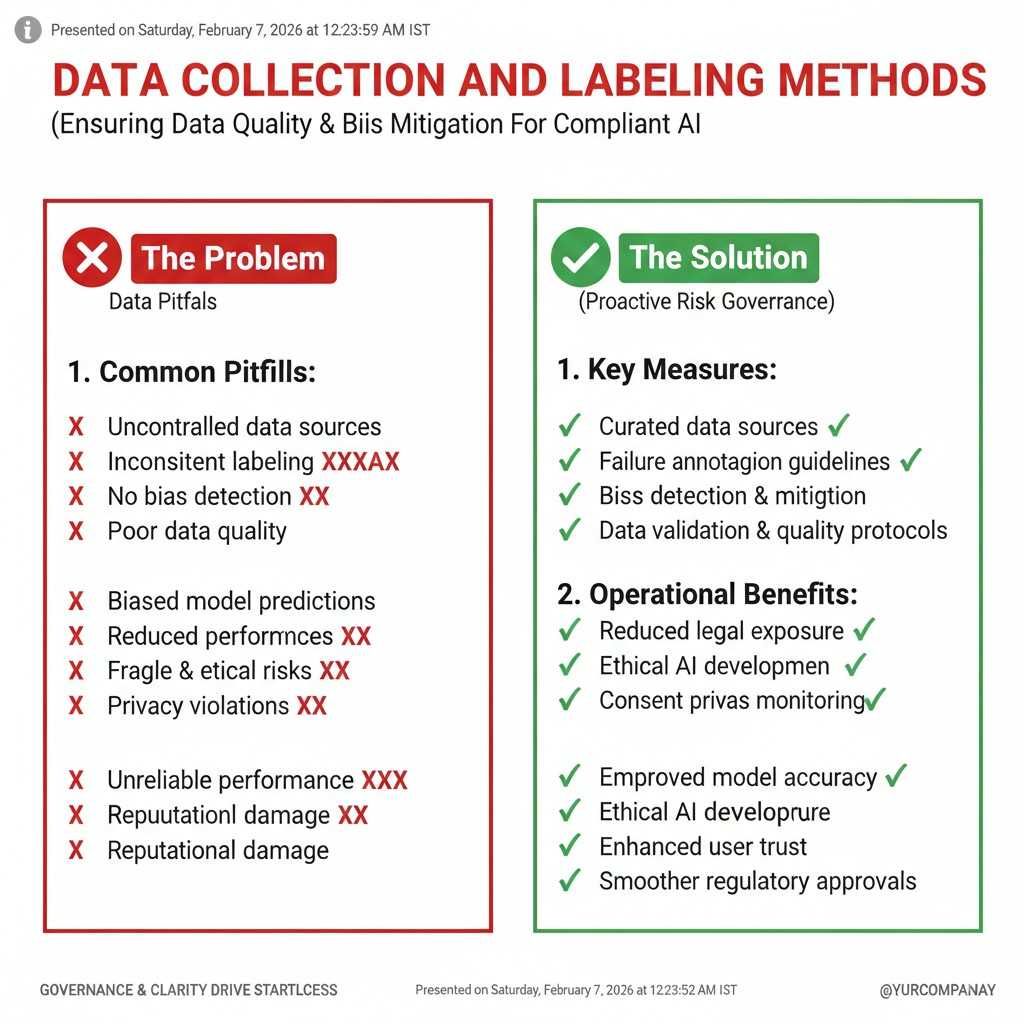

Data collection and labeling methods

If your team collected data, describe the process. How did you decide what to collect? What was included and excluded? How did you prevent obvious skew? If you used labeling, document who labeled, the guidelines used, and how you checked quality.

Labeling is a hidden source of risk. Two labelers can read the same item and choose different labels. That means your dataset might be noisy. Noise can become unfairness or unsafe behavior later.

Keep your labeling guide as a versioned document. When the guide changes, record what changed. This is important because model behavior can shift when labels shift.

Data cleaning and transformations

Most real-world datasets are messy. You likely remove duplicates, handle missing values, normalize formats, or filter out unwanted content. Document these steps.

This does not need to be a long essay. It can be a short note plus the code link. The point is traceability. If a regulator asks why a model failed for a certain group, you can check if the cleaning step removed too much of that group’s data.

Also record any synthetic data you use. Explain why you used it and how you validated it. Synthetic data can help, but it can also hide problems if used carelessly.

Dataset “facts”: what is in it and what is missing

You need a simple dataset summary that answers basic questions. How big is it? What time period does it cover? What regions does it cover? What groups are represented? What labels exist? What percentage of labels is missing?
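A summary like this can be generated rather than typed by hand. The following is a minimal sketch, assuming each record is a dictionary with hypothetical `date`, `region`, and `label` keys; your own schema will differ.

```python
from datetime import date


def summarize(records: list[dict]) -> dict:
    """Build a small fact sheet from records with 'date', 'region', 'label' keys."""
    if not records:
        raise ValueError("dataset is empty")
    dates = [r["date"] for r in records]
    missing = sum(1 for r in records if r["label"] is None)
    label_counts: dict[str, int] = {}
    for r in records:
        if r["label"] is not None:
            label_counts[r["label"]] = label_counts.get(r["label"], 0) + 1
    return {
        "rows": len(records),
        "first_date": min(dates).isoformat(),
        "last_date": max(dates).isoformat(),
        "regions": sorted({r["region"] for r in records}),
        "label_counts": label_counts,
        "missing_label_pct": round(100 * missing / len(records), 1),
    }


# Made-up sample rows, for illustration only.
sample = [
    {"date": date(2024, 1, 5), "region": "EU", "label": "approve"},
    {"date": date(2024, 3, 9), "region": "US", "label": None},
    {"date": date(2024, 2, 1), "region": "EU", "label": "deny"},
    {"date": date(2024, 2, 20), "region": "US", "label": "approve"},
]
facts = summarize(sample)
```

Regenerating the fact sheet on every dataset release keeps the summary from drifting away from the data it describes.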

Also describe what is not present. If you do not have enough data for a certain group, write that down. If you do not have data for certain scenarios, write that down.

This helps you set honest expectations. It also helps you plan what to collect next.

Data versioning and change logs

In regulated AI, data changes are often more important than code changes. Keep a version for each dataset release. Record what changed and why. Record who approved it.

This matters because a model trained on new data can behave differently, even if the code is the same. If you cannot prove which data version trained a model, you will struggle to explain incidents.

A simple change log is enough. It just needs to be real and kept up to date.
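As a rough illustration, a change log entry can tie a version label to a content hash, so you can later prove which exact data a version refers to. The field names, version string, and sample values below are assumptions, not a standard format.

```python
import hashlib
import json
from datetime import datetime, timezone


def dataset_fingerprint(data: bytes) -> str:
    """Return a SHA-256 hex digest that identifies this exact dataset content."""
    return hashlib.sha256(data).hexdigest()


def changelog_entry(version: str, data: bytes, what_changed: str,
                    why: str, approved_by: str) -> str:
    """Return one JSON line suitable for appending to a change log file."""
    entry = {
        "version": version,
        "sha256": dataset_fingerprint(data),
        "what_changed": what_changed,
        "why": why,
        "approved_by": approved_by,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(entry, sort_keys=True)


# Made-up values, for illustration only.
line = changelog_entry(
    version="2024.03.1",
    data=b"id,label\n1,approve\n2,deny\n",
    what_changed="Removed duplicate rows",
    why="Duplicates inflated one label",
    approved_by="data-lead@example.com",
)
```

Appending one such line per release to a file in the repo is usually enough to answer "which data trained this model" months later.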

Model documentation: how you built it and why you chose it

Model choice and the reason behind it

Document which model family you used and why. If you chose a smaller model for speed and control, say so. If you chose a larger model for accuracy, say so. If you used an open model for privacy reasons, say so.

Regulated buyers often ask: why this model, not another. They want to see that you made a thoughtful choice based on risk and need, not hype.

Also document alternatives you considered. You do not need ten pages. A short note is fine. The point is to show your decision process.

Training setup and key settings

Keep a record of how the model was trained or fine-tuned. Record the code commit, the compute setup, key settings, and the training run ID. Record the random seed if that is relevant.

If you use prompts and retrieval instead of training, document that too. Keep the prompt versions and retrieval settings. For many LLM systems, the prompt is part of the “model” behavior.

This is where you stop future confusion. Months later, you will not remember which run shipped. Your records will.
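A training record does not need a special tool. Here is a minimal sketch of one stored as JSON next to the model artifact; the `TrainingRun` class and every value in the example are hypothetical.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass(frozen=True)
class TrainingRun:
    run_id: str
    code_commit: str
    dataset_version: str
    compute: str
    random_seed: int
    settings: dict = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize the record so it can be saved alongside the model file."""
        return json.dumps(asdict(self), sort_keys=True, indent=2)


# Made-up values, for illustration only.
run = TrainingRun(
    run_id="run-0042",
    code_commit="3f2a9c1",
    dataset_version="2024.03.1",
    compute="1x GPU, 16 GB",
    random_seed=42,
    settings={"learning_rate": 0.0003, "epochs": 5},
)
manifest = run.to_json()
```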

Features, prompts, and retrieval logic

If you use features, document what they represent. If you use embeddings and retrieval, document the sources used for retrieval. If you use prompt templates, document the template and the system rules.

This matters because regulators care about what information shaped the output. If your model uses a knowledge base, that knowledge base becomes part of the system. It must be governed like data.

Also keep records of prompt changes. Small prompt changes can create large behavior changes. If you do not track them, you cannot explain drift.
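One lightweight way to track prompt changes is to derive a version ID from the prompt text itself, so that any edit produces a new ID you can log with each output. This is a sketch under that assumption; the 12-character length and the example prompts are arbitrary choices.

```python
import hashlib


def prompt_version(template: str) -> str:
    """Short content hash: any edit to the template yields a new version ID."""
    normalized = template.strip().replace("\r\n", "\n")
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


# Made-up prompts, for illustration only.
v1 = prompt_version("You are a claims assistant. Cite the policy section.")
v2 = prompt_version("You are a claims assistant. Always cite the policy section.")
```

Because a single added word changes the ID, a shift in behavior can be matched to the exact prompt that was live at the time.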

Guardrails and post-processing

Most regulated AI systems include controls after the model produces output. This can be thresholding, filtering, policy checks, or rules that limit what can be said.

Document these controls clearly. Explain what they block and why. Explain how they are tested. If they are tuned, record how tuning happens and who approves it.

These controls are also part of your moat. If you have a novel safety layer or a novel way to reduce harmful outputs, that can be a patentable asset in some cases.

If you want to build that IP foundation early, you can apply anytime here: https://www.tran.vc/apply-now-form/

Evaluation documentation: proof that it works well enough

The baseline metrics that matter

Regulated AI is not only about accuracy. It is about the right measures for the harm you want to prevent. Document what you measure and why those measures match the risk.

If you are scoring credit risk, you care about false negatives and false positives separately, because each kind of error harms someone different. If you are helping doctors, you care about missed cases and unsafe suggestions. If you are screening candidates, you care about fairness across groups.

Your evaluation plan should be written before you ship. That way, you are not picking measures that make you look good after the fact.

Test sets that reflect real use

Keep a record of how you built test sets. Record whether they reflect real use, rare cases, and different user groups. Document if any test data overlaps with training data.

In regulated settings, people will ask about leakage and overfitting. If you can show clean splits and clean methods, you reduce doubt.
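A basic overlap check between training and test data can be a few lines of code. The sketch below assumes text examples and only catches exact or near-exact duplicates (case and whitespace differences); it will not detect paraphrases or other subtler leakage.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Hash a lightly normalized example so trivial variants still match."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_overlap(train: list[str], test: list[str]) -> list[str]:
    """Return test examples whose normalized text also appears in train."""
    train_hashes = {fingerprint(t) for t in train}
    return [t for t in test if fingerprint(t) in train_hashes]


# Made-up examples, for illustration only.
leaked = find_overlap(
    train=["Loan denied  due to income", "Claim approved"],
    test=["loan denied due to income", "New unseen case"],
)
```

Saving the result of this check with each evaluation report gives you something concrete to show when someone asks about leakage.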

Also keep examples of “hard cases.” These are cases where your model struggles. Showing that you test hard cases signals maturity.

Fairness checks and group performance

If the system impacts people, you need to measure performance across groups where possible and lawful. Document which groups you measure, how you define them, and what you found.

Be careful with sensitive traits. Different regions have different rules about collecting or using them. Still, the principle is the same: you need some way to check whether harm is uneven.

Document what you did if you saw gaps. Did you collect more data? Did you adjust thresholds? Did you add human review? Did you change the product flow? These choices matter as much as the numbers.
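To make group checks concrete, here is a small sketch that computes recall per group and the largest gap between groups. Recall is only one possible measure and is an assumption here; the right metric depends on the harm you are trying to prevent, and the group labels and rows are made up.

```python
from collections import defaultdict


def recall_by_group(rows: list[tuple[str, int, int]]) -> dict[str, float]:
    """rows are (group, y_true, y_pred); returns recall per group."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in rows:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def max_gap(per_group: dict[str, float]) -> float:
    """Largest difference in the metric between any two groups."""
    return max(per_group.values()) - min(per_group.values())


# Made-up rows, for illustration only.
rows = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 1),
]
per_group = recall_by_group(rows)
gap = max_gap(per_group)
```

Recording the per-group numbers and the gap for every release, together with what you did about it, is the record reviewers tend to ask for.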

Safety testing and abuse testing

Regulated AI should be tested like someone is trying to break it, because they will. Keep records of abuse tests, prompt injection tests, data poisoning risks, and other ways your system can fail.

If you do not have a red team, you can still do basic “attack” sessions internally. Document what you tried, what happened, and what you changed.

The record is important. It shows you did not wait for an incident to learn.

Human review and quality checks

Many regulated systems use a “human in the loop.” If you do, document when humans step in, what they check, and how their decisions are recorded.

Also document training for reviewers. If reviewers are inconsistent, they can create risk. If you have guidelines, keep them versioned like labeling guides.

Human review is not a magic fix. It must be designed and measured. Documentation is how you prove it is real, not just claimed.

Monitoring documentation: what happens after you ship

What you watch in production

A model that looks good in testing can still fail in real life. So you need monitoring. Document what signals you watch, how often you review them, and what triggers action.

This can include performance metrics, drift checks, error rates, user feedback, and safety events. It can also include system uptime and latency if those affect safety.

The key is that monitoring is planned. You are not scrambling after a complaint.
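A monitoring plan can be written down as data: each signal, its threshold, and which direction counts as bad. The sketch below is one possible shape, with hypothetical signal names and threshold values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    value: float
    threshold: float
    higher_is_worse: bool = True

    def breached(self) -> bool:
        """True when the current value is on the wrong side of the threshold."""
        if self.higher_is_worse:
            return self.value > self.threshold
        return self.value < self.threshold


def alerts(signals: list[Signal]) -> list[str]:
    """Names of all signals that should trigger action."""
    return [s.name for s in signals if s.breached()]


# Made-up readings, for illustration only.
today = [
    Signal("error_rate", value=0.07, threshold=0.05),
    Signal("accuracy", value=0.91, threshold=0.90, higher_is_worse=False),
    Signal("flagged_outputs_per_1k", value=3, threshold=2),
]
triggered = alerts(today)
```

When the thresholds live in a versioned file like this, the plan itself becomes part of your documentation.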

Incident records and response steps

Keep an incident log. When something goes wrong, record the date, what happened, who found it, who responded, and what was changed.

Also record what you learned and how you prevent the same issue. This is often called a post-incident review, but you can keep it simple.
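The fields listed above map directly onto a small record type. This is a minimal sketch with a hypothetical `Incident` class and made-up values; a shared spreadsheet with the same columns would serve the same purpose.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date


@dataclass
class Incident:
    occurred_on: date
    what_happened: str
    found_by: str
    responded_by: str
    what_changed: str
    lessons: str
    prevention: str

    def to_log_line(self) -> str:
        """One JSON line per incident, ready to append to an incident log."""
        record = asdict(self)
        record["occurred_on"] = self.occurred_on.isoformat()
        return json.dumps(record, sort_keys=True)


# Made-up incident, for illustration only.
entry = Incident(
    occurred_on=date(2024, 4, 2),
    what_happened="Model returned outdated policy text",
    found_by="support agent",
    responded_by="on-call engineer",
    what_changed="Refreshed the retrieval index",
    lessons="Index refresh was manual and got skipped",
    prevention="Scheduled a weekly refresh with an alert on failure",
).to_log_line()
```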

In regulated sales, the way you handle incidents matters. Mature incident records can save a deal.

Model updates and release approvals

Every time you update the model, document what changed and why. Link the change to the data version, the code version, and the evaluation report.

Also document approvals. In many settings, it matters that a qualified person reviewed the update. Even if you are a small startup, you can set a simple rule that someone other than the author signs off.

This is not red tape. It is a safety habit that scales with you.
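The sign-off rule can be enforced with a simple check before release. The sketch below assumes a hypothetical `Release` record; it verifies that the data version, code version, and evaluation report are all linked, and that the approver is not the author.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Release:
    model_version: str
    data_version: str
    code_commit: str
    eval_report: str
    author: str
    approved_by: str


def check_release(r: Release) -> list[str]:
    """Return a list of problems; an empty list means the record is complete."""
    problems = []
    for name in ("data_version", "code_commit", "eval_report"):
        if not getattr(r, name).strip():
            problems.append(f"missing {name}")
    if not r.approved_by.strip():
        problems.append("missing approval")
    elif r.approved_by.strip().lower() == r.author.strip().lower():
        problems.append("approver must differ from author")
    return problems


# Made-up release records, for illustration only.
good = Release("m-1.4", "2024.03.1", "3f2a9c1", "reports/eval-1.4.md", "ana", "ben")
self_approved = Release("m-1.5", "2024.04.0", "9b1d0e7", "", "ana", "Ana")
```

Running a check like this in your release pipeline turns the habit into something that cannot be skipped by accident.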
