Export controls sound like a topic for big defense firms and government teams.

But if you build AI or robots, it can become your problem much earlier than you think.

Because the same model that helps a factory run smoother can also help a drone fly better. The same robot arm that packs boxes can also handle parts for weapons. The same vision system that spots defects can also track people.

That is what “dual-use” means. It is tech that can be used for a normal job, and also for harm.

And export controls are the rules that say: some tech cannot be shared, shipped, trained, hosted, or even shown to certain places, people, or groups—without the right steps.

This matters even if you are “just a startup.” Even if you are “not selling overseas.” Even if you are “only using cloud tools.”

Here is why.

If you share controlled tech the wrong way, it can lead to big fines, blocked deals, lost investors, and in some cases, criminal risk. But the bigger day-to-day pain is simpler: export risk can quietly kill growth. It can slow down pilots. It can stop a customer contract. It can make a strong hire impossible. It can force you to rebuild your stack when it is already in production.

So the goal is not fear. The goal is control.

When you understand export controls early, you can:

- Build your product so it is easier to sell later

- Set clean rules for your team so you do not “accidentally export”

- Pass diligence faster when investors and acquirers ask hard questions

- Keep your options open with partners, cloud providers, and global customers

This guide is written for founders and builders. Not lawyers. Not policy people.

We will talk about what dual-use risk looks like in real AI and robotics work, where export problems show up, and what you can do now—without slowing down your team.

And if you are building deep tech and you want to protect what you are making, Tran.vc can help you turn your core work into strong IP early, before you raise your seed round. You can apply anytime here: https://www.tran.vc/apply-now-form/

Before I continue, quick note on scope: export control rules change often and differ by country. This is not legal advice. It is a founder-friendly map so you know what to watch and when to bring in an expert.

Here is what we will cover:

- What “export” really means (it is more than shipping boxes)

- The main rule sets founders run into

- The most common dual-use traps in AI and robotics

- A practical way to reduce risk without killing speed

Export Controls and Dual-Use Risk for AI & Robotics

Why this matters much earlier than you think

If you build AI or robots, export controls can touch you long before you “go global.” Many teams hit this topic when a customer asks a simple question like, “Where will the model be trained?” or “Who will see the source code?” That is when you learn that export rules are not only about shipping hardware across borders.

The reason is simple. AI and robotics are often “dual-use.” That means the same tool can help normal work and also help harmful work. Governments treat that kind of tech with extra care. So even early product demos, cloud setups, and overseas hires can create risk if you do not plan for it.

Export controls can feel unfair to a small team moving fast. But the rules do not care if you are a startup. They care about what the tech can do, where it goes, and who can access it. The good news is that a founder can reduce risk with clear choices, without slowing progress.

Tran.vc works with AI, robotics, and deep tech teams from day one. If you want to build a strong moat with patents and smart IP planning while you also stay clean for diligence, you can apply anytime at https://www.tran.vc/apply-now-form/

What “dual-use” looks like in plain terms

Dual-use is not about your intent. It is about capability. A model that improves navigation can also improve navigation for a military drone. A robot that can pick items can also pick sensitive parts. A vision system that tracks motion can also track people.

Founders often think, “We are not building weapons, so we are fine.” But export reviews often start with “Could this be used for weapons, surveillance, or cyber harm?” If the answer is “maybe,” you need to treat it seriously, even if your real market is safe and normal.

This is not meant to scare you. It is meant to help you avoid a late surprise. Most export problems are not dramatic. They show up as delays, blocked payments, a partner that walks away, or a contract that dies in legal review.

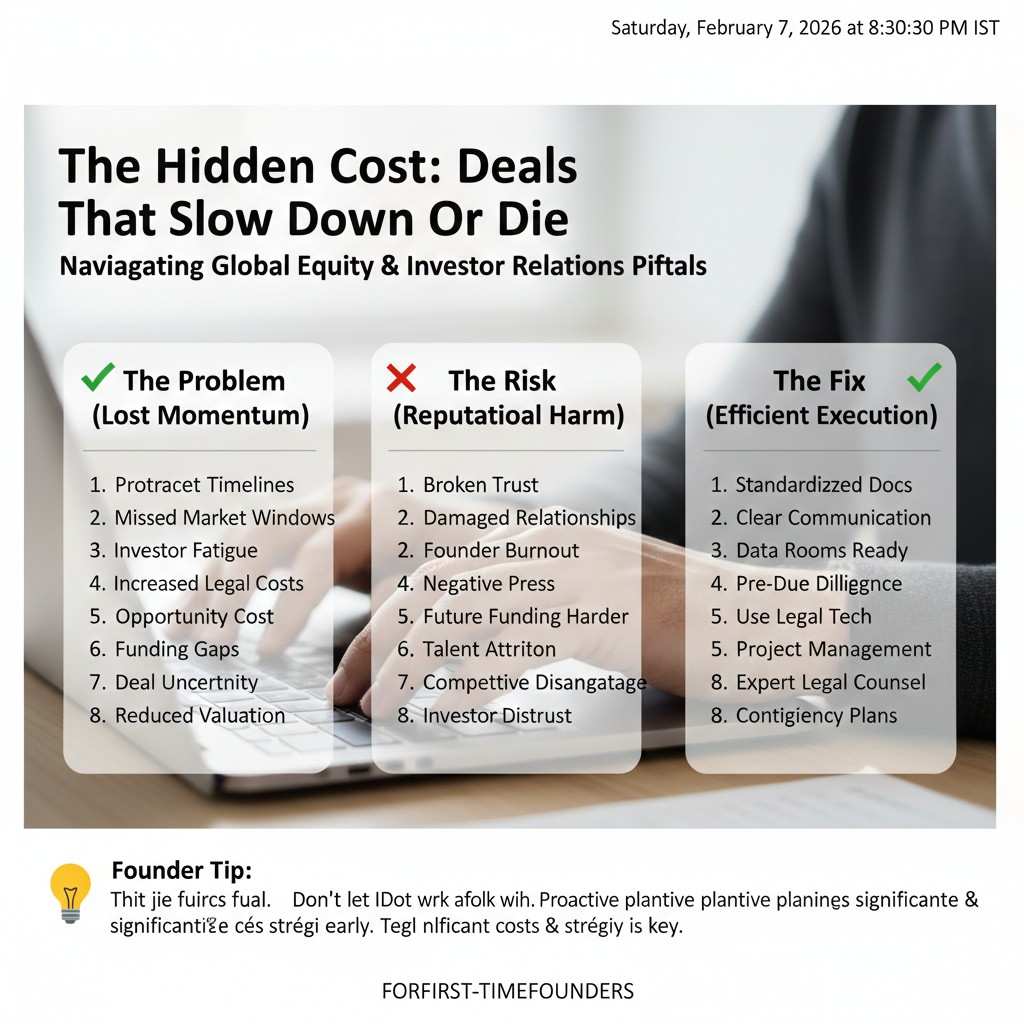

The hidden cost: deals that slow down or die

In a pilot phase, time matters. You may have one champion inside a company who is pushing hard for you. If their compliance team asks export questions and you have no answers, the deal can freeze. That freeze often looks like “We will circle back next quarter,” which is a polite way of saying “not now.”

Investors also ask these questions more often than founders expect. A strong seed investor may want to know if your tech could trigger export licensing later. They do not want to fund a product that can only sell in a small set of places. If you can show you have thought about this, you look far more mature than your stage.

What you will learn in this guide

We will make a clear split between what export controls are, what dual-use risk is, and how they connect to AI and robotics work. We will also talk about the main ways a startup “exports” without noticing, like letting someone abroad access a repo or sharing weights with a vendor.

You will also get practical steps you can use right away. Not a long checklist. Just founder-level moves that reduce risk, keep your team fast, and make your company easier to fund and sell later.

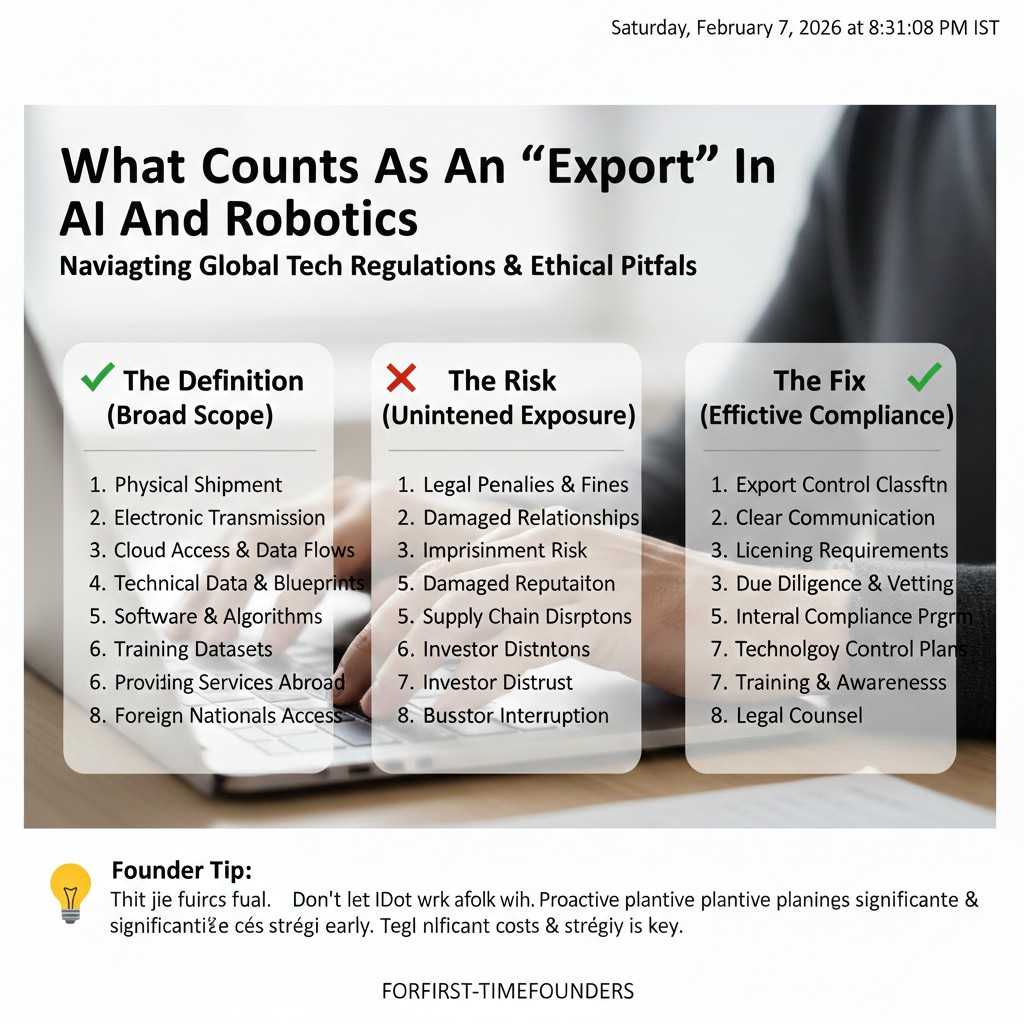

What counts as an “export” in AI and robotics

Shipping hardware is only one small part

Most founders picture export as a box crossing a border. That is real, but it is not the full story. In modern AI and robotics, the bigger risk is often digital. Code, model weights, training data, and design files can be controlled the same way a physical device can be controlled.

If you email a controlled file to someone in another country, that can be treated like an export. If a vendor abroad logs into your cloud and can view controlled material, that can also count. If you host a model in a place where a restricted user can access it, you can create a problem even if your team never “sent” anything.

So the founder lesson is: export is often about access, not shipping.

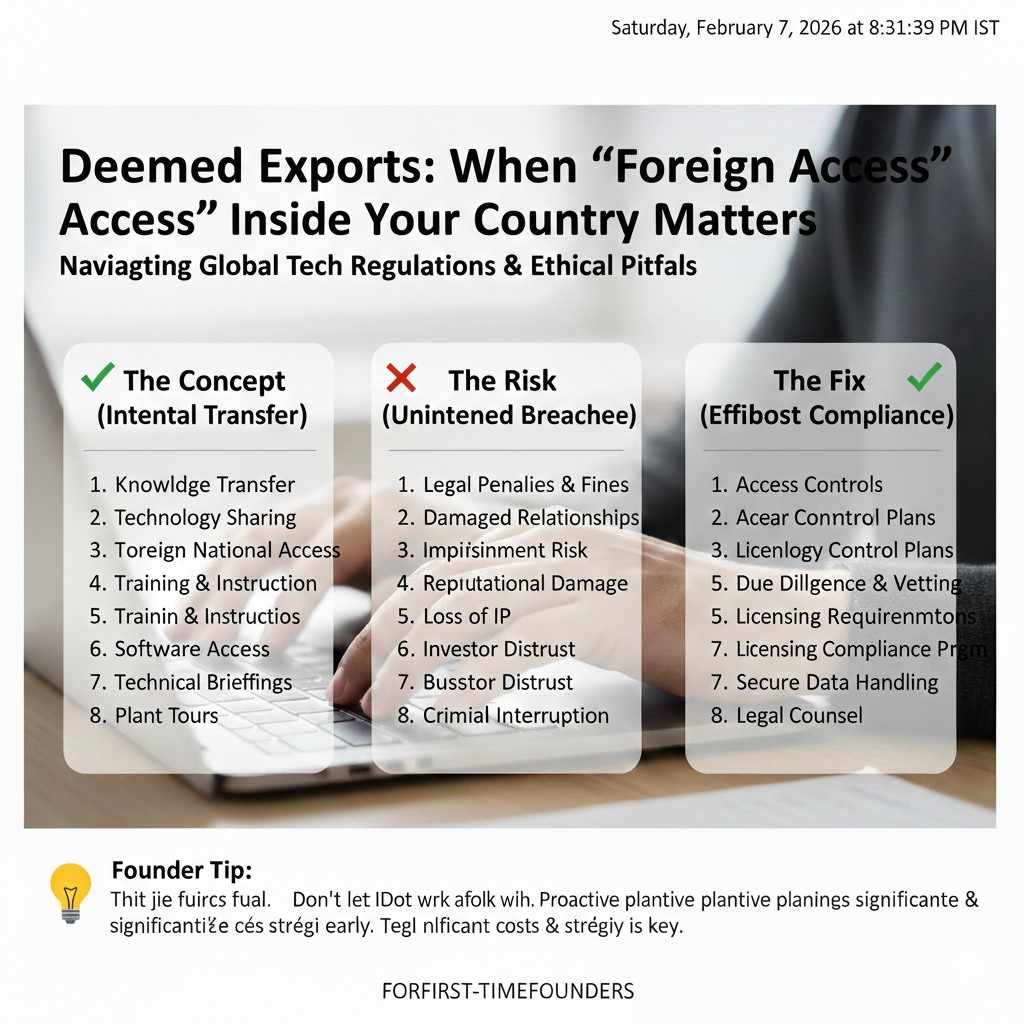

Deemed exports: when “foreign access” inside your country matters

One of the most confusing ideas for new founders is the “deemed export.” In plain terms, this is when a person from another country gets access to controlled tech while physically inside your country. It can happen in an office, on a laptop, or on a whiteboard.

This matters for hiring and interns. It can also matter when a customer brings a mixed team to a workshop. If your work includes controlled details, you need to think about what is shared, what stays internal, and what is separated into safer layers.

A lot of startups do not plan for this. They only learn about it when a large customer asks for a compliance statement before signing.

Cloud tools can create “exports” without travel

With AI work, the cloud is often the product. Even if you do not ship anything, your logs, weights, and training data can move between regions. A simple setting in a cloud dashboard can change where your data sits and who can access it.

Founders sometimes grant “temporary” access to a contractor abroad to help fix a bug, label data, or test a build. That can be enough to create an export event. The same is true for external MLOps teams, overseas QA groups, and support vendors.

The key is not to avoid the cloud. The key is to set it up in a way that matches your risk level, and to keep clean records of who can access what.
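For teams that want “clean records of who can access what” in a form they can actually check, here is a minimal Python sketch of an access register. Everything in it is an assumption for illustration: the `AccessGrant` fields, the “controlled” and “public” labels, the placeholder country code `XX`, and the region names are hypothetical. Which countries and regions count as approved is a question for your export counsel, not for code.

```python
from dataclasses import dataclass

# Hypothetical labels: "controlled" marks material your export review flagged;
# anything else is treated as shareable. These are placeholders, not legal categories.
@dataclass(frozen=True)
class AccessGrant:
    person: str
    person_country: str   # citizenship or location, per your counsel's guidance
    resource: str
    classification: str   # "controlled" or "public" (hypothetical labels)
    storage_region: str   # a cloud region name; the values below are made up

def review_grants(grants, approved_countries, approved_regions):
    """Return (grant, reason) pairs that need a human review."""
    findings = []
    for g in grants:
        if g.classification != "controlled":
            continue  # only controlled material is checked in this sketch
        if g.person_country not in approved_countries:
            findings.append((g, "person outside approved countries"))
        if g.storage_region not in approved_regions:
            findings.append((g, "stored outside approved regions"))
    return findings

grants = [
    AccessGrant("alice", "US", "nav-model-weights", "controlled", "us-east"),
    AccessGrant("contractor-7", "XX", "nav-model-weights", "controlled", "us-east"),
    AccessGrant("bob", "US", "marketing-site", "public", "eu-west"),
]

for grant, reason in review_grants(grants, {"US"}, {"us-east"}):
    print(grant.person, grant.resource, "->", reason)
```

The output is a review queue, not a verdict. Its value is that a contractor given “temporary” access shows up on a list someone actually reads.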

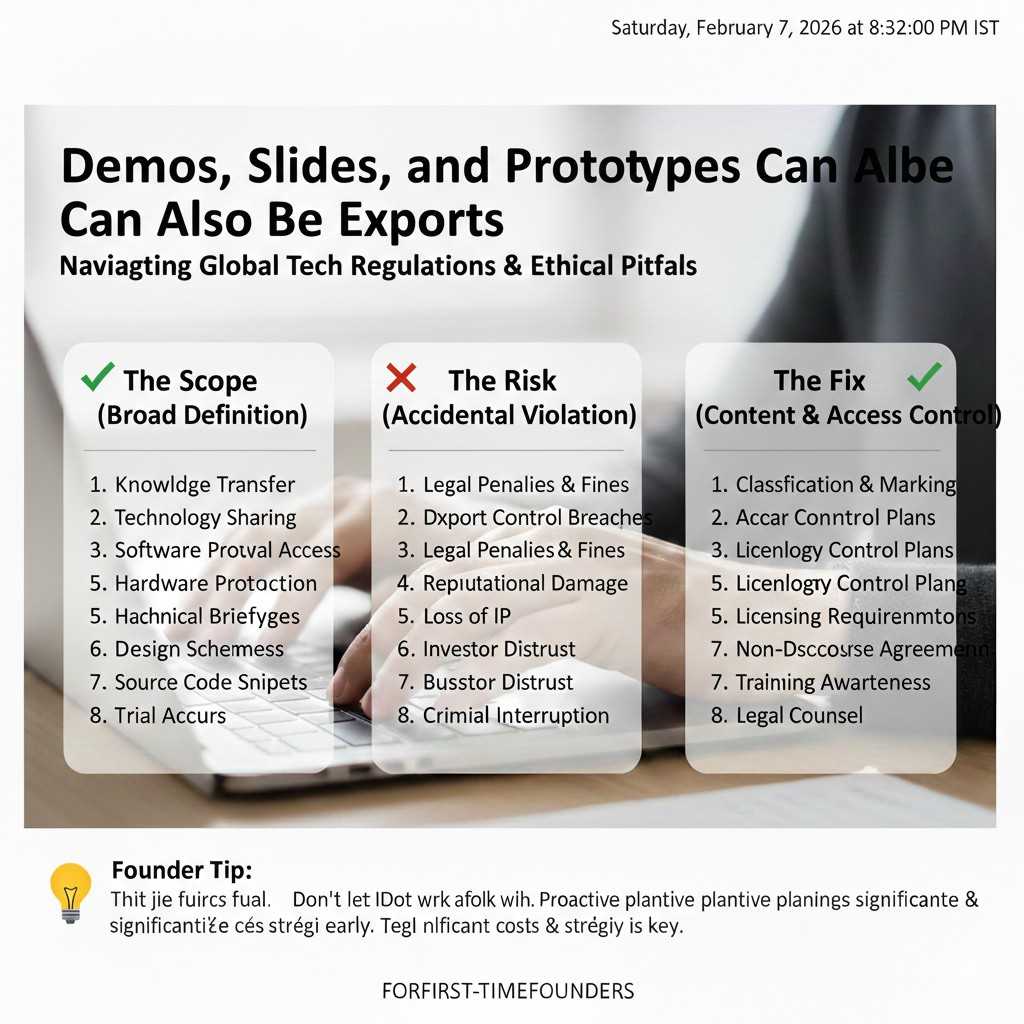

Demos, slides, and prototypes can also be exports

A demo can become an export if you show controlled detail. Many teams share “how it works” slides to win trust. That is normal. But if your value sits in a sensitive method, a system design, or specific performance details, you need to be careful about how deep you go.

Prototype robots can also trigger export concerns. Shipping a unit abroad for testing is a clear case. But even sending detailed CAD files, control code, or calibration data can be treated similarly if the tech is controlled.

This is where strong IP strategy helps. If you have patents filed and a clear boundary of what is protected and what is shared, you can sell with more confidence and less fear.

Tran.vc helps founders shape that boundary early. If you want support building IP that investors respect, apply anytime at https://www.tran.vc/apply-now-form/

The main rule sets you will hear about

Export rules are not one single thing

Founders often ask, “Is my product controlled or not?” The real answer is that export controls are a set of rules with different buckets. Some buckets focus on where the item goes. Others focus on who gets it. Some focus on the type of tech. Some focus on military end use.

That is why this topic feels messy. But you do not need to memorize every rule. You need to understand the categories well enough to spot when you are near a boundary.

In most cases, you will run into three kinds of controls: item-based controls, person-based controls, and end-use controls.

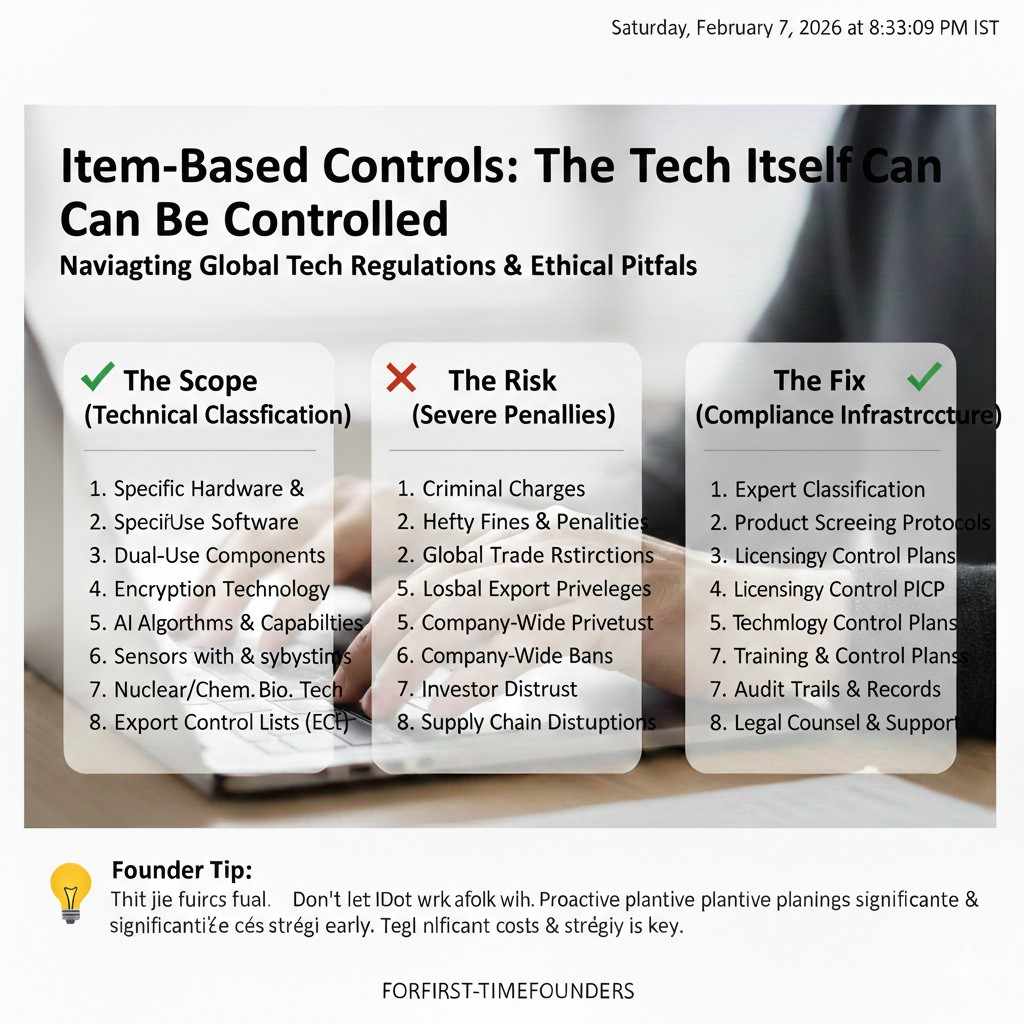

Item-based controls: the tech itself can be controlled

This is the part most people think about. Some hardware, software, and technical data are controlled because of what they can do. High-performance sensors, certain navigation tools, encryption systems, and advanced manufacturing gear can fall into this group.

In AI and robotics, item-based controls can show up in odd ways. A robot may be “just a robot,” but a specific sensor package or guidance feature can be the sensitive part. A software stack may be “just software,” but the training method, weights, or integration details can change the picture.

This is why you should break your system into parts when you think about export. Often one part carries the real risk, while the rest is normal commercial tech.
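Breaking the system into parts can start as a simple inventory. This minimal Python sketch tags each component with what it can do and lists the ones whose capabilities overlap a watch list. The `Component` class and the contents of `WATCH_CAPABILITIES` are hypothetical placeholders; a real watch list should come from an export review of your specific product, not from this example.

```python
from dataclasses import dataclass, field

@dataclass
class Component:
    name: str
    kind: str  # "hardware", "software", "data", or "model"
    capabilities: set = field(default_factory=set)

# Hypothetical capabilities that should prompt a closer export look.
# Illustrative only; this is not a legal classification.
WATCH_CAPABILITIES = {"navigation", "targeting", "tracking", "encryption", "thermal imaging"}

def components_to_review(components):
    """Return sorted (name, matched capabilities) for parts that hit the watch list."""
    hits = []
    for c in components:
        matched = c.capabilities & WATCH_CAPABILITIES
        if matched:
            hits.append((c.name, sorted(matched)))
    return sorted(hits)

system = [
    Component("mobile base", "hardware", {"locomotion"}),
    Component("nav stack", "software", {"navigation", "mapping"}),
    Component("thermal camera", "hardware", {"thermal imaging"}),
    Component("order dashboard", "software", {"reporting"}),
]
print(components_to_review(system))
# [('nav stack', ['navigation']), ('thermal camera', ['thermal imaging'])]
```

In this made-up system, two of four parts carry the flagged capability, which is the kind of split the paragraph above describes: most of the stack is ordinary commercial tech.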

Person-based controls: who you deal with matters

Even if your tech is not controlled in a strict way, you can still have a problem if you deal with the wrong person or group. Many countries keep lists of restricted parties. If you sell to, ship to, or share with someone on a restricted list, you can face serious penalties.

Startups get exposed here when they grow fast. They may sign a reseller, accept a customer in a new region, or hire a contractor without checking. Even a small payment can create risk if it involves a blocked party.

This is why a basic screening step is important. It is not glamorous, but it can save you from a painful surprise.
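A basic screening step can be as simple as normalizing names and comparing them against a restricted-party list before you sign. The Python sketch below is an assumption-heavy illustration: it compares against a list you supply (in the US, the government publishes a Consolidated Screening List that many teams start from), uses the standard-library `difflib` for rough fuzzy matching, and its 0.85 threshold and company-suffix list are arbitrary choices. A match means “stop and have a person review it,” and no match does not mean a party is cleared. Many teams use a dedicated screening service for exactly that reason.

```python
import difflib
import re
import unicodedata

# Arbitrary suffixes to ignore when comparing names (an assumption, not a standard).
SUFFIXES = {"inc", "ltd", "llc", "co", "corp", "gmbh"}

def normalize(name: str) -> str:
    """Lowercase, strip accents and punctuation, and drop common company suffixes."""
    text = unicodedata.normalize("NFKD", name)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9 ]", " ", text.lower())
    return " ".join(w for w in text.split() if w not in SUFFIXES)

def screen(party: str, restricted_names, threshold: float = 0.85):
    """Return (listed_name, score) pairs close enough to need human review."""
    target = normalize(party)
    matches = []
    for listed in restricted_names:
        score = difflib.SequenceMatcher(None, target, normalize(listed)).ratio()
        if score >= threshold:
            matches.append((listed, round(score, 2)))
    return sorted(matches, key=lambda m: m[1], reverse=True)

# Hypothetical entry for illustration; not a real listed party.
restricted = ["Example Robotics Trading Co."]
print(screen("Exámple Robotics Trading, Ltd.", restricted))
# [('Example Robotics Trading Co.', 1.0)]
```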

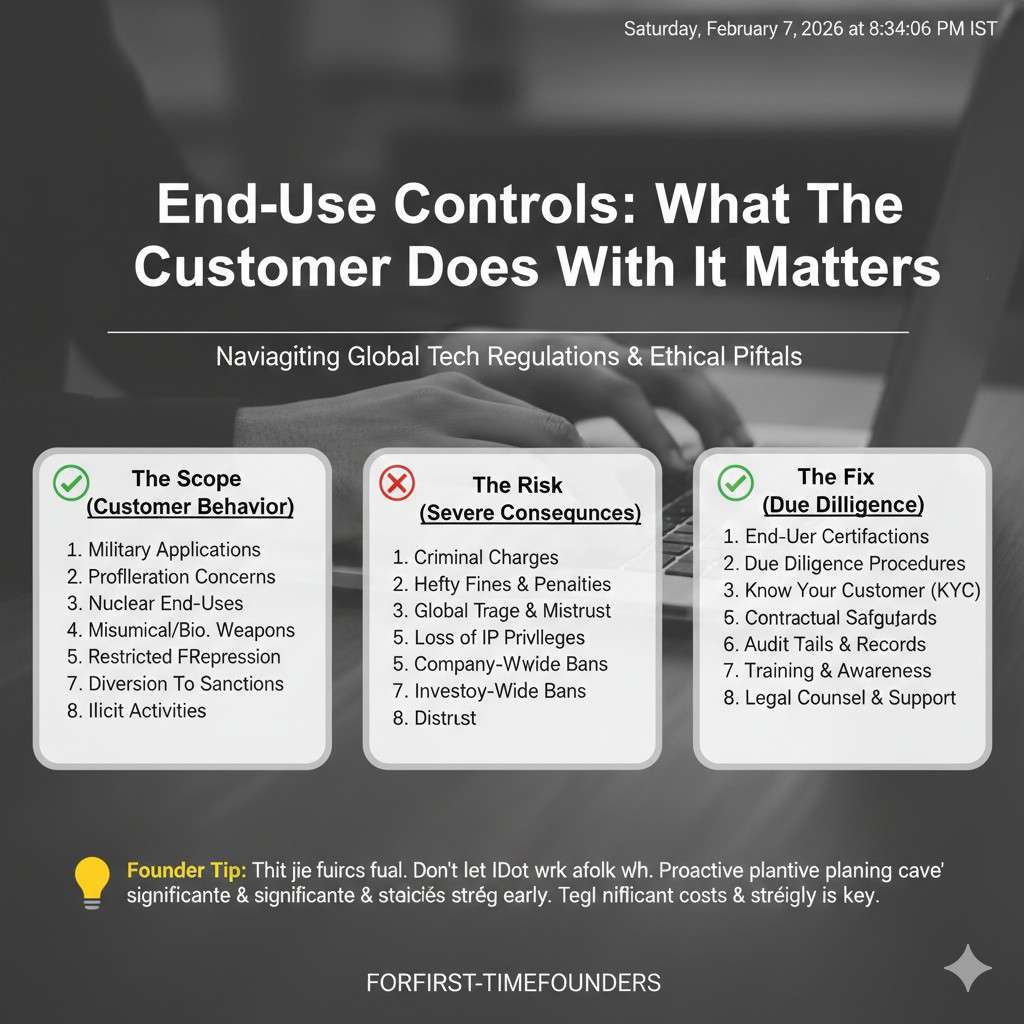

End-use controls: what the customer does with it matters

Sometimes the tech is not “controlled” in the normal item sense. But if it will be used for certain military or harmful uses, it can become restricted. This is where questions about “end use” and “end user” show up.

For AI and robotics, end-use risk is common because the same system can be used in many settings. A factory customer may be fine. But a customer that wants the same system for surveillance or for weapon support changes the story.

Founders often avoid asking end-use questions because they fear losing the sale. But ignoring end-use can be worse. If a deal later looks like you “should have known,” you can end up with risk that is hard to defend.

Dual-use risk in AI: where founders get surprised

Models are not just math; they are capability

Many builders see a model as code and data. Export reviews often see it as capability. If the model helps targeting, tracking, detection, mapping, or decision making in high-risk settings, it can raise concern.

It does not matter if you wrote the model for farm robots or warehouse robots. If the capability can transfer, the risk is there. That does not mean you cannot sell. It means you should know which use cases create questions and be ready with a clear story.

This is also why documentation matters. If you can explain the intended use, limits, and safety boundaries in plain words, you help a customer’s compliance team say “yes” faster.

Training data can raise flags even when the model seems safe

Sometimes the sensitive part is not the model. It is the data. High-resolution mapping data, certain satellite or aerial imagery, and data tied to protected groups or sites can trigger rules and restrictions.

Even if your product is commercial, using data from certain sources or regions can create a compliance need. This can affect partnerships with data vendors, research groups, or foreign labs.

A good habit is to keep a clean record of where key datasets come from, what rights you have, and where the data is stored. It makes diligence and compliance work far easier later.
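That record can be one small structured entry per dataset. The following Python sketch, with a hypothetical `DatasetRecord` type and field names, reports which provenance fields are still blank so gaps surface before a diligence request does.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class DatasetRecord:
    name: str
    source: Optional[str] = None          # vendor, lab, or site the data came from
    rights: Optional[str] = None          # license or contract that lets you use it
    storage_region: Optional[str] = None  # where the primary copy is stored

def missing_provenance(records):
    """Map each dataset name to the provenance fields that are still blank."""
    gaps = {}
    for rec in records:
        blank = [f.name for f in fields(rec) if f.name != "name" and not getattr(rec, f.name)]
        if blank:
            gaps[rec.name] = blank
    return gaps

records = [
    DatasetRecord("warehouse-images-v2", "in-house capture", "company-owned", "us-east"),
    DatasetRecord("aerial-tiles", source="third-party vendor"),
]
print(missing_provenance(records))
# {'aerial-tiles': ['rights', 'storage_region']}
```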

Inference at the edge vs. in the cloud changes the risk

A cloud-hosted model can be accessed from many places. An edge model on a device may be easier to control. But edge devices can also be shipped, moved, and resold, which creates other risks.

There is no perfect choice. The practical founder move is to decide where control must sit. If your model is sensitive, you may keep the most capable part server-side. If latency requires edge, you may split features so that the most sensitive logic is not fully exposed on the device.

These are product choices, but they also become compliance choices. Thinking about it early prevents painful rework later.

Collaboration can become leakage without anyone trying

AI teams share a lot by default. Notebooks, weights, evaluation results, and prompts move quickly between people. Add overseas contractors or a global research partner, and those same habits can become export risk.

It is not about mistrust. It is about process. A basic access model, clear repo rules, and a “safe-to-share” folder structure can reduce risk without slowing work.

If you set it up now, it feels natural. If you wait until a customer demands it, it feels like a last-minute scramble.
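One way to make a “safe-to-share” folder structure enforceable is a check that runs before anything leaves the company, for example when a bundle is prepared for a contractor or research partner. This Python sketch assumes a hypothetical repo convention where everything under `restricted/` or `weights/` stays internal; the folder names are placeholders for whatever split your team chooses.

```python
from pathlib import PurePosixPath

# Hypothetical convention: anything under these top-level folders stays internal.
RESTRICTED_ROOTS = {"restricted", "weights"}

def blocked_paths(bundle):
    """Return the paths in an outgoing bundle that sit under a restricted root."""
    blocked = []
    for p in bundle:
        parts = PurePosixPath(p).parts
        if parts and parts[0] in RESTRICTED_ROOTS:
            blocked.append(p)
    return blocked

outgoing = [
    "shareable/api-docs.md",
    "restricted/planner/cost_model.py",
    "weights/nav-v3.pt",
    "README.md",
]
print(blocked_paths(outgoing))
# ['restricted/planner/cost_model.py', 'weights/nav-v3.pt']
```

Because the rule keys on folder location rather than file names, it stays cheap to follow: people decide where a file lives once, and the check does the rest.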

Dual-use risk in robotics: where the line gets blurry

Robots are often “systems,” not single products

Robotics is rarely one item you can label as safe or risky in a simple way. A robot is a full system. It can include sensors, cameras, mapping, control code, autonomy features, remote operations, and in some cases, parts that can be swapped fast.

This is why dual-use risk shows up so often in robotics. A basic mobile base can be harmless, but add strong navigation, long-range radio links, night vision, or a payload interface, and it starts to look very different to a reviewer.

A founder should avoid thinking in terms of “our robot is fine.” Instead, think in terms of “which parts of our stack create higher-risk capability.” That is the piece to manage with extra care.

Autonomy changes the way others view your product

A remote-controlled device and an autonomous device can be treated very differently, even if they look the same in a demo. When you add autonomy, you add the ability to act with less human input. That can raise the perceived risk quickly.

In practice, the risk is not only about full autonomy. It can also be about features that reduce human burden. Better path planning, better target detection, better tracking, and better coordination between multiple robots can all raise questions.

A very practical move is to be precise in your product language. If you sell “autonomous mission planning,” you may trigger deeper review than if you sell “assisted routing for indoor logistics.” Your feature might be the same. But your words shape how a compliance team frames the risk.

Sensors can be the sensitive part even when the robot is simple

Many founders focus on the robot body and forget that export rules often focus on components. Cameras, thermal systems, lidar, radar, precision GPS, inertial sensors, and certain radios can raise flags depending on performance and use case.

This matters because hardware sourcing choices can lock you in. If you choose a sensor package that is hard to ship to key markets later, you may face delays or higher costs. If you choose a safer alternative early, you might avoid that friction.

This does not mean “avoid good sensors.” It means “know what you are buying and why.” If your product must have top performance, plan for the compliance path from the start.