If you are building an AI product and you plan to sell into Europe (or even just touch data from people in Europe), GDPR is not “legal homework.” It is product work. It shapes how you collect data, how you train models, how you log prompts, how you run analytics, and how you talk to customers.

The good news: GDPR is not impossible. Most founders struggle because they think GDPR is a giant checklist they must finish in one weekend. It is not. GDPR is a set of simple promises to real people: “I will not be sneaky with your data. I will protect it. I will let you control it. I will not keep it forever. And if something goes wrong, I will act fast.”

In this guide, I will translate GDPR into founder language. No long legal words. No scary talk. Just practical steps you can build into your AI product now, so you do not get blocked later by enterprise security reviews, customer procurement, or a panicked investor question.

And one more thing before we start: a strong IP base and a clean data story go together. If your model or workflow is truly novel, you want it protected. Tran.vc helps technical founders do exactly that with up to $50,000 in-kind patent and IP services so your hard work becomes an asset, not just code. If you want help turning what you are building into defensible IP, you can apply any time here: https://www.tran.vc/apply-now-form/

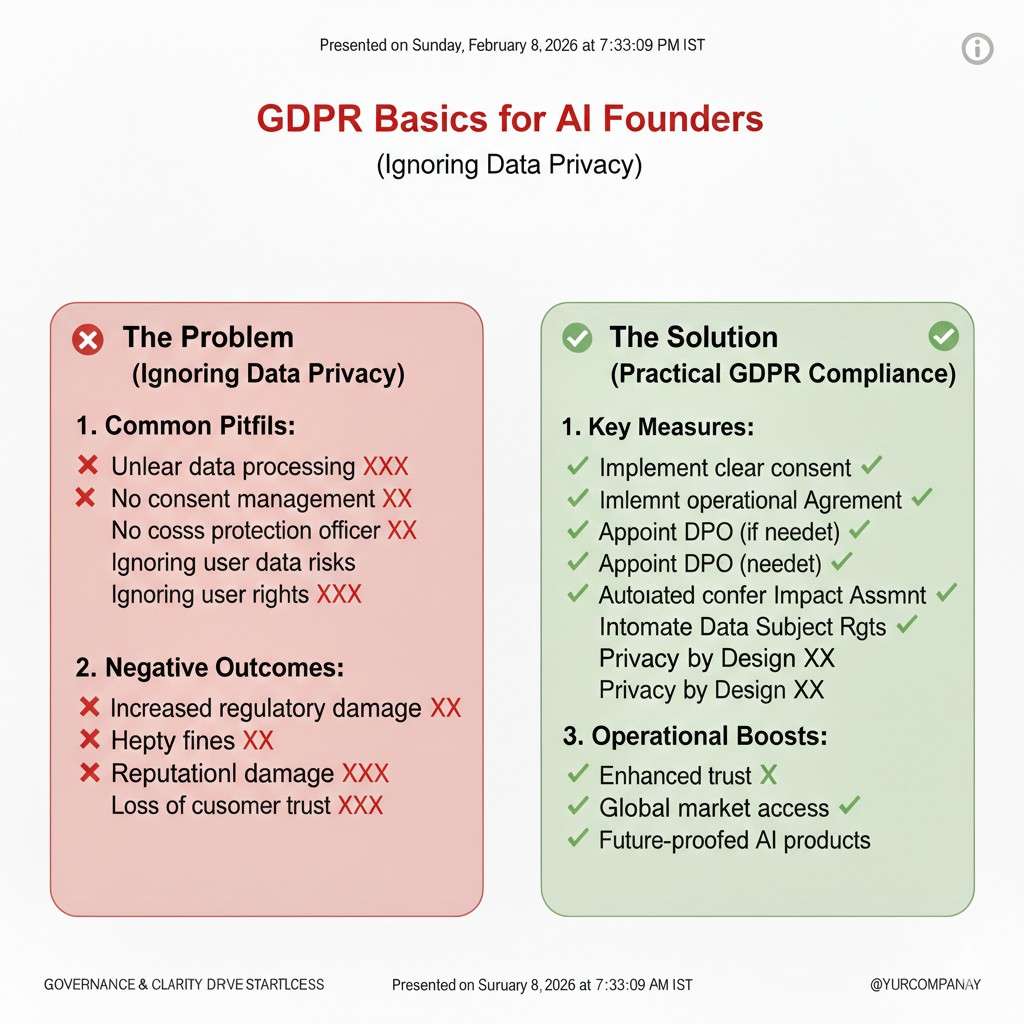

GDPR Basics for AI Founders

What GDPR is, in plain terms

GDPR is a privacy law in the European Union. It tells you how to handle personal data. Personal data is any info that can point to a real person, even if you do not know their name.

For AI products, this matters more than most founders expect. Your product may touch prompts, chat logs, user IDs, device IDs, emails, voice, images, and support tickets. Even a “simple” analytics event can become personal data when it connects back to a user.

GDPR is not trying to stop AI. It is trying to stop careless data use. If you build the right habits early, GDPR becomes a trust advantage instead of a blocker.

When GDPR applies to you

GDPR applies if you offer your product to people in the EU, or if you track people in the EU. It can also apply if your customer is in the EU, even if your team is not.

This is why many US startups still need to care. One EU user can pull GDPR into your product. One EU enterprise pilot can turn “privacy later” into “privacy now.”

If you are not sure, assume it applies and build the basics. The work you do will also help with other privacy rules later.

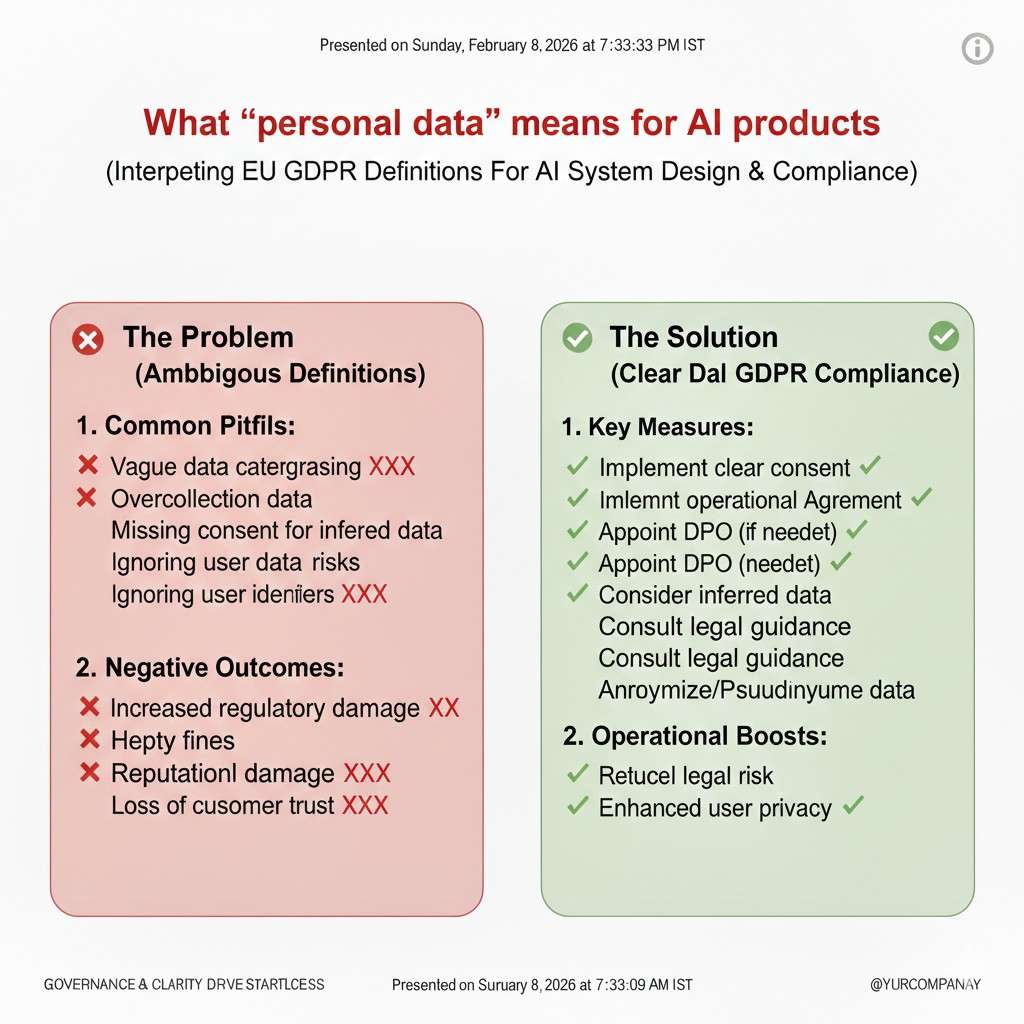

What “personal data” means for AI products

In AI, personal data is not only the user profile screen. It can show up in the text people paste into your app. It can show up in uploaded files. It can show up in call recordings, screenshots, and even model outputs.

A user might paste a customer list into your tool. Another user might paste a medical note. A sales rep might paste a contract. If your system stores it, even for “debugging,” you now hold personal data.

So the founder question is simple: “Where can personal data enter my system, and where can it leave?” When you can answer that, GDPR becomes much easier.

Why GDPR shows up in sales and fundraising

Most founders meet GDPR during a sales cycle. A security team sends a long form. A procurement team asks about data retention. A buyer wants to know if you train on their data.

If you do not have clear answers, deals slow down. If you do not have clear controls, some deals die.

Investors also ask because risk matters. A messy data story makes your company harder to scale. A clean story makes you easier to trust, easier to sell, and easier to defend.

If you want to build leverage early, both in sales and in fundraising, Tran.vc can help you tighten your IP and product foundation. You can apply any time here: https://www.tran.vc/apply-now-form/

The Two Most Important GDPR Ideas

Lawful basis: you need a real reason to use data

GDPR says you cannot use personal data just because it is useful. You need a lawful basis. That is a fancy phrase for “a valid reason.”

For many AI products, the lawful basis is “contract.” That means you need the data to deliver the service the user asked for. If your product summarizes a meeting, you need the meeting audio to do the summary. That is simple.

Sometimes the lawful basis is “consent.” This is common when you want to use data for something extra, like marketing emails or optional product improvement that is not needed for the core service.

Sometimes it is “legitimate interest.” This is tricky, but it can apply to things like basic security logs or preventing fraud. You still have to be fair and not surprise the user.

A founder-friendly rule is this: if a user would be shocked to learn you used their data that way, you probably need a different approach, or clear consent.

Purpose limitation: do not change the deal later

GDPR also says you must be clear about why you collect data, and you should not quietly change that reason later.

This is where AI teams get into trouble. They collect customer prompts to “provide the service,” and later decide to use those prompts to train a general model. Even if the new idea is exciting, the original deal with the user did not cover it.

So, decide early: are you training on user data or not? If you are, say it clearly and design for it. If you are not, say that clearly too, and make it true in your system.

This choice impacts everything: your architecture, your contracts, your trust story, and your speed to close enterprise deals.

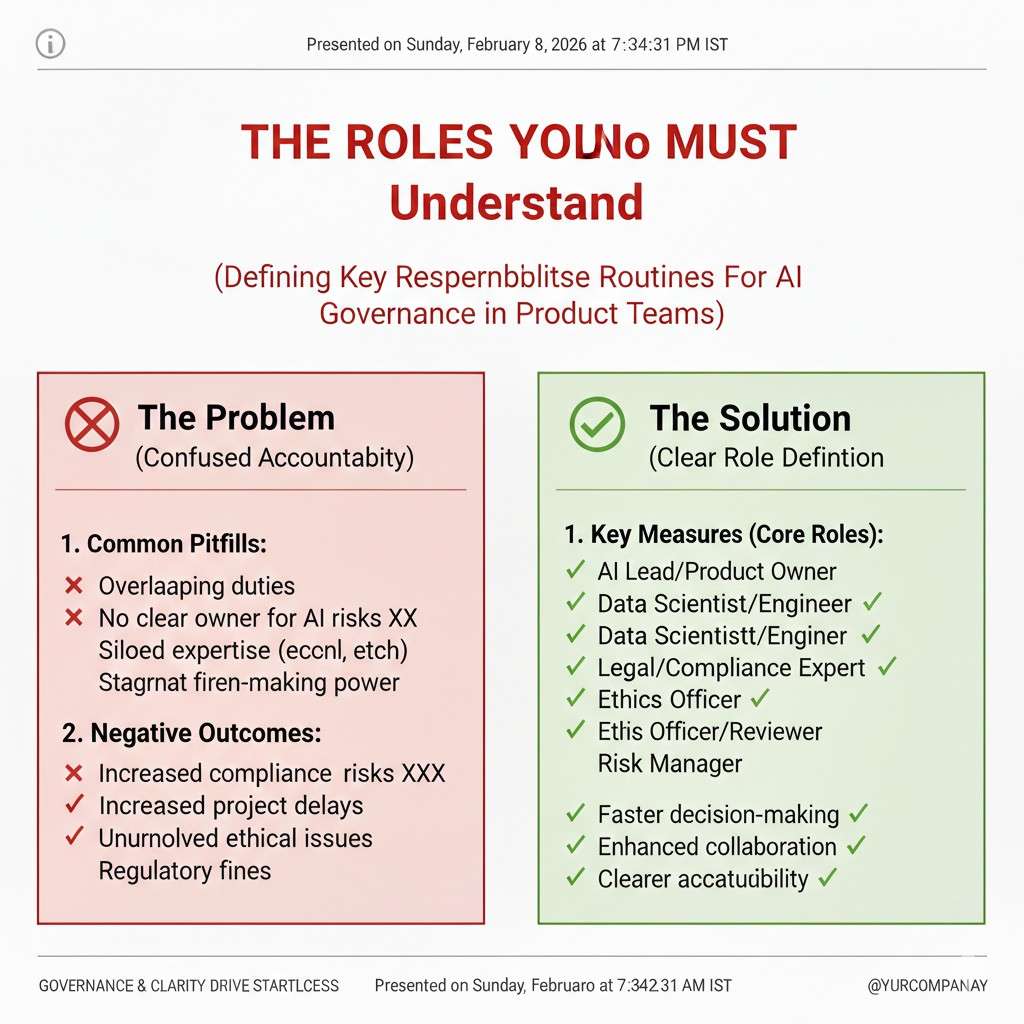

The Roles You Must Understand

Controller vs processor, explained like a product conversation

GDPR uses two main roles. A controller decides why and how personal data is used. A processor handles personal data for the controller, based on instructions.

If you sell a B2B AI tool to a company, that company is often the controller. They decide they want to process their employee or customer data. You are often the processor because you run the tool for them.

But it can flip. If you run a consumer app and decide how the data is used, you are the controller. If you collect data for your own product goals, you are almost certainly a controller for that data.

This matters because controllers have more duties. Processors still have duties too, but the paperwork and risk feel different.

Why this changes your contracts and your roadmap

If you are a processor, most customers will ask for a data processing agreement. People call it a DPA. It sets rules for how you handle data, what security you use, and what happens if there is a breach.

If you are a controller, you will need a privacy notice that tells users what you do, why you do it, how long you keep data, and how they can control it.

Many founders delay this because it feels like “legal stuff.” But in practice, this is a product choice. If you know your role, you can write clearer promises and build simpler controls.

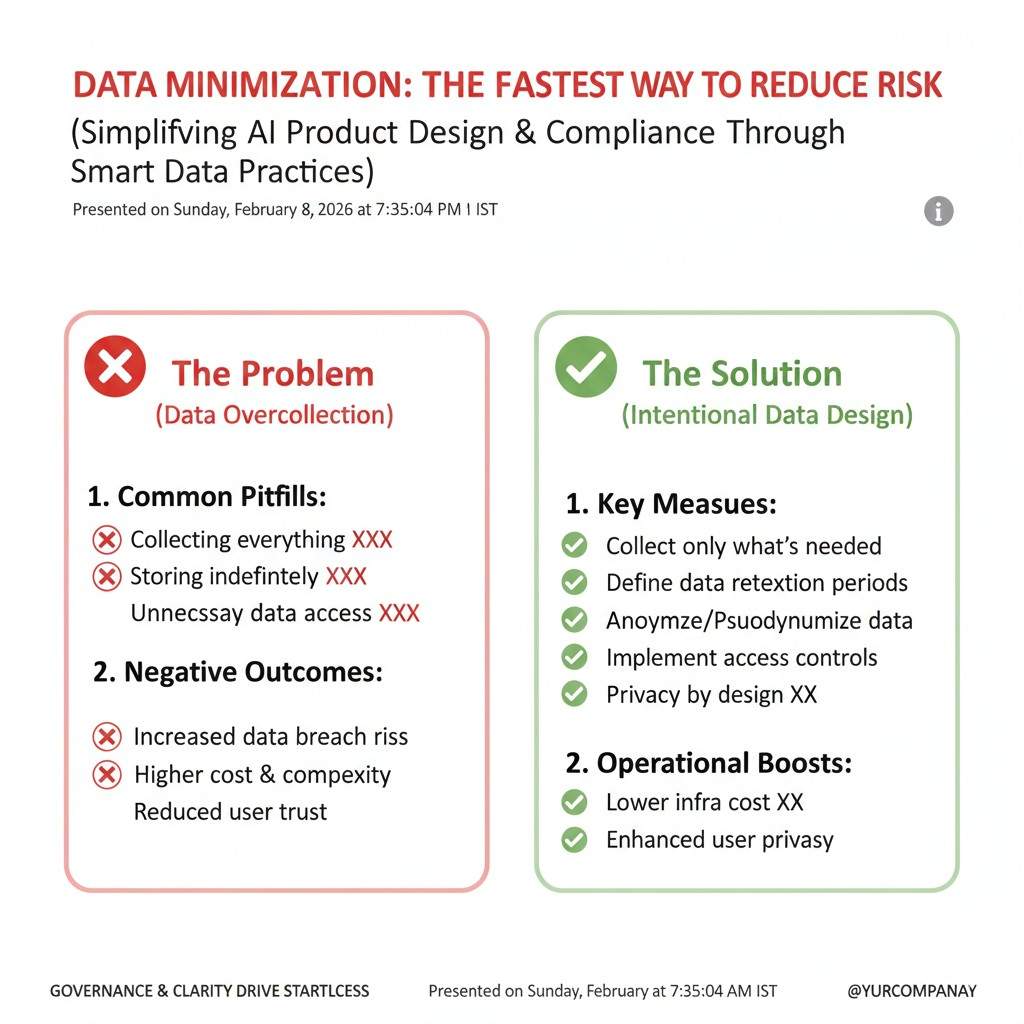

Data Minimization: The Fastest Way to Reduce Risk

Keep less data than you think you need

Data minimization is a core GDPR idea. It means you should only collect what you need, and you should not keep it longer than needed.

AI teams often over-collect because logs help debugging. That is normal. But GDPR pushes you to design smarter logging.

Instead of storing full prompts forever, you might store short samples, or store prompts only for a short window, or store them only when a user reports a bug. You can also mask sensitive parts before saving.

This is not only about compliance. It is also about security. You cannot leak data you do not have.

Practical example: prompt logging without getting burned

Imagine you run an AI support agent. A user types, “My name is Alex and my order number is 12345.” If you store that full text in a debug table for six months, you now hold personal data for no strong reason.

A safer approach is to store only what helps you fix the model. You can store the model version, the tool calls, the latency, and a redacted version of the prompt. If you truly need the full prompt for a short time, set an auto-delete timer.

This kind of design becomes a selling point in enterprise deals. Buyers like vendors who do not hoard data.
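Here is a minimal sketch of the safer logging approach described above. It is an illustration, not a complete PII filter: the regex patterns, the `DebugLogEntry` fields, the 14-day window, and the model name are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Illustrative patterns only. They catch emails and long digit runs, not names.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_NUMBER_RE = re.compile(r"\d{4,}")


def redact(text: str) -> str:
    """Mask emails and digit runs of four or more before anything is stored."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return LONG_NUMBER_RE.sub("[NUMBER]", text)


@dataclass
class DebugLogEntry:
    model_version: str
    tool_calls: list
    latency_ms: int
    redacted_prompt: str
    expires_at: datetime  # a scheduled cleanup job would delete rows past this


def make_log_entry(prompt, model_version, tool_calls, latency_ms, now, ttl_days=14):
    """Build the row that gets saved; the raw prompt never reaches storage."""
    return DebugLogEntry(
        model_version=model_version,
        tool_calls=list(tool_calls),
        latency_ms=latency_ms,
        redacted_prompt=redact(prompt),
        expires_at=now + timedelta(days=ttl_days),
    )


entry = make_log_entry(
    "My name is Alex and my order number is 12345.",
    model_version="support-agent-v3",  # hypothetical model name
    tool_calls=["lookup_order"],
    latency_ms=420,
    now=datetime.now(timezone.utc),
)
print(entry.redacted_prompt)
```

Notice that the order number is masked but the name "Alex" is not. Simple patterns miss free-text identifiers, so a real product would likely need a proper entity-detection step on top of this.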

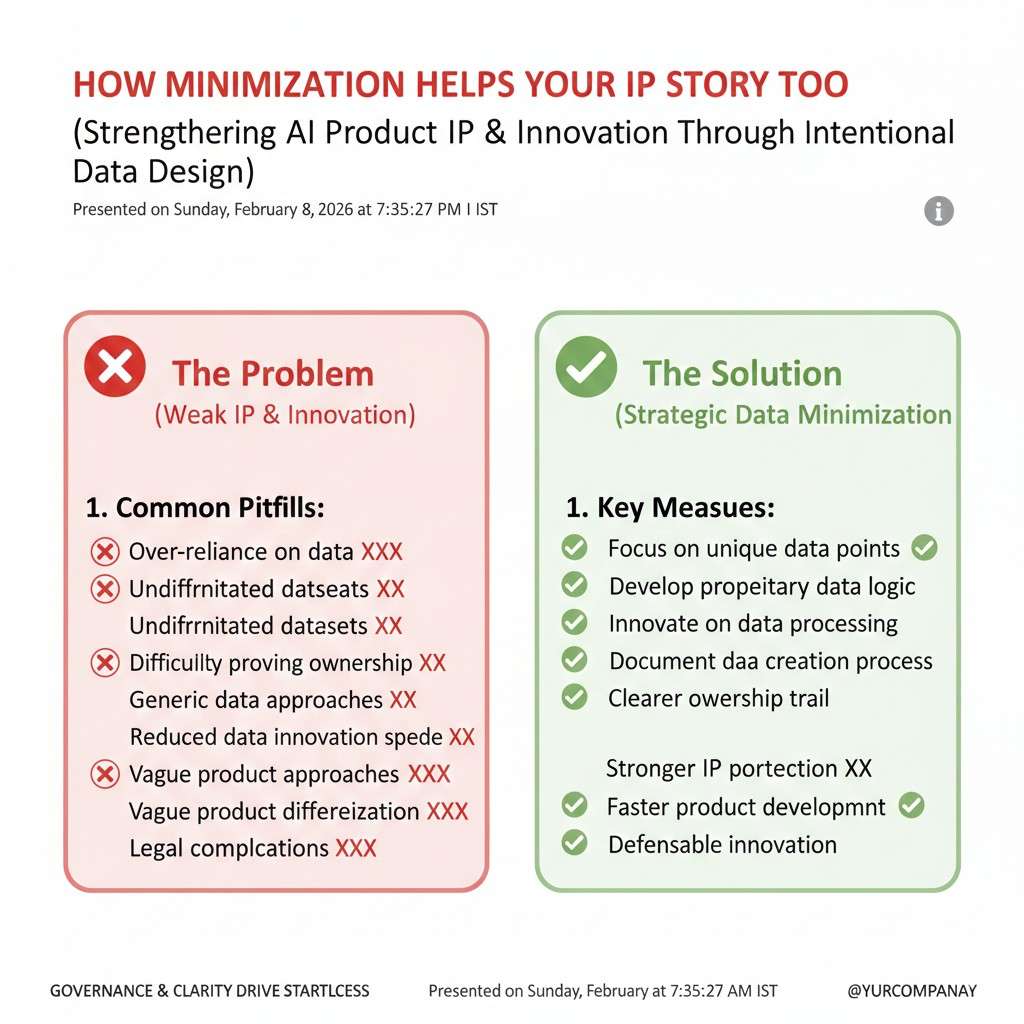

How minimization helps your IP story too

If your product value comes from your unique workflow, model tuning method, or system design, you can protect that as IP. You do not need to rely on keeping piles of user data to have a moat.

Tran.vc’s approach is to help founders build real defensibility early, through patents and IP strategy that match what you are actually building, so you can grow without risky shortcuts. Apply any time here: https://www.tran.vc/apply-now-form/

GDPR Rights in AI Products

The right to access, without panic

GDPR gives users the right to ask what data you have about them. For AI founders, this sounds scary at first. In practice, it is manageable if you design for it early.

Access does not mean dumping raw databases. It means giving the person a copy of their personal data and explaining, in clear terms, what kinds of data you store and why. For many AI tools, this includes account details, usage logs, prompts, uploads, and support messages.

A simple internal map helps. Know which systems store user data and what type lives there. When an access request comes in, you are calm instead of scrambling.
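The internal map can start as a small structure in code. The sketch below is one possible shape. The system names and data categories are hypothetical, and a real inventory would likely live in a shared document or config file.

```python
# Hypothetical inventory: which system holds which kind of user data.
DATA_MAP = {
    "postgres.users": {"account details"},
    "postgres.conversations": {"prompts", "model outputs"},
    "s3.uploads": {"uploads"},
    "helpdesk": {"support messages", "account details"},
    "analytics": {"usage logs"},
}


def systems_holding(category: str) -> list:
    """List every system that stores the given category of data."""
    return sorted(name for name, cats in DATA_MAP.items() if category in cats)


def access_request_checklist() -> list:
    """Every system someone must query when a user asks for their data."""
    return sorted(DATA_MAP)


print(systems_holding("account details"))
```

When an access request arrives, the checklist tells you where to look, and the category lookup shows when the same kind of data lives in more than one place.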

The right to delete, and what it really requires

Users can ask you to delete their personal data. This is one of the most talked-about GDPR rights, and often misunderstood.

Deletion does not mean erasing your entire system. It means removing data that can identify that person. If prompts are linked to a user ID, you delete or anonymize those records. If backups exist, you document when they will expire.
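As a rough sketch, a deletion handler might look like the code below. It uses in-memory structures in place of real tables. The function name, the record shapes, and the 30-day backup window are assumptions for the example. It deletes linked prompts outright instead of anonymizing them, because prompt text can identify a person even after the user ID is removed.

```python
from datetime import date, timedelta


def erase_user(user_id, accounts, prompt_rows, today, backup_retention_days=30):
    """Delete a user's account row and every prompt linked to their ID.

    Returns a small receipt, including the date by which older backups
    that still contain the data will have expired.
    """
    accounts.pop(user_id, None)
    before = len(prompt_rows)
    # Edit the list in place so every caller sees the deletion.
    prompt_rows[:] = [row for row in prompt_rows if row["user_id"] != user_id]
    return {
        "prompts_deleted": before - len(prompt_rows),
        "backups_expire_on": today + timedelta(days=backup_retention_days),
    }


accounts = {"u1": {"email": "alex@example.com"}, "u2": {"email": "sam@example.com"}}
prompts = [
    {"user_id": "u1", "text": "first prompt"},
    {"user_id": "u2", "text": "another prompt"},
]
receipt = erase_user("u1", accounts, prompts, today=date.today())
print(receipt["prompts_deleted"])
```

The receipt gives you something concrete to tell the user: what was removed now, and when the remaining backup copies age out.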

For AI products, the big question is training. If you train models on personal data, deletion becomes harder. This is why many early-stage teams choose not to train on user data at all, or only train on data that is clearly anonymized.

Objection and restriction, explained simply

Users can also object to certain uses of their data or ask you to pause processing. This usually applies when your lawful basis is legitimate interest.

In real life, this often shows up in marketing or analytics. A user might say they do not want their data used for product improvement beyond core functionality.

If your system can tag users with preferences and respect them, you are already ahead. Most objections are rare, but being ready builds trust with customers and regulators alike.
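Tagging users with preferences can be a very small piece of code. The sketch below assumes three purposes and an in-memory store. The names are hypothetical, and a real system would persist the objections and check them at every place data is used.

```python
from enum import Enum


class Purpose(Enum):
    CORE_SERVICE = "core_service"
    PRODUCT_IMPROVEMENT = "product_improvement"
    MARKETING = "marketing"


class Preferences:
    """Tracks which uses of their data each user has objected to."""

    def __init__(self):
        self._objections = {}  # user_id -> set of Purpose

    def record_objection(self, user_id: str, purpose: Purpose) -> None:
        self._objections.setdefault(user_id, set()).add(purpose)

    def may_process(self, user_id: str, purpose: Purpose) -> bool:
        """True unless this user has objected to this purpose."""
        return purpose not in self._objections.get(user_id, set())


prefs = Preferences()
prefs.record_objection("u1", Purpose.PRODUCT_IMPROVEMENT)
print(prefs.may_process("u1", Purpose.PRODUCT_IMPROVEMENT))  # False
print(prefs.may_process("u1", Purpose.CORE_SERVICE))         # True
```

The key habit is calling a check like `may_process` before any optional use of data, so an objection actually changes what the system does.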

AI, Automation, and Human Oversight

Automated decisions and why they matter

GDPR pays special attention to decisions made only by machines, especially if they affect people in serious ways. Think credit approval, hiring filters, or insurance pricing.

If your AI product makes decisions that can impact someone’s rights or opportunities, you need to be careful. GDPR may require transparency and a way for humans to review outcomes.

Many founders avoid this issue by keeping humans in the loop. Even a simple review step or appeal process can change your risk profile.

Explaining AI outputs without giving away secrets

Users have the right to “meaningful information” about automated decisions. This does not mean you must reveal your model weights or proprietary methods.

It means you should be able to explain, at a high level, what inputs matter and how outcomes are produced. For example, “The system looks at recent activity and policy rules” is often enough.

Clear explanations help users trust your product. They also reduce support tickets and legal noise.

Why transparency helps adoption

AI buyers are cautious. When you can explain how your system works, what data it uses, and where humans step in, sales conversations go smoother.

This is another place where strong IP helps. If your core method is protected, you can be open about how the product works without fear of being copied. Tran.vc focuses on helping founders lock this in early. You can apply any time here: https://www.tran.vc/apply-now-form/

Data Retention and AI Systems

Keeping data forever is a silent risk

GDPR expects you to set limits on how long you keep personal data. “Forever” is not an acceptable answer.

AI teams often forget this because storage is cheap. But long retention increases breach risk and compliance burden.

A simple retention policy says what data you keep, why you keep it, and when it gets deleted or anonymized. This can be written in plain language and enforced with basic automation.

Retention by data type, not by fear

You do not need one rule for all data. Account data might be kept while an account is active. Logs might be kept for 30 or 90 days. Billing records might be kept longer for legal reasons.

For prompts and uploads, shorter is usually better unless you have a clear reason. If data helps debugging only during early use, delete it once the issue window passes.
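Retention by data type can be enforced with a small scheduled job. The sketch below shows the idea with in-memory records. The 30-day log window comes from the example above, while the prompt and billing windows are placeholders, since the right billing period depends on local law. Account data kept "while the account is active" is not time-based, so it is left out here.

```python
from datetime import datetime, timedelta, timezone

# Illustrative windows only; pick real values with your counsel.
RETENTION = {
    "logs": timedelta(days=30),
    "prompts": timedelta(days=14),        # assumption for this sketch
    "billing": timedelta(days=365 * 7),   # placeholder, depends on local law
}


def is_expired(record: dict, now: datetime) -> bool:
    """A record expires once it is older than the window for its type."""
    return now - record["created_at"] > RETENTION[record["type"]]


def purge(records: list, now: datetime) -> list:
    """Return only the records that are still inside their retention window."""
    return [r for r in records if not is_expired(r, now)]


now = datetime.now(timezone.utc)
records = [
    {"type": "logs", "created_at": now - timedelta(days=45)},
    {"type": "logs", "created_at": now - timedelta(days=5)},
    {"type": "billing", "created_at": now - timedelta(days=400)},
]
print(len(purge(records, now)))  # 2: the 45-day-old log is dropped
```

Keeping the windows in one table makes the policy easy to read, easy to change, and easy to show to a customer who asks.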

These choices show maturity. Customers notice.

Retention decisions affect your product design

Retention is not just a legal note. It affects how you build features like history, replay, and analytics.

If users expect history, make it clear and give them control. If they do not need history, do not store it. Align product value with data use, and GDPR becomes simpler.

Security Expectations for AI Founders

Security is part of GDPR, not separate

GDPR requires “appropriate” security. This does not mean perfect security. It means reasonable steps for your size and risk.

For AI startups, this usually includes access controls, encryption in transit, basic monitoring, and clear internal rules about who can see production data.

You do not need a giant security team. You need discipline and documentation.

Internal access is often the weakest point

Many data issues happen because too many people can see too much data. Engineers debug with real user data. Support shares screenshots in chat tools.

Limit access. Use roles. Mask data when possible. Train your team on why this matters.
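Roles and masking can start simple. In the sketch below, each role sees only the fields it needs and everything else is masked. The role names and field names are made up for the example, and a real system would enforce this at the database or API layer too.

```python
# Hypothetical roles and the fields each one is allowed to see in clear text.
ROLE_FIELDS = {
    "support": {"user_id", "plan"},
    "engineer": {"user_id", "plan", "last_error"},
    "privacy_admin": {"user_id", "plan", "last_error", "email"},
}


def view_for(role: str, record: dict) -> dict:
    """Return a copy of the record with disallowed fields masked.

    Unknown roles get no clear-text fields at all.
    """
    allowed = ROLE_FIELDS.get(role, set())
    return {key: (value if key in allowed else "***") for key, value in record.items()}


record = {
    "user_id": "u1",
    "plan": "pro",
    "last_error": "timeout",
    "email": "alex@example.com",
}
print(view_for("support", record))
```

Here a support agent sees the user ID and plan, while the error detail and email come back as `***`.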

These habits protect users and protect you.

Breach response, without drama

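If something goes wrong, GDPR expects you to act fast. A controller generally has 72 hours from becoming aware of a breach to notify the supervisory authority, and a processor must tell its customer without undue delay. You need to know how to detect issues, who decides severity, and when to notify customers or authorities.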

You do not need a thick playbook. A simple plan with names and steps is enough. The goal is speed and honesty, not perfection.

Vendor and Model Choices Matter

Using third-party models responsibly

If you use external models or APIs, you must understand how they handle data. Some providers store prompts. Some train on them. Some offer opt-outs.

Your GDPR story includes your vendors. Customers will ask. You should have answers.

Choose partners whose policies align with your promises. If you say you do not train on customer data, your vendors should match that.

Sub-processors and trust

Under GDPR, customers often want to know who else touches their data. These are called sub-processors.

You do not need to hide them. Be clear. List them. Update customers if they change.

Transparency here builds credibility, especially with larger buyers.

GDPR and AI Training Data

Training data is where most founders get stuck

Training data is the most sensitive part of GDPR for AI products. It is also where many teams make quiet decisions early that become hard to undo later.

The core question is simple: are you using personal data to train your models, or not? Everything else flows from this choice. If the answer is unclear, regulators, customers, and investors will feel that uncertainty.

Founders who decide early move faster. Founders who delay often end up rebuilding pipelines under pressure.

Training on user data versus delivering a service

There is a big difference between using data to provide a service and using data to improve a model. GDPR treats these differently.

If a user gives you data so the product can work for them, that usually fits under the contract basis. If you then reuse that data to train a general model, that is a new purpose. It often needs its own lawful basis, such as clear consent, or another strong justification.

Many strong AI companies avoid this risk by separating the two. The service runs on user data, but training uses curated, licensed, or synthetic data.

Anonymization is harder than it sounds

Founders often say, “We anonymize everything.” GDPR is strict about this. True anonymization means no one can link the data back to a person using any means reasonably likely to be used, including combining it with other data.

Removing names is not enough. Context, patterns, and rare details can still identify someone, especially in text or audio.

If data can be re-linked, it is still personal data. Be honest about this internally. If you are not sure, treat it as personal data and design accordingly.

Pseudonymization as a practical middle ground

Pseudonymization means you replace direct identifiers with tokens or IDs. The data is safer, but still personal data under GDPR.

This approach is useful for training internal models or improving workflows, as long as you apply strong access controls and clear retention limits.

It reduces risk, but it does not remove obligations. Many teams use it as a stepping stone while they build better data sources.
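One common way to replace a direct identifier with a token is a keyed hash. The sketch below uses HMAC-SHA256 from Python's standard library. The function name and the key handling are assumptions: in practice the key would come from a secrets manager and be stored apart from the data, because anyone holding the key can recompute the tokens.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a user ID with a stable token derived from a secret key.

    The same ID and key always give the same token, so records can still
    be joined. The result is still personal data under GDPR.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"example-key-load-from-a-secrets-manager"  # placeholder, never hard-code
token = pseudonymize("alex@example.com", key)
row = {"user_token": token, "event": "summary_generated"}
print(row["user_token"] != "alex@example.com")  # True
```

A keyed hash is used here instead of a plain hash because emails and user IDs are easy to guess. Without a secret key, someone could hash a list of known emails and match the tokens.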

Synthetic data and licensed data

Synthetic data can reduce GDPR risk if done well. If the data truly does not relate to real people, GDPR may not apply.

Licensed datasets can also help, but only if the license clearly allows your use. Always read the terms and understand any restrictions.

A mix of synthetic data, licensed data, and carefully governed real data is often the safest long-term path.

Privacy by Design, in Real Product Terms

What “privacy by design” really means

Privacy by design sounds abstract, but it is very practical. It means thinking about privacy when you design features, not after launch.

For AI products, this often shows up in choices like default settings, data flows, and logging behavior.

If the default is safe and minimal, most users never create risk. If the default is “collect everything,” problems grow fast.

Defaults matter more than policies

Users rarely read policies. They live with defaults.

If your product logs everything by default, most customers will never turn it off. If logging is minimal by default and expanded only when needed, you protect users without friction.

Design defaults that match your privacy promises. This alignment builds trust and reduces support issues.

Feature flags as a privacy tool

Feature flags are not just for rollout. They can help with privacy too.

You can enable detailed logging only for certain accounts, or only for short periods. You can test new data uses safely before making them permanent.

This gives you flexibility without breaking GDPR principles.
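A privacy-minded flag for detailed logging could look like the sketch below. It is off by default, it is turned on per account, and it switches itself off after a set time. The class name and the 24-hour default are assumptions for the example.

```python
from datetime import datetime, timedelta, timezone


class DetailedLoggingFlag:
    """Per-account flag that is off by default and expires on its own."""

    def __init__(self):
        self._enabled_until = {}  # account_id -> datetime when the flag expires

    def enable(self, account_id: str, now: datetime, hours: int = 24) -> None:
        self._enabled_until[account_id] = now + timedelta(hours=hours)

    def is_enabled(self, account_id: str, now: datetime) -> bool:
        until = self._enabled_until.get(account_id)
        return until is not None and now < until


flag = DetailedLoggingFlag()
now = datetime.now(timezone.utc)
flag.enable("acme", now, hours=2)
print(flag.is_enabled("acme", now + timedelta(hours=1)))   # True
print(flag.is_enabled("acme", now + timedelta(hours=3)))   # False
print(flag.is_enabled("other", now))                        # False
```

Because the flag expires by itself, nobody has to remember to turn detailed logging off after a debugging session.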