Most startups build AI fast. That is normal. You ship, you learn, you fix, you ship again.

But there is a quiet problem hiding under that speed: risk. Not “big company” risk. Startup risk. The kind that shows up as a surprise customer security review, a blocked enterprise deal, a model that fails in one edge case and hurts trust, or a regulator question you are not ready to answer.

The NIST AI Risk Management Framework (AI RMF) is a simple way to avoid those surprises. It is not a law. It is not a long checklist you must obey. Think of it like a map. It helps you build AI that is safer, easier to sell, and easier to defend when someone asks, “How do you know this is reliable?”

This playbook is written for founders and builders. It is meant to be used while you are moving fast, not after you “have time.” And it is written the way you would explain it to a smart teammate: clear, direct, and practical.

One more thing before we start: if you are building AI, robotics, or deep tech, your real edge is not only your code. Your edge is what your code makes possible—and what others cannot copy. Tran.vc helps teams turn that edge into strong IP, with up to $50,000 in in-kind patent and IP services, so you can build a moat early without giving up control. If you want to see if you’re a fit, apply anytime here: https://www.tran.vc/apply-now-form/

In the next section, we will turn the NIST AI RMF into a simple startup system you can run every week. No heavy process. No long meetings. Just a few habits that reduce risk and increase deal speed.

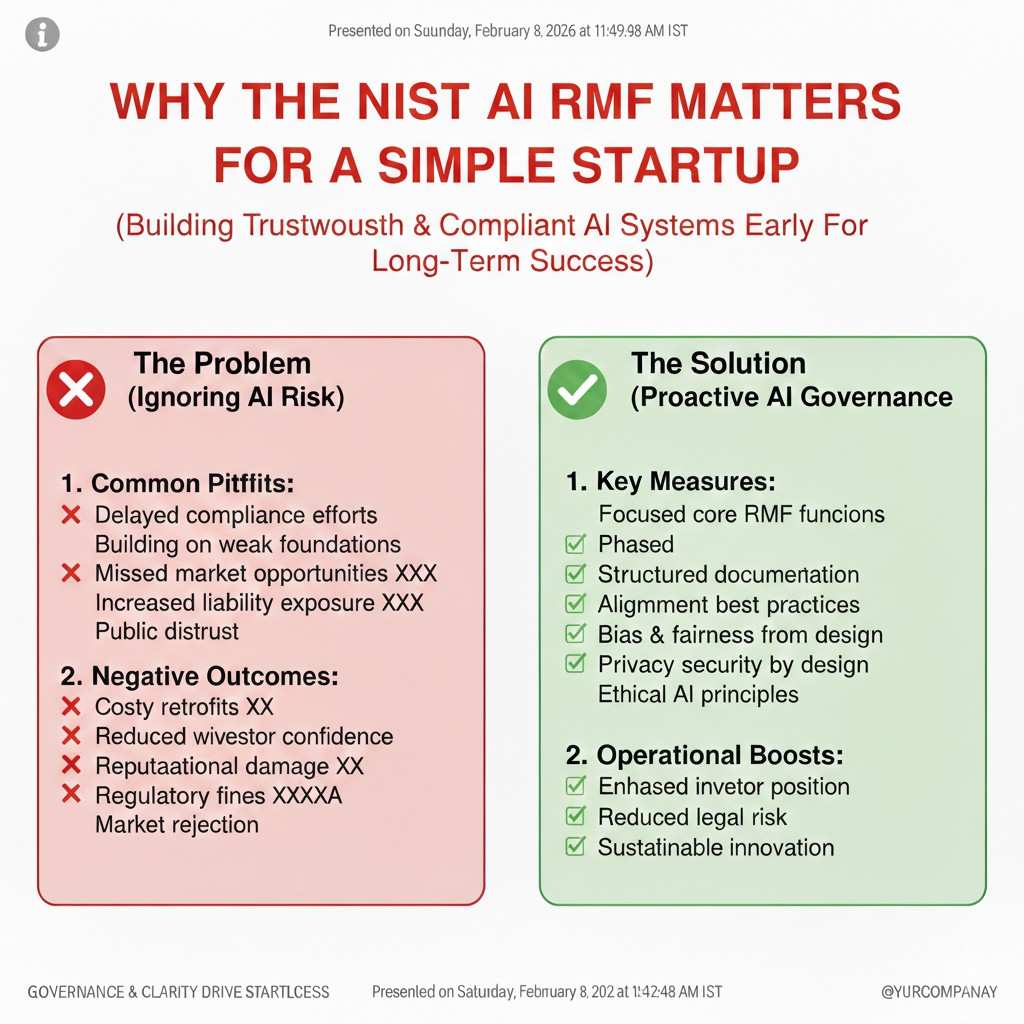

Why the NIST AI RMF matters for a startup

The real reason deals slow down

A startup can build a strong model and still lose a deal. It happens when a buyer asks simple questions and your team has no clear answers. They will ask how the model was tested, what can go wrong, and what you do when it fails. If you respond with guesses or “we will add that later,” trust drops fast.

The NIST AI RMF helps you answer those questions without turning into a big company. It gives you a common way to talk about AI risk. When you use it early, you look prepared, even if you are small. That changes how customers, partners, and investors treat you.

Risk is not just safety, it is business

Many founders hear “risk management” and think it is only about harm or legal trouble. In a startup, risk also means lost revenue, long sales cycles, and sudden rework. One unclear edge case can force a product pause at the worst time, like right before a pilot turns into a contract.

If you treat risk as a product feature, you build faster in the long run. You make fewer painful changes later. You also protect your reputation, which is hard to rebuild once it breaks.

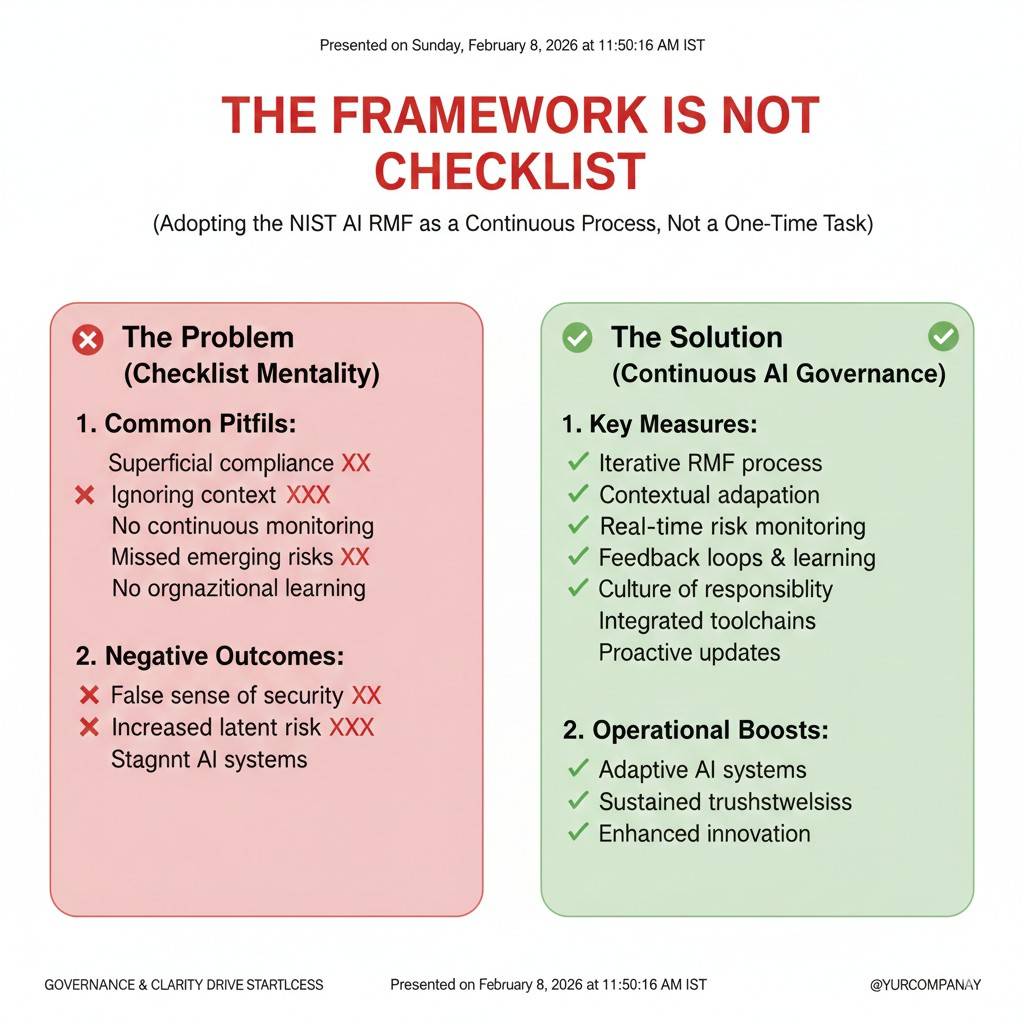

The framework is not a checklist

The NIST AI RMF is a guide, not a strict rulebook. You are not supposed to copy it into a document and call it done. The point is to build a few habits that you repeat, so risk does not sneak up on you.

The easiest way to use it is to turn it into a weekly rhythm. Small actions, done often, beat big process, done once.

The four core functions in plain words

Govern means “who owns the risk”

Governance sounds heavy, but it can be light. It simply means someone is responsible for decisions when tradeoffs show up. If the model is accurate but sometimes unfair, who decides what is acceptable? If a customer wants more data than you feel safe using, who says yes or no?

Startups fail here when nobody owns the call. Work slows down, debates drag on, and the team ships with hidden risk. A simple owner and a simple rule for decisions fixes this.

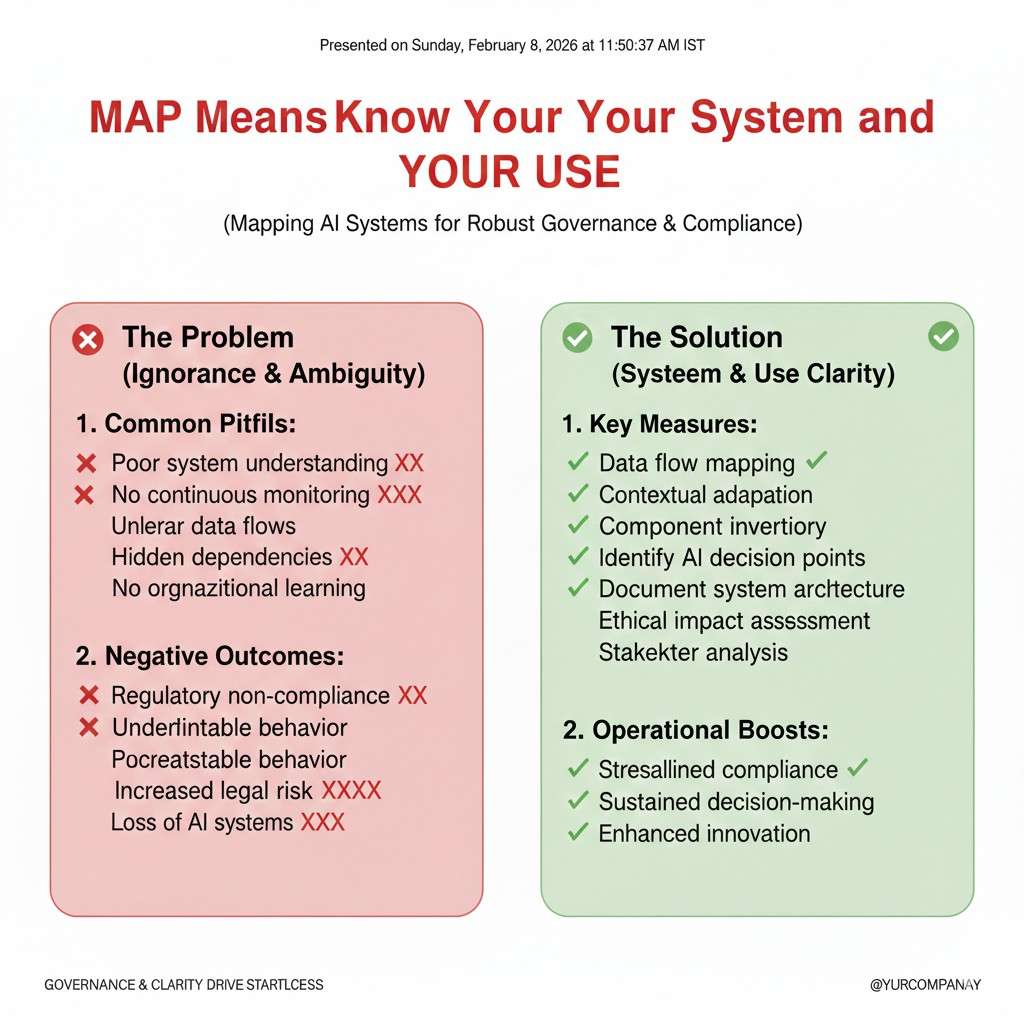

Map means “know your system and your use”

Mapping is about knowing what you built, what it touches, and where it can break. Many teams can describe the model, but they cannot describe the full system. Buyers care about the full system because that is where problems appear.

Map also forces you to be honest about use. The same model can be low risk in one setting and high risk in another. A chatbot for product search is not the same as a system that guides medical choices, even if the model is similar.

Measure means “test what matters”

Measuring is not only accuracy. It is also reliability, bias, security, and how the model behaves under stress. A startup that only tracks one metric is blind in important ways.

The goal is to choose a few tests that match your real risks. Then you run them often, not once per quarter. This becomes your proof when someone asks for it.

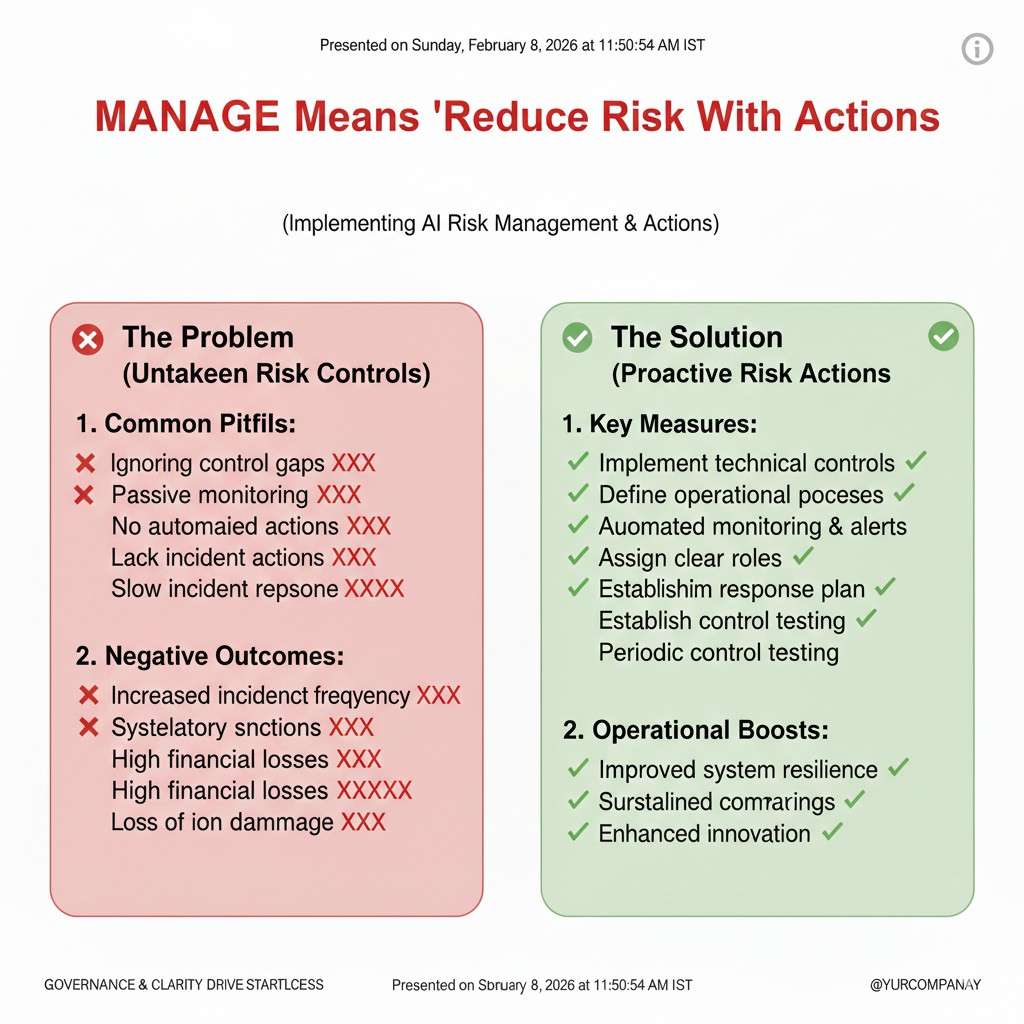

Manage means “reduce risk with actions”

Managing is where you make changes based on what you learned. If a risk is high, you reduce it. If it cannot be reduced, you limit the use. If neither works, you should not ship that feature yet.

This is also where you set response plans. When something goes wrong, you should already know what you will do. That keeps the team calm and keeps customers informed.

A startup-friendly way to run the framework

The weekly “risk standup” that takes 20 minutes

You do not need a committee. You need a short meeting with the right people. One product lead, one engineering lead, and someone who speaks for the customer. If you have a security or compliance advisor, bring them in when needed, not every time.

In that meeting, you review what changed this week. You ask what new data came in, what features moved, and what new customer use cases appeared. Then you pick one risk to focus on and one action to take. The goal is steady progress, not perfect coverage.

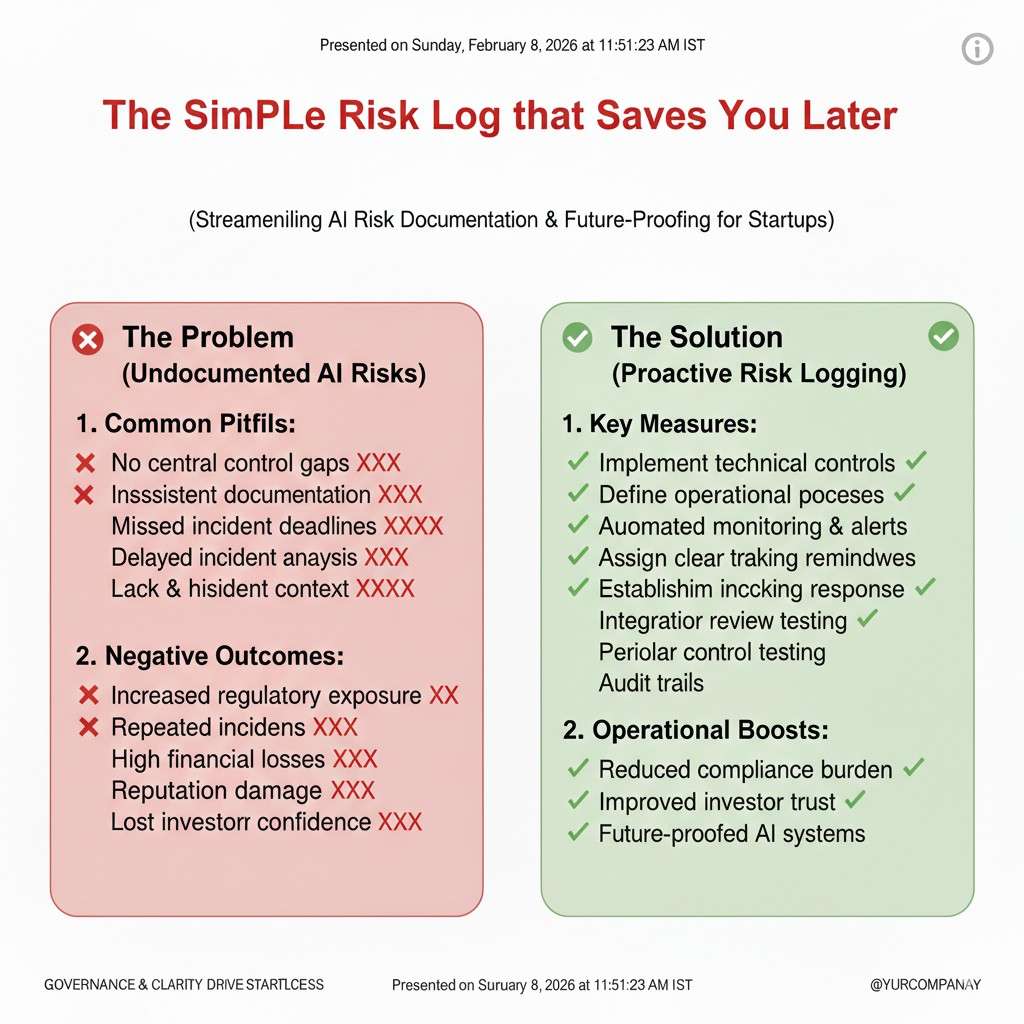

The simple risk log that saves you later

Keep a small document that tracks key risks and what you are doing about them. Do not make it long. If it becomes a novel, nobody will maintain it.

Each risk should have a clear name, why it matters, where it shows up, and what you are doing. Add a date and an owner. Over time, this becomes a history of your decisions. When an investor or customer asks for proof of maturity, you can show it without stress.

The “ship with guardrails” mindset

Startups often feel they must choose between speed and care. You do not. You can ship fast with limits. Guardrails let you launch while keeping the blast radius small if something goes wrong.

Guardrails can be simple. Limit which users get a feature. Add a confidence threshold. Add human review for high impact cases. Log model inputs and outputs for later checks, while protecting sensitive data. These steps are not glamorous, but they prevent painful surprises.

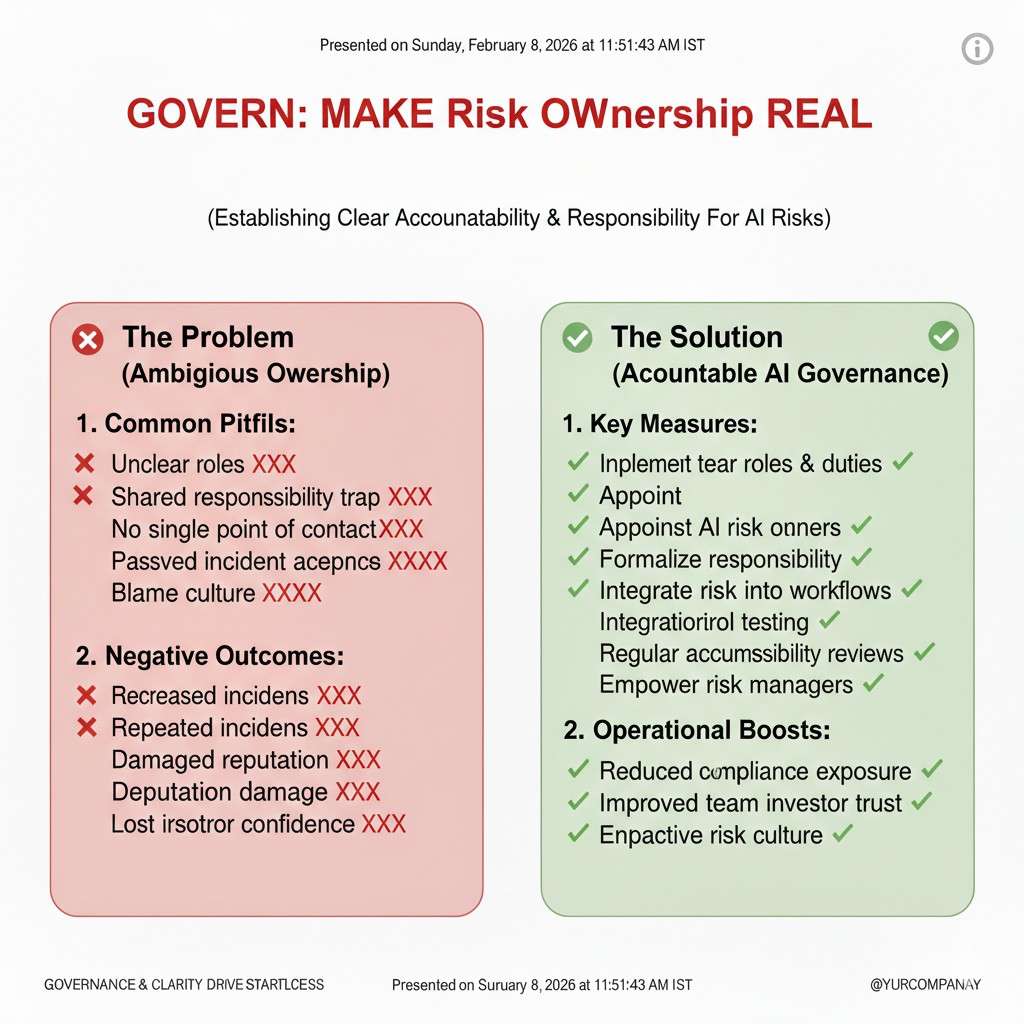

Govern: make risk ownership real

Pick a single decision owner for AI risk

Someone must be able to say, “This is safe enough to ship,” or “Not yet.” This does not mean one person does everything. It means one person has the final call when the team cannot agree.

In many startups, this is the CTO or Head of Product. What matters is clarity. When the owner is clear, the team moves faster. When the owner is unclear, debates repeat and risk grows.

Set your “no-go” lines early

A no-go line is a clear boundary you will not cross. For example, you might decide you will not deploy a model that affects hiring decisions without human review. Or you might decide you will not train on customer data unless you have written permission.

These lines are not about fear. They are about focus. They stop your team from taking on risk that can kill deals or create legal trouble.

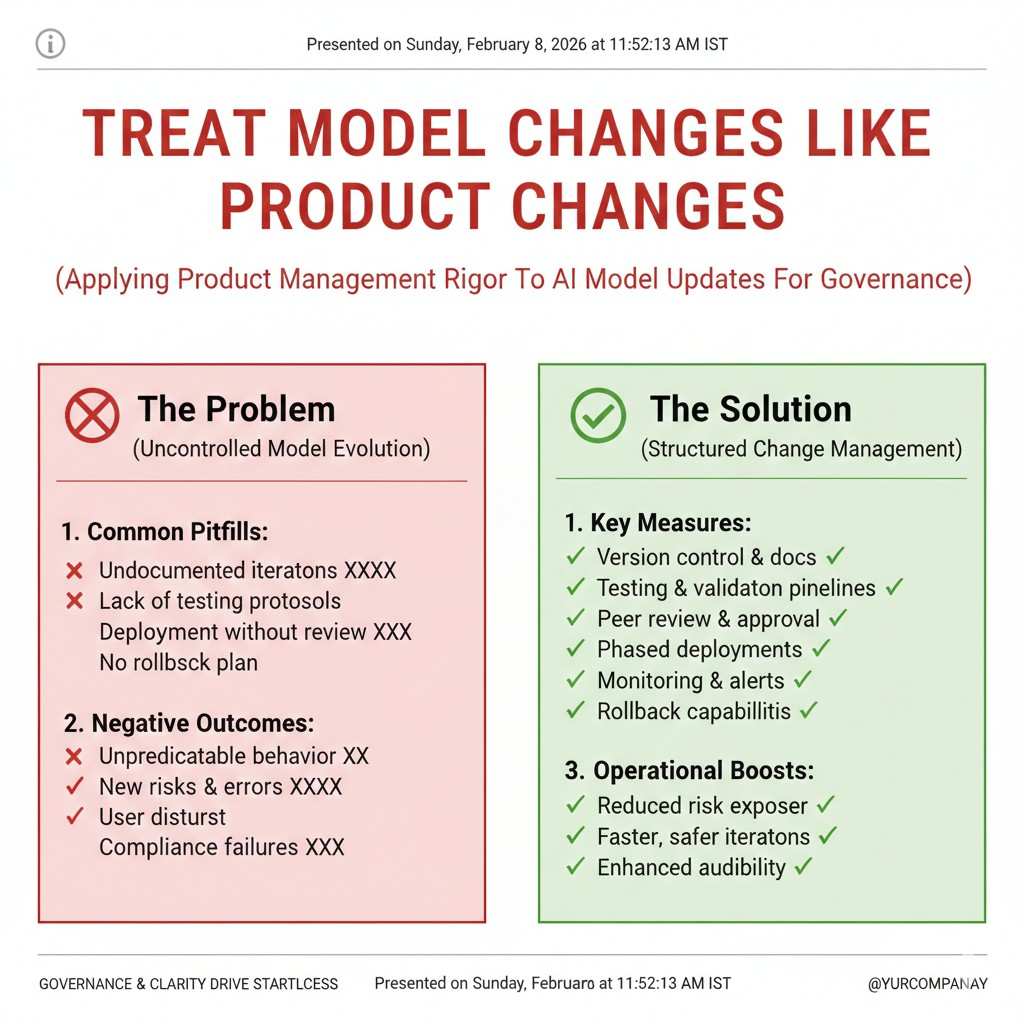

Treat model changes like product changes

A model update can change user outcomes. If you treat it like a silent backend tweak, you will miss problems. Instead, treat model changes like product releases.

Have a short release note internally. Record what changed, what tests were run, and what you expect to improve. If something goes wrong, you can roll back faster because you know what you shipped.

Map: understand your AI system in the real world

Define the real job your model is doing

Many startups describe the model type, like “LLM” or “vision model.” Buyers do not care about the label. They care about the job. Is it ranking? Is it summarizing? Is it detecting? Is it recommending?

When you define the job clearly, you can define failure clearly. That helps you test the right things. It also helps you explain the system to a customer in plain words.

List where the model touches people, money, or safety

Risk increases when your model affects important outcomes. If your AI can change a payment amount, approve an action, or guide a physical robot, the stakes are higher.

Mapping these touchpoints helps you decide where to add guardrails first. It also helps you prioritize which features need stronger review before launch.

Be specific about users and settings

A model may behave well in your test data and fail in a new setting. This happens when the real world differs from your training world. Different language, different lighting, different devices, different workflows.

Write down where the system will be used and by whom. If usage changes, update the map. Many “unexpected” failures are actually “we forgot the setting changed.”

Measure: choose tests that match your true risk

Accuracy is not enough

Accuracy can hide a lot. A model can be “good on average” and still harm a small group of users. It can also be accurate but unstable, where small input changes create wild output changes.

Measuring should include stability, edge cases, and performance in the hardest scenarios. The point is to see the cracks before customers do.

Test for harmful and misleading output

If your system generates text, images, or actions, you need to know how it fails. Hallucinations, unsafe advice, and toxic output can show up at the worst time, like during a demo or a pilot.

You can build a small set of “red team” prompts that represent your business. These are not generic. They should be based on your users. Run them each time you change a model or prompt, so you can see drift early.

Watch for data leaks and privacy risk

Startups often log everything because logs help debug. That is understandable, but it can create risk if sensitive data lands in places it should not. Some customers will ask how you handle this before they sign.

Measurement here looks like audits of what you store, who can access it, and how long you keep it. It also means checking whether a model can repeat sensitive text it saw before. If you cannot answer these questions, you will hit a wall with enterprise sales.

Manage: reduce risk with practical actions

Start with the highest impact failures

Do not try to fix everything at once. Focus on failure modes that can cause the most harm or the biggest business loss. This usually includes wrong outputs in high-stakes cases, security issues, and reliability problems that make the product feel broken.

When you fix the highest impact failures first, you gain confidence and you gain better customer trust. You also avoid spending months on low-value polish.

Use product limits as a safety tool

A simple limit can cut risk sharply. If your model is less reliable on certain inputs, you can block those inputs or route them to a safer path. If the system is not ready for certain users, you can delay access.

This is not a weakness. It is a smart launch strategy. Buyers prefer honest limits over hidden flaws.

Prepare a response plan before you need it

When something fails, the worst time to decide what to do is in the moment. A simple plan keeps you calm and keeps your customer informed. It also helps you collect the right evidence to fix the issue.

Your plan should include who gets notified, how you pause or roll back, and how you communicate. It should also include how you learn from the event, so you do not repeat it.

Turn the framework into a sales advantage

Build answers to common customer questions

Enterprise buyers often ask the same things. How do you test models? How do you handle drift? How do you prevent harm? How do you protect data? If you can answer quickly and clearly, you move faster than competitors.

The NIST AI RMF gives you a clean structure for those answers. You can turn it into a short “AI trust brief” that supports sales calls and security reviews.

Show evidence, not promises

A promise like “we take safety seriously” does not help. Evidence helps. Evidence can be test results, release notes, incident response steps, and clear ownership.

When you build this evidence as you go, it is not extra work later. It becomes part of how you build. That is what mature teams do, even at a small size.

Protect what makes you different

If you are building something novel, your risk work and your IP work should support each other. The same clarity you build in mapping and measuring can help you describe what is truly unique about your system.

Tran.vc helps technical teams lock that uniqueness into defensible IP early, with up to $50,000 in in-kind patent and IP services. If you want to see what that could look like for your product, you can apply anytime here: https://www.tran.vc/apply-now-form/

NIST AI RMF for Startups: A Simple Playbook

A 30-day startup playbook you can actually run

Week 1: Decide what “safe enough” means for your product

In the first week, your goal is not to write policies. Your goal is to remove confusion. Most AI teams move fast, but they move in different directions because each person has a different idea of what “good” looks like. One person thinks it is accuracy. Another thinks it is speed. Someone else worries about harm. All of them are right, but the team still needs one shared target.

Start by describing the job your AI is doing in one short paragraph. Not the model type, not the architecture, not the tooling. The job. If your AI is ranking leads, say that. If it is steering a robot arm, say that. If it is summarizing legal text, say that. This paragraph is the anchor you will reuse in sales calls, in your docs, and in your test plan.

Then define what failure looks like in real life. Not in theory. Real life. A failure is not “loss went up.” A failure is “the system tells a warehouse worker to pick the wrong bin,” or “the assistant invents a policy clause that does not exist,” or “the model misses a defect and a bad part ships.” When you name failure clearly, your team stops arguing about abstract ideas and starts fixing real outcomes.

Now choose the small set of outcomes you will protect. For many startups, that set is three things: avoid high-impact harm, protect customer data, and keep performance stable over time. Your set may differ, but it should be small enough to remember. If it is too big, it will not guide decisions when you are under pressure.

Week 1: Set ownership so decisions do not stall

The fastest teams are not the ones who skip hard topics. They are the ones who decide quickly. That only happens when ownership is clear. Pick one person who has the final call on AI risk tradeoffs. This does not mean they work alone. It means when two smart people disagree, the work still moves.

Also decide who can approve a launch. Many startups release models through a single engineer pushing a change. That is fine for early prototypes, but it becomes risky when customers depend on you. A simple rule helps: any model change that can change user outcomes needs a second set of eyes. You do not need a board. You need one reviewer and a habit.

Finally, decide how you will handle surprises. A surprise can be a user report, a bad output that shows up on social media, a customer security question, or a drift issue after a data shift. If your team already knows who responds and what the first steps are, you will look calm and capable when it matters most.

Week 2: Map your system like a buyer will map it

In week two, you build a map that matches reality. Most founders can describe the model. Fewer can describe the full system. Buyers and partners care about the system, because that is where risk lives. Data flows, prompts, retrieval, tools, human steps, monitoring, and updates all change the outcome.

Write down where your data comes from, how it is cleaned, and how it becomes model input. If you use customer data, say how it is separated. If you use third-party data, note the source and the allowed use. If you use web data, note how you remove sensitive or private items. This does not need to be long. It needs to be true.

Then write down what your system produces, who sees it, and what they can do with it. If your AI produces a recommendation, can a user act on it with one click? If your AI produces a robot action plan, does a human confirm it? These details define risk. They also define where guardrails should go.

Now make one more map that many startups skip: the “misuse map.” Ask how a user could use your system in a way you did not intend. This can be accidental misuse, like a user relying on output as final truth. It can also be intentional misuse, like trying to extract private training examples or using your system for harmful tasks. You do not need to solve every misuse path in week two. You do need to know the most likely ones, so you are not surprised later.

Week 2: Identify your “high stakes” moments

Risk is not equal across your product. Some moments carry more weight than others. A wrong summary in a chat may be annoying. A wrong instruction for a robot can break equipment or hurt a person. A wrong decision in a finance workflow can cause real loss.

Choose the parts of your product where an error is costly. These become your “high stakes” moments. In those moments, you should add extra checks. You should also measure more carefully. Doing this makes your system feel dependable, even if other parts are still improving.

This is also the week to decide where you need humans in the loop. Many founders avoid this because it feels slow. But early on, human review can be your best safety tool. It also creates labeled data and real feedback, which improves the model faster than guessing.

Week 3: Measure what breaks trust, not what looks good on a dashboard

In week three, you build a test routine that fits your product. The mistake here is copying a generic test list from the internet. Generic tests may not match your real use. You need tests that look like your customers. The closer your tests are to reality, the less you get surprised in pilots.

Start with a small “truth set.” This is a set of examples where you know the right outcome. It can be 50 examples or 500. It does not need to be huge. What matters is that it represents the real cases that matter most. If your product has rare but important edge cases, include them on purpose. Do not leave them out because they make numbers look worse.

Add a small “stress set.” These are inputs designed to push the model into failure. For an assistant, this could be tricky prompts that tempt hallucination. For vision, it could be poor lighting or unusual angles. For robotics, it could be sensor noise and timing changes. The goal is to find failure before customers do.

Then add a “drift check.” Drift is when the world changes and your model’s performance changes with it. Drift can come from new user behavior, new data patterns, season changes, new devices, or new product features. You can keep drift checks simple. Run the same truth set each time you change the model. Track key numbers. If they move in the wrong direction, pause and inspect.