Regulations can feel like a wall you hit after you start building. For deep tech founders, it is better to see that wall early—while you still have freedom to shape the product, the data flows, the business model, and your IP. This guide is about doing that in a calm, practical way for three tough areas: health, fintech, and AI. The goal is not to turn you into a lawyer. The goal is to help you avoid the common traps that burn months, money, and trust.

If you are building something in robotics, AI, or other advanced tech—and you want to turn your work into real business assets—Tran.vc can help you protect the core with patent and IP services worth up to $50,000. You can apply any time here: https://www.tran.vc/apply-now-form/

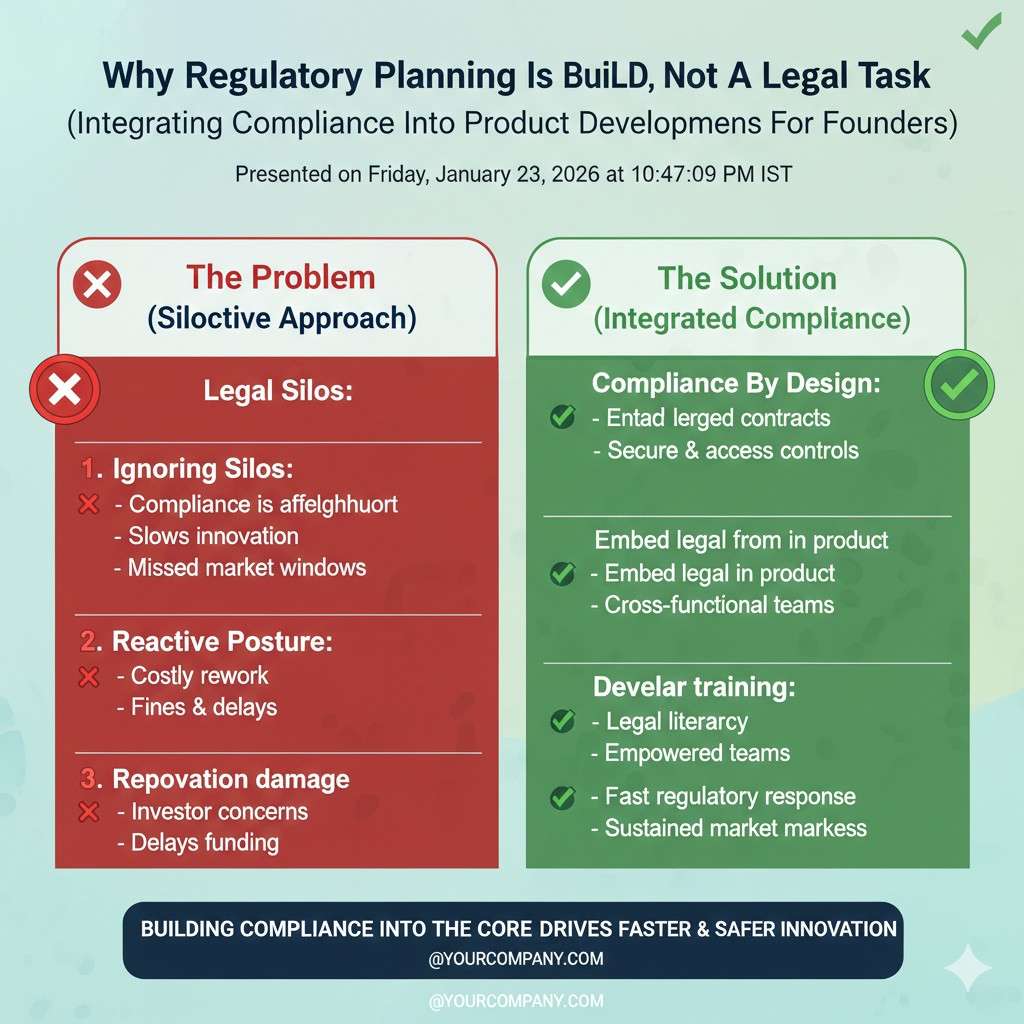

Why regulatory planning is a build skill, not a legal task

Most founders treat rules like paperwork. That is the first mistake.

Rules shape what you can sell, who will buy, how fast you can ship, and what proof you need. If you plan early, rules become a design input—like battery life, latency, or unit cost. If you plan late, rules become a surprise tax.

Here is a simple way to think about it. Every regulated product has three parts:

- The claim: what you say it does.

- The path: what you must do to sell it.

- The proof: what you must show to keep selling it.

Your claim is the steering wheel. Small changes in words can change your whole regulatory path. “Helps you track symptoms” is not the same as “detects disease.” “Shows spending” is not the same as “gives personal financial advice.” “Suggests text” is not the same as “decides who gets a loan.”

So the first step is not “talk to a regulator.” It is to write down your product claim in plain words, then test the claim like a stress test.

Ask:

- If I say this out loud to a customer, what will they believe I mean?

- If something goes wrong, what harm could happen?

- If I put this on a website, what would a regulator think I am selling?

- If a big company copied this, what part would I want to protect with patents?

That last question matters. Regulatory planning and IP planning work best as one system. If the rules force you to build a certain process, that process can be patentable. If the rules force you to gather evidence in a certain way, that method can also become a moat. Smart founders do not “comply” and move on. They comply in a way that creates defensible assets.

That is the Tran.vc model in one line: protect what matters early, so you can raise with leverage later. If you want that support, apply here: https://www.tran.vc/apply-now-form/

The shared pattern across health, fintech, and AI

These three fields look different on the surface. Underneath, they are the same game. They all deal with trust. Trust has a cost, and rules are one way society collects that cost.

In health, the trust problem is physical harm.

In fintech, the trust problem is money harm.

In AI, the trust problem is decision harm at scale.

Your regulatory plan should answer four questions in order:

1) What are we building, really?

Not the pitch. The real thing. The full system: app, model, device, data, and humans in the loop.

2) Who can be harmed, and how?

Not “users” in general. Name the groups. Patients. Nurses. Kids. Borrowers. Small merchants. People who get flagged. People who do not speak English well. People with no credit file.

3) What rules attach to that harm?

You are not reading every law. You are mapping risk to rule types.

4) What will we do now, this month, to reduce risk and keep shipping?

The plan must produce actions that fit a startup schedule.

Now let’s walk through each area.

Regulatory planning for health products

If you build for health, you must be very clear on what you are: a wellness product, a clinical tool, a medical device, a lab service, a support system, or something else. Many founders accidentally step over the line because the marketing gets ahead of the evidence.

Start with the “claim ladder”

Imagine a ladder. The lowest rung is “general wellness.” The top rung is “diagnosis and treatment.” The higher you climb, the more proof you must provide.

A good early tactic is to start lower on purpose while you build evidence. That is not “playing small.” It is sequencing.

For example, if your long-term goal is to detect early heart issues, your first shipped product might be: “helps you notice patterns and talk to your doctor.” That can let you test engagement, data quality, retention, and model behavior without making a medical claim you cannot defend yet.

This is not just a legal trick. It helps your product. Early users do not want a robot doctor. They want clarity, a next step, and a reason to trust the output.

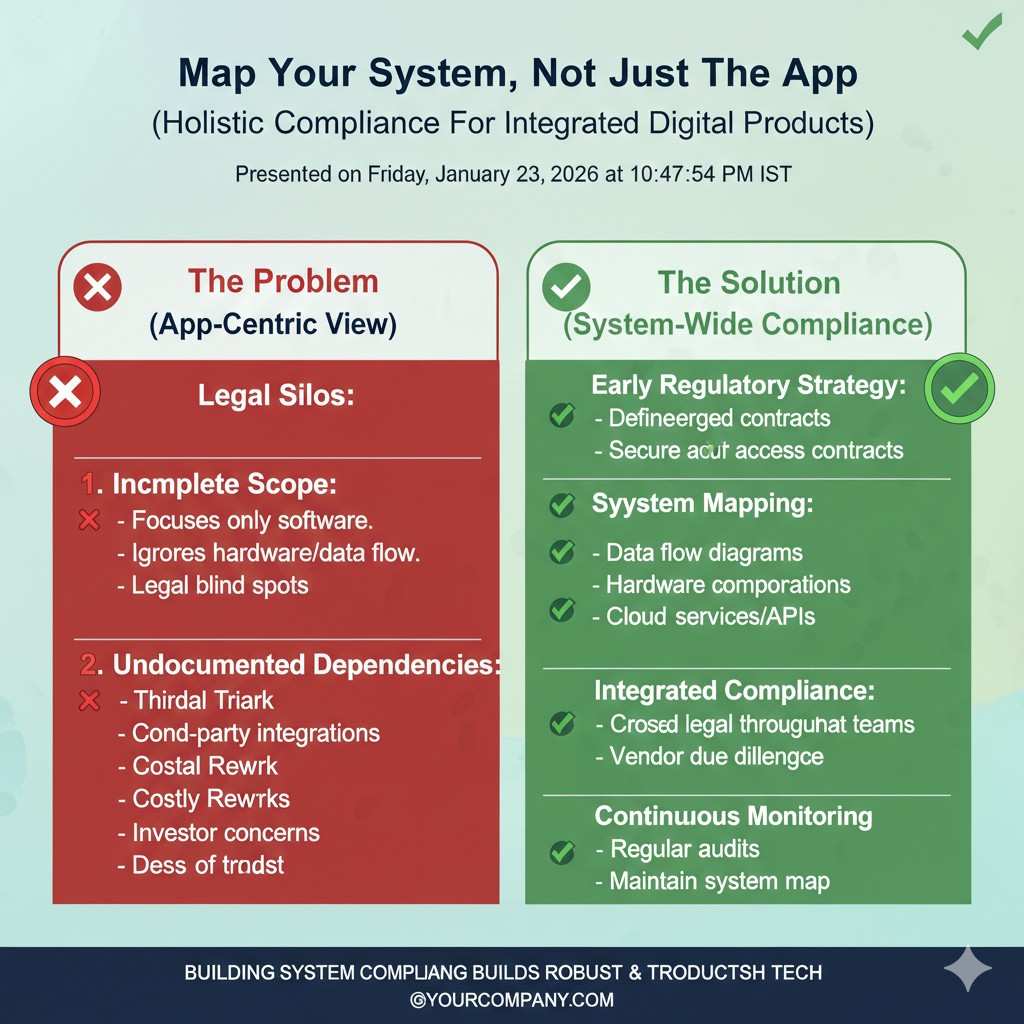

Map your system, not just the app

Regulators and customers do not see “an AI model.” They see a system that can affect care.

So draw your system in plain words:

- Where does data come from?

- Where is it stored?

- Who sees it?

- What does the model output?

- Who acts on it?

- What happens if the output is wrong?

- How do you detect that it is wrong?

When you do this, you will notice risk points right away. Maybe your output will be used by a clinician, but your UI looks like it is telling the patient what to do. Maybe you train on hospital data, but your model will be used in a different setting. Maybe you rely on a wearable sensor that drifts over time.

Those are not “later problems.” Those are design problems today.

Build a proof plan that matches your stage

Health proof can mean many things. It can mean clinical study data. It can mean bench testing. It can mean software validation. It can mean quality processes.

Early-stage founders often make two mistakes:

Mistake one: they aim for perfect proof too early.

They plan a full clinical trial before they even know what feature users will keep. That is a fast way to burn cash.

Mistake two: they collect no proof at all.

They ship with vibes and hope. Then a partner hospital asks for evidence and the deal dies.

The right approach is a stepwise proof plan. You should always be collecting some form of proof that your system is safe and works as intended, even if it is not yet the “final” proof needed for full regulatory clearance.

A practical way to do this is to split proof into three buckets:

Safety proof: show you do not create harm by design.

That can be basic: clear warnings, escalation paths, not showing scary outputs without context, and limits on what the tool can do.

Performance proof: show your output is stable and meaningful.

Start with internal validation. Then small external tests. Then deeper studies.

Process proof: show you build and ship in a controlled way.

Version control, change logs, tests, access logs. Not fancy. Just real.

This is where many founders can turn compliance into IP. If you invent a better way to monitor model drift for a medical workflow, that can be protectable. If you invent a method to calibrate sensors in the field with less cost, that can be protectable. Rules push you to create structure. Structure can become a moat.

Tran.vc is built for this kind of work: turning technical steps into defensible IP. If you want help with patent strategy and filings while you build, apply here: https://www.tran.vc/apply-now-form/

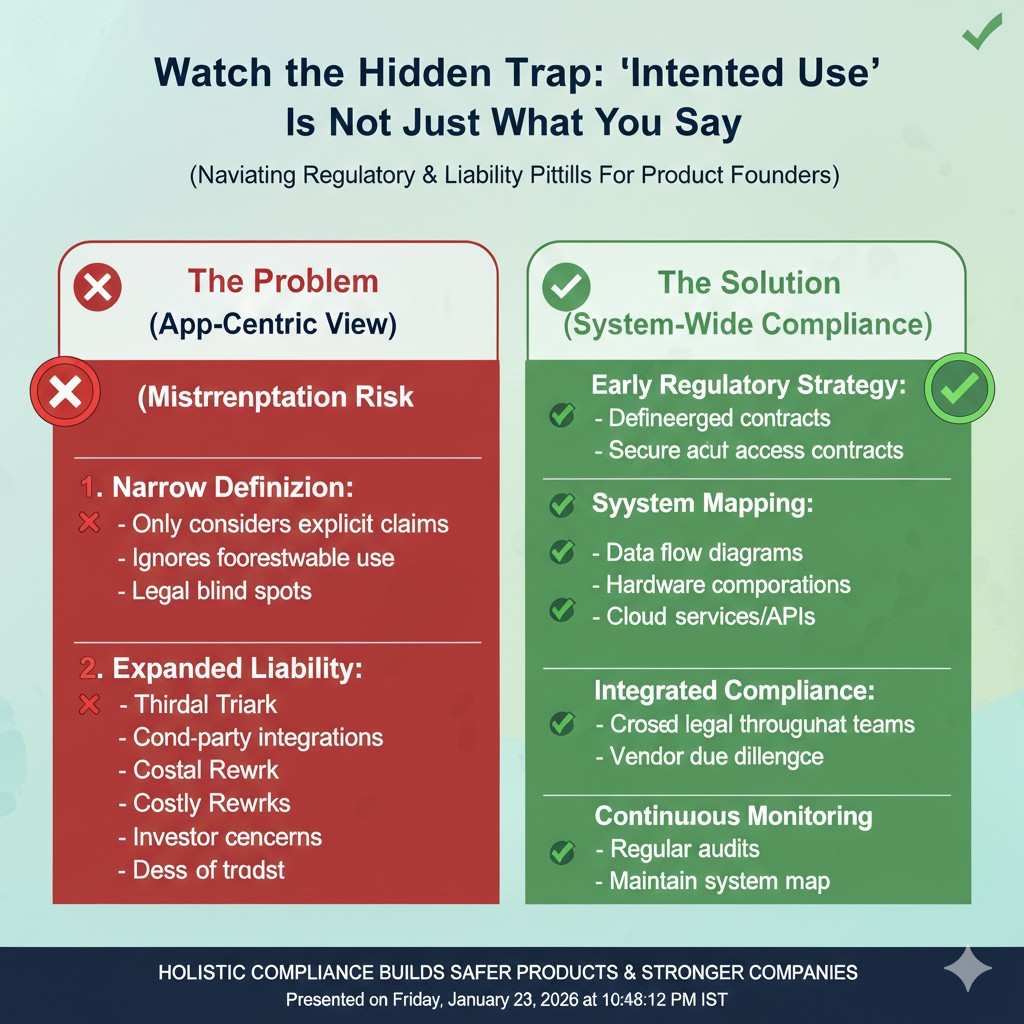

Watch the hidden trap: “intended use” is not just what you say

In health, what matters is not only what you claim. It is also what you imply.

Your UI, onboarding, help docs, and even your sales calls can change how the product is seen. If your tool is being used like a medical device, it may be treated like one. If you show “risk scores” with medical labels, you may be stepping into a higher category.

A simple tactic: create a one-page “intended use guide” for your own team. It should say:

- what the tool is for

- what it is not for

- who the user is

- what decisions it can support

- what decisions it must not make

Then train your sales and support teams on it. This seems boring. It saves you later.
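One way to keep marketing copy, UI strings, and support scripts in line with the intended use guide is a small automated check. The sketch below is only an illustration: the phrase list is a hypothetical example, not a regulatory standard, and the real list would come from your own guide and counsel.

```python
import re

# Hypothetical phrases a team might decide fall outside its intended use.
# These are illustrative assumptions, not an official or complete list.
OUT_OF_SCOPE_PHRASES = ["diagnose", "detects disease", "treats", "cure"]


def flag_out_of_scope(copy_text, phrases=OUT_OF_SCOPE_PHRASES):
    """Return the out-of-scope phrases found in a piece of copy.

    Matching is case-insensitive and anchored at the start of a word,
    so "diagnose" also matches "Diagnoses" but "cure" does not match "secure".
    """
    found = []
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase)
        if re.search(pattern, copy_text, flags=re.IGNORECASE):
            found.append(phrase)
    return found
```

A check like this could run over website copy or release notes before they ship. It catches only literal wording, so it supplements team training rather than replacing it.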

Plan for privacy and security like a product feature

Health data is sensitive. Even when you think you are not “in healthcare,” you may still handle sensitive data. Build as if you will be audited.

The early moves that pay off:

- Use data minimization: only collect what you truly need.

- Separate identities from health signals where possible.

- Log access and changes.

- Use role-based access inside your team.

- Have a clear plan for deletion and retention.

Even if you are tiny, these habits make it easier to partner with hospitals, payers, and enterprise buyers. They also reduce the blast radius of mistakes.
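To make "separate identities from health signals" concrete, here is a minimal sketch that splits one incoming record into an identity row and a signal row linked only by a keyed pseudonym. The field names (`user_id`, `email`, `heart_rate`, `recorded_at`) and the key handling are assumptions for illustration. A real system would keep the key in a secrets manager and follow its own data model.

```python
import hashlib
import hmac
import secrets
from typing import Tuple

# Hypothetical key for the sketch. In practice it would be loaded from a
# secrets manager and stored apart from the health-signal data.
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonym_for(user_id: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Derive a stable pseudonym from a user ID with HMAC-SHA256."""
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def split_record(record: dict, key: bytes = PSEUDONYM_KEY) -> Tuple[dict, dict]:
    """Split a raw record into an identity row and a health-signal row.

    The two rows share only the pseudonym, so they can live in separate
    tables with separate access rules.
    """
    pid = pseudonym_for(record["user_id"], key)
    identity_row = {
        "pseudonym": pid,
        "user_id": record["user_id"],
        "email": record["email"],
    }
    # Data minimization: copy only the signal fields the product needs.
    signal_row = {
        "pseudonym": pid,
        "heart_rate": record["heart_rate"],
        "recorded_at": record["recorded_at"],
    }
    return identity_row, signal_row
```

With this shape, most of the team can work with signal rows without ever seeing who they belong to. Pseudonymized data can still be sensitive, so access logging and retention rules apply to both tables.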

Now, before we move into fintech and AI, take a breath and do a quick self-check.

If you are a health founder, can you answer this in one clean sentence?

“What is our product, who uses it, and what decision does it support?”

If you cannot, that is your first work item.

Regulatory planning for fintech products

The first job is to name what you are doing with money

Which fintech rules apply depends heavily on one simple thing: are you moving money, holding money, advising on money, or helping someone decide about money? Many startups say, “We are just software,” but the user experience can still trigger financial rules if it shapes real outcomes. The cleanest early move is to write down your exact money flow in plain words, from the first click to the final settlement.

When you describe the flow, be specific about where funds sit at each step. If money ever touches your accounts, even for a short time, you may be stepping into a more regulated lane. If you never touch funds, but you “direct” funds, you may still have obligations depending on partners and geography. This is why fintech planning starts with mapping, not legal terms.

Treat “we don’t custody funds” as a design constraint

A common early strategy is to avoid holding customer funds at the start. That can reduce complexity, lower risk, and speed up shipping. But it only works if your product truly behaves that way. Your UI, your terms, and your partner contracts must all line up with the same story. If a regulator or a bank partner sees mixed signals, they will slow you down.

Design your product so the customer clearly understands who holds the money and who is responsible for the account. If you use a partner bank, make their role visible in the product. If you rely on a payment processor, make the payment steps transparent. Clear lines reduce confusion, and confusion is where complaints begin.

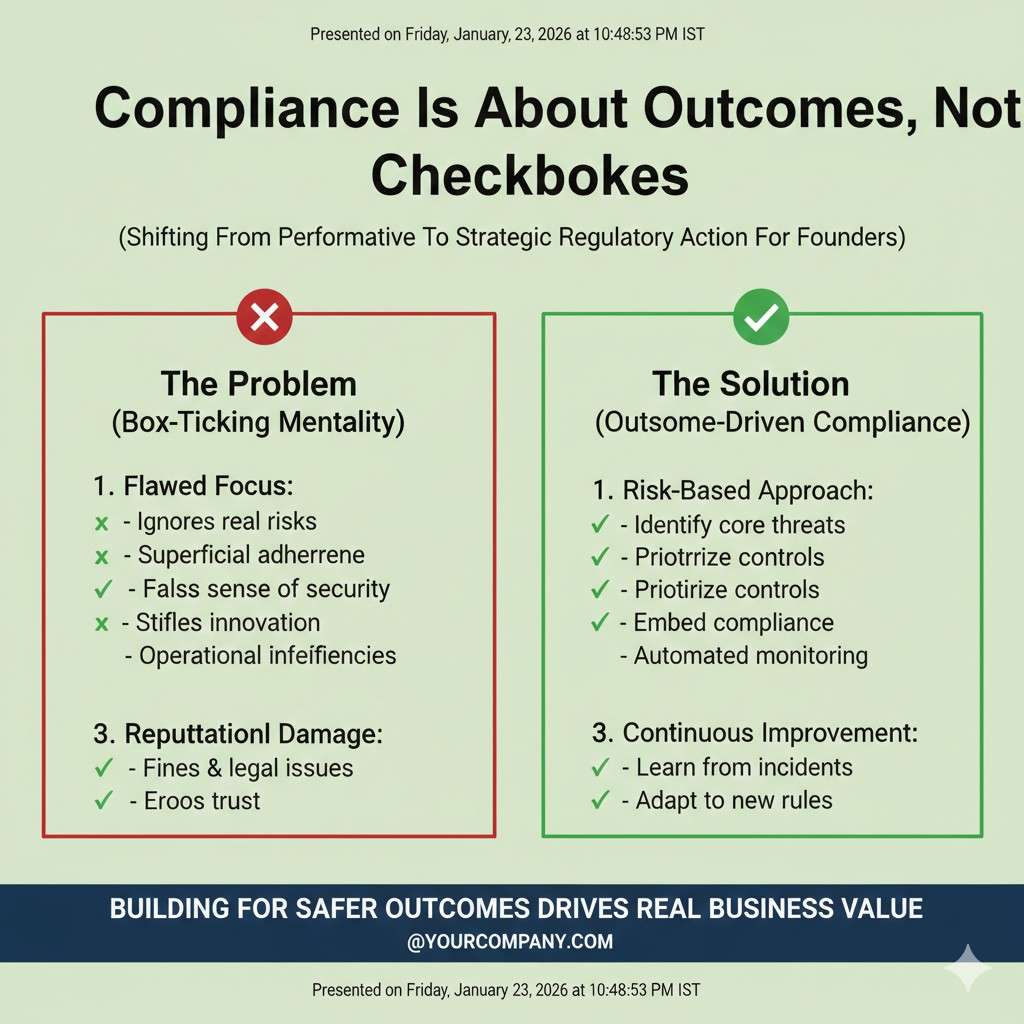

Compliance is mostly about stopping bad outcomes, not checking boxes

Fintech compliance is often described as “forms and rules,” but the real goal is simple: stop fraud, stop abuse, stop harmful mistakes, and keep records so problems can be traced. Even if you are not required to do everything on day one, the best fintech teams build a product that behaves like it will be reviewed tomorrow.

Start with the user journeys where harm is most likely. That includes onboarding, account access, password resets, chargebacks, refunds, and disputes. These are not edge cases. They are the moments where real money gets lost and trust collapses. If your product handles these poorly, growth will not save you.

Know the difference between identity checks and risk checks

Founders often bundle all “checks” into one bucket, but they serve different jobs. Identity checks aim to confirm that the user is a real person who matches the data provided. Risk checks aim to detect suspicious behavior and stop bad transactions. You need both, but you should treat them as separate systems with separate signals.

If your onboarding is too strict, good users will bounce and you will grow slowly. If your onboarding is too loose, fraud will eat you alive and payment partners will cut you off. The tactic is to tune checks in stages, then measure how often they block good users versus how often they stop real abuse. Good fintech teams treat this as a living system, not a one-time setup.
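The two numbers described above, how often a check blocks good users and how often it stops real abuse, can be computed from a sample of reviewed cases. This is a rough sketch: the `ReviewedCase` type is hypothetical, and it assumes you have a later ground-truth label for each case, which in practice arrives with delay and some noise.

```python
from dataclasses import dataclass


@dataclass
class ReviewedCase:
    blocked: bool     # did the check block this user or transaction?
    fraudulent: bool  # ground truth from later review (assumed available)


def check_rates(cases):
    """Return the good-user block rate and the fraud catch rate for one check."""
    good = [c for c in cases if not c.fraudulent]
    bad = [c for c in cases if c.fraudulent]
    good_blocked = sum(c.blocked for c in good)
    bad_blocked = sum(c.blocked for c in bad)
    return {
        # Share of legitimate users the check turned away.
        "good_user_block_rate": good_blocked / len(good) if good else 0.0,
        # Share of fraudulent cases the check stopped.
        "fraud_catch_rate": bad_blocked / len(bad) if bad else 0.0,
    }
```

Tracking both rates for each stage of checks, release after release, shows whether a stricter rule is buying real fraud reduction or just losing good users.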

Be careful with anything that sounds like “financial advice”

If your product suggests what someone should do with money, you must be cautious with wording, UX, and customer support scripts. “Here is your spending” is different from “you should move money into this account.” “Here are options” is different from “this is the best option for you.” The more personal and direct your guidance becomes, the more you drift toward regulated advice in many places.

A practical early move is to frame outputs as education and tools, not commands. You can provide explanations, comparisons, and calculators while still letting the user make the final choice. If your long-term plan is to deliver advice, you can build toward that with proof, controls, and the right partners. The mistake is to imply advice before you have the structure to support it.

Build your partner plan before you build the full product

Many fintech startups succeed by building on top of regulated partners, not by becoming a regulated entity immediately. That can include sponsor banks, payment processors, card issuers, and compliance platforms. But partner-based fintech has its own rules, and the biggest rule is this: your partner will demand evidence that you are safe to work with.

They will want to see your policies, your monitoring, your incident plan, and your ability to respond fast when something goes wrong. You do not need a huge team for this, but you do need clear processes. If you wait until you are in a deal process, you will scramble and lose months.

If you are building deep tech inside fintech, this is also where IP can matter. A unique fraud detection method, a secure workflow for approvals, or a data minimization approach that still supports strong checks can become protectable. Tran.vc can help you shape that into patentable work while you build, and you can apply here: https://www.tran.vc/apply-now-form/

Record-keeping is your future defense

In fintech, a serious issue can turn into a “show me what happened” moment very fast. If you cannot reconstruct events, you will lose trust with users, partners, and sometimes regulators. Logging is not glamorous, but it is one of the highest leverage moves you can make early.

Your logs should answer basic questions without drama. Who logged in, from where, and how. What changed on the account and when. What payment was initiated, approved, rejected, or reversed. Which rules fired. Which human reviewed it, if any. When you have this, you can debug problems and handle disputes with confidence instead of panic.
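As a sketch of what such a log entry might look like, the example below models one audit event and serializes it as a single JSON line. The field names and action strings are assumptions chosen to mirror the questions above, not a required schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


def _utc_now() -> str:
    """Current time as an ISO 8601 string in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditEvent:
    actor_id: str                      # who acted
    action: str                        # e.g. "login", "payment_initiated"
    target: str                        # account or payment affected
    source_ip: Optional[str] = None    # from where
    auth_method: Optional[str] = None  # how they logged in
    rules_fired: List[str] = field(default_factory=list)  # which rules fired
    reviewer_id: Optional[str] = None  # which human reviewed it, if any
    occurred_at: str = field(default_factory=_utc_now)    # when


def to_log_line(event: AuditEvent) -> str:
    """Serialize one event as a single JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)
```

One event per line keeps the log easy to append to, search, and replay when someone asks what happened on an account.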

Design for the “complaint path” on day one

Many founders build for happy paths and ignore complaints. In finance, complaints are not just customer service events. They can become formal reports, chargebacks, and platform bans. A complaint path is the set of steps a user takes when they believe something went wrong, and the steps you take to resolve it.

Make it easy to report issues. Make it clear what timelines look like. Provide a real way to reach support. Even if you are small, define who owns escalations and what triggers an urgent review. Fast and respectful resolution lowers refunds, reduces public anger, and keeps partners calm.

Geography is not a detail in fintech

In fintech, where your customer lives matters. Where your company is based matters. Where the partner bank is located matters. A plan that works in one place may fail in another. This is why fintech regulatory planning is always tied to launch sequencing.

A useful approach is to pick one initial region where you can be excellent, then expand. Expansion becomes easier when you already have a working compliance system, even if it is lightweight. If you try to launch everywhere at once, you will drown in differences and end up shipping nothing stable.

Regulatory planning for AI products

AI rules are really about outcomes, not algorithms

People talk about “AI regulation” as if it targets models and math. In practice, most rules focus on what the system does to people. If your AI influences health decisions, money decisions, or access to jobs and housing, you will be treated as high risk in many places. If your AI is used for fun, low-stakes tasks, the burden is often lighter.

So start by naming the decision your AI affects. Then name what happens when it is wrong. In AI, scale is the multiplier. A small error repeated across thousands of users becomes a serious harm. Regulators and enterprise buyers think this way, even if startups do not at first.

Separate three things: data risk, model risk, and product risk

AI planning is cleaner when you split the system into three risk types. Data risk is about how you collect, store, and use information, including consent and sensitive data. Model risk is about accuracy, drift, bias, and unexpected behavior. Product risk is about how humans interpret outputs and act on them.

These risks interact, but they need different controls. A model can be strong, yet the product can still cause harm if users misunderstand it. A product can be careful, yet still be risky if the data source is not allowed or is poorly secured. When you separate the risks, you can fix the right thing instead of adding random guardrails.

Start with a “use policy” that is written like product copy

Most AI teams write internal policies that read like legal docs and then nobody follows them. A better method is to write a short use policy in plain words that sounds like a product manual. It should state what the tool is for, what it cannot do, and where humans must review outputs.

This policy becomes your anchor. It guides your UI wording, your user training, and your sales messages. It also gives you a consistent story when partners ask, “How do you prevent misuse?” The earlier you lock this down, the fewer surprises you will face later.

Make your model behavior measurable in the real world

Founders often show model metrics from a test set and assume the work is done. In real use, inputs change, users behave differently, and edge cases appear. If you cannot detect drift and failures, you are flying blind. The best early tactic is to build monitoring into the product like a core feature, not an add-on.

You want to know when the model is being used in a way you did not expect. You want to know when accuracy drops for a certain segment of users. You want to know when the model starts refusing too much or hallucinating more than usual. This requires instrumentation, careful sampling, and a feedback loop that is respectful of users.
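A minimal sketch of the "accuracy drops for a certain segment" check might look like this. It assumes you already sample production outputs and label them as correct or not. The segment names and the 5-point drop threshold are illustrative assumptions.

```python
from collections import defaultdict


def accuracy_by_segment(outcomes):
    """Compute accuracy per segment.

    outcomes: iterable of (segment, was_correct) pairs from sampled,
    labeled production traffic.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for segment, was_correct in outcomes:
        totals[segment] += 1
        correct[segment] += int(was_correct)
    return {seg: correct[seg] / totals[seg] for seg in totals}


def segments_with_drop(baseline, current, max_drop=0.05):
    """Return segments whose accuracy fell more than max_drop below baseline."""
    return sorted(
        seg
        for seg, acc in current.items()
        if seg in baseline and baseline[seg] - acc > max_drop
    )
```

Comparing each week's sample against a fixed baseline gives an early signal of drift for a specific user group even when the overall average still looks healthy. Small segments produce noisy estimates, so sample sizes matter before acting on an alert.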

Put human review where it matters, not everywhere

“Human in the loop” is often used as a blanket safety line, but it only works when the human is placed at the right point. If you add human review for every output, you will not scale and you will create delays that users hate. If you add no human review, you may create harm that is hard to undo.

The practical approach is to identify the outputs that can cause real damage, then gate those outputs with review or extra checks. Low-risk outputs can stay automated. High-risk outputs can require confirmation, escalation, or a second signal. This makes safety real while still letting the product move fast.
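The gating idea can be sketched as a small routing function. The output types, risk tiers, and confidence threshold below are hypothetical; each product would define its own based on which outputs can cause real damage.

```python
from enum import Enum


class Route(Enum):
    AUTO = "auto"                  # ship the output without review
    CONFIRM = "confirm"            # ask for confirmation or a second signal
    HUMAN_REVIEW = "human_review"  # hold until a person reviews it


# Hypothetical risk tiers per output type, for illustration only.
HIGH_RISK_OUTPUTS = {"loan_denial", "account_freeze"}
MEDIUM_RISK_OUTPUTS = {"spending_alert"}


def route_output(output_type: str, confidence: float,
                 min_confidence: float = 0.8) -> Route:
    """Decide how an output is handled based on its risk tier and confidence."""
    if output_type in HIGH_RISK_OUTPUTS:
        return Route.HUMAN_REVIEW
    if output_type in MEDIUM_RISK_OUTPUTS or confidence < min_confidence:
        return Route.CONFIRM
    return Route.AUTO
```

Keeping the tiers in one place makes the review policy easy to audit and easy to change as you learn which outputs actually cause harm.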

Explainability is often a product problem, not a model problem

Many AI risks come from users trusting outputs too much, or not trusting them at all. Both are bad. You do not always need deep technical explainability. You often need simple, honest context. What data was used. What the model is confident about. What it is unsure about. What the user should do next.

This is why good AI teams invest in clear UX around uncertainty. They show limits. They show suggestions, not orders. They provide a way to challenge results. These are not “nice to have.” In regulated or high-trust markets, they can decide whether you get adopted.

If you build AI for health or fintech, you inherit those rules too

AI does not live in a vacuum. If your AI sits inside a medical workflow, you must act like a health product. If your AI shapes lending, pricing, fraud actions, or account access, you must act like fintech. This is where many teams stumble, because they treat AI as a separate category when it is really a layer inside an older, stricter category.

So your regulatory planning must be stacked. You plan for the sector rules first, then add AI controls on top. When you do this, buyers feel safer because you speak their language. Regulators also respond better because you are addressing the actual harm path, not just talking about technology.

Turn safety work into defensible IP

A hidden advantage in AI regulation is that it forces you to solve hard problems in a structured way. Methods to reduce hallucinations in a specific workflow, approaches to privacy-preserving learning, tools for drift detection, and systems that enforce use limits can be real inventions. If you build them early, you can protect them.

Tran.vc helps founders turn these technical moves into IP assets through patent strategy and filings as in-kind support worth up to $50,000. If you want to build your moat while you build your product, apply here: https://www.tran.vc/apply-now-form/