If you are building AI, you are building something that can be copied fast. Code leaks. Models get cloned. Features get rebuilt in weeks. A patent can slow that down, but only if it is written the right way.

But here is the hard truth: most AI patent specs fail long before they reach an examiner’s final decision. Not because the idea is weak. Not because the tech is not real. They fail because the spec does not teach the invention in a way examiners can trust, search, and map to claims.

A strong AI patent spec is not a marketing page. It is not a pitch deck. It is not a research paper either. It is a set of clear instructions that shows what you built, how it works, what makes it new, and how someone would make and use it without guessing.

That is what examiners expect. And once you know what they look for, you can write specs that survive the hard parts: enablement, written description, and clarity. You can also write in a way that makes later claim changes easier, which matters because AI patents almost always go through edits during prosecution.

In this article, I will show you how to write AI patent specs that feel “obvious” to an examiner in the best way: easy to follow, easy to believe, and hard to reject.

If you want to protect your AI properly from day one, you can apply anytime here: https://www.tran.vc/apply-now-form/

Writing Strong Patent Specs for AI: What Examiners Expect

Why AI patent specs feel harder than other patents

AI work is slippery on paper.

You may know exactly why your model works, but the moment you describe it, it can sound like “we used ML to predict X.” That line is too thin. It does not help an examiner understand what you actually built.

Most AI teams also move fast.

They prototype, swap models, change features, and tune data pipelines every week. The patent spec, though, must be stable. It must explain the invention in a way that stays true even if the code changes later.

Another problem is that AI has many “moving parts.”

Data, labels, model type, training steps, deployment, monitoring, and feedback loops can all matter. If your spec skips one key part, the examiner may say you did not describe enough to support your claims.

What examiners are trained to look for

Examiners are not trying to “get” you.

They are trained to apply rules in a repeatable way. Their job is to read your spec, compare it to known prior art, and decide whether your claims are new and supported.

They read with a very practical mindset.

They ask: “What is the invention?” and “Where is it described?” and “Would a skilled person be able to make and use it from this spec?” If your spec does not answer those questions clearly, the examiner cannot give you the benefit of the doubt.

They also have a time limit.

That matters a lot. If your spec is vague, they will not spend hours guessing what you meant. They will look for direct statements, clear flow, and concrete examples they can point to in an office action.

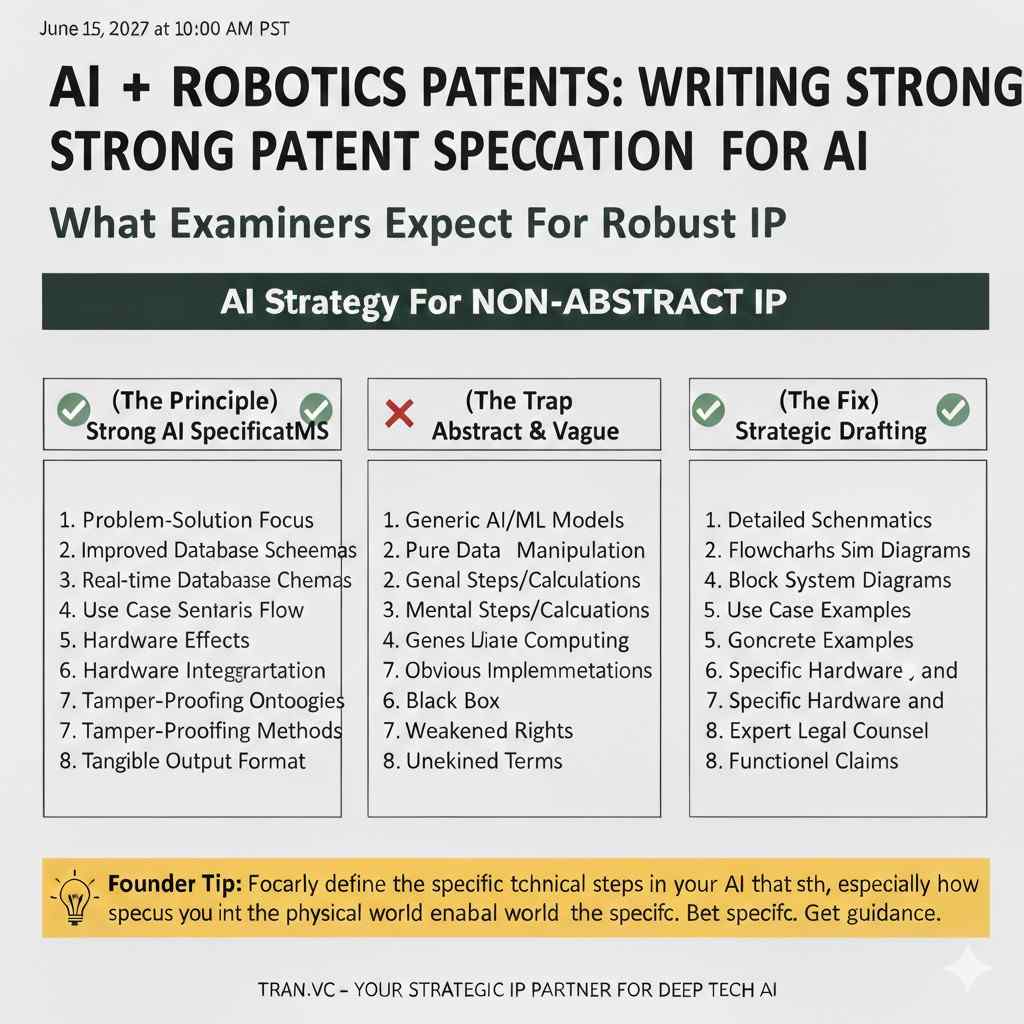

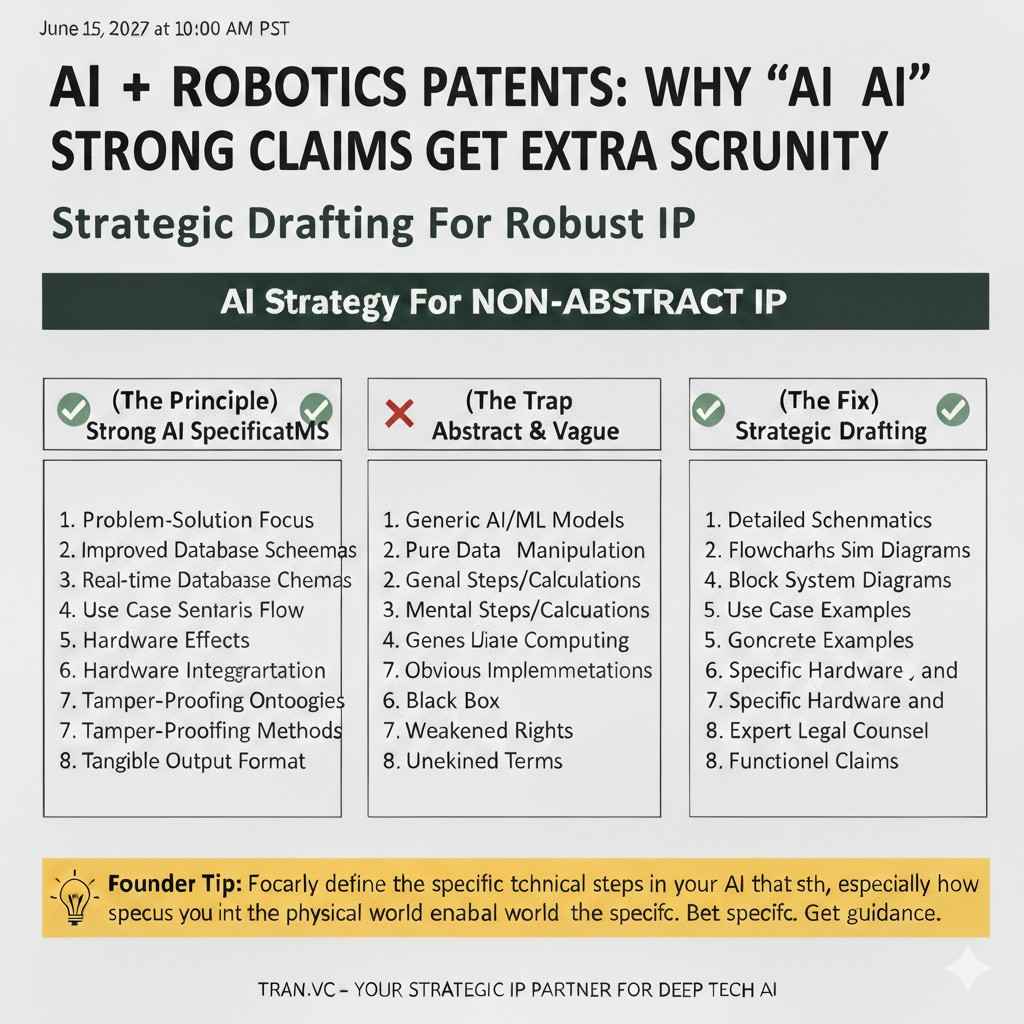

Why “AI” claims get extra scrutiny

AI patents often collide with two common examiner concerns.

The first is that the claims sound like an abstract idea, such as “analyzing data and making a decision.” The second is that the claims sound too broad, like you are trying to own all ways of doing something with a model.

A strong spec helps on both fronts.

It anchors the invention in a real system with real steps, real inputs, and real outputs. It also explains what is special about your approach, in a way that is not just “we used a neural network.”

When you describe the technical details with care, your claims can be written in a way that feels tied to a working invention, not a wish.

How examiners actually read your AI patent spec

The first pass is a scan, not a deep read

Most examiners start with a fast scan.

They look at the abstract, the field, the summary, and the main figures. They want a quick mental map of what the invention is and how it works.

If that first scan is confusing, you lose momentum.

The examiner will not feel confident that the spec is “tight.” They may assume your claims are also loose. That leads to broader prior art searches and tougher objections.

Your goal is to make the first scan easy.

You want the invention to stand out in simple words: what problem you solve, what you built, and why it works better than known approaches.

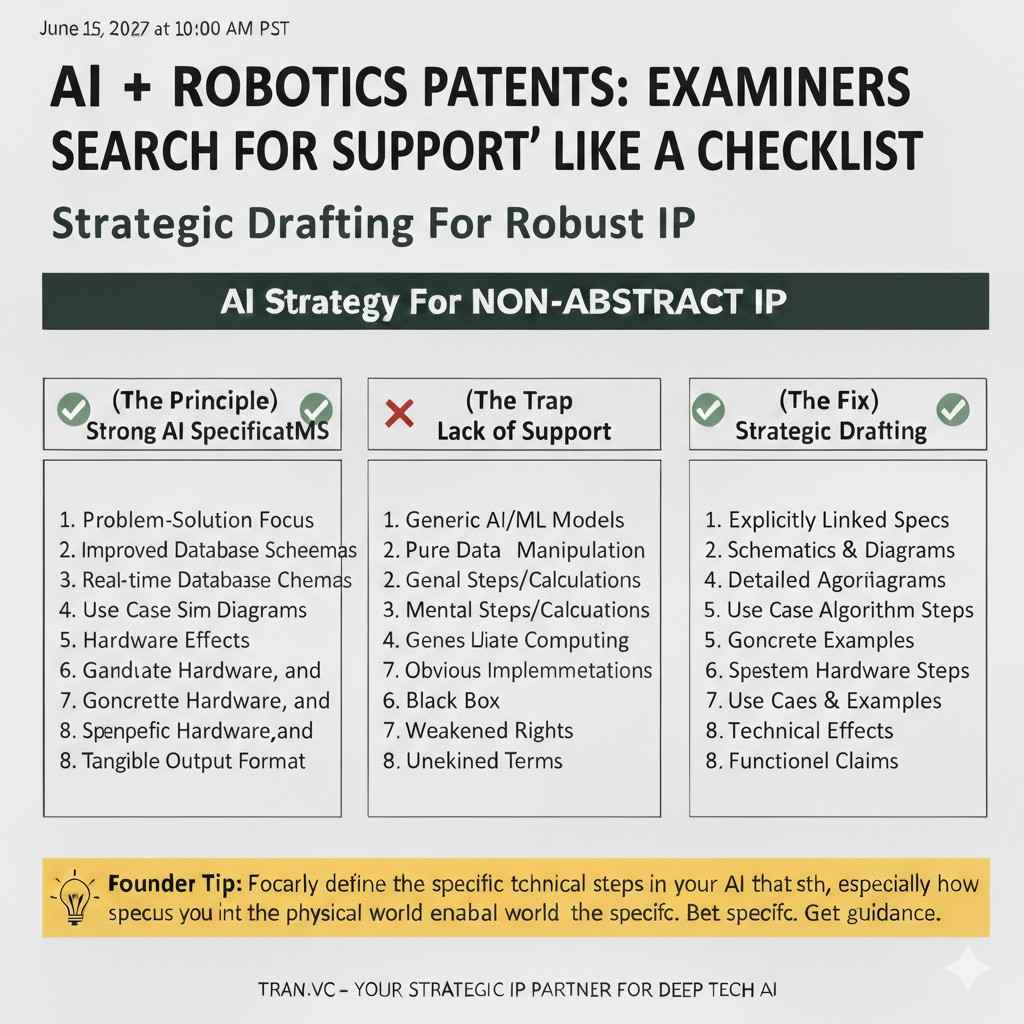

They search for “support” like a checklist

After the scan, they start matching.

They match claim terms to spec terms. They look for steps in your claims and try to find those steps clearly described in the spec.

If a claim says “training using a loss function that weights rare events,” the examiner will look for that exact idea.

If your spec only says “we train the model,” you will likely get written description or enablement pushback when you try to claim that detail later.

This is why good AI specs do not hide the ball.

They say key things plainly and repeat key concepts in different sections using consistent language, so the examiner can point to support quickly.
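
To make the “loss function that weights rare events” example concrete, here is a minimal sketch of what such a loss could look like. It is an illustration only: the function name `weighted_bce` and the `rare_weight` parameter are assumptions made for this example, not terms from any particular spec or library. The point is that a spec can state in plain words what is weighted and by how much.

```python
import math

def weighted_bce(y_true, y_prob, rare_weight=5.0, eps=1e-12):
    """Binary cross-entropy where the rare (positive) class counts more.

    y_true: list of 0/1 labels, where 1 marks the rare event.
    y_prob: list of predicted probabilities for the rare event.
    rare_weight: hypothetical multiplier applied to errors on rare events.
    """
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        if y == 1:
            total += -rare_weight * math.log(p)   # missed rare events cost more
        else:
            total += -math.log(1.0 - p)           # ordinary penalty for false alarms
    return total / len(y_true)

# Missing a rare event is penalized more heavily than an equally wrong false alarm.
missed_rare = weighted_bce([1], [0.1])
false_alarm = weighted_bce([0], [0.9])
```

A sentence such as “errors on rare-event samples are multiplied by a weight greater than one before averaging” gives the examiner something specific to find when a later claim recites that detail.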

They compare your spec to prior art, not your pitch deck

Examiners do not care if your startup is “unique.”

They care if your claims are new over what they can find in patents, papers, and public examples.

Many founders write specs like they write investor updates.

They describe outcomes and business value. That tone is not helpful in prosecution. The examiner needs to see the technical “how,” not the market “why.”

A strong spec shows technical choices.

It explains why you chose certain features, how you handle edge cases, how you avoid failure modes, and how your pipeline differs from standard setups.

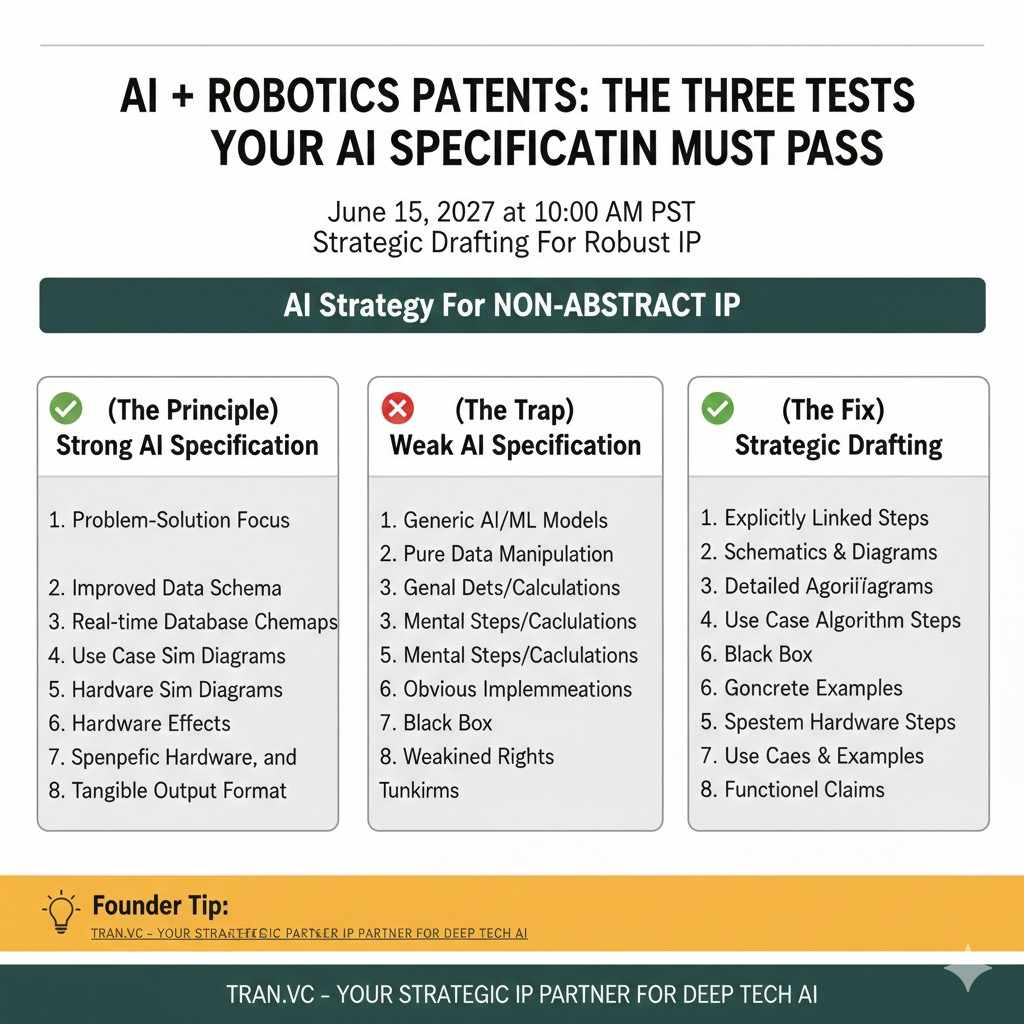

The three tests your AI spec must pass

Enablement in plain words

Enablement means the spec must teach.

Not in a classroom way, but in a “skilled person can build it” way. Your spec must describe enough details so the invention is not magic.

For AI, enablement often fails when the spec avoids specifics.

If the spec never explains what inputs are used, what outputs are produced, how the model is trained, and what is done at inference time, an examiner can argue the spec is too thin.

You do not need to give your source code.

But you must explain the method in a practical way. Think of it like describing a recipe that a skilled cook could follow without guessing the key steps.

Written description in plain words

Written description means you must show possession.

It is not enough to say you want to claim something later. You must show that you had it in mind when you filed and that you described it clearly in the spec.

AI patents get hit here when claims evolve.

During prosecution, you often narrow or reshape claims. If your spec does not contain the details you later want to claim, you may be stuck.

That is why early drafting matters.

A solid spec describes the invention from multiple angles, so you can later choose the right claim shape without running out of support.

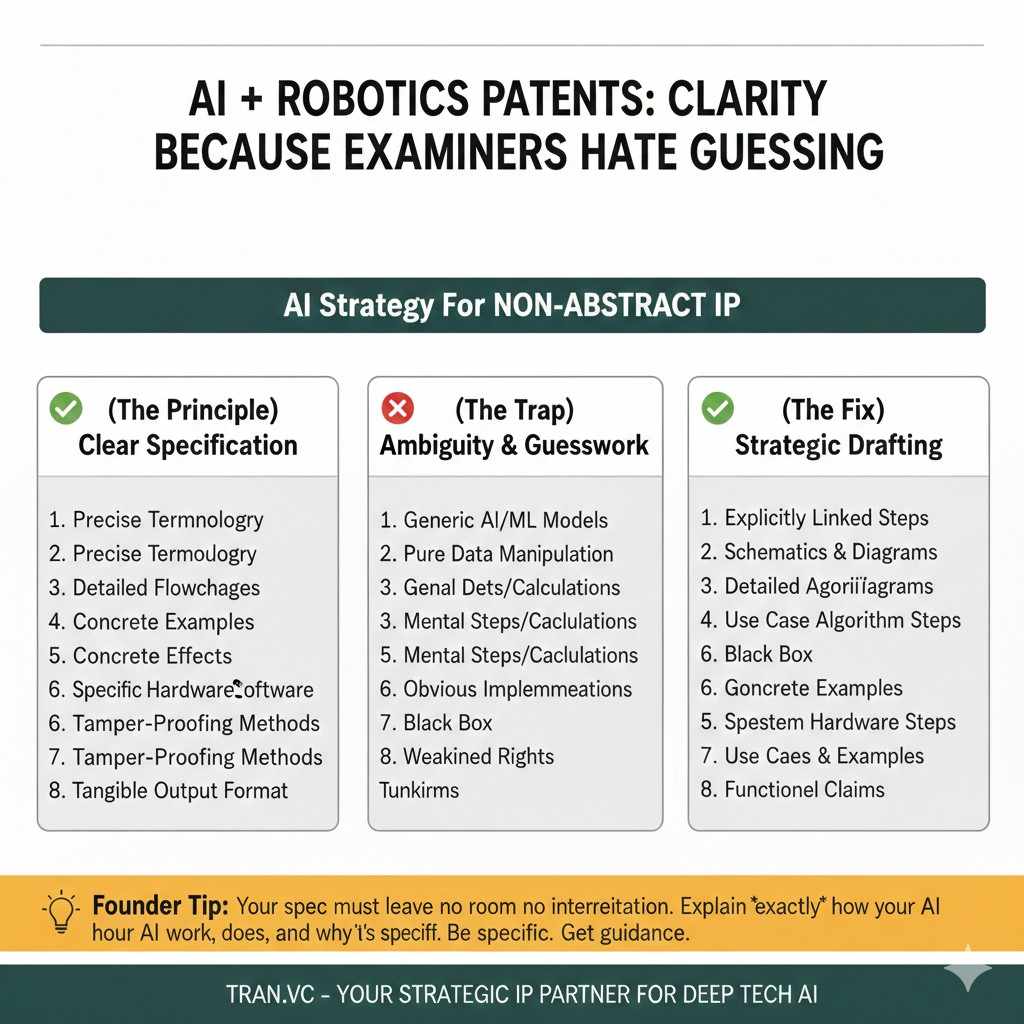

Clarity, because examiners hate guessing

Clarity is about making terms understandable.

If you use words like “optimize,” “improve,” “intelligent,” or “advanced,” you must explain what they mean in your system.

AI is full of overloaded words.

“Confidence score” could mean probability, margin, entropy, calibration output, or something else. If you do not define it, the examiner may interpret it in the least helpful way.

A clear spec defines terms by use.

It explains what the system computes, what the values represent, and how they are used to trigger actions.
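
To see why an undefined “confidence score” is ambiguous, consider three common ways to compute one from the same class probabilities. This is a small sketch with made-up function names and example numbers, not a definition taken from any specific system.

```python
import math

def max_probability(probs):
    """Confidence as the highest class probability."""
    return max(probs)

def margin(probs):
    """Confidence as the gap between the top two class probabilities."""
    top = sorted(probs, reverse=True)
    return top[0] - top[1]

def entropy(probs):
    """Uncertainty as Shannon entropy (lower means more confident)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# One hypothetical prediction over three classes, scored three different ways.
probs = [0.6, 0.3, 0.1]
scores = {
    "max_probability": max_probability(probs),
    "margin": margin(probs),
    "entropy": entropy(probs),
}
```

The three values differ in scale and even in direction, since higher entropy means lower confidence. A spec that says which computation is meant, and which threshold triggers which action, removes that guesswork.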

How to describe an AI invention so it feels real

Start with the problem in technical terms

The problem section should not be dramatic.

It should be technical and specific. It should explain what fails in current systems and why that failure matters.

For example, instead of saying “current systems are inaccurate,” you can say the system fails in rare events, drifts after deployment, or cannot explain decisions under a given latency limit.

This matters because examiners need context.

When they see why prior art does not solve the same problem in the same way, your invention becomes easier to separate from what already exists.

A clean problem statement also helps your own team.

It forces you to name what you truly solved, not what you hope to solve later.

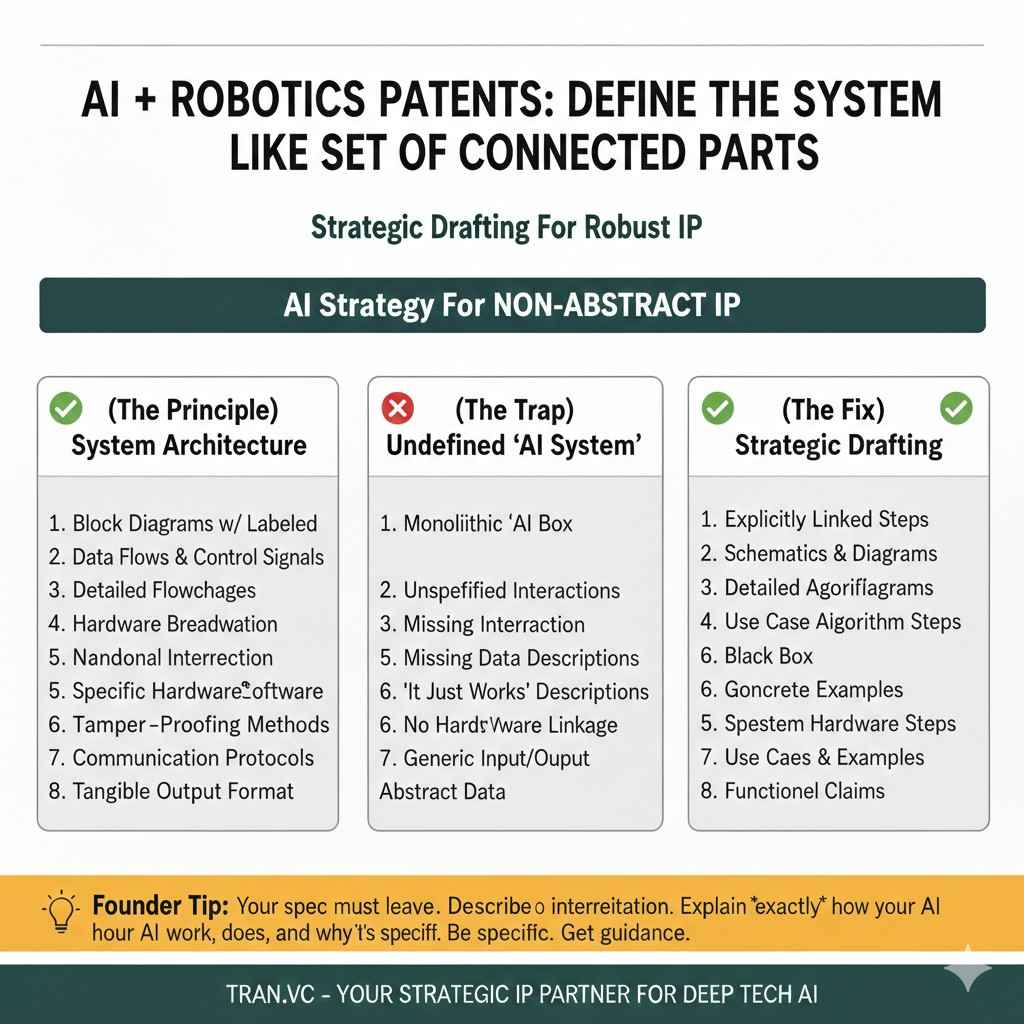

Define the system like a set of connected parts

Many AI specs are too “model-first.”

They jump straight into “we use a transformer,” but skip the system around it.

Examiners like systems they can draw.

They like modules, data flows, and clear inputs and outputs. That makes it easier for them to compare your invention to prior art.

So describe the pipeline.

Explain what enters the system, how it is processed, what the model does, what post-processing occurs, and what action is taken at the end.

If your product has a feedback loop, describe it.

Feedback loops are often a source of novelty. They also raise questions about training updates, safety checks, and drift control. Those details can become valuable claim hooks later.

Explain what makes your approach different without bragging

You do not need big words.

You need clear differences.

Maybe you use a special feature set.

Maybe you use a training schedule that handles imbalance. Maybe you combine sensor streams in a new way. Maybe you run two models and resolve disagreement using a rule that improves safety.

Say those differences plainly.

Then explain the effect: lower compute cost, higher stability, better rare-case recall, faster response time, or fewer false alarms.

The examiner does not need to be impressed.

They need to be convinced the invention is concrete and supported.
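
As an illustration of the “two models and a disagreement rule” idea mentioned above, here is one possible sketch. The rule, the names `resolve` and `max_gap`, and the default values are all assumptions chosen for this example; a real system may resolve disagreement quite differently.

```python
def resolve(primary_score, secondary_score, threshold=0.5, max_gap=0.2):
    """Combine two model scores with a simple safety rule.

    If the two scores differ by more than `max_gap`, the system defers
    (for example, to a human or a safe default) instead of acting.
    Otherwise it acts only when the average score reaches `threshold`.
    """
    if abs(primary_score - secondary_score) > max_gap:
        return "defer"
    average = (primary_score + secondary_score) / 2
    return "act" if average >= threshold else "no_action"

agree_high = resolve(0.9, 0.8)   # both models agree the score is high
disagree = resolve(0.9, 0.3)     # the models disagree, so the system defers
agree_low = resolve(0.2, 0.1)    # both models agree the score is low
```

Written this way, the difference is no longer “we use two models.” It is a specific rule with named inputs, a stated condition, and a defined outcome, which is the kind of detail a claim can later lean on.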

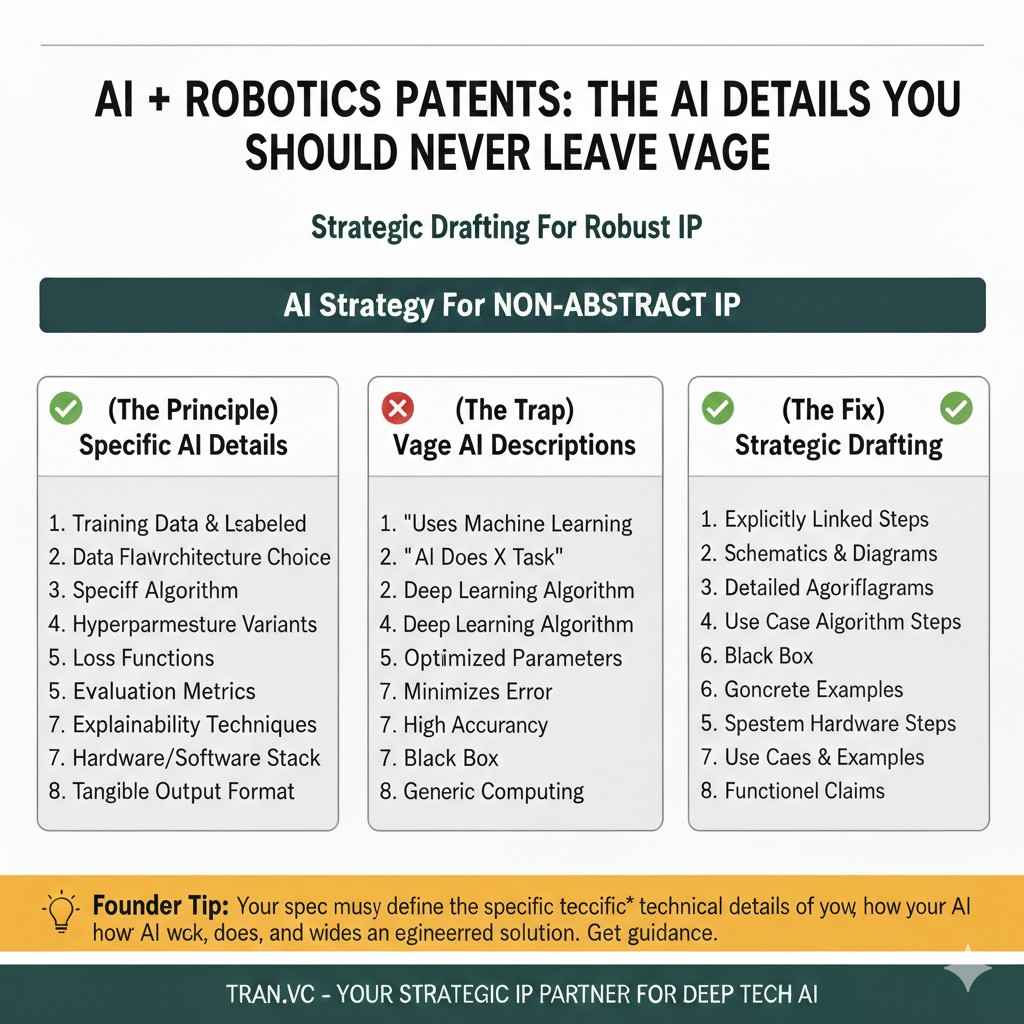

The AI details you should never leave vague

Inputs and outputs must be more than labels

AI systems live and die by inputs.

If you only say “input data,” you miss your chance to show what is special.

Describe inputs in categories.

Raw sensor values, text tokens, image frames, time series windows, user events, logs, graphs, embeddings, and metadata can all be relevant.

Then describe outputs clearly.

A class label is not enough if your system also outputs a score, a ranking, an action, or a structured result.

When you describe both, you create anchors.

Anchors help in claims. They also help examiners map your invention to a real use case.

Training details should show your method, not your secrets

You do not need to expose private weights.

But you do need to explain the training process in a way that is believable.

If you use supervised learning, say what the labels represent.

If you use weak labels, say how they are built. If you use synthetic data, say how it is generated and validated.

If you use a specific loss idea, describe it.

Not in math-heavy form, but in plain language that shows what is weighted, what is penalized, and what is encouraged.

If you use constraints, say what they are.

Latency limits, memory limits, safety constraints, and fairness controls can all show technical structure beyond the model itself.

Inference and deployment details often carry the novelty

Many AI inventions are not new in training.

They are new in how inference is done in real time, how outputs are checked, or how decisions trigger actions.

If you do post-processing, describe it.

Thresholding, calibration, filtering, smoothing, rule layers, or ensemble voting should be explained as steps in a flow.
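
Describing post-processing “as steps in a flow” can be as simple as the following sketch, which smooths raw model scores with a moving average and then applies a threshold. The window size, the limit, and the sample scores are hypothetical values picked for illustration.

```python
from collections import deque

def smooth(scores, window=3):
    """Step 1: moving average over the most recent `window` scores."""
    recent = deque(maxlen=window)
    smoothed = []
    for score in scores:
        recent.append(score)
        smoothed.append(sum(recent) / len(recent))
    return smoothed

def threshold(scores, limit=0.5):
    """Step 2: flag every score at or above `limit`."""
    return [score >= limit for score in scores]

# Hypothetical raw scores: one isolated spike, then a sustained rise.
raw = [0.1, 0.2, 0.9, 0.1, 0.8, 0.9, 0.9]
flags = threshold(smooth(raw))
```

With these numbers, thresholding the raw scores would flag the isolated spike, while the smoothed flow flags only the sustained rise. That difference in behavior is the kind of technical effect a spec can describe step by step.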

If you run on edge devices, describe that setup.

Edge constraints are often a strong argument for technical improvement. They also support stronger claim language around compute limits and response time.

If you monitor drift, describe the drift signals.

Explain what is tracked, how it is measured, and what action happens when drift crosses a limit.
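
A minimal sketch of those three elements (what is tracked, how it is measured, and what happens at the limit) might look like this. It assumes a very simple drift signal, the shift in a feature's mean measured in baseline standard deviations; the function names, the limit of 2.0, and the action label are illustrative assumptions, and production systems often use other statistics.

```python
from statistics import mean, pstdev

def drift_signal(baseline, recent):
    """Shift of the recent mean from the baseline mean, in baseline std units."""
    spread = pstdev(baseline)
    if spread == 0:
        # Degenerate baseline: any change at all counts as unbounded drift.
        return 0.0 if mean(recent) == mean(baseline) else float("inf")
    return abs(mean(recent) - mean(baseline)) / spread

def check_drift(baseline, recent, limit=2.0):
    """Return the measured signal and the action taken when it crosses `limit`."""
    signal = drift_signal(baseline, recent)
    action = "flag_for_retraining" if signal > limit else "continue"
    return signal, action

baseline = [10, 12, 11, 9, 8]                        # feature values seen at training time
stable = check_drift(baseline, [10, 11, 9])          # recent values look like the baseline
shifted = check_drift(baseline, [15, 16, 14])        # recent values have moved upward
```

The spec does not need this exact statistic. It needs the same three pieces stated plainly: the tracked quantity, the measurement, and the triggered action.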

This is where many AI specs become strong.

They stop being “a model that predicts” and become “a system that operates under constraints with checks and controls.”

Writing style choices that make examiners trust you

Use consistent words for the same thing

Examiners do not enjoy puzzles.

If you call something “confidence score” in one place and “certainty value” in another, you invite confusion.

Pick one term and stick with it.

Then define it once in plain words, and reuse it across the spec and figures.

Consistency also helps you later.

When you draft claims, you will have clean language to pull from, and you reduce the risk that a term gets interpreted in a way you did not intend.

Avoid empty phrases that trigger skepticism

Some phrases sound like fluff to examiners.

“AI-powered,” “smart,” “optimized,” “enhanced,” and “state of the art” do not explain anything.

Replace them with concrete actions.

If the system “optimizes,” say what it changes. If it “improves accuracy,” say in what setting, like rare events, noisy data, or low light.

When you write like an engineer, the examiner relaxes.

They may still reject for prior art, but they are less likely to attack your spec for being unclear.

Add examples that feel like real usage

A strong spec includes examples that read like reality.

Not stories, but practical scenarios.

You can describe a few sample inputs, model outputs, and actions.

You can explain how the system behaves when the input is missing, corrupted, or out of range.

These examples do two jobs.

They help enablement, and they help you later when you want to argue technical effect during prosecution.
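
One way to write such an example is to spell out how the system classifies a single input before the model ever sees it. The sketch below assumes a hypothetical temperature reading with a valid range of -40 to 125; the function name, the range, and the fallback actions are all invented for illustration.

```python
import math

def classify_input(value, low=-40.0, high=125.0):
    """Label a single sensor reading before it reaches the model."""
    if value is None:
        return "missing"
    if not isinstance(value, (int, float)) or math.isnan(value):
        return "corrupted"
    if not low <= value <= high:
        return "out_of_range"
    return "ok"

# Hypothetical actions the system takes for each label.
ACTIONS = {
    "ok": "run_model",
    "missing": "reuse_last_valid_reading",
    "corrupted": "discard_and_log",
    "out_of_range": "clip_and_flag",
}

def handle(value):
    """Map a reading to the action the system takes."""
    return ACTIONS[classify_input(value)]
```

A spec can walk through the same cases in prose: a reading of 21.5 is passed to the model, a missing reading reuses the last valid value, and so on. Each case is a small, concrete behavior that can support a dependent claim later.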

Structuring a Strong AI Patent Spec from Start to Finish

Why structure matters more than most founders think

A patent spec is not just about what you say.

It is also about where you say it.

Examiners expect a certain flow.

If your document jumps around, hides key ideas deep in paragraphs, or mixes business talk with technical steps, it becomes harder to read and harder to trust.

Good structure does something powerful.

It makes your invention feel organized, complete, and deliberate. That tone alone can change how an examiner approaches your claims.

For AI inventions, structure is even more important.

There are many moving parts. If you present them in a clean order, the system feels real and grounded, not abstract.

The field and background should set the stage, not oversell

The field section should be simple.

State what technical area the invention relates to, such as machine learning for sensor processing, AI for robotics control, or model-based anomaly detection in network systems.

Do not stretch the field too wide.

If you say your invention relates to “all AI systems,” you weaken your own focus. Keep it tied to the technical space you truly operate in.

The background section must be careful.

You can describe known systems and their limits, but avoid attacking them with emotional language. Stay factual and technical.

Explain what current systems do and where they fall short.

Maybe they rely on static thresholds. Maybe they fail under rare inputs. Maybe they require too much compute for edge devices.

This section prepares the examiner.

It tells them what gap exists before your invention steps in.

The summary should mirror your future claims

The summary is not a teaser.

It is a compact technical outline of the invention.

Many founders write the summary like marketing copy.

That is a mistake. The summary should describe the system and method in clear steps, close to how your independent claims will look.

If your main claim later recites steps like receiving input data, generating feature vectors, applying a trained model, and performing an action based on a threshold, your summary should already reflect that flow.

This alignment helps in two ways.

First, it shows written description support. Second, it helps the examiner quickly see what your invention covers.
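
The four steps named above (receiving input data, generating feature vectors, applying a trained model, and performing an action based on a threshold) can be sketched as a single flow. Everything specific here is an assumption for illustration: the two features, the fixed weights standing in for a trained model, and the action names.

```python
import math

def generate_features(window):
    """Step 2: turn a window of raw values into a feature vector."""
    average = sum(window) / len(window)
    value_range = max(window) - min(window)
    return [average, value_range]

def apply_model(features, weights=(0.8, 1.5), bias=-3.0):
    """Step 3: apply a (stand-in) trained model and return a score in (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

def run_method(window, threshold=0.5):
    """Steps 1 to 4: receive input, featurize, score, and act on a threshold."""
    features = generate_features(window)   # step 1 is receiving `window`
    score = apply_model(features)
    return "raise_alert" if score >= threshold else "no_action"

steady = run_method([1.0, 1.0, 1.0])    # flat signal
volatile = run_method([1.0, 5.0, 3.0])  # higher level and wider range
```

If the summary reads in this same order, each claim step has an obvious home in the spec, and the examiner can check support without hunting for it.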

The figures should tell a clean story

AI specs benefit from clear diagrams.

A block diagram of the system. A flowchart of the method. A training pipeline. An inference flow.

Each figure should match text in the spec.

When you refer to a component, describe what it does in practical terms. Avoid naming boxes without explaining their function.

For example, if you show a “feature extraction module,” explain what features it extracts and why.

If you show a “model training module,” describe what data it receives, how training is performed, and what is output.

Flowcharts are especially helpful.

They allow you to describe the invention as steps, which later map cleanly to method claims.

When figures and text align, the examiner can follow your invention without effort. That lowers friction during review.

Writing Fallback Positions into Your AI Spec

Why fallback detail is critical in AI patents

Very few AI patent applications are allowed exactly as first filed.

Claims are usually narrowed after prior art is found.

If your spec is thin, you may not have room to narrow.

You might want to add a specific training detail, a data type, or a processing step to a claim, but if it is not described in the spec as filed, you cannot safely add it later.

This is where strong drafting shines.

A well-written AI spec includes variations and options from the start, even if your product currently uses only one path.

This is not about padding.

It is about protecting your future flexibility.