Reinforcement learning can feel like magic. An agent takes actions, gets rewards, and slowly learns how to win—sometimes in ways even the builders did not expect. If you are building robots, AI systems, or real-world control software, reinforcement learning can become the core of your product. And when it becomes core, one question shows up fast:

Can you patent it?

The honest answer is: yes, sometimes. But not the way most teams first assume.

A lot of founders think they can patent “using reinforcement learning to do X.” That is usually too broad. Others assume they cannot patent anything at all because “software is not patentable.” That is also wrong. The middle ground is where the value is. The protectable parts are often not the high-level idea of reinforcement learning. The protectable parts are the specific way you make it work in the real world: the system design, the training pipeline, the safety rules, the reward shaping method tied to real signals, the on-device learning limits, the way you cut compute, the way you handle edge cases, the way the learned policy plugs into hardware, and the way you keep it stable under real conditions.

This guide is going to show you how to separate what you can protect from what you cannot, in plain language, without fluff. You will learn how patent examiners tend to look at reinforcement learning, what kinds of claims usually get blocked, and how strong patents are actually written for RL-based products. You will also learn what to do in the first weeks of building, so you do not lose rights by accident, and so you do not waste time writing patent drafts that will not survive.

If you are building something real with RL—robot arms, drones, warehouse bots, medical devices, industrial optimization, adaptive scheduling, personalization, or smart control—this matters. A clear patent plan can help you raise with more leverage and block copycats later.

And if you want help turning your RL work into defensible IP without burning months, Tran.vc can support you with up to $50,000 in in-kind IP and patent services. You can apply any time here: https://www.tran.vc/apply-now-form/

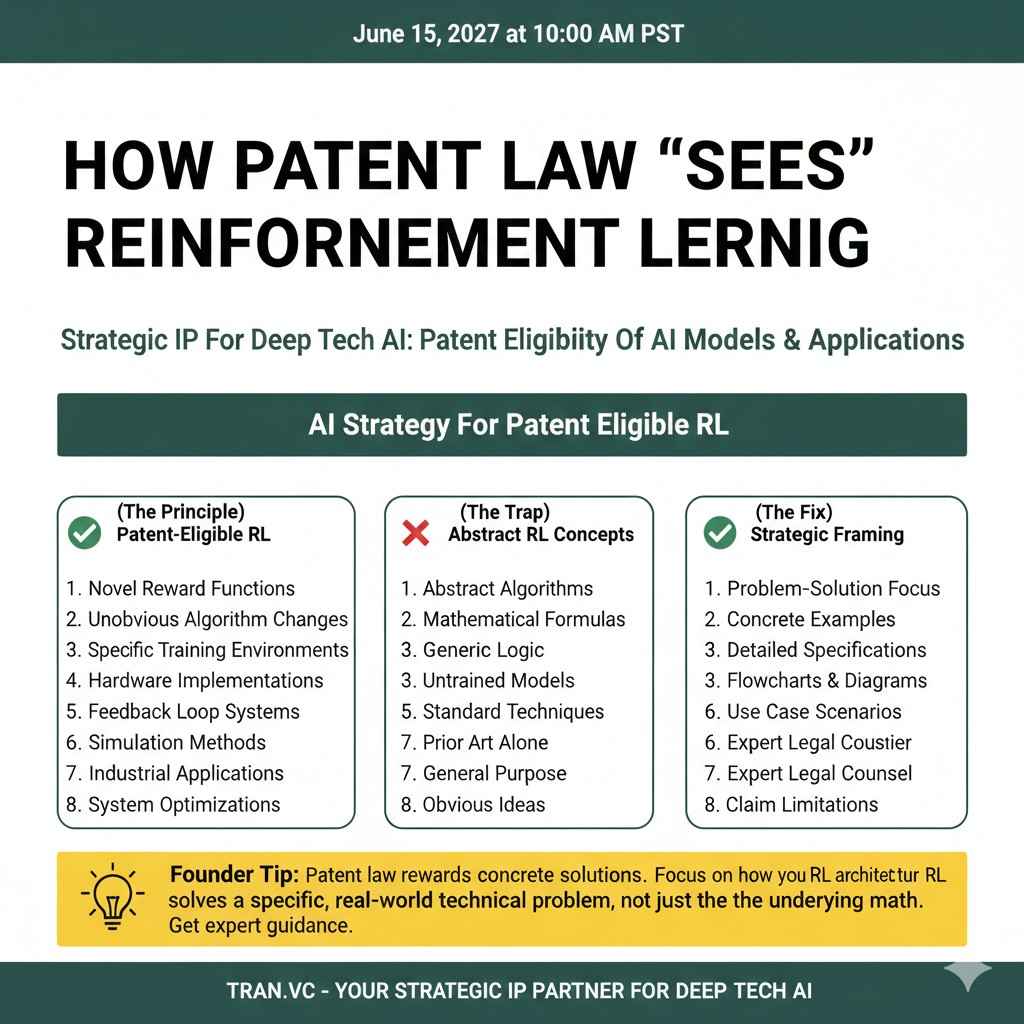

How patent law “sees” reinforcement learning

Reinforcement learning is not the invention by itself

When you say “we use reinforcement learning,” you are naming a tool, not the invention. It is like saying “we use a database” or “we use a neural network.” Patent examiners treat reinforcement learning the same way. They assume the general concept is already known.

So if a patent draft mainly says, “An agent learns a policy to maximize reward,” it will not go far. That is a textbook description. It does not matter if your product is new. The wording is still too close to the known idea.

The protectable part is usually the part you had to fight for. It is the part you built because the basic RL method did not work out of the box. In real products, RL breaks in the messy parts. That is where your invention often lives.

Why RL patents get rejected so often

Many RL patent drafts fail for the same few reasons. They read like a research paper summary. Or they describe a business goal and then sprinkle RL terms on top. Or they claim results without explaining the technical steps that produce those results.

A patent examiner is trained to look for a clear technical solution to a technical problem. If your draft does not explain the problem in a concrete way, and the steps you take to solve it, the examiner has an easy path to say no.

This is why strong RL patents do not lead with “we use RL.” They lead with the system problem and the system fix.

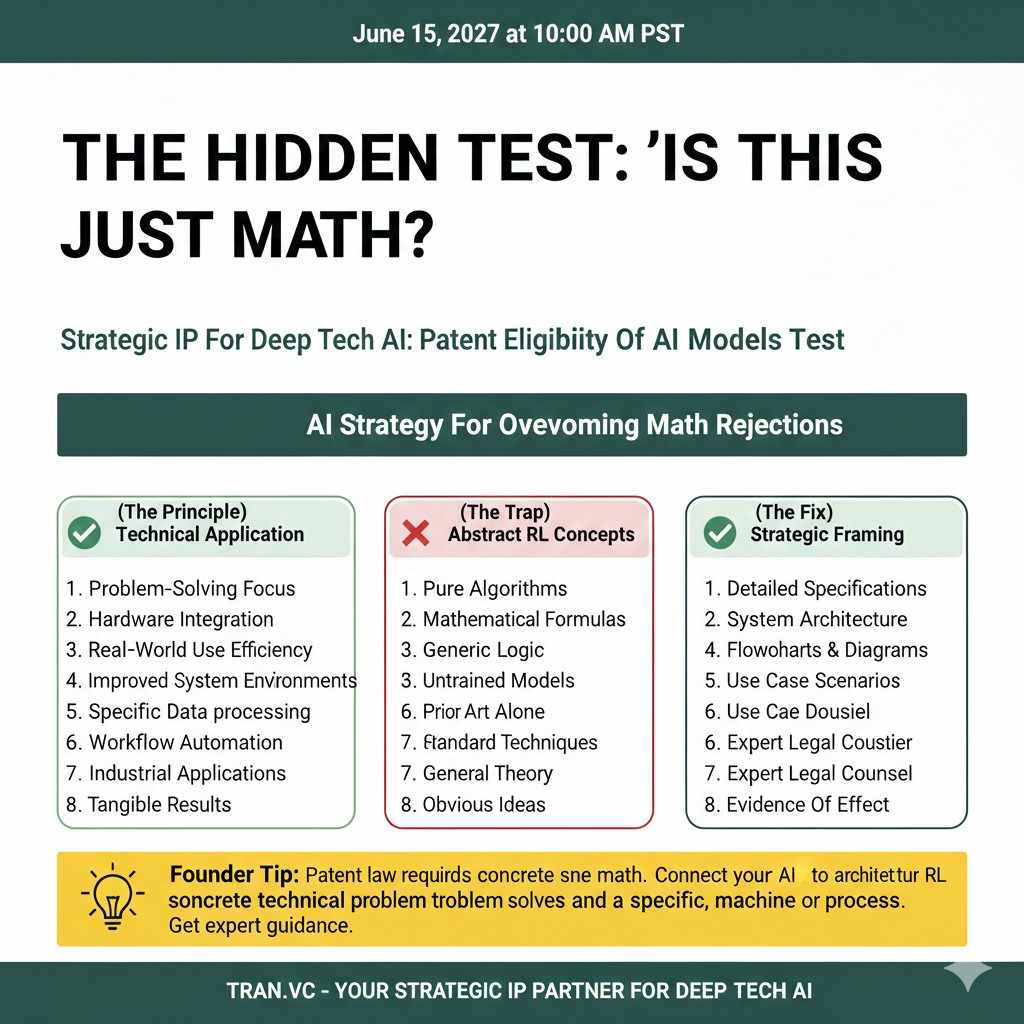

The hidden test: “Is this just math?”

Reinforcement learning is built from math. Value functions, gradients, reward signals, probability. Examiners will often ask, even if they do not say it directly, “Is this just math on a computer?”

If your claims sound like pure math steps with no tie to a real system improvement, you are at risk. The fix is not to avoid math. The fix is to show how the math is used inside a specific machine or process, in a way that improves how the machine or process works.

For robotics teams, this is often easier because you can point to physical effects. For pure software teams, it is still possible, but you must be more exact about the technical benefit.

What is protectable in reinforcement learning

Protectable usually means “specific and operational”

In RL, a lot is known. But the way you apply RL inside a real product can be unique. The protectable pieces tend to be the operational details that a competitor would need if they tried to copy your performance.

Think about what a smart competitor would ask if they wanted to replicate your results. They would not ask, “Do you use RL?” They would ask, “How do you define reward without cheating?” They would ask, “How do you keep it safe while exploring?” They would ask, “How do you make it learn with little data?” Those answers often contain the invention.

Protectable means you can describe a concrete system that someone could build. It also means the system is not just a normal, expected combination of known parts.

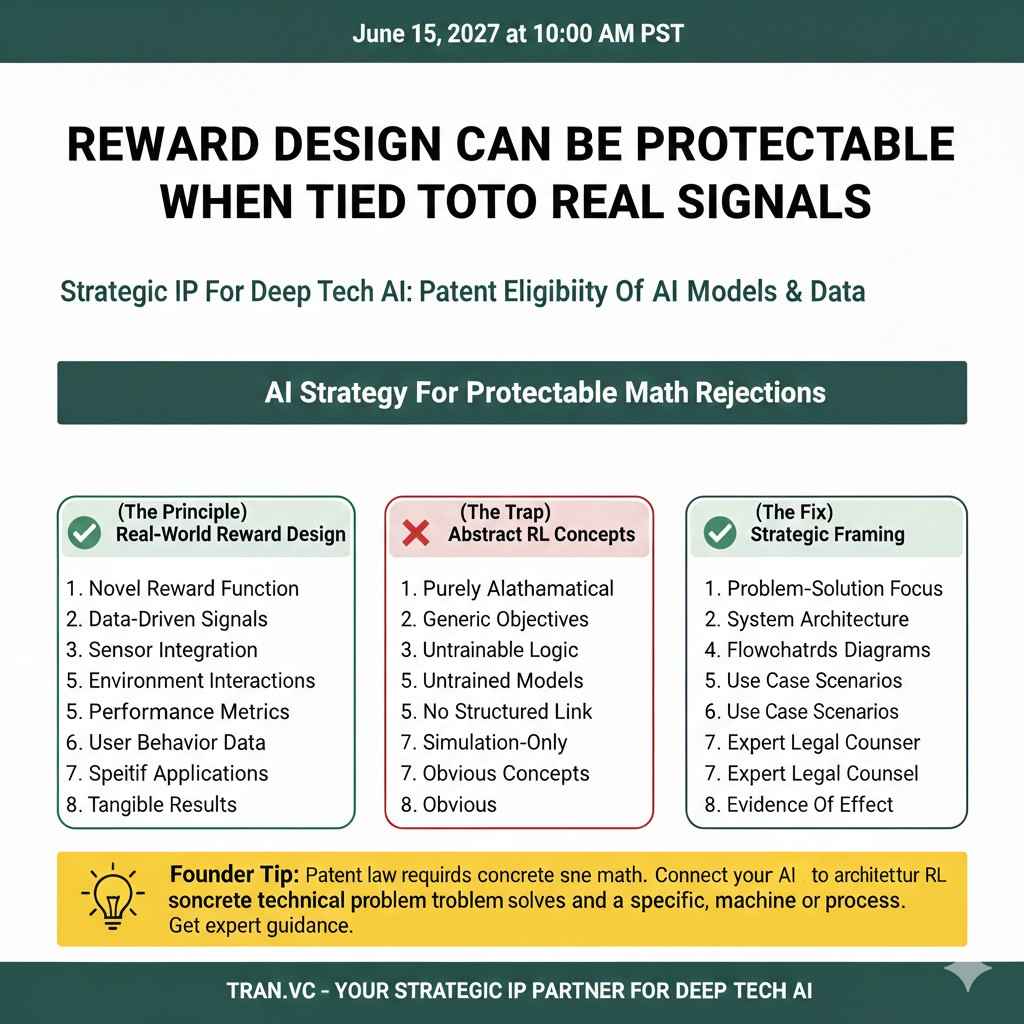

Reward design can be protectable when it is tied to real signals

“Reward shaping” is a common RL phrase. On its own, it sounds generic. But reward shaping becomes protectable when it is linked to a real measurement and a specific failure mode.

For example, if you discovered that a robot learns unsafe shortcuts unless you compute reward using a certain sensor fusion method, and you define the reward using that fused signal in a particular way, that can be protectable.

The key is that you are not claiming “a reward function.” You are claiming a reward computation pipeline that uses real system inputs, with a clear reason it works better.

Safety layers and constraints are often strong patent ground

In real-world RL, safety is not optional. You cannot let a robot “explore” by crashing into people or breaking expensive gear. Many teams build a safety wrapper that limits what actions are allowed, or that predicts risk before actions are applied.

If your safety logic is more than a generic “do not exceed limits,” you may have a strong patent angle. For example, if you have a method that checks predicted state transitions, or a fallback controller that takes over in a specific way, or a constraint solver that is integrated into policy selection, those are concrete system pieces.

This is also where patents can map cleanly to value. Safety is expensive to build and hard to copy quickly.

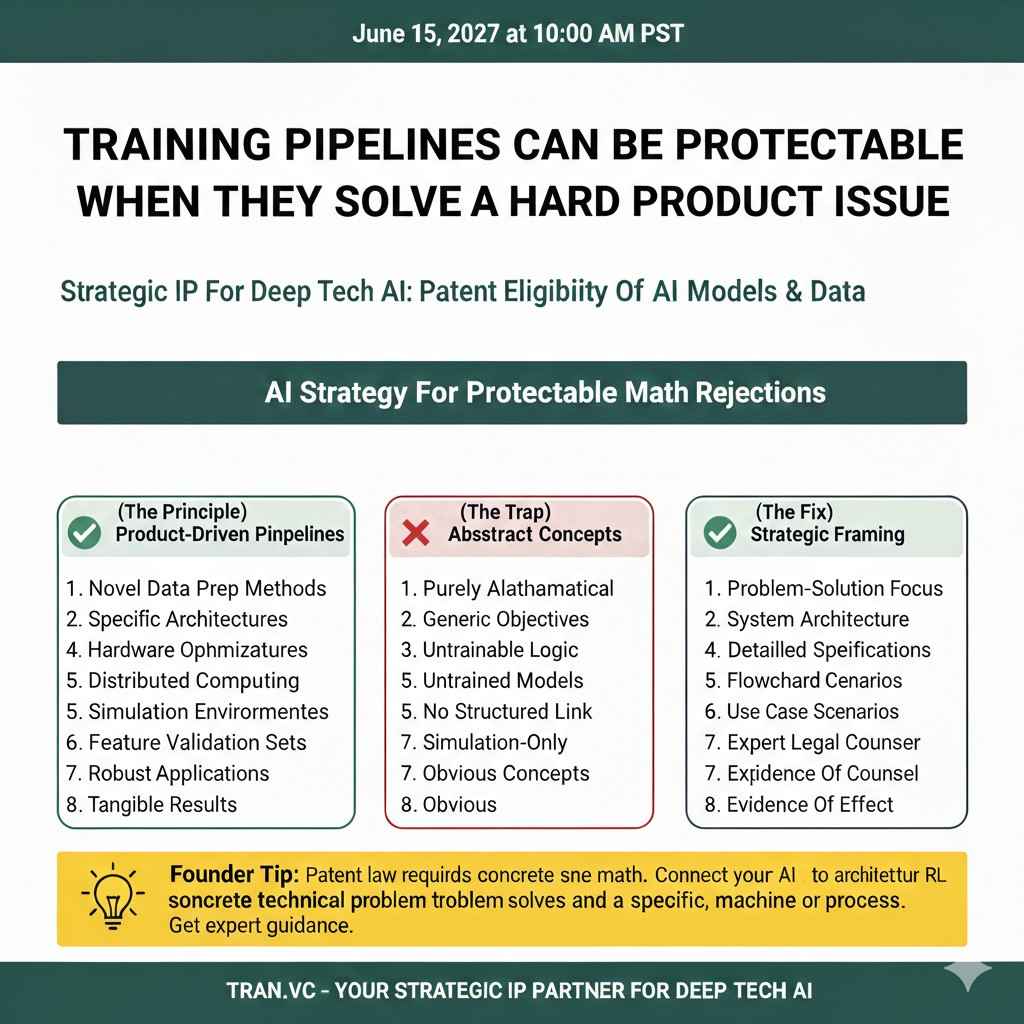

Training pipelines can be protectable when they solve a hard product issue

A lot of RL value comes from training, not inference. If you built a training setup that makes RL workable in your domain, that can be the invention.

Common examples include how you build a simulator that matches real physics closely enough, how you randomize environments to avoid overfit, how you mix real data and sim data, and how you decide when to stop training to avoid policy collapse.

To be protectable, the pipeline needs to be described as a set of steps that produce a technical benefit. “We train in simulation” is not enough. “We train in simulation using these domain parameters, in this schedule, with this drift detection, and we update this way” is much closer.

System integration claims can be stronger than algorithm claims

Many RL patents become stronger when the core claims describe a system, not just an algorithm. The system can include sensors, controllers, compute nodes, network links, memory constraints, and timing requirements.

If your RL policy must run under strict latency, or must coordinate across devices, or must handle missing sensor data in a special way, that is part of the invention. The examiner can see a real technical system improvement, not just a math model.

This also helps you later. System claims can be harder to design around, because real products must integrate too.

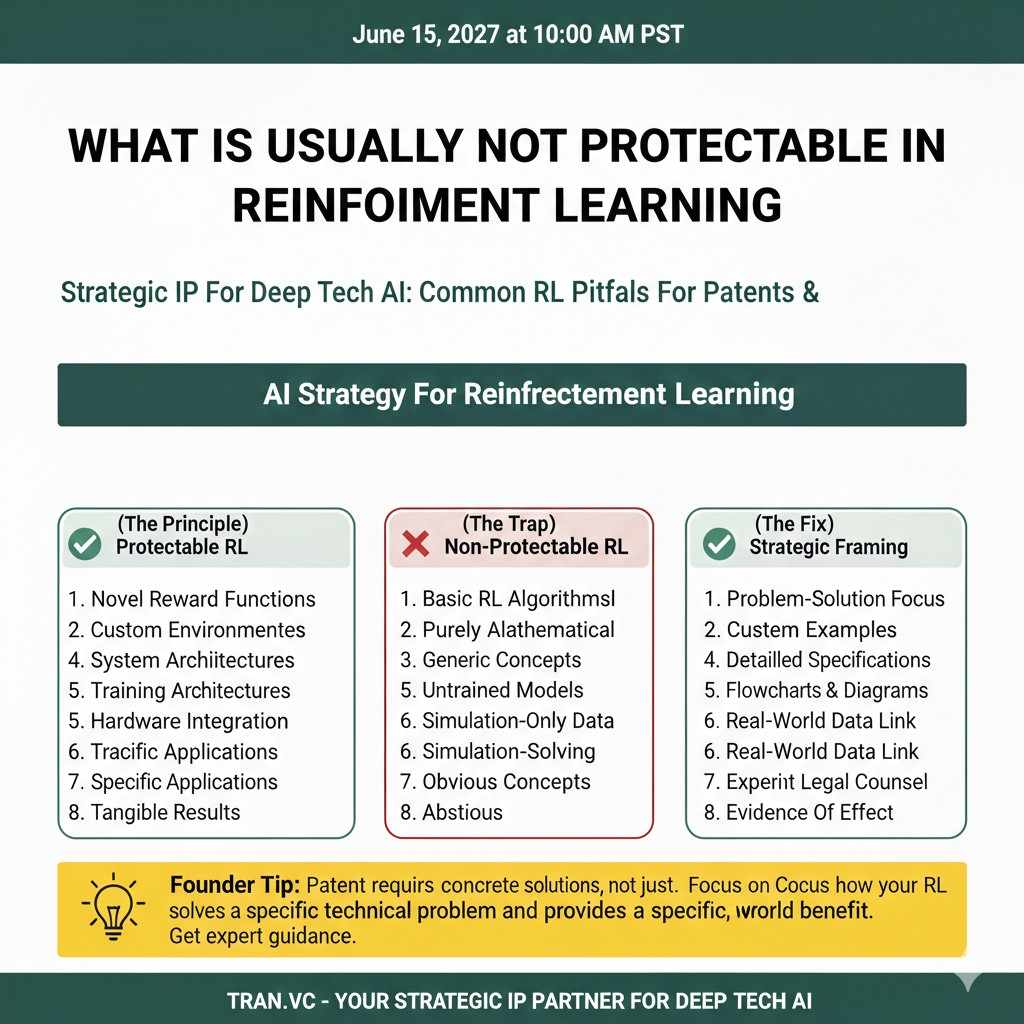

What is usually not protectable in reinforcement learning

The broad idea of “using RL for X” is rarely enough

If your claim reads like “use reinforcement learning to optimize warehouse picking,” it will likely fail. The examiner will find papers and patents that already describe RL used for many tasks, including warehouse work, control, and planning.

Even if your exact task is new, the structure “apply RL to a problem” is usually treated as an obvious use of a known tool. The bar is higher than that.

This is why the best approach is to claim the special steps that make it work, not the fact that you chose RL.

“An agent, a state, an action, and a reward” is considered basic

This is the standard RL loop. If your draft spends most of its time describing the agent observing a state, selecting an action, receiving a reward, and updating a policy, you are spending words on the part nobody can own.

Those details still belong in the specification, because you need a full explanation. But your key claims must go beyond the generic loop. They must cover the extra mechanisms you built.

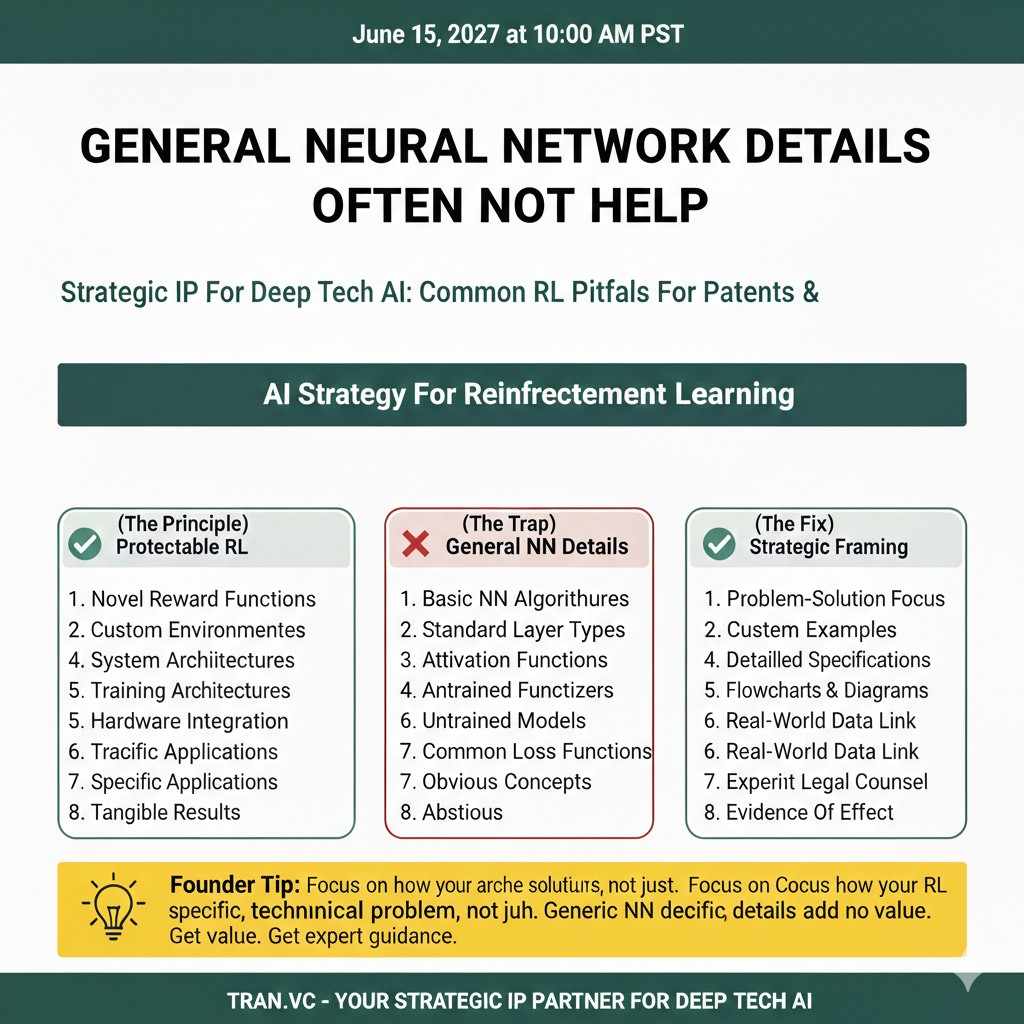

General neural network details often do not help

Saying “the policy is a neural network” is normal. Stating that you use layers, weights, backprop, or embeddings is usually normal too. Unless you have a special network structure tied to a real system constraint, those details tend to be weak.

In patents, “normal ML plumbing” is often treated as expected. If the network design is truly special, you need to show why it was required and what it solves.

If it is not special, do not force it. Focus on the parts that are special.

Pure performance claims are not enough

You cannot patent “higher reward” or “better accuracy” as a result by itself. A patent needs the “how,” not just the “wow.”

If your draft says the system “improves performance,” the examiner will ask, “By doing what?” If the answer is generic RL, the claim will fall.

The stronger move is to tie performance gains to specific steps, and to show those steps solve a known pain in the field.

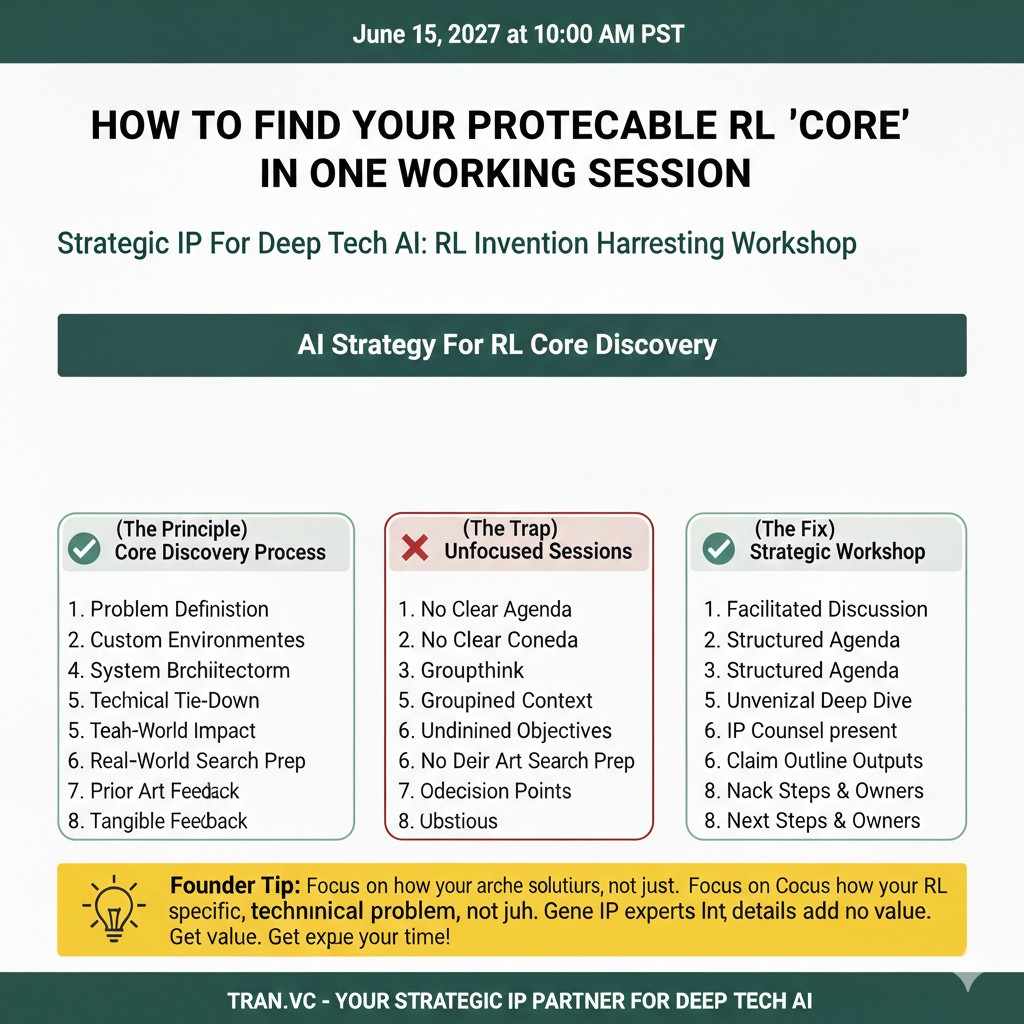

How to find your protectable RL “core” in one working session

Look for the hardest engineering decisions you made

A simple test is to review your repo history and your lab notes. What did you change again and again because the first idea failed? What did you rewrite because training was unstable? What did you add because the robot behaved in a way you did not expect?

That struggle is a map. It points to the technical gaps that standard RL did not close for you.

Those gaps are often where patents live. Not in the “agent learns” story, but in the “agent keeps failing until we added this mechanism” story.

Separate “science choices” from “product locks”

In RL work, you make many choices. Some are just tuning. Some are deep product locks. A product lock is a choice that a competitor cannot change without losing the advantage.

For example, if you discovered that you must compute reward using a certain sensor blend, because any other blend breaks safety or causes drift, that is a product lock.

If you discovered that you must add a constraint checker at a specific point in the control loop, because otherwise the policy will explore into unsafe actions, that is a product lock.

Patents should target product locks whenever possible. Those are the parts that protect real business value.

Capture it early, before it leaks

Founders often wait too long. They want more results. They want more polish. But patent rights can be harmed by early public talks, demo days, blog posts, and even some customer decks.

If RL is a core moat for your startup, treat IP as part of the build. Not a “later” task.

If you want Tran.vc to help you turn RL work into a focused patent plan, you can apply any time here: https://www.tran.vc/apply-now-form/

Writing reinforcement learning patents that actually survive examination

Start with the real technical problem, not the buzzword

A strong RL patent does not open with “This invention relates to reinforcement learning.” It opens with a clear system problem. The problem must feel real and narrow. It should describe what breaks, what fails, or what limits performance in current systems.

For example, instead of saying “existing systems do not optimize well,” describe how a robotic arm trained with standard RL drifts from stable trajectories when sensor noise increases. Or explain how exploration in a warehouse robot leads to unsafe paths when layout changes are frequent.

The more concrete the problem, the easier it is to justify your solution. Examiners are trained to reward clarity. If they understand the pain, they are more open to the fix.

Show why common RL methods do not solve it

You must also explain why known RL approaches are not enough. This does not mean attacking the field. It means stating the limits in a calm and technical way.

Maybe standard reward functions cause sparse feedback, leading to unstable learning. Maybe policy gradients require too much data in a safety-critical environment. Maybe model-based RL fails because the model error compounds in long-horizon tasks.

When you show that common methods hit a wall in your setting, your improvement looks less obvious. That is important. Patent law cares a lot about whether something would have been obvious to try.

Describe the solution as a sequence of technical steps

Once the problem is clear, describe your fix in operational detail. What data flows where? What module computes what? When does the safety layer activate? How is the reward adjusted over time?

This is not the place to be poetic. It is the place to be precise. You want someone skilled in the field to read it and think, “This is a real implementation, not just an idea.”

At the same time, avoid overloading the draft with code-level details. The goal is not to lock yourself into one narrow version. The goal is to capture the key structure that makes your approach work.

Claim the architecture, not just the math

Many teams focus too much on the policy update rule. But often the stronger protection sits in the architecture around the policy.

For instance, if your system uses a pre-filter to limit action space before policy selection, and then applies a post-check using predicted state transitions, that flow can be claimed. If you use a dual-controller system where RL proposes actions and a classical controller adjusts them under constraints, that interaction can be claimed.

Architecture claims often survive better because they describe how parts cooperate. Competitors cannot easily remove one piece without breaking performance.

Use multiple claim angles to reduce risk

A well-written RL patent usually has more than one angle. One claim might focus on reward computation. Another might focus on safety constraints. Another might focus on the integration with hardware or network components.

This layered approach increases the chance that at least some claims survive. It also makes design-around harder.

You should not rely on one “hero claim.” In complex systems like RL products, protection is stronger when spread across different technical features.

Special issues in robotics and physical systems

Physical impact strengthens patent position

If your RL system controls physical hardware, that can help. When a learned policy changes how a motor moves, how a drone flies, or how a device reacts to input, you can show a direct technical effect.

Patent examiners often respond better when the result is not just a number on a screen but a change in physical behavior. That does not mean software-only inventions are weak. It just means physical linkage is easier to explain as a technical improvement.

When drafting, be clear about how the RL output changes actuator signals, timing, or control loops. Show the cause and effect.

Real-time constraints matter

Many RL systems must act under strict timing limits. If you designed your system to meet latency bounds, memory limits, or compute budgets, that is important.

For example, if you created a method to prune action candidates before evaluation to meet a millisecond deadline, that is not just an algorithm trick. It is a system-level improvement tied to real constraints.

Explain these constraints clearly. Show how your design satisfies them where normal RL would fail.

Simulation-to-reality bridges can be strong inventions

A big challenge in RL for robotics is the gap between simulation and the real world. If you built a structured domain randomization method, or a specific calibration loop between sim and hardware, that can be valuable IP.

The invention might lie in how you adjust simulator parameters based on real-world drift. Or in how you update the policy safely when new real data arrives.

The key is to describe how this bridge reduces failure, reduces retraining time, or improves stability. Tie the method to a technical gain, not just convenience.

Reinforcement learning in pure software systems

The “abstract idea” risk is higher

If your RL product lives only in software, such as pricing engines, scheduling tools, or personalization systems, you face a higher risk that the invention is labeled abstract.

This does not mean you cannot patent it. It means you must anchor the invention in technical improvements to computer operation or data processing.

For example, if your RL system reduces server load by changing how decisions are batched or cached, describe that. If it reduces memory use through a specific state representation method, describe that.

Do not rely only on business outcomes like “higher conversion” or “better engagement.” Those are business results, not technical effects.

Data structures and processing flows can be key

In software-only RL systems, protectable features often include special data structures, memory layouts, or distributed training flows.

If you created a state encoding that reduces dimensionality while preserving decision quality, and that encoding has a clear structure, that can be protectable.

If you designed a distributed RL training system that synchronizes gradients in a new way to avoid instability, that can also be protectable.

The theme is the same: focus on the technical mechanism, not the business goal.

Common founder mistakes with RL patents

Waiting until after fundraising

Many founders focus on building and pitching. They think patents can wait. But if RL is core to your value, delay can hurt.

Public demos, conference talks, whitepapers, and even some investor decks can create risk if not handled carefully. In some countries, early disclosure can block future patent rights.

You do not need a full global strategy on day one. But you should at least capture your core inventions before you widely share them.

Writing the draft like a research paper

Academic style writing does not map well to patent style writing. Research papers aim to teach and prove. Patents aim to define boundaries.

A research paper may focus on experimental results and comparisons. A patent should focus on structural features and steps that can be claimed.

If your RL team writes the first draft, that is fine. But it should then be shaped by someone who understands how claims are examined and attacked.

Over-claiming too early

Some teams try to claim everything. They describe a very broad system with few details, hoping to cover the whole space.

This often backfires. Broad claims without support get rejected. Then you spend time narrowing under pressure.

It is usually smarter to start with a strong, well-supported core and then expand in follow-on filings as the product grows.

If you want expert help mapping your RL system into a clear, layered patent plan, Tran.vc works hands-on with technical founders. We invest up to $50,000 in in-kind IP and patent services to help you build real protection before your seed round. You can apply here: https://www.tran.vc/apply-now-form/