If you build autonomous systems, you are not “just shipping code.” You are teaching a machine how to act in the real world. That means safety, planning, and decision logic are not side details. They are the product.

And here is the part most founders miss: these are also the parts you can often protect best with patents.

Not the app wrapper. Not the dashboard. Not the slide deck story. The real value sits inside your autonomy stack: how your system decides what to do next, how it stays safe when the world gets weird, and how it recovers when sensors lie or fail.

This article will show you how to think about patenting autonomous systems in a way that is practical and founder-friendly. We will focus on three areas that show up again and again in strong autonomous patents:

Safety: how you prevent harm and handle risk in real time

Planning: how you create a plan, update it, and pick the best path

Decision logic: how you choose actions under uncertainty and time pressure

I will keep this simple, direct, and usable. No fluffy talk. No long lists. Just the kind of thinking that helps you build an IP moat while you build the product.

If you want help turning your autonomy work into a real patent plan, you can apply anytime here: https://www.tran.vc/apply-now-form/

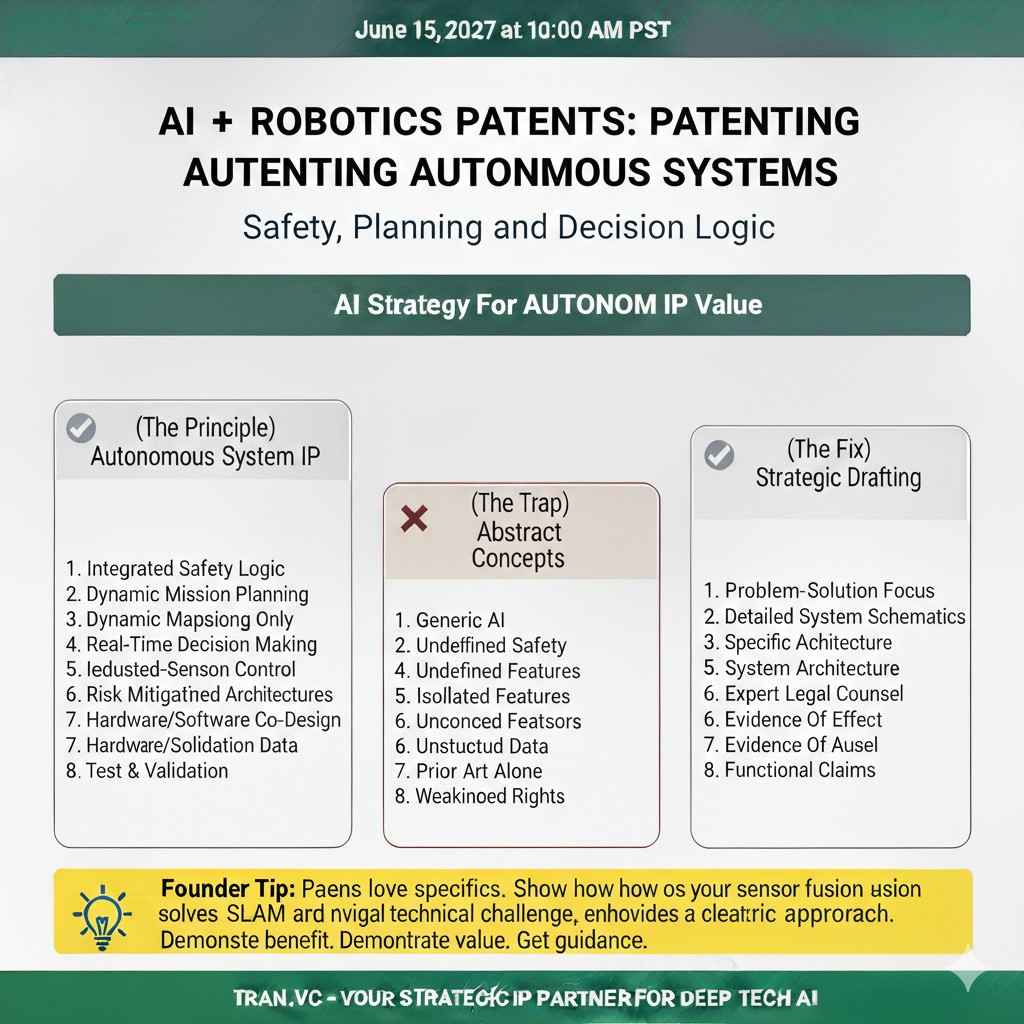

Patenting Autonomous Systems: Safety, Planning, and Decision Logic

Why this matters for founders

When you build an autonomous system, the hardest work is not the hardware or the UI. The hardest work is making the machine act well when the world is messy. That is where your real edge lives, and that is where strong patents often come from.

Patents in autonomy are not about “we use AI.” They are about the exact way your system stays safe, makes plans, and picks actions. If you can explain those steps in a clear, repeatable way, you can often protect them.

Tran.vc helps technical teams turn real engineering into defensible IP. If you are building in robotics or AI, you can apply anytime here: https://www.tran.vc/apply-now-form/

What we will cover in plain terms

This article breaks autonomy into three parts that show up in many strong patent claims. First is safety, meaning how you stop bad outcomes before they happen. Second is planning, meaning how you choose a path or sequence of steps to reach a goal. Third is decision logic, meaning how you act moment by moment when the inputs are not perfect.

These parts overlap in real products, but a patent becomes stronger when you draw clean lines between them. You want the reader to see what belongs to safety, what belongs to planning, and what belongs to action choice.

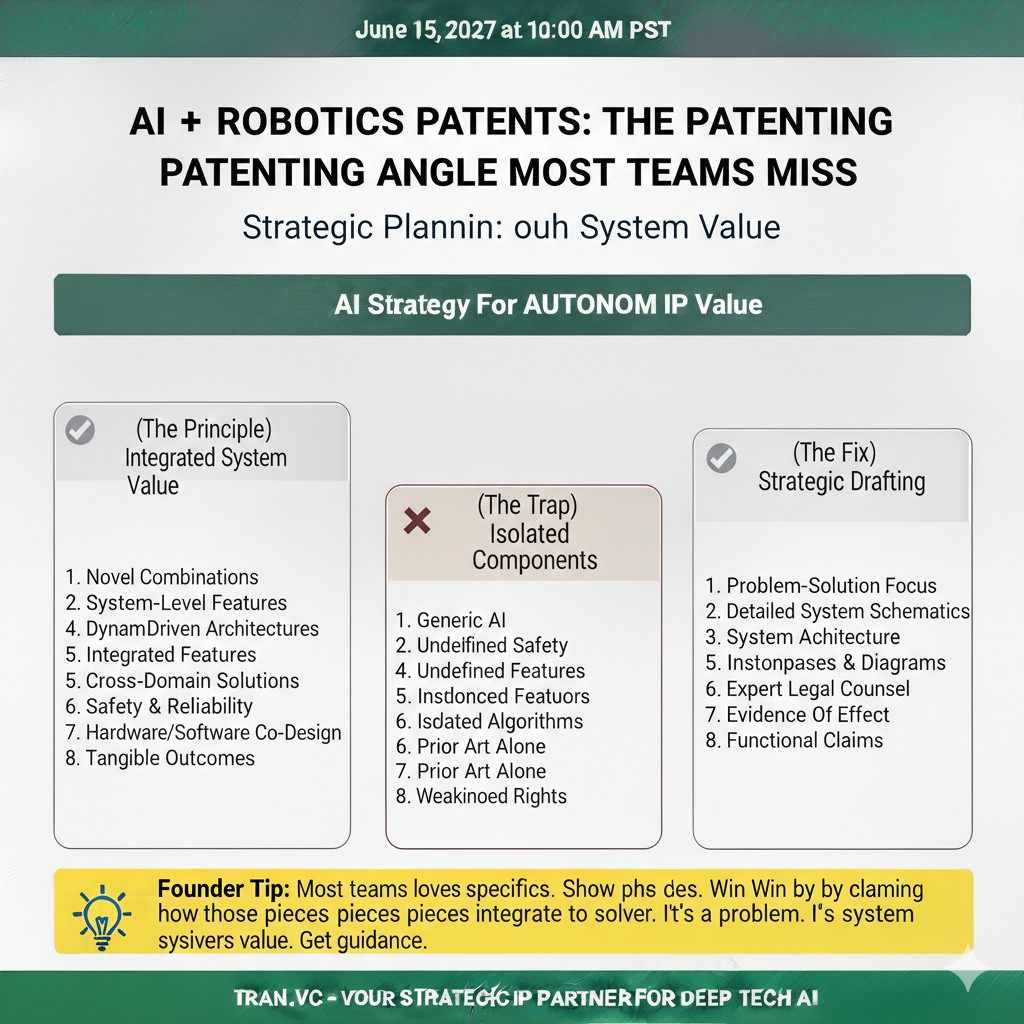

The patenting angle most teams miss

Many teams describe the “model” and ignore the system. But autonomy is a system problem, not a model problem. The best filings often focus on how pieces work together under stress, not how a single model predicts a label.

If your stack has checks, fallbacks, confidence scores, and rules for when to switch modes, you likely have patentable structure. The key is to write it as a method and a system that produces a measurable effect, like fewer collisions, fewer stops, or better task success.

Safety in Autonomous Systems

Safety is not one feature; it is a chain

Safety is rarely a single module you can point to. It is a chain of steps that start with sensing and end with action. A small change anywhere in that chain can change outcomes in a big way.

For patent work, this is good news. A chain gives you many places to claim novelty. You can protect how you detect risk, how you score it, how you pick responses, and how you prove the response worked.

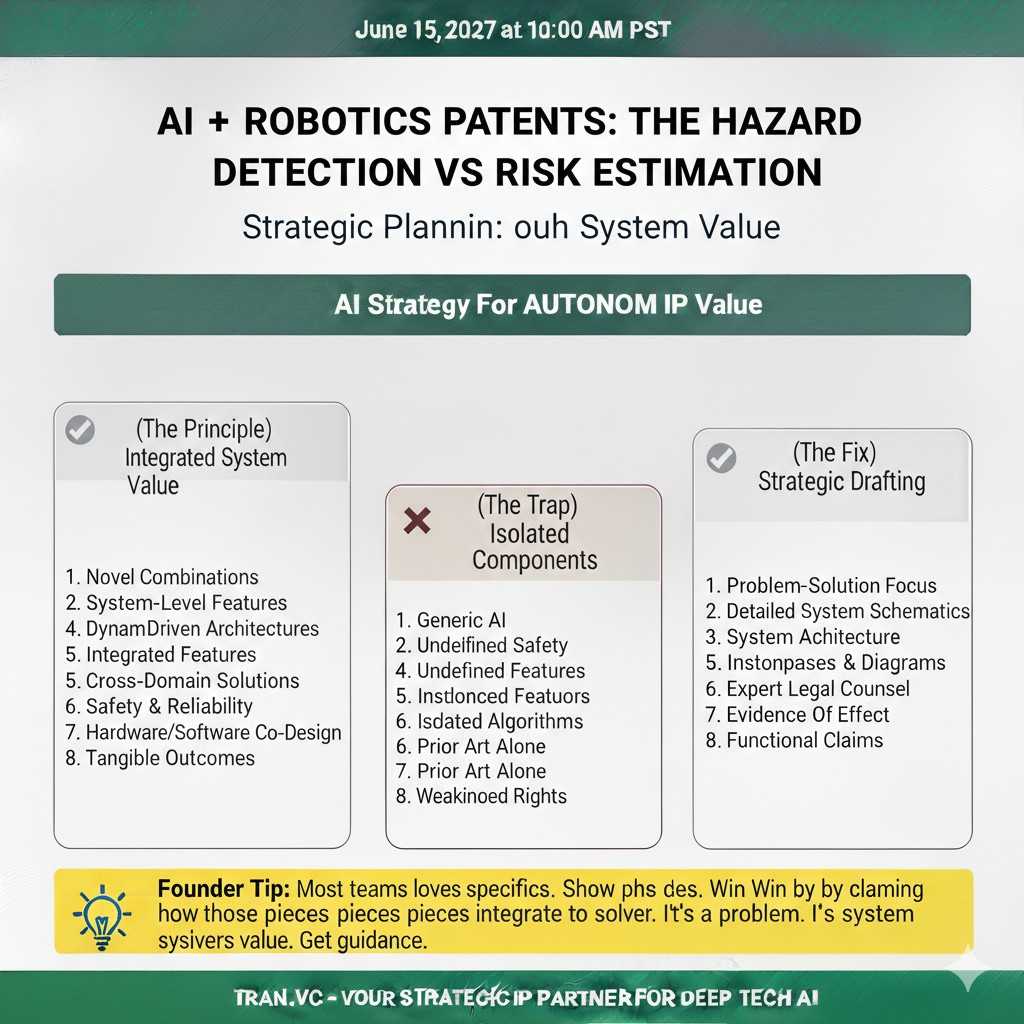

Hazard detection vs risk estimation

Hazard detection is the act of noticing something that could cause harm. Risk estimation is the act of judging how likely harm is and how bad it could be. These are not the same, and mixing them makes your invention sound vague.

In a patent story, you want to separate them clearly. You might detect a hazard using sensor fusion or a learned model. Then you might estimate risk using a cost function, a time-to-collision estimate, or a rule that changes with speed and payload.
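To make the split concrete, here is a minimal sketch of a risk estimate that sits downstream of hazard detection. It assumes a detector has already reported a hazard with a distance and a closing speed. The function names, the 5-second horizon, and the payload scaling are illustrative assumptions, not values from any real product.

```python
import math

def time_to_collision(distance_m: float, closing_speed_mps: float) -> float:
    """Seconds until contact if nothing changes; infinite when not closing."""
    if closing_speed_mps <= 0.0:
        return math.inf
    return distance_m / closing_speed_mps

def risk_score(distance_m: float, closing_speed_mps: float, payload_kg: float,
               ttc_horizon_s: float = 5.0, max_payload_kg: float = 50.0) -> float:
    """Risk in [0, 1]: a likelihood proxy (from TTC) times a severity proxy (from payload)."""
    ttc = time_to_collision(distance_m, closing_speed_mps)
    # Likelihood rises as time-to-collision drops below the horizon (hypothetical 5 s).
    likelihood = max(0.0, 1.0 - ttc / ttc_horizon_s)
    # Severity grows with payload, from 0.5 (empty) to 1.0 (hypothetical 50 kg cap).
    severity = 0.5 + 0.5 * min(payload_kg / max_payload_kg, 1.0)
    return likelihood * severity
```

Detection answers "is something there?" and this step answers "how much should we care?" Keeping them as separate functions mirrors the separation you want in the patent story.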

Safety envelopes and operating limits

A safety envelope is a set of limits the system agrees not to cross. It can be a speed limit, a distance buffer, a torque limit, or a zone boundary. It can also be a “no-go” area tied to map data or to live perception.

Many autonomy products quietly rely on these envelopes, but teams rarely document them well. If your system adapts these limits based on context, that is often where a strong patent lives. For example, a robot may widen its buffer when carrying glass, or reduce speed when camera glare is detected.
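The glass and glare examples can be sketched as a small function that takes a base envelope and returns an adjusted one. This is only an illustration: the `SafetyEnvelope` type, the base limits, and the scaling factors are all assumed for the example.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class SafetyEnvelope:
    max_speed_mps: float
    min_clearance_m: float

# Hypothetical base limits for a small indoor robot.
BASE = SafetyEnvelope(max_speed_mps=1.5, min_clearance_m=0.5)

def adapt_envelope(base: SafetyEnvelope, fragile_payload: bool,
                   glare_detected: bool) -> SafetyEnvelope:
    """Return a tightened copy of the envelope; the base is never mutated."""
    env = base
    if fragile_payload:
        # Widen the distance buffer when carrying something fragile (assumed 2x).
        env = replace(env, min_clearance_m=env.min_clearance_m * 2.0)
    if glare_detected:
        # Slow down when the camera is degraded by glare (assumed 0.5x).
        env = replace(env, max_speed_mps=env.max_speed_mps * 0.5)
    return env

print(adapt_envelope(BASE, fragile_payload=True, glare_detected=True))
```

Writing the envelope as explicit data, and the adaptation as explicit conditions, gives you the "inputs, thresholds, outputs" record that later claim drafting depends on.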

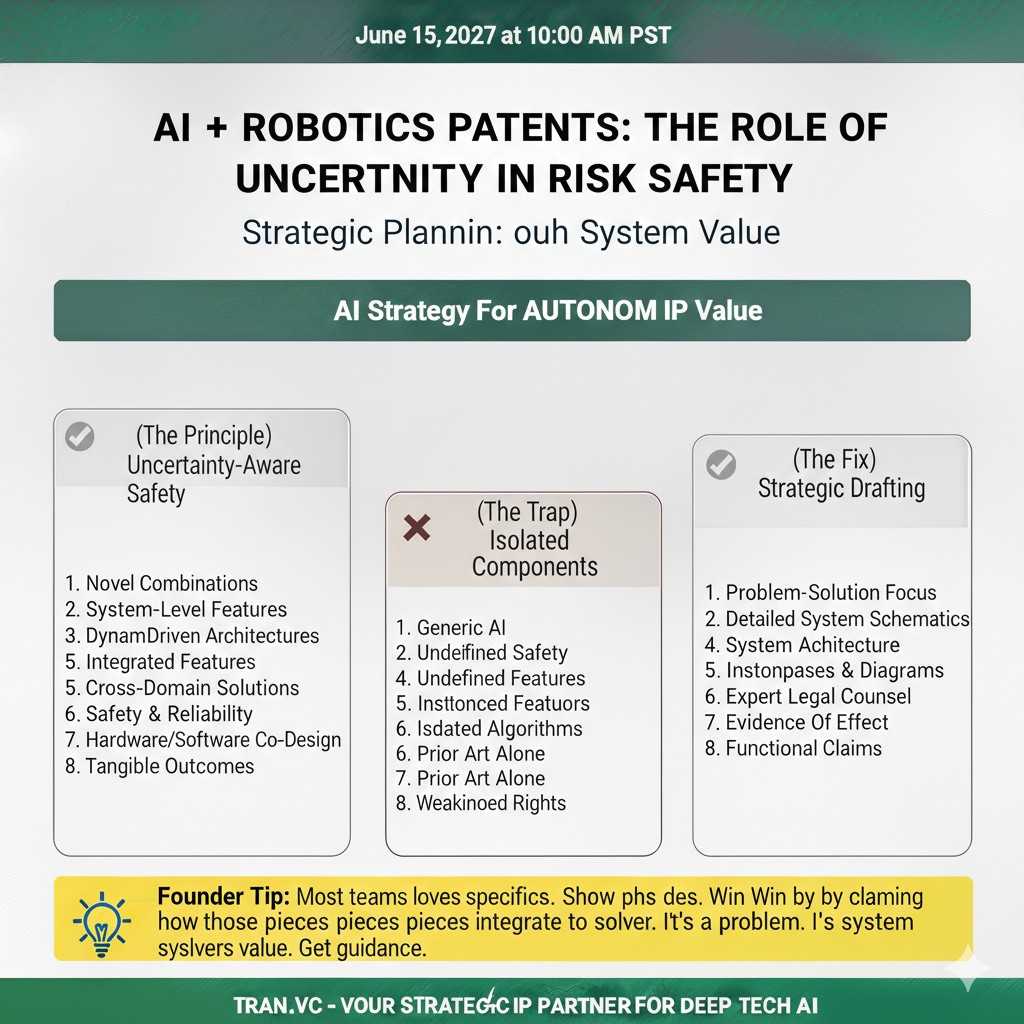

The role of uncertainty in safety

Autonomous systems do not fail only because the world is hard. They fail because the system acts too confidently when it should be cautious. A strong safety design treats uncertainty as a first-class input.

If your system computes uncertainty and uses it to change behavior, document it. This can include confidence scores from perception, disagreement between sensors, or a stability score from tracking. The patent value is in how uncertainty changes the safety envelope or triggers a safe mode.
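One way to picture uncertainty as an input is a speed limit that shrinks as uncertainty grows, with a hard switch to a safe mode past a threshold. The sketch below assumes two range sensors and a perception confidence in [0, 1]. The names and the 0.6 threshold are hypothetical.

```python
def sensor_disagreement(lidar_range_m: float, camera_range_m: float) -> float:
    """Relative disagreement between two range estimates, in [0, 1]."""
    largest = max(lidar_range_m, camera_range_m)
    if largest <= 0.0:
        return 0.0
    return abs(lidar_range_m - camera_range_m) / largest

def speed_limit_under_uncertainty(nominal_mps: float, perception_confidence: float,
                                  disagreement: float,
                                  safe_mode_threshold: float = 0.6):
    """Return (speed_limit_mps, mode). The worst uncertainty signal drives the result."""
    uncertainty = max(1.0 - perception_confidence, disagreement)
    if uncertainty >= safe_mode_threshold:
        # Too uncertain to keep moving: trigger the safe mode (here, a stop).
        return 0.0, "safe_mode"
    # Otherwise scale the envelope's speed limit down in proportion to uncertainty.
    return nominal_mps * (1.0 - uncertainty), "normal"
```

The part worth documenting is the mapping itself: which signals count as uncertainty, how they are combined, and what behavior changes at which level.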

Safe modes and graceful degradation

Graceful degradation means the system gets less capable without getting unsafe. It may switch from full autonomy to assisted mode, slow down, or restrict actions to a smaller set. The goal is to avoid a sharp cliff where one bad sensor reading leads to a dangerous action.

From a patent view, you can claim the triggers, the switching logic, and the reduced capability plan. If your robot can keep working in a limited way when a sensor fails, that is not just reliability. It is a safety method with real business value.

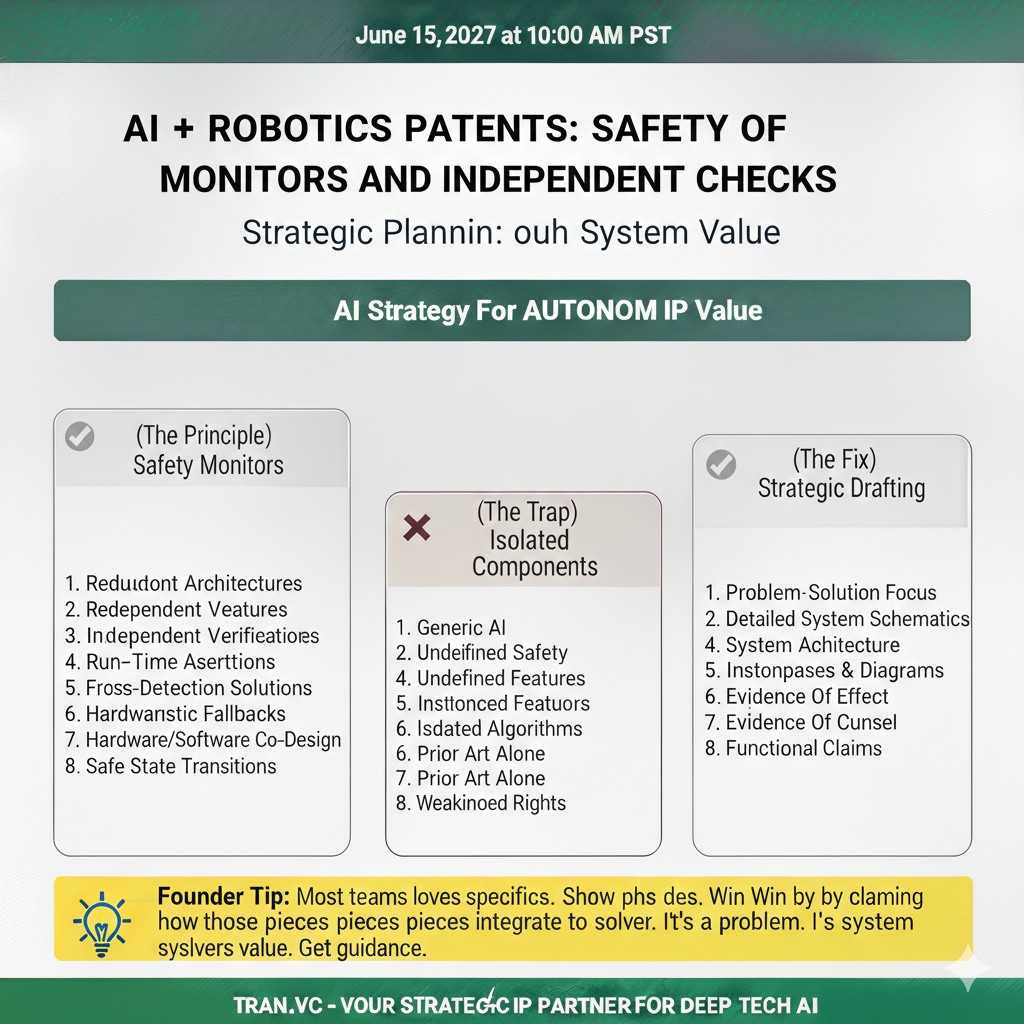

Safety monitors and independent checks

Many teams build a main autonomy brain and then add a safety monitor that watches it. The monitor can be a simple model, a set of rules, or a second planner. What matters is that it can veto unsafe actions.

A strong patent often describes the monitor as separate from the primary controller. It explains what data the monitor uses, how it evaluates the planned action, and what happens when it flags risk. This separation is important because it shows structure, not just an “idea.”
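As a rough sketch of that separation, the monitor below never looks inside the primary controller. It only sees the proposed command and an independent obstacle distance, and it can replace the command with a stop. The stopping-distance model and all constants are simplifying assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Command:
    speed_mps: float
    steering_rad: float

STOP = Command(speed_mps=0.0, steering_rad=0.0)

def stopping_distance_m(speed_mps: float, max_decel_mps2: float = 2.0,
                        reaction_s: float = 0.2) -> float:
    """Distance covered during the reaction delay plus constant-deceleration braking."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * max_decel_mps2)

def monitor(proposed: Command, nearest_obstacle_m: float, margin_m: float = 0.3):
    """Return (command_to_execute, vetoed). The monitor can only pass or veto."""
    needed = stopping_distance_m(proposed.speed_mps) + margin_m
    if needed > nearest_obstacle_m:
        return STOP, True
    return proposed, False
```

Note what the sketch makes explicit: the data the monitor uses, the test it applies, and the action taken on a flag. Those are the three things a filing needs to spell out.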

Scenario-based safety logic

A system may act differently in different scenarios. A delivery robot might treat driveways differently than sidewalks. A warehouse robot may react differently when humans are near. A drone may change rules near airports or crowds.

If your system classifies scenarios and changes safety behavior based on that label, write it as a clear method. The novelty is often in how you define the scenarios, how you detect them, and how you swap safety parameters in real time.
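A simple way to express this is a scenario label that indexes into a table of safety parameters. The labels, thresholds, and values below are made up for a delivery robot example. A real classifier would be far richer, but the structure is the point.

```python
from typing import Optional

# Hypothetical per-scenario safety parameters.
SCENARIO_PARAMS = {
    "sidewalk": {"max_speed_mps": 1.5, "min_clearance_m": 0.5},
    "driveway": {"max_speed_mps": 0.8, "min_clearance_m": 1.0},
    "human_nearby": {"max_speed_mps": 0.5, "min_clearance_m": 1.5},
}
# Unknown labels fall back to the most conservative settings.
CONSERVATIVE_DEFAULT = {"max_speed_mps": 0.3, "min_clearance_m": 2.0}

def classify_scenario(on_driveway: bool, nearest_human_m: Optional[float]) -> str:
    """Toy classifier: human proximity takes priority over location."""
    if nearest_human_m is not None and nearest_human_m < 3.0:
        return "human_nearby"
    if on_driveway:
        return "driveway"
    return "sidewalk"

def safety_params(label: str) -> dict:
    """Swap in the parameter set for the current scenario label."""
    return SCENARIO_PARAMS.get(label, CONSERVATIVE_DEFAULT)

print(safety_params(classify_scenario(on_driveway=True, nearest_human_m=None)))
```

The priority order among scenarios and the fallback for unknown labels are design choices, and they are often exactly the kind of detail that separates your method from common practice.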

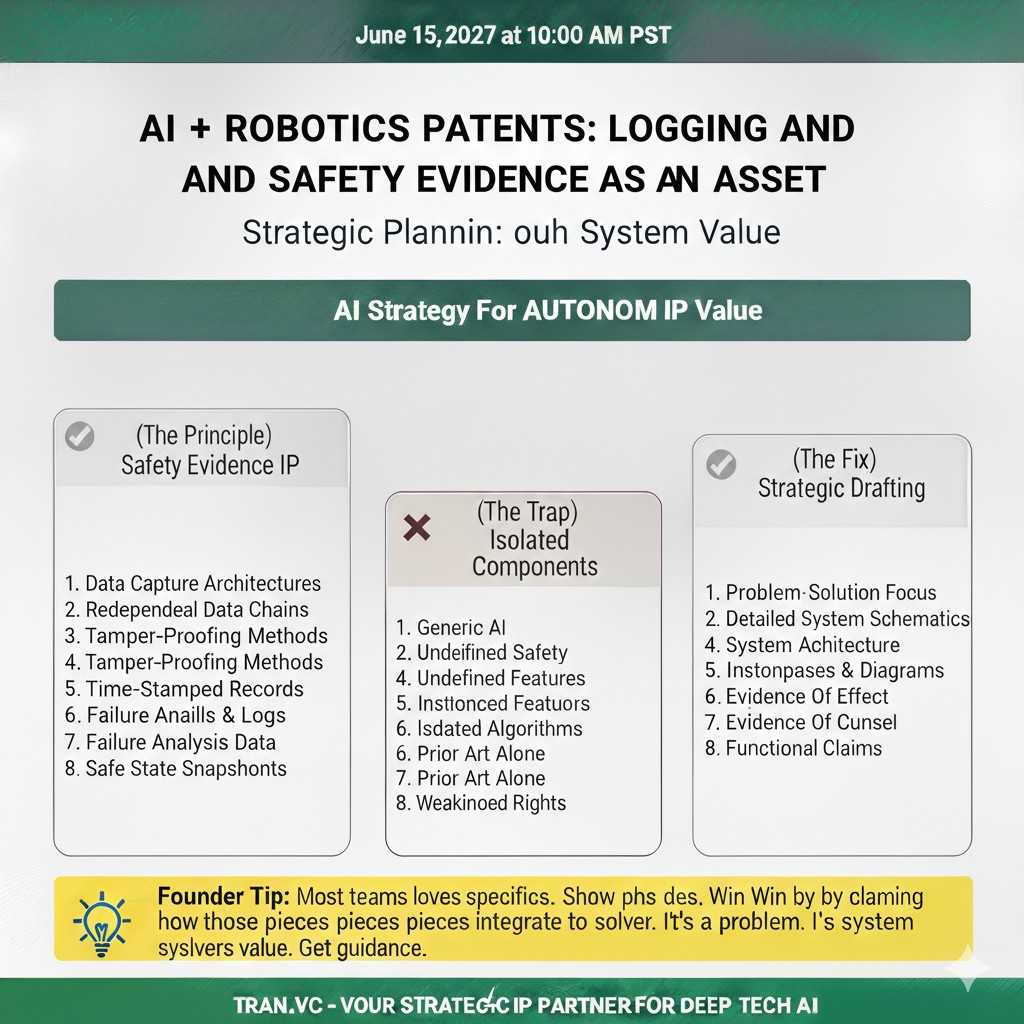

Logging and safety evidence as an IP asset

Many founders think logging is only for debugging. But for autonomy, logging can be part of safety itself. If your system logs certain signals, compresses them, and uses them to audit safety events, that can be part of a patentable workflow.

This matters because real buyers and regulators care about evidence. If you can show a method for capturing and replaying safety-critical moments, you are not only safer. You are also easier to certify and easier to insure, which is a competitive edge.

What to capture now so you can patent later

If you want to patent safety work, you need clean notes about how the system works. Not marketing notes, but engineering truth. Capture what inputs you use, what thresholds exist, what you do when thresholds are crossed, and what outputs change as a result.

Also capture what is new compared to common practice. Many teams assume their approach is “standard,” but small details can be novel. The moment you can say, “we do X only when Y and Z happen together,” you are getting close to a claimable pattern.

If you want Tran.vc to help you map your safety features into patent claims, you can apply anytime here: https://www.tran.vc/apply-now-form/

Planning in Autonomous Systems

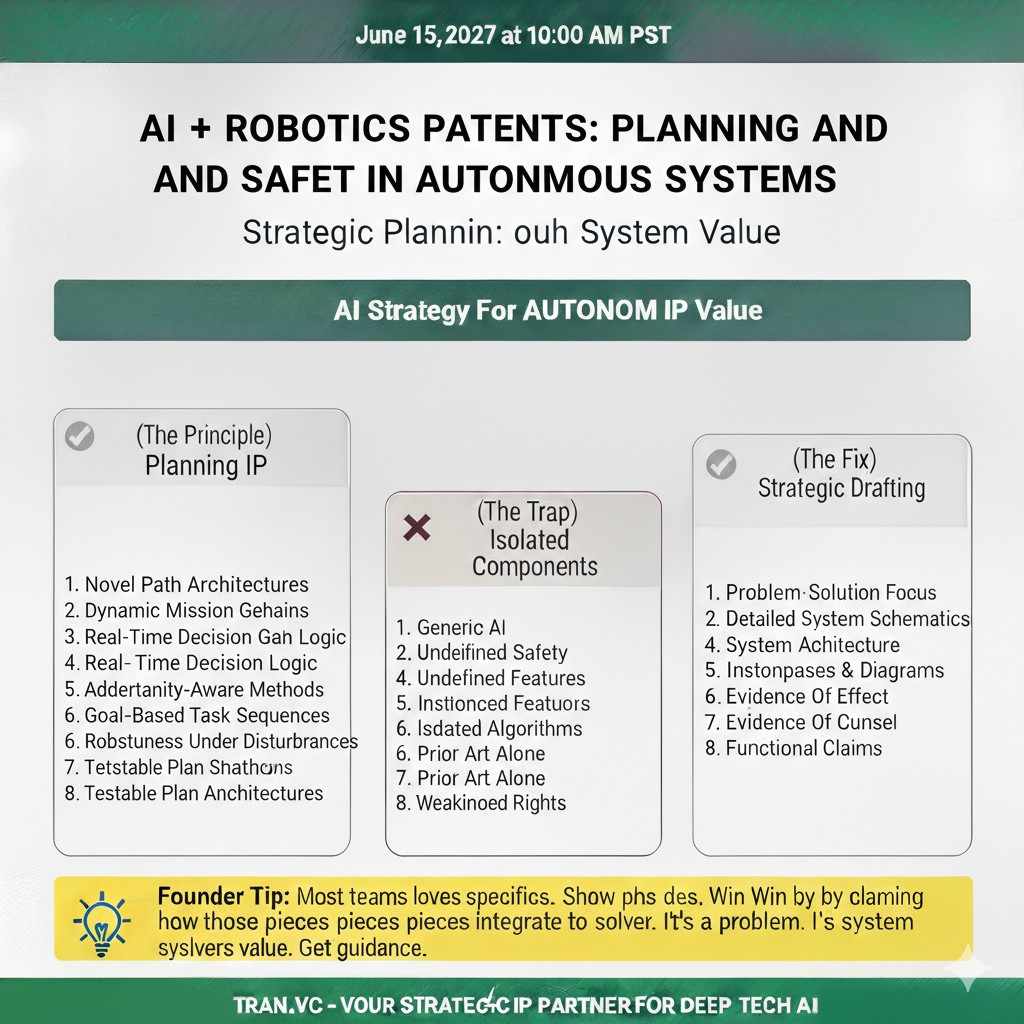

Planning is the bridge between goals and actions

Planning is how your system turns a goal into a path or sequence of steps. In robotics, that could mean a route through space, a set of pick-and-place moves, or a schedule of tasks. In autonomous vehicles, it could mean a lane plan, a merge plan, or a stop plan.

For patent work, planning is powerful because it has structure. You can describe the inputs, the objective, the constraints, and the output plan. You can also show how the plan updates when the world changes.

Global planning vs local planning

Global planning is about the big picture, like “get from dock A to shelf B.” Local planning is about the near future, like “avoid that cart and still move forward.” Both matter, but they solve different problems.

If your invention sits in how global and local planners interact, make that separation clear. For example, the global planner may produce a corridor, and the local planner may produce a smooth path inside it. The interface between them is often where a patentable idea hides.

Constraints are where planning becomes defensible

A plan is not just the shortest path. Real systems must obey rules, safety limits, comfort limits, battery limits, and task limits. When you add constraints, the planner becomes specific to your product and your market.

If your planner handles constraints in a new way, document it. Maybe you encode constraints as soft costs that adapt with uncertainty. Maybe you switch constraint sets based on the scenario label. These are the details that make a patent real.

Replanning and plan stability

Autonomy requires replanning because the world does not hold still. But if you replan too often, the system becomes jittery and hard to predict. Plan stability is the art of changing the plan when needed, but not changing it when you do not need to.

If you have logic that balances responsiveness with stability, that can be a strong invention. Patents here often describe what triggers replanning, how plan quality is measured over time, and how the system avoids oscillating between choices.
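One common way to get this balance is hysteresis: only switch plans when the new one is clearly better, and not too soon after the last switch. The sketch below is a minimal version of that idea. The 15% improvement ratio and 1-second dwell time are assumed values.

```python
def should_replan(current_cost: float, candidate_cost: float,
                  seconds_since_replan: float, current_plan_blocked: bool,
                  improvement_ratio: float = 0.15, min_dwell_s: float = 1.0) -> bool:
    """Decide whether to swap the active plan for a candidate plan."""
    if current_plan_blocked:
        # Responsiveness wins: an infeasible plan is always replaced.
        return True
    if seconds_since_replan < min_dwell_s:
        # Stability wins: do not flip plans right after a switch.
        return False
    # Switch only for a clear improvement, which damps oscillation
    # between two plans of nearly equal cost.
    return candidate_cost < current_cost * (1.0 - improvement_ratio)
```

Each branch corresponds to something you can describe in a filing: a trigger, a dwell condition, and a quality margin.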

Predicting others as part of planning

Planning is not only about your robot. It is also about other agents, like humans, forklifts, cars, or drones. If your planner uses prediction of others to pick safer or smoother paths, that is key.

The patentable part is not “we predict.” The patentable part is how prediction flows into planning. For example, you might create future occupancy zones, assign them costs, and then choose a path that minimizes expected conflict. Write the pipeline clearly.
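Here is one small, hedged way to sketch that pipeline on a grid. It assumes a predictor has already produced occupancy probabilities per time step and cell, and that candidate paths visit one cell per time step. The data layout and the conflict weight are illustrative assumptions.

```python
def expected_conflict(path, occupancy) -> float:
    """Sum of predicted occupancy probabilities along the path.

    path: list of (x, y) cells, one per time step.
    occupancy: dict mapping (time_step, (x, y)) -> probability another agent is there.
    """
    return sum(occupancy.get((t, cell), 0.0) for t, cell in enumerate(path))

def choose_path(paths, occupancy, conflict_weight: float = 10.0):
    """Pick the path with the lowest length plus weighted expected conflict."""
    def cost(path):
        return len(path) + conflict_weight * expected_conflict(path, occupancy)
    return min(paths, key=cost)

# A forklift is predicted to be in cell (1, 0) at time step 1 with probability 0.8.
occupancy = {(1, (1, 0)): 0.8}
direct = [(0, 0), (1, 0), (2, 0)]
detour = [(0, 0), (0, 1), (1, 1), (2, 1), (2, 0)]
print(choose_path([direct, detour], occupancy))
```

With these numbers the longer detour wins, because the direct path crosses a cell that is likely to be occupied at the moment the robot would arrive.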

Planning with partial maps and changing spaces

Many robots work in spaces that are not fully mapped. Warehouses change. Streets have construction. Farms change with seasons. A planner that assumes a perfect map will break.

If your planner can operate with partial maps, patch maps on the fly, or reason about unknown areas in a controlled way, capture it. The core is how you represent the unknown and how you bound risk while still making progress.

Task planning vs motion planning

Task planning is about “what to do.” Motion planning is about “how to move.” Many teams blend them, but patents are clearer when you separate them.

If your system ties task choices to motion difficulty, that can be novel. For example, choosing a grasp that reduces motion risk, or choosing an order of tasks that reduces travel through risky zones. This is planning that touches business outcomes like speed and safety.

Planning outputs that make the system auditable

A good plan is not only executable. It is also explainable, both to other parts of the system and to humans. Some teams output a plan plus a reason score, the constraint set used, and a set of rejected options.

This can become part of an IP story because it makes the system easier to debug and safer to deploy. If your planner produces these extra artifacts and uses them in later decisions, you can describe it as a closed loop, not a one-time computation.

Tran.vc often helps founders turn planning logic into claim language without exposing secrets in public. If you want that kind of support, apply here: https://www.tran.vc/apply-now-form/

Decision Logic in Autonomous Systems

Decision logic is the “last mile” of autonomy

Decision logic is how your system chooses the next action at a given moment. It can pick a velocity, a steering angle, a grasp, a route step, or a tool setting. This is where perception, planning, and safety all meet.

For patents, decision logic becomes strong when it is tied to measurable system outputs. If your logic reduces near-misses, reduces wear, or improves task success, those effects can support the value of the invention.

Policy vs rules vs hybrid systems

Some autonomy stacks rely on rules, like if-then checks and state machines. Some rely on learned policies. Many real products are hybrid, using learning for perception and rules for safety.

The important thing is not which camp you are in. The important thing is how you combine them. If your learned policy proposes actions and a rules layer filters them, that structure can be claimed. If your rules adapt based on model confidence, that is also a specific method.
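Both patterns fit in a few lines. In the sketch below, a learned policy (not shown) is assumed to return scored action proposals, and a rules layer filters them. One of the rules tightens as model confidence drops. The action format, the fallback, and the cap values are hypothetical.

```python
# Hypothetical fallback used when every proposal is rejected.
FALLBACK = {"name": "stop", "speed_mps": 0.0}

def speed_cap_rule(model_confidence: float, base_cap_mps: float = 2.0):
    """Build a rule whose speed cap shrinks as model confidence drops."""
    cap = base_cap_mps * max(0.0, min(model_confidence, 1.0))
    def rule(action: dict) -> bool:
        return action["speed_mps"] <= cap
    return rule

def select_action(proposals, rules) -> dict:
    """proposals: list of (action, policy_score). Keep actions passing all rules,
    then take the highest-scoring survivor, or the fallback if none survive."""
    allowed = [(action, score) for action, score in proposals
               if all(rule(action) for rule in rules)]
    if not allowed:
        return FALLBACK
    return max(allowed, key=lambda pair: pair[1])[0]
```

The policy ranks and the rules gate. Because the gate depends on confidence, the same proposals can lead to different actions on a clear day and a foggy one.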

State machines and mode switching

Mode switching is common in autonomy. A car may switch between cruising, following, stopping, and yielding. A warehouse robot may switch between transit, docking, lifting, and waiting. Each mode has its own rules and limits.

If your mode switching uses a unique signal mix, or if it prevents deadlocks in a new way, document it. Many real failures come from bad switching, so good switching logic is valuable and often protectable.
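A minimal sketch of mode switching with one deadlock guard might look like the following. It uses the warehouse modes named above, plus a hypothetical `request_help` mode and a made-up 30-second wait limit. The event names are also assumptions.

```python
# Allowed transitions: (current_mode, event) -> next_mode.
TRANSITIONS = {
    ("transit", "arrived_at_dock"): "docking",
    ("docking", "docked"): "lifting",
    ("lifting", "load_secured"): "transit",
    ("transit", "path_blocked"): "waiting",
    ("waiting", "path_clear"): "transit",
}
MAX_WAIT_S = 30.0  # hypothetical limit before escalating

def next_mode(mode: str, event, seconds_in_mode: float) -> str:
    """Return the next mode. Unknown (mode, event) pairs keep the current mode."""
    if mode == "waiting" and event != "path_clear" and seconds_in_mode >= MAX_WAIT_S:
        # Deadlock guard: do not wait forever for a path that never clears.
        return "request_help"
    return TRANSITIONS.get((mode, event), mode)
```

The table makes every legal switch visible, and the timeout is an explicit answer to "what happens if the exit condition never arrives?"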

Handling edge cases without freezing

A classic failure pattern is freezing when the system sees something new. Some systems stop too often, which makes them safe but unusable. Other systems push through and become unsafe. Decision logic must balance this.

If you have a method for handling edge cases that keeps the system both safe and useful, that is prime IP. This could include slow-drive behaviors, probing actions, or requesting human help only after a structured test.

Arbitration between competing objectives

Decision logic often needs to trade off goals. You may want speed, but also smoothness. You may want shortest path, but also lowest risk. You may want to finish the task, but also preserve battery.

If your system has a clear method for weighing these goals, you can often write a strong patent story. The key is to show how weights change with context, and how the chosen action is the result of that weighting, not a vague “optimization.”
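To show what "weights change with context" can mean, here is a small sketch. Each candidate action has a cost per objective, and the context decides how much each objective counts. The objectives, weights, and thresholds are invented for the example.

```python
def context_weights(battery_fraction: float, humans_nearby: bool) -> dict:
    """Return objective weights for the current context (lower total cost wins)."""
    weights = {"time": 1.0, "risk": 1.0, "energy": 1.0}
    if humans_nearby:
        weights["risk"] = 5.0    # risk dominates near people
    if battery_fraction < 0.2:
        weights["energy"] = 4.0  # preserve battery when it is low
    return weights

def pick_action(candidates: dict, weights: dict) -> str:
    """candidates: action name -> {objective: cost}. Return the cheapest action."""
    def total(name: str) -> float:
        return sum(weights[k] * candidates[name][k] for k in weights)
    return min(candidates, key=total)

candidates = {
    "fast":    {"time": 1.0, "risk": 0.6, "energy": 0.8},
    "careful": {"time": 2.0, "risk": 0.1, "energy": 0.5},
}
print(pick_action(candidates, context_weights(battery_fraction=0.9, humans_nearby=False)))
print(pick_action(candidates, context_weights(battery_fraction=0.9, humans_nearby=True)))
```

The same two candidates produce different choices in the two contexts, and you can trace exactly why. That traceability is what turns "optimization" into a describable method.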

Real-time decision timing and compute limits

Autonomous systems live under compute limits. Decisions must happen at fixed rates, and delays can create danger. If your system schedules compute in a smart way, that can be patentable.

For example, you might run heavy planning less often, and run a lightweight safety check more often. Or you might adapt the decision rate based on speed and proximity. These are concrete system designs that produce real-world benefits.
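Both ideas can be sketched briefly. The first function runs a light safety check on every tick and the heavy planner only every few ticks. The second picks a decision period from speed and proximity. The tick counts, period bounds, and the divide-by-ten rule are assumptions chosen for illustration.

```python
def run_ticks(n_ticks: int, plan_every: int = 10) -> list:
    """Simulate a control loop: plan rarely, check safety every tick."""
    log = []
    for tick in range(n_ticks):
        if tick % plan_every == 0:
            log.append("plan")          # heavy, low-rate work
        log.append("safety_check")      # lightweight, every tick
    return log

def decision_period_s(speed_mps: float, nearest_obstacle_m: float,
                      min_period_s: float = 0.02, max_period_s: float = 0.2) -> float:
    """Shorter period (higher decision rate) when moving fast or close to obstacles."""
    if speed_mps <= 0.0:
        return max_period_s
    time_to_reach_s = nearest_obstacle_m / speed_mps
    # Aim for roughly ten decisions before reaching the obstacle, within bounds.
    return min(max_period_s, max(min_period_s, time_to_reach_s / 10.0))
```

The rate split and the rule that adapts it are system-level design choices, which is why they tend to read well as claimable structure.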

Learning from interventions and near-misses

Some systems learn from human takeovers, overrides, or near-miss events. If your system tags these moments, builds training sets, and updates parameters in a controlled loop, that is more than “we retrain.”

The IP value is in the pipeline and the controls: how you choose which events matter, how you label them, how you prevent bad updates, and how you validate changes before deployment. This is a decision logic story tied to safety and reliability.

Turning decision logic into patent claims without oversharing

Founders worry that patents force them to reveal too much. In practice, good drafting shares enough to support the claims without giving away every tuned number. You describe the structure and the steps, not the entire codebase.

A practical approach is to write decision logic as stages with clear inputs and outputs. Then you claim the stages, the switching rules, and the safety checks. The strongest patents also describe multiple variations of the approach, so competitors cannot design around you with small changes.

If you want Tran.vc to help you package your decision logic into a defensible filing, you can apply here: https://www.tran.vc/apply-now-form/