AI is moving fast inside real products. That is exciting. It is also risky.

Most teams do not fail because they ignore risk. They fail because they do not see it early. Or they see it, but they do not have a clear way to write it down, rank it, and fix it before launch.

This is what an AI risk assessment is for. It is not a legal form. It is not a “compliance thing.” It is a simple, repeatable way to answer four hard questions:

What can go wrong?

How bad would that be?

How likely is it?

What will we do about it, and who owns the work?

If you are building in robotics, AI, or any deep tech area, this matters even more. Your system may touch the real world, the body, money, sensitive data, or key business choices. Small model mistakes can become big product problems.

This guide gives you a practical template your team can actually use. It is meant for builders. It is written so you can run the process in a normal sprint, not a “special project.” It is also meant to help you create proof for partners, customers, and future investors that you take safety and trust seriously.

And if you are building something truly new—new model, new sensor stack, new control loop, new pipeline, new agent—risk work also helps your IP. Why? Because when you map risks and mitigations, you often uncover what is truly unique in your method. That can turn into patent-ready material later. Tran.vc helps technical teams turn that kind of work into defensible IP, without rushing into early VC pressure. If you want to build your moat early, you can apply anytime here: https://www.tran.vc/apply-now-form/

Before we start, let’s set one rule that keeps this process honest:

A risk assessment is not a promise that nothing will go wrong. It is proof that you thought like an adult about what could go wrong, and you built in guardrails.

Now, what does a “good” AI risk assessment look like in practice?

It looks like a short document that anyone on the team can read in 15 minutes. It covers the full system, not only the model. It uses plain language. It has owners and due dates. And it is updated over time, not written once and forgotten.

Most teams get stuck because they do not know what to write. So we will make it easy. Think of the assessment as five blocks:

- What the system is and where it will be used.

- What the system can and cannot do.

- What could go wrong across the full lifecycle.

- What you will do to lower the risk.

- How you will monitor it after launch.

You can do this without heavy lists. You can do it with clear paragraphs and a small set of tables if you want. The point is clarity, not paperwork.

The practical template: start with a one-page “system card”

Your first page should be a “system card.” It is a quick snapshot that makes the rest of the assessment easy.

Write it like this:

System name and version.

Example: “AssistBot Picking Model v0.9”

What it does in one sentence.

Example: “Suggests the best grasp point and route for a warehouse picking arm.”

Where it runs.

Example: “On-device GPU in the robot, with a cloud dashboard for logs.”

Who uses it.

Example: “Warehouse techs and shift leads.”

What it touches.

Example: “Cameras, depth sensors, gripper, arm motion planner, inventory system.”

What it can impact.

Example: “Worker safety, package damage, inventory counts, downtime.”

What decisions it makes and what decisions it only suggests.

This line is critical. If the model is only a helper, say so. If it can trigger actions, say so.

The best-case output.

Example: “Correct grasp point, no collisions, stable pick.”

The worst-case output.

Example: “Wrong grasp, drop, collision, injury risk.”

This page alone often reveals where the real risk sits. It is rarely “the model is wrong.” It is usually “the model is wrong, and the system trusts it too much.”

If you do one thing after reading this intro, do this: write the system card today.
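If your team keeps docs next to the code, the system card can also live as a small structured record. The sketch below is one way to do that in Python. The `SystemCard` class and its field names are hypothetical and mirror the fields listed above. `missing_fields()` only flags blanks so gaps show up in review. The "decides" and "suggests" lists may each be empty, but not both.

```python
from dataclasses import dataclass, field


@dataclass
class SystemCard:
    """One-page snapshot of one model or capability (hypothetical schema)."""
    name: str
    version: str
    purpose: str            # what it does, in one sentence
    runs_on: str            # where inference happens
    users: list[str]
    touches: list[str]      # sensors, systems, and data it reads or writes
    can_impact: list[str]   # what a failure can affect
    decides: list[str] = field(default_factory=list)   # actions it triggers itself
    suggests: list[str] = field(default_factory=list)  # outputs a human acts on
    best_case: str = ""
    worst_case: str = ""

    def missing_fields(self) -> list[str]:
        """List fields left empty, so gaps are visible in review."""
        missing = [
            name for name, value in vars(self).items()
            if name not in ("decides", "suggests") and not value
        ]
        # Either list may be empty, but the card must say what the system
        # decides or what it only suggests.
        if not self.decides and not self.suggests:
            missing.append("decides/suggests")
        return missing


card = SystemCard(
    name="AssistBot Picking Model",
    version="0.9",
    purpose="Suggests the best grasp point and route for a warehouse picking arm.",
    runs_on="On-device GPU in the robot, with a cloud dashboard for logs.",
    users=["Warehouse techs", "Shift leads"],
    touches=["Cameras", "Depth sensors", "Gripper", "Arm motion planner", "Inventory system"],
    can_impact=["Worker safety", "Package damage", "Inventory counts", "Downtime"],
    suggests=["Grasp point", "Route"],
    best_case="Correct grasp point, no collisions, stable pick.",
)
print(card.missing_fields())  # ['worst_case']
```

The worst case is left out on purpose here, so the check has something to report.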

The risk score: keep it simple, but consistent

You do not need complex math to rank risk. You need a shared language.

Use two scales: severity and likelihood. Both from 1 to 5.

Severity asks: “If this happens, how bad is it?”

Likelihood asks: “How often will this happen in real use?”

Multiply the two to get a risk score from 1 to 25. You can also add a third factor called “detectability” (how likely you are to catch a problem before it causes harm), but most teams do fine with two at the start.

Here is how to define severity in plain words:

1 is “annoying, no real harm.”

3 is “meaningful harm, customer pain, maybe a contract issue.”

5 is “serious harm: safety issue, legal issue, or major loss.”

And likelihood:

1 is “rare, edge case.”

3 is “will happen sometimes.”

5 is “will happen often unless we fix it.”

Use 2 and 4 for cases that fall between these anchors. Do not debate the exact numbers for hours. The goal is alignment. If one person says severity is 5 and another says 2, that is not a scoring problem. It is a shared-understanding problem. Talk it through until the team agrees.

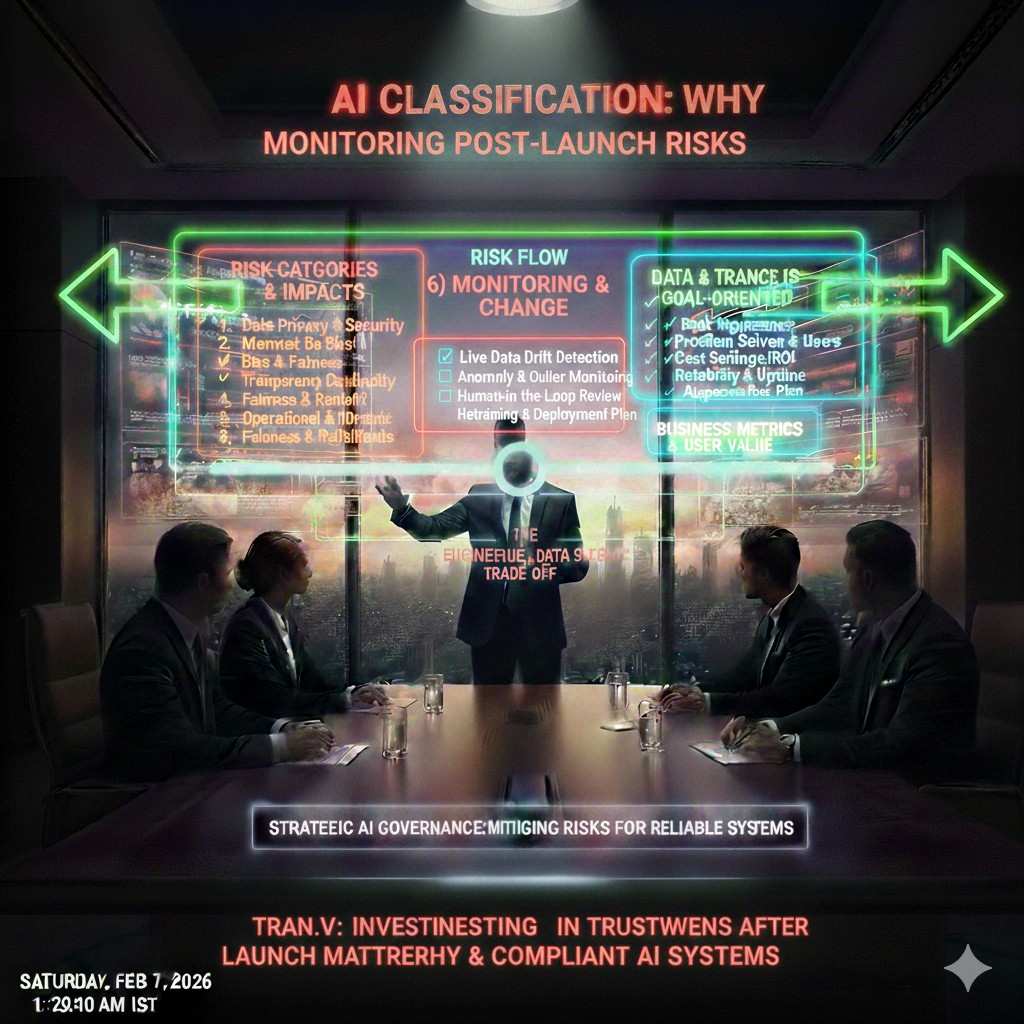

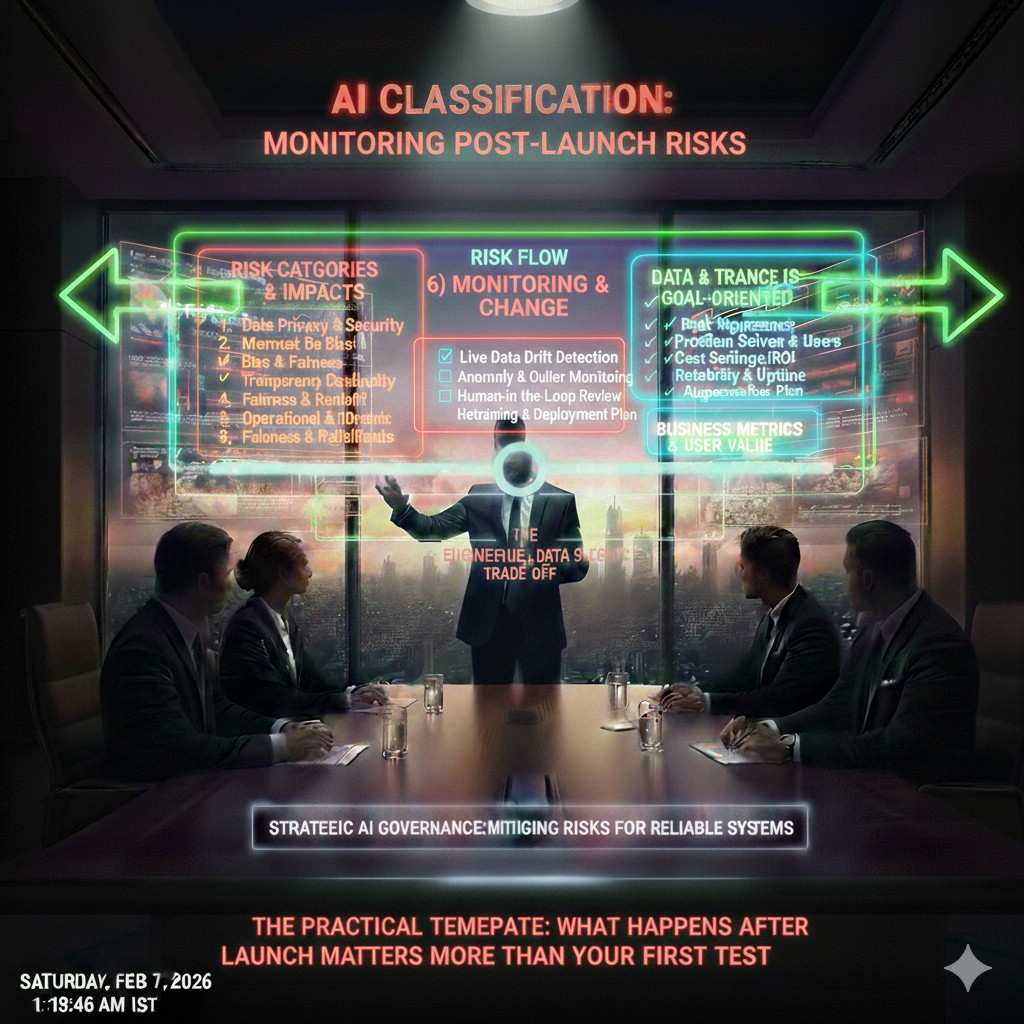
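The scoring rule is simple enough to encode directly, which keeps everyone on the same scale. Here is a minimal sketch built around a hypothetical `Risk` record. It rejects values outside 1 to 5, computes severity times likelihood, and ranks the list. Breaking ties by severity is our own choice, not part of the method above. It makes a serious risk outrank a milder one with the same score.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    statement: str   # short "if-then" description
    severity: int    # 1 = annoying, no real harm ... 5 = serious harm
    likelihood: int  # 1 = rare edge case ... 5 = will happen often unless fixed

    def __post_init__(self) -> None:
        for label, value in (("severity", self.severity), ("likelihood", self.likelihood)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Severity times likelihood, so the score runs from 1 to 25."""
        return self.severity * self.likelihood


def rank(risks: list[Risk]) -> list[Risk]:
    """Highest score first; equal scores are ordered by severity."""
    return sorted(risks, key=lambda r: (r.score, r.severity), reverse=True)


risks = [
    Risk("If the camera gets glare at 3 pm, the model will miss the edge of the box.", 3, 4),
    Risk("If the dataset has too few examples of small parts, the robot will mishandle them.", 4, 3),
    Risk("If a user speaks with heavy background noise, the transcript will be wrong.", 2, 3),
]
for r in rank(risks):
    print(r.score, r.severity, r.statement)
```

The scores in this example are illustrative, not measured. Both of the first two risks score 12, and the small-parts risk comes first because its severity is higher.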

The core section: walk through risks in the order they appear in real life

Most templates fail because they start with “bias” or “privacy” in a vacuum. Those matter, but teams need a flow that matches how systems work.

A practical flow is:

- Data and inputs

- Model behavior

- Output and UI

- Human use

- System actions

- Monitoring and change over time

- Security and abuse

You are trying to catch the places where reality breaks your assumptions.

Let’s make this real with examples and exactly what to write.

1) Data and input risks: “garbage in” is still the main failure mode

Start with the inputs. Ask: what does the model depend on that might be missing, wrong, or shifted?

If you are using images, the lighting can change. If you are using text, users can write in new styles. If you are using sensor data, calibration can drift.

Write risks as short “if-then” statements.

“If the camera gets glare at 3 pm, the model will miss the edge of the box.”

“If a user speaks with heavy background noise, the transcript will be wrong.”

“If the dataset has too few examples of small parts, the robot will mishandle them.”

Then score them.

Then write mitigations in simple, concrete terms. “Collect more data” is not enough. What data? From where? When? How will you know it is enough?

Better mitigations sound like:

“We will run a two-week capture in each warehouse zone at three times of day and label 500 examples of glare cases.”

“We will add a sensor health check that blocks inference if depth frames drop below a threshold.”

“We will add a fallback rule: if the object confidence is below X, ask for a second view.”

Notice these are not academic. They are sprint tasks.
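The sensor health check and the fallback rule map almost directly to code. This sketch makes several assumptions. `MIN_DEPTH_FPS` and `MIN_OBJECT_CONFIDENCE` are placeholder values; the second one is the "X" in the fallback rule. `run_model` is a hypothetical stand-in for your inference call. Tune both thresholds on validation data rather than treating them as guarantees.

```python
from typing import Callable

# Placeholder thresholds; set them from your sensor spec and validation data.
MIN_DEPTH_FPS = 15.0          # below this, depth frames are treated as dropping
MIN_OBJECT_CONFIDENCE = 0.7   # the "X" in the fallback rule


def next_step(depth_fps: float, run_model: Callable[[], float]) -> str:
    """Apply the sensor health check, then the low-confidence fallback.

    run_model is called only when the sensor is healthy and returns the
    model's object confidence in [0, 1].
    """
    if depth_fps < MIN_DEPTH_FPS:
        return "block: depth sensor unhealthy, inference skipped"
    confidence = run_model()
    if confidence < MIN_OBJECT_CONFIDENCE:
        return "ask for a second view"
    return "proceed"


print(next_step(8.0, lambda: 0.95))   # block: depth sensor unhealthy, inference skipped
print(next_step(30.0, lambda: 0.42))  # ask for a second view
print(next_step(30.0, lambda: 0.91))  # proceed
```

Because the health check runs first, a bad sensor never reaches the model at all.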

Also, be honest about data you do not have. If you are missing edge cases, say it. A risk assessment that admits gaps is more trusted than one that pretends everything is solved.

2) Model behavior risks: errors, drift, and confidence lies

Now talk about the model itself.

Teams often assume the model’s confidence score means “truth.” It does not. It means “the model feels sure,” which is not the same thing. A model can be confident and wrong, especially on out-of-distribution inputs: data that looks different from what it was trained on.

Write down the big model behavior risks:

Wrong answers that look right.

Unstable outputs when inputs change slightly.

Drift over time as the world changes.

Bad performance on a subgroup or environment.

Over-reliance on spurious patterns.

For each one, write how it shows up in your product.

Example:

“The model chooses grasp points based on the printed logo, not the box edges. If logos change, performance drops.”

Then write how you will test for it.

This is where many teams get vague. Do not be vague. Testing should be described as a repeatable check.

“We will run a perturbation test: rotate, crop, and change lighting on 200 validation images, and track the change in grasp accuracy.”

“We will run a weekly drift report on input feature statistics and compare them to the training data.”

“We will create a ‘red set’ of known hard cases and require no regression before release.”

If you are early-stage and do not have full tooling, keep it lean. A “red set” can be a folder in your repo with a script that runs inference and prints metrics. The point is that it exists and is used.
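Here is one way the red-set script could look, as a minimal sketch. The case ids, the strings standing in for images, and the `run_red_set` and `regressions` helpers are all hypothetical. The release check fails if any case that passed on the shipped model fails on the candidate.

```python
from typing import Callable, Sequence

# One red-set case: (case_id, model_input, expected_output).
Case = tuple[str, object, object]


def run_red_set(predict: Callable[[object], object], cases: Sequence[Case]) -> dict[str, bool]:
    """Run every known hard case and record pass/fail per case id."""
    return {case_id: predict(x) == expected for case_id, x, expected in cases}


def regressions(baseline: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Case ids that passed on the shipped model but fail on the candidate."""
    return sorted(
        case_id for case_id, passed in baseline.items()
        if passed and not current.get(case_id, False)
    )


# Strings stand in for images; dict lookups stand in for two model versions.
cases = [
    ("glare_01", "box_under_glare", "edge_a"),
    ("small_part_07", "tiny_bolt", "center"),
    ("logo_swap_03", "new_logo_box", "edge_b"),
]
shipped = {"box_under_glare": "edge_a", "tiny_bolt": "center", "new_logo_box": "edge_b"}
candidate = {"box_under_glare": "edge_a", "tiny_bolt": "edge_c", "new_logo_box": "edge_b"}

baseline = run_red_set(shipped.get, cases)
current = run_red_set(candidate.get, cases)
failed = regressions(baseline, current)
print(failed)  # ['small_part_07']
```

In CI, you would exit with a non-zero status whenever `failed` is non-empty, so the release is blocked until someone looks.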

If you are building with LLMs, include prompt injection and hallucination risk here, but describe it as product behavior.

“If the assistant invents steps for a machine repair, a tech could damage hardware.”

Then your mitigation should be: “We will restrict responses to a vetted knowledge base for repair steps, with citations, and we will block free-form instructions for high-risk actions.”

That is concrete.
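A sketch of that mitigation follows. It assumes the knowledge base is a dictionary of vetted procedures keyed by topic. `KBEntry`, `answer_repair_question`, the document id, and the steps are all hypothetical. The exact-match lookup stands in for whatever retrieval you actually use. The part that matters is the refusal path: when no vetted entry matches, the assistant escalates instead of generating steps.

```python
from dataclasses import dataclass


@dataclass
class KBEntry:
    doc_id: str        # citation shown to the user
    steps: list[str]   # vetted, reviewed repair steps


def answer_repair_question(topic: str, kb: dict[str, KBEntry]) -> str:
    """Answer repair questions only from vetted entries, with a citation.

    There is no free-form fallback: unknown topics are refused and escalated.
    """
    entry = kb.get(topic.strip().lower())
    if entry is None:
        return "No vetted procedure found. Contact a supervisor before acting."
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(entry.steps, start=1))
    return f"{numbered}\nSource: {entry.doc_id}"


# Hypothetical knowledge base with a single vetted procedure.
kb = {
    "replace gripper pad": KBEntry(
        doc_id="MAN-GRIP-004",
        steps=["Power down the arm.", "Remove the worn pad.", "Fit the new pad."],
    ),
}
print(answer_repair_question("Replace gripper pad", kb))
print(answer_repair_question("recalibrate joint 3", kb))
```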

3) Output and UI risks: how you present results shapes how users trust them

Next, the output and interface.

A decent model can still become dangerous through its UI.

If you show a single answer with no context, users treat it as fact. If you show uncertainty well, users slow down and check. This matters a lot for B2B.

Write risks like:

“If we show a suggested action as a command, users may follow it without checking.”

“If we hide uncertainty, users will trust wrong results.”

“If we give a long explanation, users may miss the key warning.”

Mitigations here are design choices:

Use “suggest” language, not “do” language.

Use confidence bands or simple labels like “High / Medium / Low.”

Show “why” in one line, not a wall of text.

Show next steps when uncertain: “Need another image,” “Ask a supervisor,” “Run a quick scan.”
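These design choices can live in one formatting function, so every screen presents output the same way. Below is a minimal sketch. The `HIGH` and `MEDIUM` cut-offs and the `present` function are hypothetical. Because confidence is not correctness, the cut-offs only mean something after you calibrate them against real outcomes.

```python
# Hypothetical cut-offs; calibrate them against real outcomes before relying on them.
HIGH = 0.85
MEDIUM = 0.60


def present(suggestion: str, confidence: float, reason: str) -> str:
    """Format one model output: suggest-language, a simple band, a one-line why."""
    if confidence >= HIGH:
        band, next_step = "High", ""
    elif confidence >= MEDIUM:
        band, next_step = "Medium", " Next step: run a quick scan."
    else:
        band, next_step = "Low", " Next step: need another image, or ask a supervisor."
    return f"Suggested: {suggestion} (confidence: {band}). Why: {reason}.{next_step}"


print(present("grasp the left edge", 0.91, "clear box edge in the depth map"))
print(present("grasp the left edge", 0.45, "edge partly hidden by glare"))
```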

For robotics, you often need “permission gates.” Even if the model proposes a motion plan, the system should require checks before moving.

A simple mitigation can be: “No autonomous movement if a person is detected within 2 meters.” That is an AI risk mitigation even if it is implemented as a rule. Your assessment should include it because it lowers harm.
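A rule like this is small enough to show in full. The sketch assumes a perception stack that reports labeled detections with positions in meters relative to the robot base. `Detection` and `motion_allowed` are hypothetical names. This is application-level logic for illustration, not a replacement for the robot's own safety system.

```python
import math
from dataclasses import dataclass

SAFETY_RADIUS_M = 2.0  # no autonomous movement with a person this close


@dataclass
class Detection:
    label: str
    x_m: float  # position relative to the robot base, in meters
    y_m: float


def motion_allowed(detections: list[Detection], checks_passed: bool) -> bool:
    """Permission gate between a proposed motion plan and execution.

    It is a plain rule, so it holds even when the model is wrong.
    """
    if not checks_passed:
        return False
    return not any(
        d.label == "person" and math.hypot(d.x_m, d.y_m) <= SAFETY_RADIUS_M
        for d in detections
    )


scene = [Detection("person", 1.2, 0.9), Detection("box", 0.5, 0.1)]
print(motion_allowed(scene, checks_passed=True))  # False: person at 1.5 m
```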

4) Human use risks: people will use your system in ways you did not plan

Now include user behavior.

In B2B, users are busy. They will click the fastest path. They will find shortcuts. They will use the tool for things you did not design for.

Write risks like:

“Users may treat the tool as the final decision, not advice.”

“Users may paste sensitive data into the chat box.”

“Users may use the system when tired or under pressure, increasing error.”

Mitigations:

Training is fine, but design beats training.

Add friction for high-risk actions.

Add guardrails for sensitive inputs.

Add clear logs and audit trails.

A simple line like “we log every override and review weekly” can make your system much safer. It also helps if you ever face a dispute with a customer.
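Here is a minimal sketch of that override log. It assumes JSON-lines records, with a Python list standing in for the log file, and a weekly count of overrides per user. `log_override` and `weekly_review` are hypothetical helpers. A real audit trail would also need durable, access-controlled storage.

```python
import json
from collections import Counter
from datetime import datetime, timedelta, timezone
from typing import Optional


def log_override(log: list, user: str, suggested: str, taken: str,
                 when: Optional[datetime] = None) -> None:
    """Append one override record as a JSON line (a list stands in for a file)."""
    when = when or datetime.now(timezone.utc)
    log.append(json.dumps({"ts": when.isoformat(), "user": user,
                           "suggested": suggested, "taken": taken}))


def weekly_review(log: list, now: Optional[datetime] = None) -> Counter:
    """Count overrides per user over the last 7 days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=7)
    counts = Counter()
    for line in log:
        record = json.loads(line)
        if datetime.fromisoformat(record["ts"]) >= cutoff:
            counts[record["user"]] += 1
    return counts


now = datetime(2024, 5, 10, tzinfo=timezone.utc)
log: list = []
log_override(log, "tech_a", "grasp left edge", "grasp right edge", now - timedelta(days=1))
log_override(log, "tech_a", "route B", "route A", now - timedelta(days=2))
log_override(log, "lead_b", "skip scan", "run scan", now - timedelta(days=10))
print(weekly_review(log, now))  # Counter({'tech_a': 2})
```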

5) System action risks: when AI triggers real changes

If AI can move machines, place orders, approve claims, change prices, or block access, treat that as a higher class of risk.

Write down every action the system can take. Then mark which ones are reversible and which ones are not.

A wrong email draft is reversible. A wrong robot motion can be irreversible. A wrong medical suggestion can be irreversible. A wrong access revocation might lock out a whole team.

Mitigations often look like:

Two-step approval for high impact.

Rate limits.

Safe defaults.

Kill switches.

Simulation before real execution.

Staged rollout.

Even early teams can do staged rollout. You can start with internal use, then one friendly customer, then broader access.
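Several of these mitigations can sit in one place: a gate that every AI-triggered action must pass through. This is a hypothetical sketch, not a library API. It applies a kill switch and a safe default that denies unknown actions. Irreversible actions need a second approval, and each action has a rate limit over a 60-second window. Approval is represented by a plain name string here; a real system would authenticate the approver. The `clock` parameter exists so the rate limit can be tested with a fake clock.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    reversible: bool
    max_per_minute: int  # rate limit for this action


@dataclass
class ActionGate:
    """Every AI-triggered action goes through request() before it runs."""
    actions: dict[str, Action]
    killed: bool = False                          # kill switch
    clock: Callable[[], float] = time.monotonic   # injectable for tests
    _history: dict[str, list[float]] = field(default_factory=dict, init=False)

    def request(self, name: str, approved_by: Optional[str] = None) -> str:
        if self.killed:
            return "denied: kill switch engaged"
        action = self.actions.get(name)
        if action is None:
            return "denied: unknown action"  # safe default
        if not action.reversible and approved_by is None:
            return "pending: irreversible action needs a second approver"
        now = self.clock()
        recent = [t for t in self._history.get(name, []) if now - t < 60.0]
        if len(recent) >= action.max_per_minute:
            return "denied: rate limit"
        self._history[name] = recent + [now]
        return "allowed"


gate = ActionGate({
    "draft_email": Action("draft_email", reversible=True, max_per_minute=30),
    "move_arm": Action("move_arm", reversible=False, max_per_minute=10),
})
print(gate.request("draft_email"))                         # allowed
print(gate.request("move_arm"))                            # pending: irreversible action ...
print(gate.request("move_arm", approved_by="shift_lead"))  # allowed
```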

6) Monitoring and change: what happens after launch matters more than your first test

Model performance changes in the wild because the world changes. That is normal. The risk is ignoring it.

Write what you will monitor:

Input drift.

Output error rates.

User complaints.

Near misses.

Latency spikes.

Abuse patterns.

Then write who owns the dashboard and what thresholds trigger action.

Example:

“If grasp failure rate rises above 2% in a week, we pause new deployments and run a root cause review.”

That single sentence shows maturity.
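The threshold rule can be written as a weekly job that turns numbers into actions. This sketch rests on stated assumptions. The 2% limit comes from the example above. The drift check is a deliberately crude, hypothetical heuristic: it flags the week if a feature's mean moves more than three training standard deviations from the training mean. A population stability index or a two-sample test are common, stronger alternatives.

```python
from statistics import mean

FAILURE_RATE_LIMIT = 0.02  # from the example: grasp failures above 2% in a week
DRIFT_LIMIT_SD = 3.0       # hypothetical: weekly mean more than 3 training SDs away


def weekly_actions(attempts: int, failures: int, feature_values: list[float],
                   train_mean: float, train_std: float) -> list[str]:
    """Turn one week of monitoring numbers into the actions the owner must take."""
    actions = []
    if attempts > 0 and failures / attempts > FAILURE_RATE_LIMIT:
        actions.append("pause new deployments and run a root cause review")
    if feature_values and train_std > 0:
        shift = abs(mean(feature_values) - train_mean) / train_std
        if shift > DRIFT_LIMIT_SD:
            actions.append("input drift alert: compare this week's inputs to training")
    return actions


print(weekly_actions(10_000, 150, [0.52, 0.48, 0.50], train_mean=0.5, train_std=0.1))  # []
print(weekly_actions(10_000, 250, [0.95, 0.90, 1.00], train_mean=0.5, train_std=0.1))
```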

7) Security and abuse: assume smart users will try to break it

If you have an API, someone will test it. If you have a chat tool, someone will try prompt injection. If you have a robot, someone will try to trick sensors.

You do not need to be paranoid. You need to be ready.

Write risks:

“Prompt injection can bypass system rules.”

“Adversarial stickers can confuse vision.”

“Sensitive data can leak out through logs.”

Mitigations:

Separate secrets from the model context.

Use allow-lists for tools and actions.

Red-team the system with a small set of attack prompts.

Limit what gets logged.

Encrypt data at rest and in transit.

Rotate keys.

Use least-privilege access.

Even if you are early, you can do two big things quickly: limit what the model can do, and keep secrets out of prompts.
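Both of those habits fit in a few lines. The sketch below is hypothetical: `ALLOWED_TOOLS` is the only set of tools the model can reach, and `redact` masks credential-looking strings before text is logged. The regex is an illustrative backstop, not a complete secret scanner. The real protection is never putting secrets into the model context in the first place.

```python
import re
from typing import Callable

# The only tools the model can reach, whatever it asks for (hypothetical read-only tool).
ALLOWED_TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_sku": lambda sku: f"stock level for {sku}",
}

# Illustrative pattern for credential-looking text; not a complete secret scanner.
SECRET_PATTERN = re.compile(r"(api[_-]?key|token|password)\s*[:=]\s*\S+", re.IGNORECASE)


def call_tool(name: str, arg: str) -> str:
    """Dispatch a model-requested tool call through the allow-list."""
    tool = ALLOWED_TOOLS.get(name)
    if tool is None:
        return f"refused: tool '{name}' is not allow-listed"
    return tool(arg)


def redact(text: str) -> str:
    """Mask credential-looking values before text is logged."""
    return SECRET_PATTERN.sub(lambda m: f"{m.group(1)}=[REDACTED]", text)


print(call_tool("lookup_sku", "A-100"))     # stock level for A-100
print(call_tool("delete_records", "*"))     # refused: tool 'delete_records' is not allow-listed
print(redact("retry with api_key=sk-123"))  # retry with api_key=[REDACTED]
```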

Why Tran.vc cares about this

Investors and enterprise buyers are asking harder questions now. They want to know you can ship safely. A clean risk assessment gives you a strong answer without drama.

It also creates a paper trail of what you built, why you built it, and how you reduced risk. That is useful for product, sales, and IP. Many founders do not realize that strong IP is often hidden inside good engineering discipline.

Tran.vc helps technical teams turn their real work into patent-ready assets, and we do it early, before you raise a seed round under pressure. If you want help shaping your IP strategy around your core AI system, apply here: https://www.tran.vc/apply-now-form/

The Practical Template You Can Copy-Paste

How to use this template in a real sprint

Treat this as a living doc, not a one-time file. Start it when you begin building, and update it at each release. The best time to write it is right after you make a product choice, while the reasons are still fresh.

Keep the doc short and direct. One person owns it, but the whole team contributes. When you review it, focus on what changed since last time, not on rewriting old parts.

Who should own it and who should review it

Pick one owner who can pull answers from product, engineering, and operations. In many early teams that is the CTO, the tech lead, or the product lead. The owner is responsible for keeping it current and making sure risks have clear owners.

Set up a short review with at least one person who thinks differently from the builders. A sales lead, a customer success lead, or an ops lead is often the best “reality check.” They will spot ways customers can misuse the product that engineers do not see.

What “done” looks like for this doc

A finished assessment is not perfect. It is complete enough to guide decisions. It names the real risks, ranks them in a consistent way, and shows what the team is doing now, next, and later.

Most important, it is tied to work. Every high-risk item has a mitigation plan and a person who owns it. If it does not change what you build, it is only words.
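That rule is easy to check mechanically before each review. This minimal sketch assumes a hypothetical `RiskItem` record. It also assumes a cut-off of 12 on the 1 to 25 scale for "high risk"; pick your own. The check lists every high-risk item that still lacks a mitigation, an owner, or a due date. The owners and dates below are placeholders.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

HIGH_RISK_SCORE = 12  # hypothetical cut-off on the 1-25 severity x likelihood scale


@dataclass
class RiskItem:
    statement: str
    severity: int
    likelihood: int
    mitigation: str = ""
    owner: str = ""
    due: Optional[date] = None


def not_done(register: list[RiskItem]) -> list[str]:
    """High-risk items still missing a mitigation, an owner, or a due date."""
    problems = []
    for item in register:
        if item.severity * item.likelihood < HIGH_RISK_SCORE:
            continue
        missing = [name for name in ("mitigation", "owner", "due") if not getattr(item, name)]
        if missing:
            problems.append(f"{item.statement} -> missing {', '.join(missing)}")
    return problems


register = [
    RiskItem("If the camera gets glare at 3 pm, the model will miss the edge of the box.", 3, 4,
             mitigation="Two-week capture per zone at three times of day; label 500 glare cases.",
             owner="Perception lead", due=date(2024, 7, 1)),
    RiskItem("If the dataset has too few examples of small parts, the robot will mishandle them.",
             4, 3, owner="ML lead"),
    RiskItem("If a user speaks with heavy background noise, the transcript will be wrong.", 2, 3),
]
print(not_done(register))
```

Only the small-parts item is reported. The glare item is complete, and the noise item scores 6, below the cut-off.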

Section 1: System Card

System identity and scope

Write the system name, version, and the exact part of the product it covers. If you have multiple models, do one card per model or per major capability. This avoids a messy document that tries to cover everything at once.

Define the system boundaries in plain terms. Say what is inside your control and what is outside. If a vendor model or a third-party tool is involved, state it clearly, because those can change and create surprise risk later.

Users and real-world context

Describe who uses the system and where they use it. Include the working conditions that shape behavior, like time pressure, noisy spaces, gloves, poor lighting, or unstable connectivity. These details are not “nice to have.” They often explain most failures.

Also state the stakes in that context. If the system can affect safety, money, access, or uptime, say so directly. This helps the team treat the right risks as top priority, instead of arguing about small issues.

Inputs, outputs, and actions

List what the system takes in and what it produces, but explain it with short, clear paragraphs. Mention key sensors, data sources, or user inputs. Then explain what the output looks like, where it is shown, and what happens next.

Finally, write one paragraph that explains what the system can trigger. Even if the model only suggests an action, the suggestion can still lead to harm if people treat it as a command. Being precise here prevents false comfort.