Most AI founders do not lose time because their tech is weak. They lose time because their patent gets blocked by two words: “abstract idea.”

It feels unfair. You built something real. It runs. It saves money. It makes decisions faster. Yet the patent office may say: “This is just math” or “This is just a rule” or “This is just automation of a human task.”

Here is the good news: you can avoid many of these rejections if you design your patent story the right way from day one. Not by “lawyering harder,” but by explaining your AI in a way that shows a clear technical result, tied to real systems, with real limits, and real proof.

That is what this guide will do. I will keep it simple, direct, and practical. No fluff.

At Tran.vc, we work with robotics, AI, and deep tech teams that want strong patents without giving up control early. Tran.vc invests up to $50,000 in-kind in patent and IP services, so founders can build real assets before a seed round. If you want help shaping your AI invention into a patent that stands up to “abstract idea” attacks, you can apply anytime here: https://www.tran.vc/apply-now-form/

Now, let’s start where most founders get trapped.

When an examiner says “abstract idea,” they usually mean one of three things:

They think your AI is only a “model” in the air, not a working system.

They think your claims are so broad that they cover any use of the idea.

They think you are just using a computer to do a task people already do.

So the goal is not to “prove AI is real.” The goal is to show your invention is a technical solution to a technical problem, and that it improves the system in a specific way.

Here is the mindset shift that changes everything:

A strong AI patent is not “we use machine learning to predict X.”

A strong AI patent is “we changed how the system works so it can do X with less compute, less delay, less noise, fewer errors, or more safety, all under clear limits.”

That is the difference between “an idea” and “an invention.”

To make this concrete, imagine two versions of the same story.

Version A: “A method uses a neural network to detect defects in images.”

Version B: “A vision pipeline reduces false defect alarms by changing how frames are sampled, how features are normalized, and how the model output is gated based on sensor drift, so the factory line does not stop when lighting changes.”

These are not just different writing styles. They are different inventions on paper. Examiners reject Version A all day. Version B gives them something they can say “yes” to, because it sounds like an engineering fix, not a wish.

This is why many AI patents fail: founders describe what the model does, but not what the system does differently because of the model. Or they describe business value, not technical value. Or they hide the key details because they worry about “giving away the secret.” The result is a vague patent that is easy to reject and hard to enforce.

Your patent should do the opposite. It should be clear, specific, and grounded in the real world.

In this article, we will cover how to do that in a way that cuts down abstract idea risk. We will talk about:

How examiners think about AI claims

What “technical improvement” really means in plain words

How to write your invention so it is tied to devices, data limits, and real constraints

How to avoid common traps like “just apply ML” or “just classify stuff”

How to build a patent plan that protects your moat, not just your model name

And we will do it in a way that you can apply in your next invention disclosure, your next draft, or your next call with a patent attorney.

Patentability for AI: How to Avoid “Abstract Idea” Rejections

Why “abstract idea” shows up so often in AI patents

AI patents run into trouble because the first version of the invention usually sounds like a concept, not a machine. Founders describe what the model “decides” or “predicts,” but they do not explain what in the system changed to make that decision possible. Examiners see a lot of filings that say “use machine learning to do X,” and many of them read like a plan, not an engineering build.

When the patent office says “abstract idea,” they are not saying your product is fake. They are saying the claim reads like it covers an idea that could live in someone’s head, or on any computer, without a clear technical structure. The examiner’s job is to stop patents that are so broad they lock up basic ways of thinking.

That is why you must treat “abstract idea” as a writing and framing problem, not as a “my AI is not real” problem. Your goal is to make the invention feel like a specific technical fix, with clear moving parts, and clear limits.

The simple test examiners apply, even if they do not say it

In many AI office actions, the examiner is silently asking: “If I remove the computer words, what is left?” If what is left is “analyzing data,” “sorting,” “ranking,” “predicting,” “recommending,” or “classifying,” they often label it abstract.

They also ask: “Is this just doing a known human task faster?” If your story is “we automate what a human analyst does,” they may treat it as a business process put on a computer. That is another common path to rejection, even when the AI is very strong.

So you need to anchor the invention to something that is not just a mental step. You do that by tying it to real technical constraints, real data handling steps, and a measurable system result.

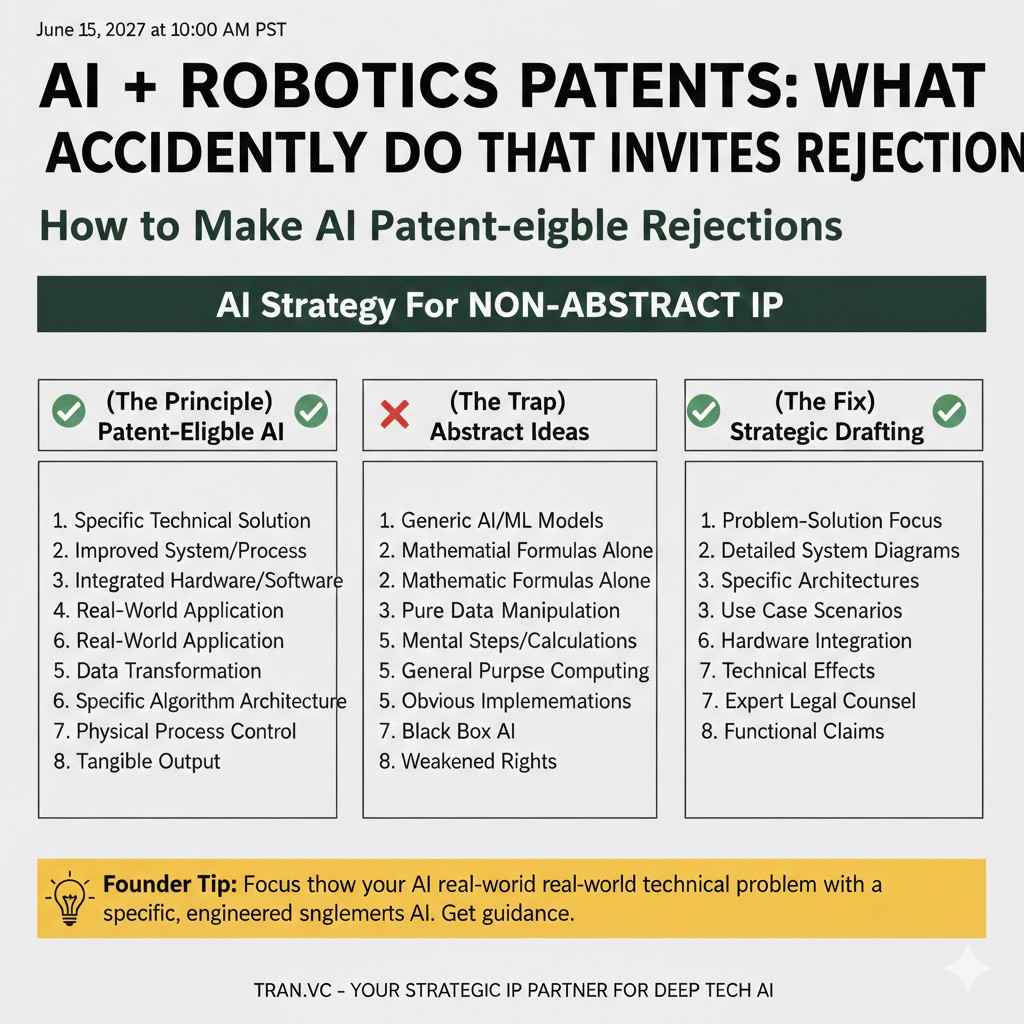

What founders accidentally do that invites rejection

A big mistake is writing the invention like a pitch deck. A pitch deck is allowed to be broad. It is allowed to focus on outcomes, markets, and use cases. A patent is different. A patent must show how you got the result, what is special about your approach, and why it is not simply “using a computer.”

Another mistake is hiding the details because founders worry about giving away the secret. Patents do not reward mystery. If the patent does not teach the invention clearly, you get a weak filing that is easy to reject and easy to design around.

A third mistake is claiming the model itself as the invention, without the system around it. A raw model, described at a high level, can sound like math. But a model used in a carefully designed pipeline, with specific pre-processing, specific training controls, and specific runtime checks, looks like engineering.

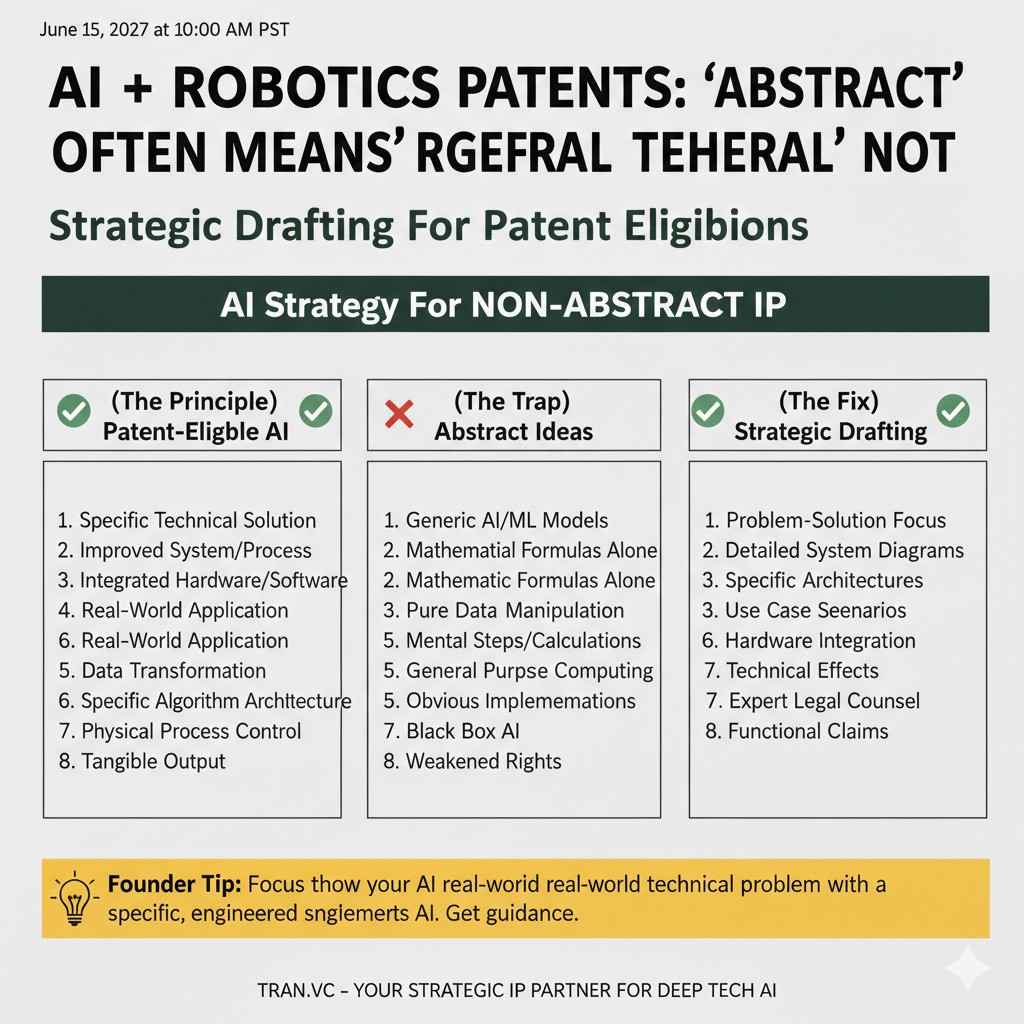

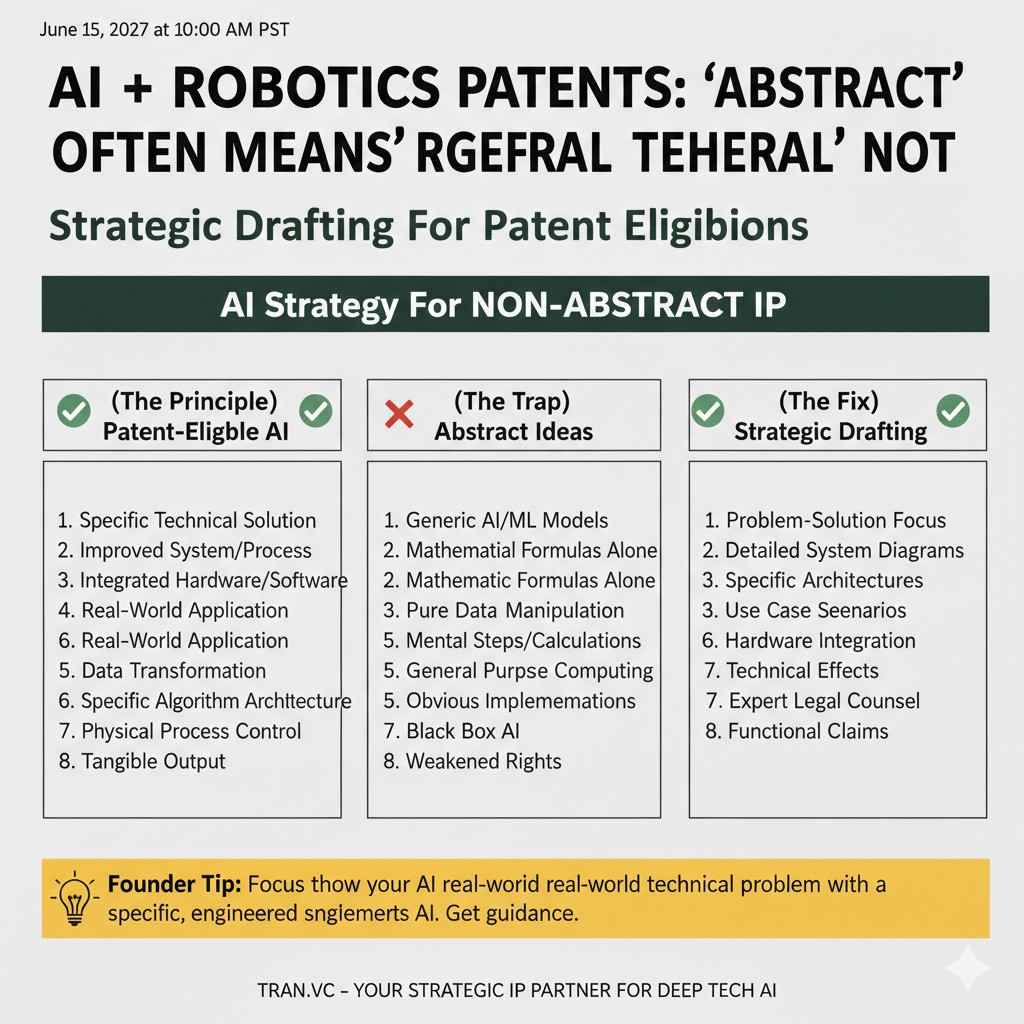

What “abstract idea” really means in plain words

The examiner is not judging your business; they are judging your claim shape

This is important. Examiners do not care if customers love your product. They care what your claims cover. If the claims read like they cover the “idea of using AI to do a task,” they will push back.

Think of the claim as a fence. If the fence is drawn around a huge field, with no clear borders, the examiner will not allow it. Your job is to draw the fence around the real thing you built, in a way that still blocks copycats.

That balance is hard, but it is possible when you focus on the parts of your AI that are unique in the real world, not only in the math world.

“Abstract” often means “too general” rather than “not technical”

Many founders hear “abstract” and think the office is saying “software is not patentable.” That is not the right takeaway. The issue is often that the patent is not describing a specific technical improvement. It is describing a general goal.

For example, “detecting fraud using a trained model” can feel like a goal. But “detecting fraud by generating a device fingerprint from timing noise, normalizing it using a drift model, and gating the model score based on session entropy” begins to look like a technical solution, not a wish.

The more your writing sounds like a real build with real constraints, the less it looks like an abstract thought.
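
To show what "gating the model score based on session entropy" could look like as a build, here is a minimal Python sketch. It is an illustration only: the function names, the event labels, and the 1-bit floor are assumptions made for this example, and the gating rule (withhold the score when the session is too repetitive to be informative) is one possible design, not a standard method.

```python
import math
from collections import Counter
from typing import List, Optional


def shannon_entropy(events: List[str]) -> float:
    """Shannon entropy (in bits) of the event types seen in one session."""
    total = len(events)
    if total == 0:
        return 0.0
    counts = Counter(events)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def gated_score(model_score: float, events: List[str],
                min_entropy_bits: float = 1.0) -> Optional[float]:
    """Pass the model score through only when the session has enough entropy.

    Assumption for this sketch: a very repetitive session gives the model too
    little signal, so the score is withheld (None) and the caller routes the
    session to an added check instead.
    """
    if shannon_entropy(events) < min_entropy_bits:
        return None
    return model_score


varied = ["login", "view", "pay", "logout"]   # 4 distinct events -> 2 bits
repetitive = ["view", "view", "view", "view"]  # 1 distinct event -> 0 bits

print(gated_score(0.8, varied))      # score is used
print(gated_score(0.8, repetitive))  # score is withheld
```

The point for a patent story is that each step here is a concrete operation on session data with a stated limit, which is easier to describe in a claim than "detect fraud with a model."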

“Just apply AI” is not an invention on paper

A common reason for rejection is that the patent reads like a shortcut. It says, in effect, “we take data and run it through ML, and we output a result.” That is a template, not a new machine.

To avoid that, you must answer a simple question in your patent story: what did you do that someone skilled in the field would not naturally do as the first attempt? That “extra step” is often where your patent value lives.

It could be a new data labeling method, a new training constraint, a new model structure for edge devices, a new way to handle sensor drift, or a new way to combine signals. But it must be described as a build, not as a hope.

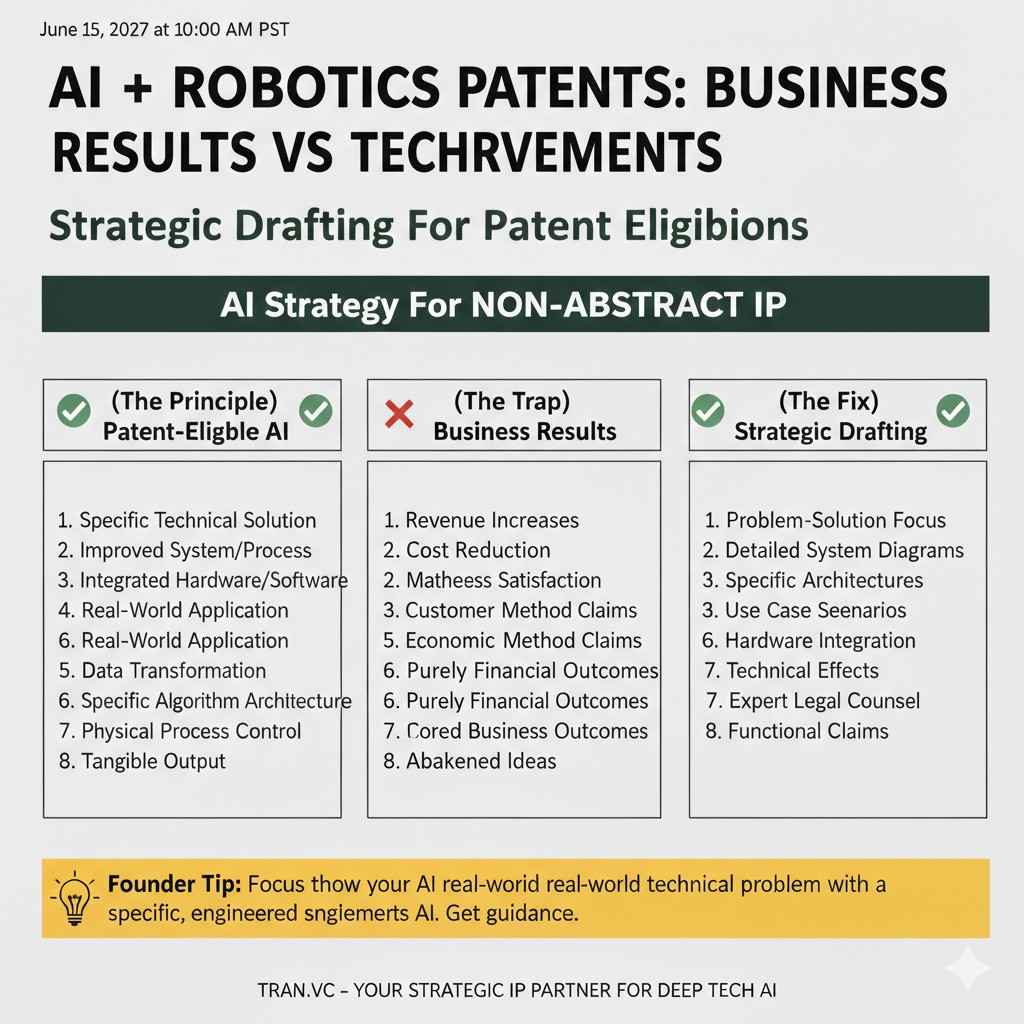

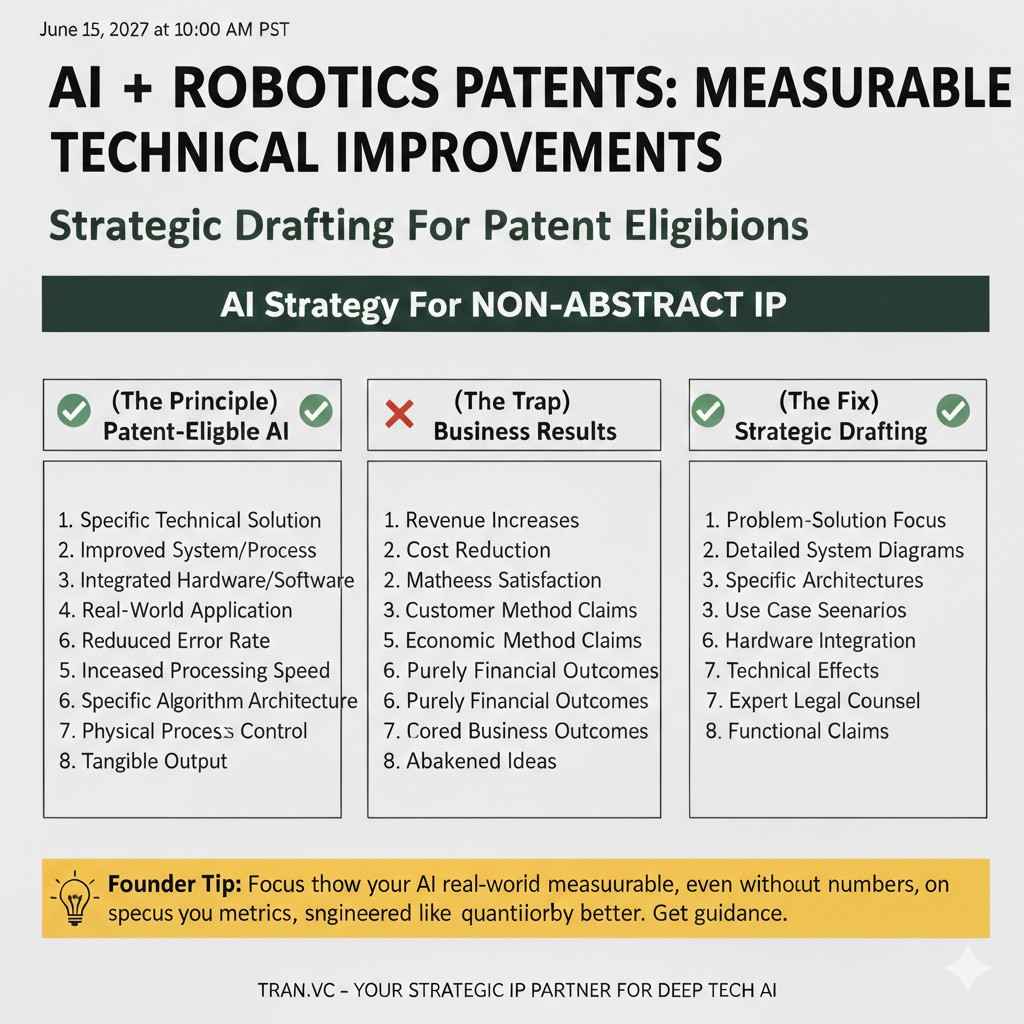

The real distinction: business results vs technical improvements

Business value is not the same as technical value

AI startups often lead with business outcomes like “reduce churn,” “increase revenue,” “lower risk,” or “improve approvals.” Those are great for sales. But in a patent, that framing can make the invention sound like a business method.

A technical improvement is different. It is about the machine or system working better. It might mean lower compute, fewer memory reads, fewer network calls, less battery use, faster inference, lower error under noise, better safety checks, or fewer failures when sensors change.

If your invention is used in a business setting, you can still patent it. You just need to frame it as a technical change in how the system processes data or controls devices.

The best AI patents show a “before and after” system story

A strong way to reduce abstract idea risk is to make it easy to see the difference between the old system and the new system. You are not just “using AI.” You are changing how the system operates.

For example, instead of saying “predict maintenance needs,” you can say “detect early mechanical failure by extracting vibration features in a constrained window, compressing them for transmission, and running an on-device model that triggers a specific control action only when confidence remains stable across multiple sampling periods.”

That reads like engineering. It is not just a result. It is a system change that has a clear reason.
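
The "only when confidence remains stable across multiple sampling periods" part of that example can be sketched in a few lines of Python. This is a simplified illustration, assuming a hypothetical class name, a 0.9 threshold, and a window of three periods; a real system would pick these from its own sensor and safety requirements.

```python
from collections import deque


class StableConfidenceTrigger:
    """Fire only when confidence stays at or above a threshold for
    a fixed number of consecutive sampling periods."""

    def __init__(self, threshold: float = 0.9, periods: int = 3):
        self.threshold = threshold
        self.periods = periods
        # Keeps only the most recent `periods` confidence values.
        self._recent = deque(maxlen=periods)

    def update(self, confidence: float) -> bool:
        """Record one sampling period; return True if the action should fire."""
        self._recent.append(confidence)
        window_full = len(self._recent) == self.periods
        return window_full and all(c >= self.threshold for c in self._recent)


trigger = StableConfidenceTrigger(threshold=0.9, periods=3)
# One dip to 0.50 resets the streak, so only the last period fires.
decisions = [trigger.update(c) for c in [0.95, 0.50, 0.92, 0.93, 0.97]]
print(decisions)  # [False, False, False, False, True]
```

A single high reading does nothing here. That is the system change worth describing: the control action depends on stability over time, not on one model output.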

Technical improvements should be measurable, even if you do not show numbers

You do not always need exact performance numbers in a patent. But you do need to describe what improves and why. If you can say “reduces false alarms caused by lighting shifts,” that is already better than “improves accuracy.”

If you can say “reduces memory use by using a feature cache and re-using embeddings,” that is clearer than “optimizes resources.” If you can say “keeps latency under a fixed threshold by using early exit layers,” that is better than “faster.”

Clear improvements make it harder for the examiner to call it abstract because now there is a technical reason for the design.
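
As one illustration of "keeps latency under a fixed threshold by using early exit layers," here is a small Python sketch. It is a toy: the stages are stand-in functions rather than real model layers, and the per-stage costs are assumed estimates rather than measured times. All names are hypothetical.

```python
from typing import Callable, List, Tuple

# Each stage is (estimated cost in ms, function returning (label, confidence)).
# Costs and stage functions are placeholders for this sketch.
Stage = Tuple[float, Callable[[object], Tuple[str, float]]]


def early_exit_inference(x, stages: List[Stage], budget_ms: float,
                         exit_confidence: float = 0.9):
    """Run stages in order; stop when confident enough, or when the next
    stage would push the estimated cost past the latency budget."""
    spent_ms = 0.0
    result = ("unknown", 0.0)
    for cost_ms, stage in stages:
        if spent_ms + cost_ms > budget_ms:
            break  # next stage would exceed the latency threshold
        result = stage(x)
        spent_ms += cost_ms
        if result[1] >= exit_confidence:
            break  # early exit: already confident enough
    return result, spent_ms


# Toy stages standing in for shallow, middle, and deep exits of a model.
stages = [
    (1.0, lambda x: ("ok", 0.60)),
    (4.0, lambda x: ("defect", 0.95)),
    (20.0, lambda x: ("defect", 0.99)),
]

print(early_exit_inference(None, stages, budget_ms=10.0))  # exits at stage 2
print(early_exit_inference(None, stages, budget_ms=3.0))   # budget stops it after stage 1
```

Notice that the improvement ("latency stays under the budget") follows directly from the mechanism. That link between design and result is what you want your patent text to spell out.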

How to write AI claims so they feel like a real system

Start with the system, not the model

One of the simplest tactics is to describe the system first. What are the parts? Where does data come from? Where does it go? What constraints exist? What is the environment?

When the examiner can visualize a real pipeline, the invention feels grounded. The model becomes one part of a larger technical mechanism, not a floating math box.

If you are in robotics, this is even easier because you have sensors, actuators, timing loops, safety controls, and physical outcomes. Use those details. If you are in enterprise AI, you still have real technical objects like logs, network events, database streams, device signals, and compute limits. Use those too.

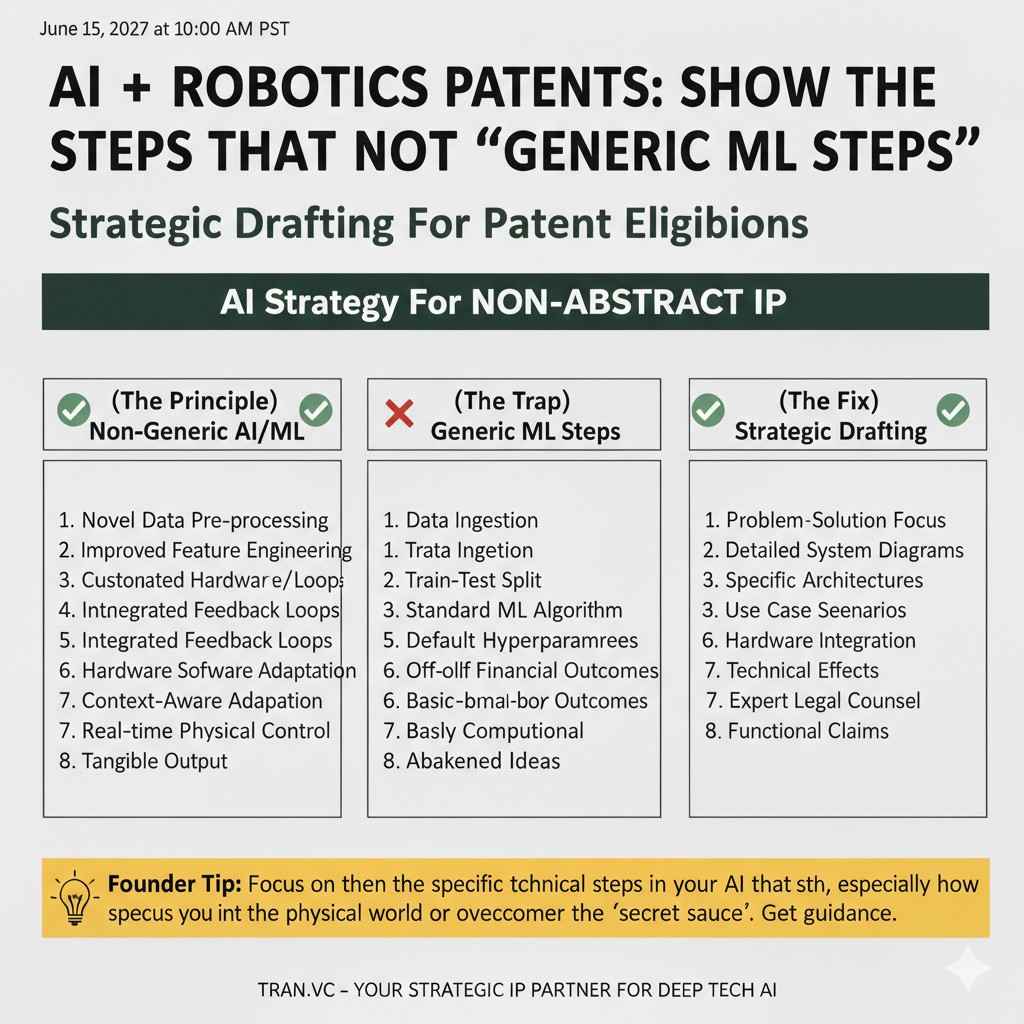

Show the steps that are not “generic ML steps”

A common weak spot is that the patent spends many words on “training a model” but very few words on what is special about training or inference. Many examiners will treat “training a model” as a generic step unless you add your key twist.

So you want to explain the parts that make your approach different. Maybe you create labels in a new way. Maybe you build a training dataset using synthetic noise that matches a device. Maybe you adapt the model weights in a constrained way at runtime. Maybe you use a new confidence gating scheme.

These details matter because they show the invention is not simply a human idea, but a specific technical method.
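
For instance, "a training dataset using synthetic noise that matches a device" could be sketched like this in Python. The noise model here (a fixed calibration offset plus Gaussian jitter) is an assumption chosen to keep the example short; a real device profile would come from measurements, and the function name is hypothetical.

```python
import random
from typing import List, Optional


def add_device_noise(samples: List[float], noise_std: float,
                     offset: float = 0.0,
                     seed: Optional[int] = None) -> List[float]:
    """Return a noisy copy of clean samples.

    Assumed device model for this sketch: a constant calibration offset
    plus zero-mean Gaussian noise with standard deviation `noise_std`.
    """
    rng = random.Random(seed)  # seeded so the augmented set is reproducible
    return [s + offset + rng.gauss(0.0, noise_std) for s in samples]


clean = [0.0, 1.0, 0.5, -0.5]
noisy = add_device_noise(clean, noise_std=0.05, offset=0.1, seed=42)
print(noisy)
```

In a disclosure, the interesting part is not the call to a random number generator. It is where the offset and noise level come from, and why training on that noise makes the deployed system fail less often.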

Tie outputs to actions that change system behavior

Another strong move is to connect the model output to a system action. Not a business decision like “approve a loan,” but a technical action like “trigger a sensor resample,” “adjust a control loop,” “route processing to a different node,” “apply a filter,” or “change a compression setting.”

When the output leads to a concrete system behavior, the invention looks less like “information” and more like “control.” That often helps reduce abstract idea attacks.

This does not mean you must be in robotics. Even in SaaS, your system takes actions: throttling requests, routing traffic, flagging sessions for added checks, changing resource allocation, or blocking suspicious calls. Those are technical actions.
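
Here is a minimal Python sketch of that idea in a SaaS setting: a model score is mapped to a technical action, not a business decision. The action names, thresholds, and the `queue_depth` input are all assumptions for illustration.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"
    STEP_UP_CHECK = "step_up_check"
    BLOCK = "block"


def select_action(risk_score: float, queue_depth: int) -> Action:
    """Map a model risk score and current system load to a system action.

    Thresholds are illustrative placeholders, not recommended values.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= 0.9:
        return Action.BLOCK            # drop the suspicious call
    if risk_score >= 0.6:
        return Action.STEP_UP_CHECK    # flag the session for added checks
    if queue_depth > 100 and risk_score >= 0.3:
        return Action.THROTTLE         # slow medium-risk traffic under load
    return Action.ALLOW


print(select_action(0.95, queue_depth=0))    # Action.BLOCK
print(select_action(0.40, queue_depth=500))  # Action.THROTTLE
print(select_action(0.40, queue_depth=10))   # Action.ALLOW
```

The same score leads to different system behavior depending on load. That kind of coupling between model output and system state is a detail worth writing down.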

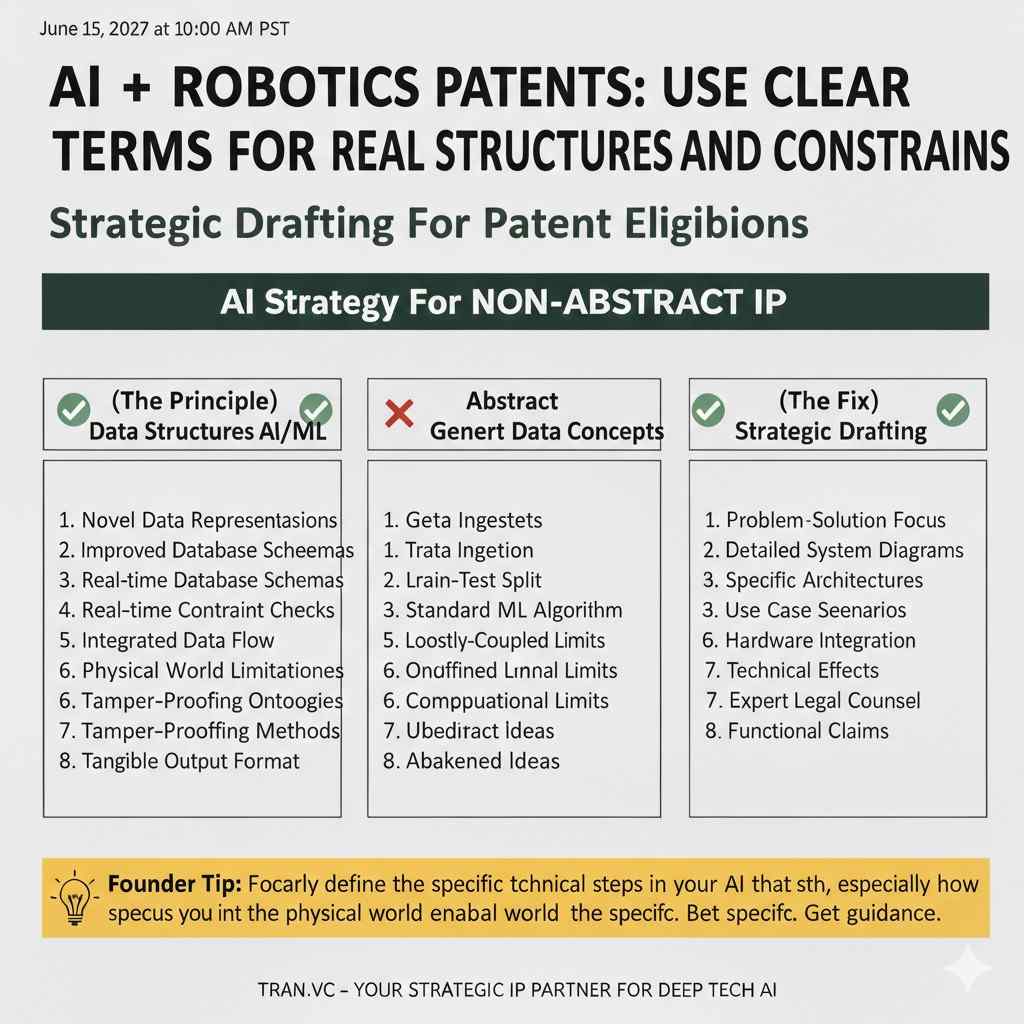

Use clear terms for real data structures and constraints

Examiners are used to seeing vague phrases like “input data” and “output result.” You can do better without becoming overly complex.

You can name the data structures in plain words. You can say “a sequence of sensor frames,” “a set of network flow records,” “a time window of vibration samples,” or “a graph of user-device links.” You can also state constraints like “limited bandwidth,” “edge compute,” “noisy sensors,” or “drifting calibration.”

These details make the invention feel tied to a real machine environment, which helps distinguish it from a pure mental step.
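
One way to practice this is to write the data structure and the constraint down as types before you write any claim language. The Python sketch below does that for "a time window of vibration samples" under "limited bandwidth." The class names, field names, and the 4-bytes-per-sample figure are assumptions for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class VibrationWindow:
    """A time window of vibration samples from one sensor."""
    sensor_id: str
    sample_rate_hz: int
    samples: Tuple[float, ...]

    @property
    def duration_s(self) -> float:
        return len(self.samples) / self.sample_rate_hz


@dataclass(frozen=True)
class LinkConstraint:
    """A limited-bandwidth link: max payload per transmitted window."""
    max_bytes_per_window: int


def fits_link(window: VibrationWindow, link: LinkConstraint,
              bytes_per_sample: int = 4) -> bool:
    """Check whether the raw window can be sent without compression."""
    return len(window.samples) * bytes_per_sample <= link.max_bytes_per_window


window = VibrationWindow("motor-1", sample_rate_hz=500,
                         samples=tuple(0.0 for _ in range(1000)))
print(window.duration_s)                        # 2.0
print(fits_link(window, LinkConstraint(4096)))  # True  (4000 bytes fit)
print(fits_link(window, LinkConstraint(2048)))  # False (needs compression)
```

If you can fill in a structure like this for your own system, you already have the plain-word terms your patent draft needs.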

A practical way to “stress test” your AI patent story

Try to remove the AI words and see what remains

This is a simple but powerful check. Take your own invention description and remove words like “neural network,” “machine learning,” “classifier,” “predict,” and “train.” Then read it again.

If what remains is still a clear technical process with inputs, constraints, and system changes, you are on the right track. If what remains is “analyze information to decide something,” you are at high risk for abstract idea rejections.

This test forces you to describe the mechanism, not just the tool.
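
You can even run this check mechanically. The short Python sketch below strips the AI words listed above (and their simple variants such as "trained" or "predicts") from a sentence so you can read what is left. It is a rough helper, assuming a hypothetical function name and a fixed word list, not a legal test.

```python
import re
from typing import List

AI_TERMS = ["neural network", "machine learning", "classifier", "predict", "train"]


def strip_ai_terms(text: str, terms: List[str] = AI_TERMS) -> str:
    """Remove AI terms (plus word endings like -ed, -s, -ing) from text."""
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")\w*",
        re.IGNORECASE,
    )
    stripped = pattern.sub("", text)
    # Collapse the gaps left behind by the removed words.
    return re.sub(r"\s{2,}", " ", stripped).strip()


vague = "A trained neural network predicts defects from sensor frames."
grounded = "Sample vibration in a fixed window under a bandwidth constraint."

print(strip_ai_terms(vague))     # "A defects from sensor frames."
print(strip_ai_terms(grounded))  # unchanged
```

The first sentence collapses into almost nothing, which is the warning sign. The second survives intact because it describes a mechanism and a constraint, not the tool.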

Ask whether the invention improves the computer, or uses the computer

Many rejections happen because the invention looks like “using a computer as a calculator.” To avoid that, show how your approach improves the computing system itself or a technical field.

Improving the computer can mean reducing compute, memory, network load, or latency. Improving a technical field can mean improving sensor reliability, safety, signal quality, device control, or fault detection.

The more you show a technical gain, the less the examiner can say it is only an idea.

Make sure your novelty is not only in the data label

Some AI inventions rely on clever labeling or clever dataset selection. That can be valuable, but it is risky if it reads like “we decide what counts as fraud,” or “we define a good lead,” because that can sound like a human rule.

If your key advantage is in labeling, describe the labeling method as a technical process. For example, labels based on physical measurements, device logs, or automatic ground-truth generation can feel more technical than labels based on human judgment.

This is not a strict rule, but it is a useful way to reduce risk.

If you want Tran.vc to help shape your AI invention into claims that survive abstract idea attacks, you can apply here anytime: https://www.tran.vc/apply-now-form/